At a multi-day loss for any idea how to proceed, I wrote several draft log entries touching on the concepts I discovered in the last post...

Some of those are much more detailed, including some schematics and explanations of the bit-level link protocol... but, that stuff's on the web and surely better-explained (and understood)...

But one result of the final attempt, which got posted in that last log, was a moment of curiosity about how the link cable is actually implemented, electronically.

So, immediately after finishing that log entry, I ripped open the link cable...

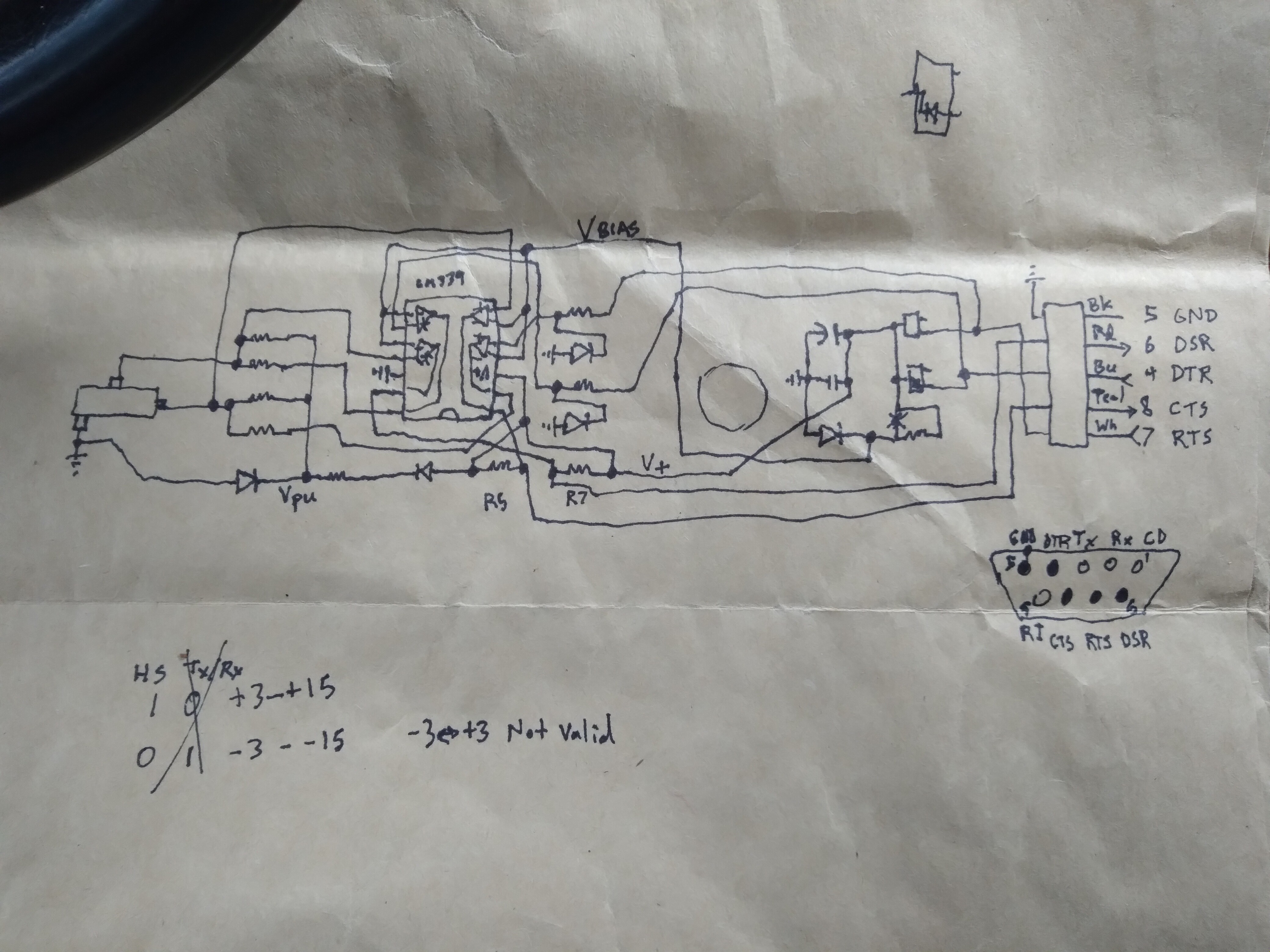

Above: This is laid-out roughly as on the PCB itself.

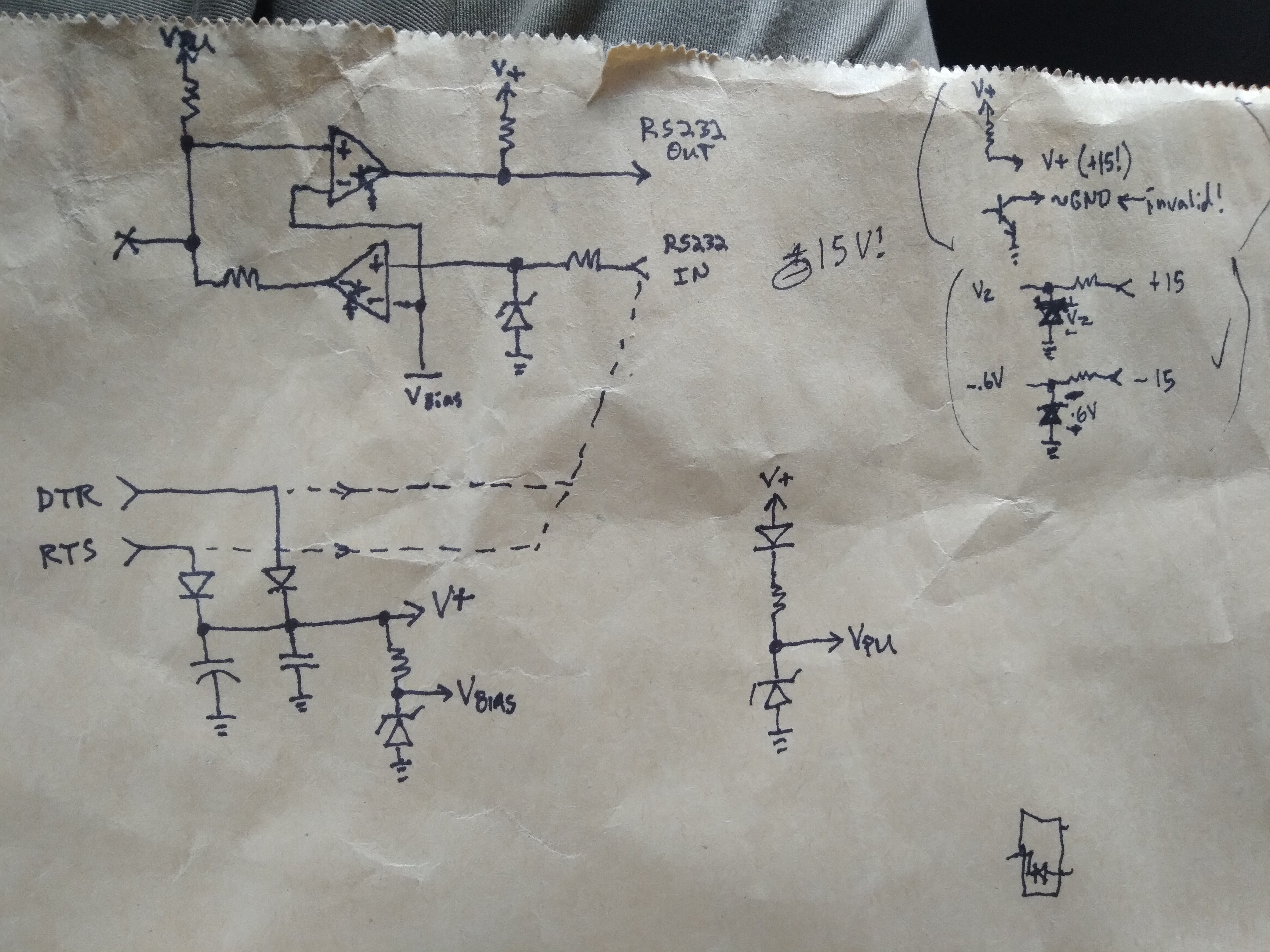

Below: These are the sub-circuits, as I understand/guess. More detail follows.

The upper left circuit implements one of two identical circuits in the link to the calculator. It is actually quite similar electrically, at the link, to the circuits shown on the web of the calculator's internals...

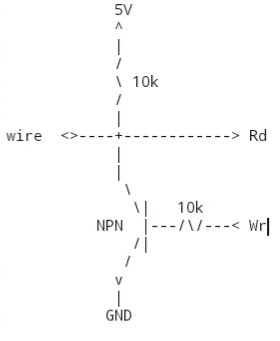

The LM339 quad comparator has an Open-Collector output, thus, the wire (marked with an X which is really two point-to-point arrows, indicating it's bidirectional) is either pulled up by the resistor, or tied down through the transistor in the 339's output. (Both sides of that wire work the same, so it could be pulled-down at the other end).
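That shared-wire behavior can be sketched in a few lines of Python. This is only a toy logic model under my own assumptions (the function name is made up, and it ignores all the analog details like rise time): the wire reads high only when nobody is pulling it down.

```python
def link_wire_level(computer_pulls_low: bool, calculator_pulls_low: bool) -> int:
    """Logic level on one shared open-collector link wire.

    The pull-up resistor makes the wire read 1 unless at least one side
    turns on its output transistor and ties the wire to ground.
    """
    return 0 if (computer_pulls_low or calculator_pulls_low) else 1


# Walk through all four combinations of the two ends.
for pc in (False, True):
    for calc in (False, True):
        print(f"computer pulls low={pc}, calculator pulls low={calc} "
              f"-> wire={link_wire_level(pc, calc)}")
```

Either end can force a low, but neither end can force a high; a high only happens when both ends let go.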

The power is interesting... they draw a positive voltage from one or both of the RS-232 handshaking outputs, which ALSO are used for data output. In most cases there will never be reason to send 0x00; one of the bits is usually high. But, this circuit wouldn't handle outputting 0x00 for very long (as the capacitor drains), EXCEPT that if the outputs are both low, the positive rail is really only necessary to power the LM339, which has a wide voltage range... So, while it might usually be running from up to 15V, it could be running from as little as 3V as that capacitor discharges.

Pretty much all the internal voltages seem to be derived from zener regulators. (I haven't confirmed they're zeners, but it makes sense based on their polarity).

There's also the matter of the diodes at the comparator inputs... these may be zeners to assure the input to the comparator doesn't exceed V+, which could happen as the capacitor recharges, or they may just be regular diodes, so that -3 to -15V (indicating a '0') will be clamped to more like -0.6V, so as not to drive the input too far out of the comparator's input range. Regardless of whether they're zeners, they do that. So, it could be an interesting double-duty depending on the input level.

Now, there's one other thing... which I'm kinda surprised they got away with. Driving the RS-232 handshaking /inputs/ is done with the comparator output (when low) or a pull-up resistor (when high). But that means "low" is close to 0V... and the RS-232 spec says low should be -3 to -15V, and that anything between -3 and +3V should be considered invalid!
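To put numbers on that, here's a small Python sketch that sorts a voltage into the bands quoted above. The function name is my own invention, and it uses only the -3V/+3V figures from this post, nothing more refined from the actual spec document.

```python
def rs232_spec_level(volts: float) -> str:
    """Classify a receiver input voltage using the bands quoted in this log.

    -3V and below counts as "low", +3V and above as "high",
    and everything in between is the region the spec calls invalid.
    """
    if volts <= -3.0:
        return "low"
    if volts >= 3.0:
        return "high"
    return "invalid"


# 0V and 0.6V are roughly what an open-collector output pulling down gives.
for v in (-12.0, -3.0, 0.0, 0.6, 5.0):
    print(f"{v:+.1f}V -> {rs232_spec_level(v)}")
```

By those bands, the cable's "low" of roughly 0V to 0.6V lands in the invalid region, which is exactly the thing that surprised me.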

So, I'm aware that folk get away with this in "hacks" connecting MCUs almost directly (without dedicated level-shifters or line-drivers, or even a transistor) to their computers' serial ports... but to think it reliable-enough to put into a product makes me wonder... That's /probably/ part of the reason they say it's "For Windows [Computers Only]" from back in the day when that meant an x86 with long-standardized "hacks" that weren't within official specs...

PCs of the ~386-Pentium era were based on many such hacks becoming standardized, e.g. the original floppy controller chip could only handle one bit rate, so when they came out with 1.2MB 5.25in floppies, they added a small circuit to change the clock source depending on whether you put a 1.2MB floppy, or a 360KB floppy in the drive... but /kept/ the same floppy-controller chip. Later floppy controller chips just embedded that "hack" within the silicon, keeping compatibility with that hack, rather than redesigning with a new strategy entirely.

Similarly, the original parallel port was output-only on its data pins... but making it bi-directional was a simple matter of adding a 74244 buffer and a single D-flip-flop... which could easily be done by an end-user... So, eventually, it became standardized in exactly that way.

But we're talking about serial ports... So, I'm pretty certain they too have gone far out of the RS-232 specs. One example is the baud-rate, which, per the spec, can't be nearly as fast as we can now set it, because the spec is very clear about /slew/ rates (being quite slow) in order to reduce noise, signal bounce, etc. over the long distances RS-232 was designed for... (Imagine a dumb-terminal in an office on the third floor of a building, connected to a mainframe in the basement). Home PCs seldom did anything even remotely like that, so got away with many "cut corners" or "hacks" that aren't at all within the RS-232 spec... yet, became standardized, amongst PCs.

Which leads me to wonder if there's some list of such hacks/standards... maybe even "specs."

Because, in the case of 0V (or maybe even up to 0.6V, being tied to ground through a transistor) being an acceptable "low"... well, frankly, I don't think nearly so many "home hackers" were connecting microcontrollers to serial ports in such ways way back then... as for it to become standardized. Serial, of the era, was still a pretty complicated thing, often requiring dedicated UART chips... We're not talking about a simple shift-register, here... because of the "Asynchronous" [A] in UART. Microcontrollers with inbuilt UARTs were still pretty new/niche in comparison to, say, folk making TTL circuits attached to their parallel port. And using handshaking lines in a serial port as though they're general-purpose inputs and outputs would be niche, as well, considering it'd be much easier to do-so with a parallel port already working at TTL levels.

So, I have a hard time imagining this "hack" had enough basis in such standardizations to work across many different implementations... remember, at one point many different manufacturers were building the "bridge" chips that contained all the circuitry for floppy controllers, IDE controllers, parallel ports, serial ports, and more, all in a single chip. VIA and Intel were big ones, but I'm sure there were many others... So, how, then, would TI "get away" with such a far-out-of-spec "hack" as to consider it reliable enough to market?

Maybe they were "riding on the tails" of others who'd already "done the research" (and indirectly set the standards)... e.g. PDAs were pretty common... or maybe serial mice (duh)... and maybe 0V=low was already standardized. Or maybe they really did the research themselves, testing with the leading UARTs of the time... but, it's hard to imagine they really saved a whole lot of money implementing it this way... it'd take, what, two extra transistors and one extra wire to use the negative "rail"...

Which also leads me to wonder about all the circuitry used for power harvesting... if they'd've just added one more wire they could've had power regardless of the data output, and fewer rectifier diodes... if they were really so cost-conscious then surely they'd've saved a few pennies by not putting [gold-plated?] connector-pins in the unused pins in the DB-9.

Heh!

I dunno.

Anyhow, it's an interesting implementation... some clever design went into it.

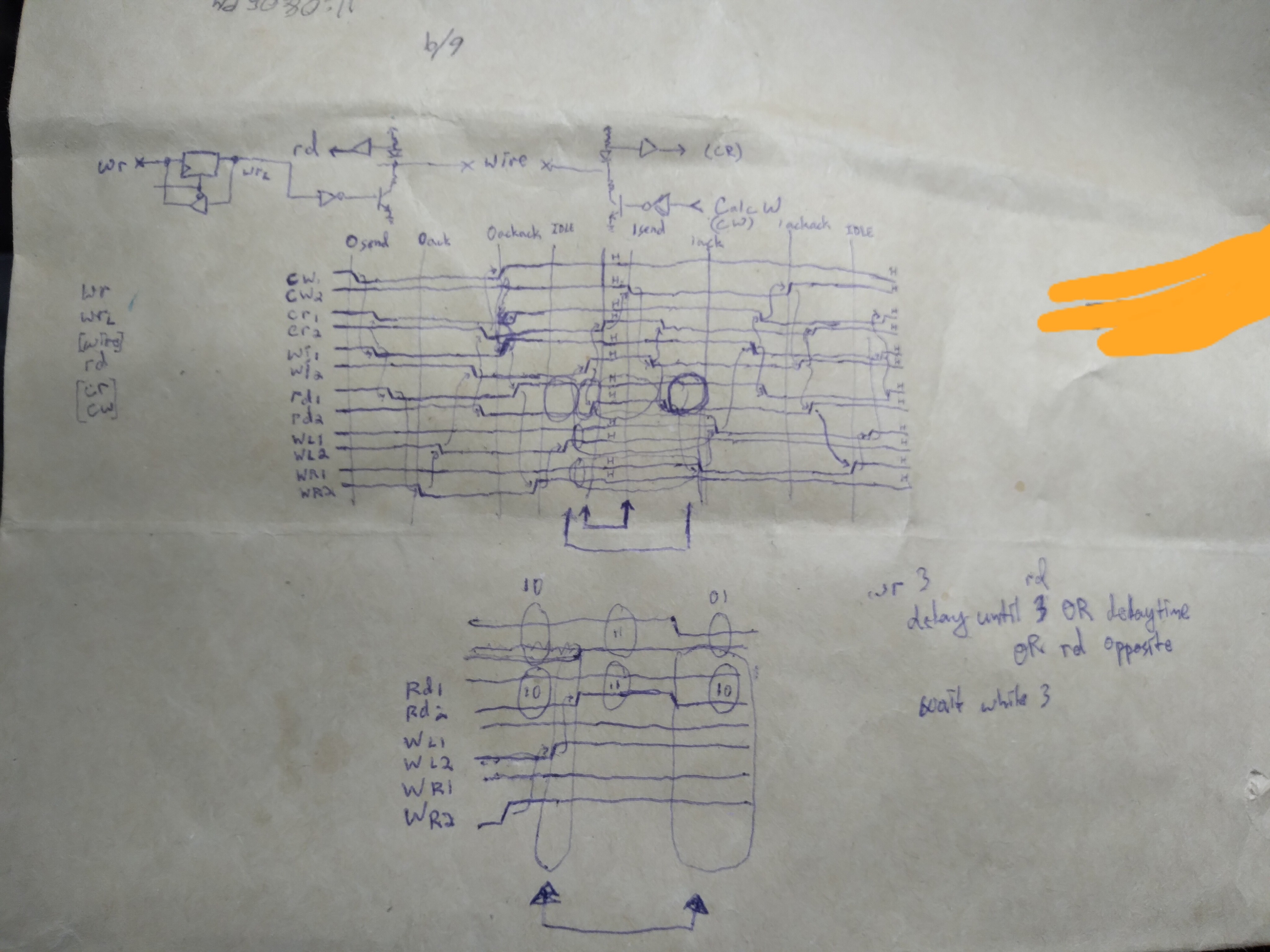

It might also help to answer some other questions I had before... for example, when writing a bit, it takes some time for the change to be read-back... There are a LOT of delays in the process from sending the "write Handshake signals" command to their actually being written at the wires... The OS has to send the command to the driver, the driver to the UART, the UART to the actual pins... then many delays thereafter due to capacitance in the diodes and the propagation time through the comparator, and who knows about the effects of sagging voltages on the capacitor... the output capacitance and the pull-up resistance... and, of course, I'm making it worse by doing this via USB-to-serial dongle. But, some of those delays shouldn't be noticeable through the USB conversion. Sending a write command, followed by a read command, they *should* happen in order, right? Regardless of how long each command takes through the OS, then driver, then over USB packets. But, when I send a write, it doesn't get read-back until many reads later... that has to be circuitry, then, right?

The only other thought may be in how USB packets work... I'm not certain, but I think I recall that the packets usually occur all in one go... E.G. The computer asks the USB-Serial converter device for the data on its handshaking pins, the device responds *within* that *same* packet (I think). That would mean it has to already have that data ready to go stored in memory somewhere... it doesn't read those pins when-asked, it merely responds with the values it read from those pins the last time it (the microcontroller in the converter) checked. So, it's entirely possible it could be that those pins do toggle long before they're read-back as having-toggled.
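Here's a toy Python simulation of that idea. Everything in it is hypothetical (the class, the `refresh_every` knob, and the assumption that the converter re-samples its pins only every so many host reads); it is not how any particular converter chip is documented to work. It just shows that a cached snapshot alone would be enough to make a write show up several reads late.

```python
class CachedPinConverter:
    """Toy USB-serial bridge that answers reads from a cached pin snapshot."""

    def __init__(self, refresh_every: int):
        self.refresh_every = refresh_every  # hypothetical: host reads per re-sample
        self.pin = 1       # actual level at the wire
        self.cached = 1    # level the bridge's microcontroller sampled last
        self._reads = 0

    def write(self, level: int) -> None:
        # In this model the pin itself changes immediately.
        self.pin = level

    def read(self) -> int:
        self._reads += 1
        value = self.cached  # reply with whatever was sampled last time
        if self._reads % self.refresh_every == 0:
            self.cached = self.pin  # re-sample only after replying
        return value


def reads_until_seen(converter: CachedPinConverter, level: int) -> int:
    """Write a level, then count the reads needed before it is read back."""
    converter.write(level)
    count = 0
    while True:
        count += 1
        if converter.read() == level:
            return count


print(reads_until_seen(CachedPinConverter(refresh_every=4), 0))  # 5
print(reads_until_seen(CachedPinConverter(refresh_every=1), 0))  # 2
```

Even with zero circuit delay at the pin, the write isn't seen until the read after the next re-sample, so "many reads later" doesn't by itself prove the delay is in the circuitry.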

In the case of trying to run the "Blacklink" through a USB converter, these extra delays shouldn't make it unusable with the original code designed to work with a "real" serial port, because of the handshaking in TI's linking protocol (indeed I have used it, after adding /dev/ttyUSB0 to the code). But it might slow things a bit (indeed, it's going VERY slow, though, again, the slowest part seems to be the calculator's response to the end of a bit transmission, rather than the long back-and-forth communication process of transmitting the bit!).

So, I've still got some thinking to do.

The handshaking process *should* account for any of the circuitry/OS/USB delays, without having to explicitly add additional delays...

...

Oh, some other "standards" this cable relies on:

Current-output of the computer's handshaking outputs! This has a lot of circuitry in it, and zener-regulators aren't exactly efficient... (maybe going back to serial mice?)

It's also plausible the pull-up on the linking-wires, at the "computer-side" actually usually does nothing, due to the zener-regulator plausibly usually having a lower voltage than the calculator's pull-up at the other end. This'd reduce current-consumption from the computer's handshaking outputs, but would also increase the transition times from low to high. Again, the handshaking protocol should account for this added delay. But, it also means a little more power draw from the calculator.

My reasoning, here, is based on the zener regulator that outputs Vpu... since that drives the pull-up resistors for *both* link wires. If it only handled one wire, it'd be possible to tune those resistors for the least amount of current "wasted" through the zener, accounting for only a couple states. But, because two circuits rely on it, there are four possible states it has to handle (well, more if you consider the fact that both the calculator and the computer can drive the wire low, and their circuits differ slightly). So, the resistances are a lot more difficult to fine-tune.

Instead, they probably went with something more like the "10-to-one rule [of thumb]" usually used with voltage dividers, which says basically, if you need 1mA of current from your voltage divider, then run 10mA through it, and your simple voltage-divider-equations will get yah close-enough. But, running ten times the current through the resistor above the zener diode, here, could be a bit of a waste, especially since it'd be ten times the worst-case, which is when all the outputs on both link wires are low. And still wasting roughly the same power through the zener when all outputs are high.

So, then, instead, maybe that zener-regulator is intentionally designed to output a lower voltage than a normal "high" on those wires, and the zener would "drop out" when the calculator's pull-ups can do the job. I think I need to think about this a bit more to be sure it makes sense... but, if something like that is the case, then that could add delay to the output transitions; charging capacitances with fewer current paths, higher resistance, from positive.
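For a feel of what the 10-to-one rule costs in a zener regulator, here's a quick Python sketch. The 9V supply, 5V zener, and 1mA load are made-up round numbers for illustration, not values from this cable, and both function names are my own.

```python
def zener_series_resistor(v_supply: float, v_zener: float,
                          i_load: float, margin: float = 10.0) -> float:
    """Series resistor sized so that margin * i_load flows through it."""
    i_total = margin * i_load
    return (v_supply - v_zener) / i_total


def zener_current(v_supply: float, v_zener: float,
                  r_series: float, i_load: float) -> float:
    """Current left over for the zener itself at a given load current."""
    return (v_supply - v_zener) / r_series - i_load


# Hypothetical numbers: 9V in, 5V zener, 1mA worst-case load.
r = zener_series_resistor(9.0, 5.0, 0.001)
print(f"series resistor: {r:.0f} ohms")
print(f"zener current at full load: {zener_current(9.0, 5.0, r, 0.001) * 1000:.1f} mA")
print(f"zener current at no load:   {zener_current(9.0, 5.0, r, 0.0) * 1000:.1f} mA")
```

With those numbers the zener burns 9 to 10 times the load current no matter what the link wires are doing, which is the waste I'm guessing they wanted to avoid.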

Again, the low level linking protocol's handshaking should account for such added delays, which I find rather intriguing about its design. It should, basically, work properly at the fastest rate possible between the two systems, regardless of their inherent differences in propagation-delays or response times, etc. It's really quite clever. But, the weird thing I discovered a while back about how the calculator seems to transition so quickly from the last bit's final state to the next bit's first state, making it seemingly impossible to determine when the calculator is ready for the next bit, so adding some arbitrary delay to account for it, seems to completely destroy that elegance of the handshaking protocol! Surely I'm missing something!

The best I can guess is that the protocol is expected to be edge-triggered, at both ends, and doing-so via USB-dongle isn't really doable since most such dongles don't have edge-trigger detection implemented (at least in their drivers). HOWEVER, "real" serial ports do... and... that functionality is not used in tilp2's serial linking library... which, again, might be why the delay is there. BUT: IF that inter-bit transition pulse is as quick as it seems, (too fast to be detected between two successive reads) THEN it can't possibly be assumed to have travelled down the wire and overcome all the capacitances and propagation delays at the other end... even to be detectable as an edge... Thus, AGAIN destroying that handshaking elegance... so, I must be missing something...
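The "too fast to be detected between two successive reads" problem is easy to show with a little Python sketch. The timings are invented (microseconds, with a 1ms poll period), and the function name is hypothetical; it just samples a normally-high line that dips low for a while.

```python
def polled_levels(pulse_start_us: int, pulse_end_us: int,
                  poll_period_us: int, n_polls: int) -> list:
    """Level-sample a normally-high line that is low during [start, end)."""
    samples = []
    for i in range(n_polls):
        t = i * poll_period_us
        samples.append(0 if pulse_start_us <= t < pulse_end_us else 1)
    return samples


# A 400us low pulse that falls between two polls is never seen...
print(polled_levels(2200, 2600, 1000, 5))  # [1, 1, 1, 1, 1]
# ...while a 1200us pulse is caught by the poll at t=3000us.
print(polled_levels(2200, 3400, 1000, 5))  # [1, 1, 1, 0, 1]
```

Edge detection in hardware would latch the first case; plain polling of the levels can't.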

.......

8/18/21:

Measurements:

Driving a handshaking line directly from "5v" power from the USB dongle:

C1=4.51V

Vbias=1.62V

Vpu=4.14V

Driving that same line from a low 9V battery measuring a little over 8V:

C1=8.04V

Vbias=1.79V

Vpu=5.12V

I did NOT run measurements while powered via the DB9 on my dongle, as would usually be the case, because... well I'd need to power up the computer and boot the OS to take the PL2303 and HIN213 out of shutdown, AND, because the power at C1 would vary dramatically depending on the serial port it's connected to. (In a more recent log I note that the HIN213 may be /right at/ its limits driving this thing. So I've been lucky, I guess, that it's been functional-enough to do uploads/downloads!)

Key Factors:

Vpu seems to be regulated by a 5.1V zener.

(Won't do much with less than 5V available!)

Vbias seems to be regulated by a 1.7V zener.

(Of course, varies slightly with more current going through it)

Thus, Vin Low is <1.6V, Vin High is > 1.8V. This is for BOTH directions (from calculator AND from RS232).
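Those thresholds fall out of the two Vbias readings above. Here's a Python sketch treating the comparator as ideal (my assumption: no input offset, no hysteresis), with a made-up function name:

```python
# Vbias readings taken from the measurements in this log.
MEASURED_VBIAS = {"5V from USB dongle": 1.62, "low 9V battery": 1.79}


def comparator_sees_high(v_in: float, v_bias: float) -> bool:
    """Idealized comparator: an input above the bias reference counts as high.

    Ignores input offset voltage and any hysteresis (assumption).
    """
    return v_in > v_bias


for supply, v_bias in MEASURED_VBIAS.items():
    for v_in in (1.5, 1.7, 1.9):
        state = "high" if comparator_sees_high(v_in, v_bias) else "low"
        print(f"{supply}: Vbias={v_bias}V, Vin={v_in}V reads {state}")
```

An input of 1.7V reads high at one supply and low at the other, so only inputs below about 1.6V or above about 1.8V are unambiguous across both measurements.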

Other notes:

Unloaded it seems to take around 1mA at 8V. Loaded, that varies dramatically with the load.

HIN213 has 5k pull-to-ground resistors at the RS232 inputs. That nearly /halves/ the output voltage from the graphlink... thus the RS232-side pull-ups must be around 5k.

If the graphlink is running at 5V on C1, that voltage-divider on its outputs makes for a high that is just-at the Vin L->H threshold specs of the HIN213.

Also of-note: the LM339 is spec'd to run as low as 2V.
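The halving noted above is just a voltage divider between the graphlink's pull-up and the HIN213's pull-down. A Python sketch, assuming the pull-up is fed from roughly the C1 voltage and is about 5k (both of which are my inferences, not measured values), with a made-up function name:

```python
def loaded_high_voltage(v_supply: float, r_pullup: float, r_pulldown: float) -> float:
    """Voltage at the receiver input when a pull-up drives a pull-down load."""
    return v_supply * r_pulldown / (r_pullup + r_pulldown)


# ~5k pull-up (inferred) into the HIN213's 5k pull-to-ground, from a 5V rail.
print(loaded_high_voltage(5.0, 5_000.0, 5_000.0))
# Same divider using the 4.51V measured at C1 when fed from the dongle's 5V.
print(loaded_high_voltage(4.51, 5_000.0, 5_000.0))
```

Equal resistors give half the rail, so a 5V rail leaves only about 2.5V at the receiver for a "high".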

Eric Hertz
