It has taken about 8 months to get from the birth of this idea to Kinetic Soul 1.0, the first working prototype. Over these 8 months, Kinetic Soul was developed through three main modes of inquiry: theoretical, prototyping and human-centred.

Theoretical

The project was inspired by a few key findings discussed by other researchers.

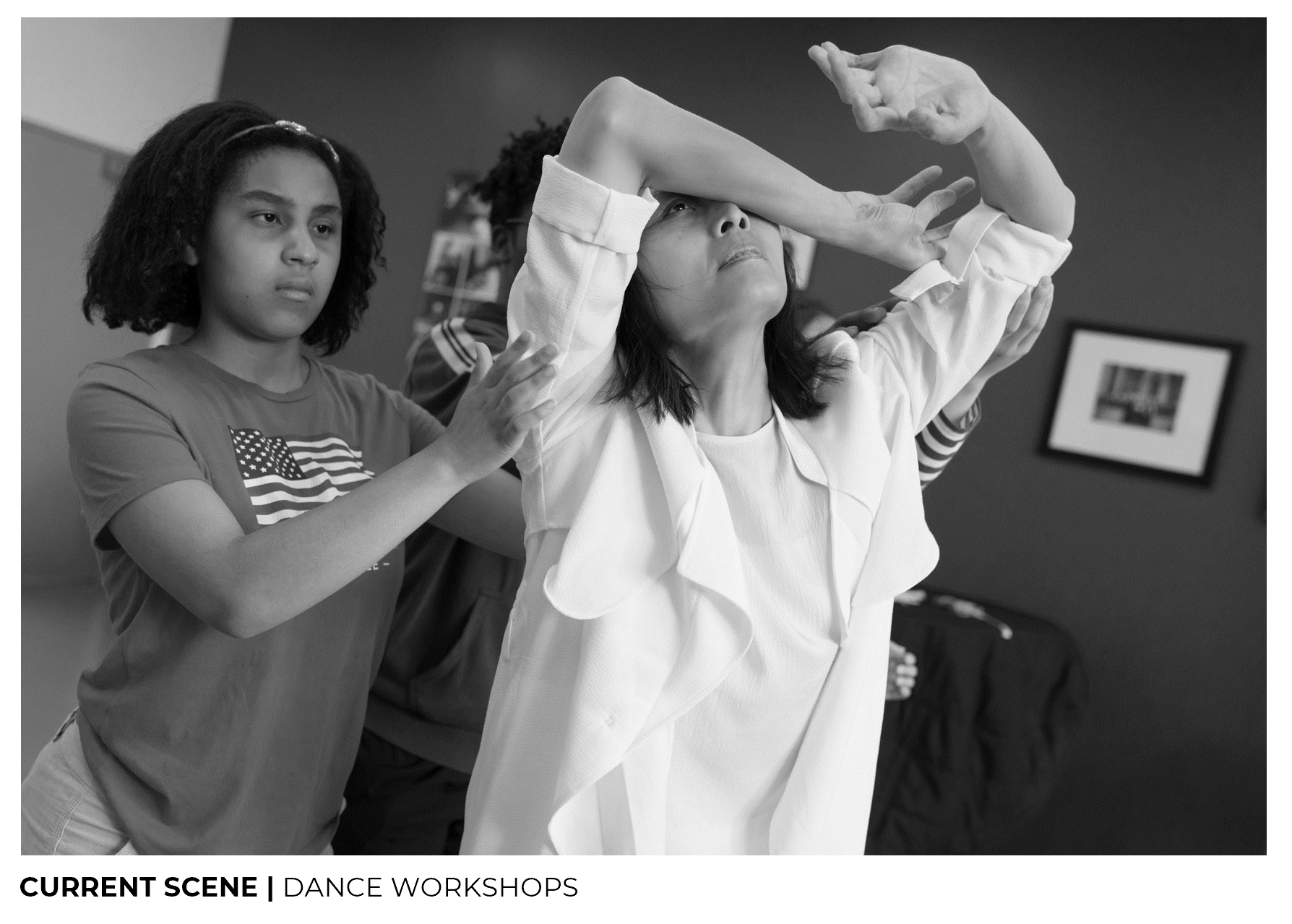

Looking at the current dance scene for the visually impaired (dance workshops), I saw opportunities to make the dance experience less intrusive and more accessible remotely.

Since dance is subjective by nature, how do we define it and break it down? I turned to Laban Movement Analysis, a method for structuring and interpreting human movement. It is organised into four main categories: body, effort, shape and space. Essentially, these describe what is moving, how it moves and where it moves.

From here, how do I then deconstruct dance into structured parameters?

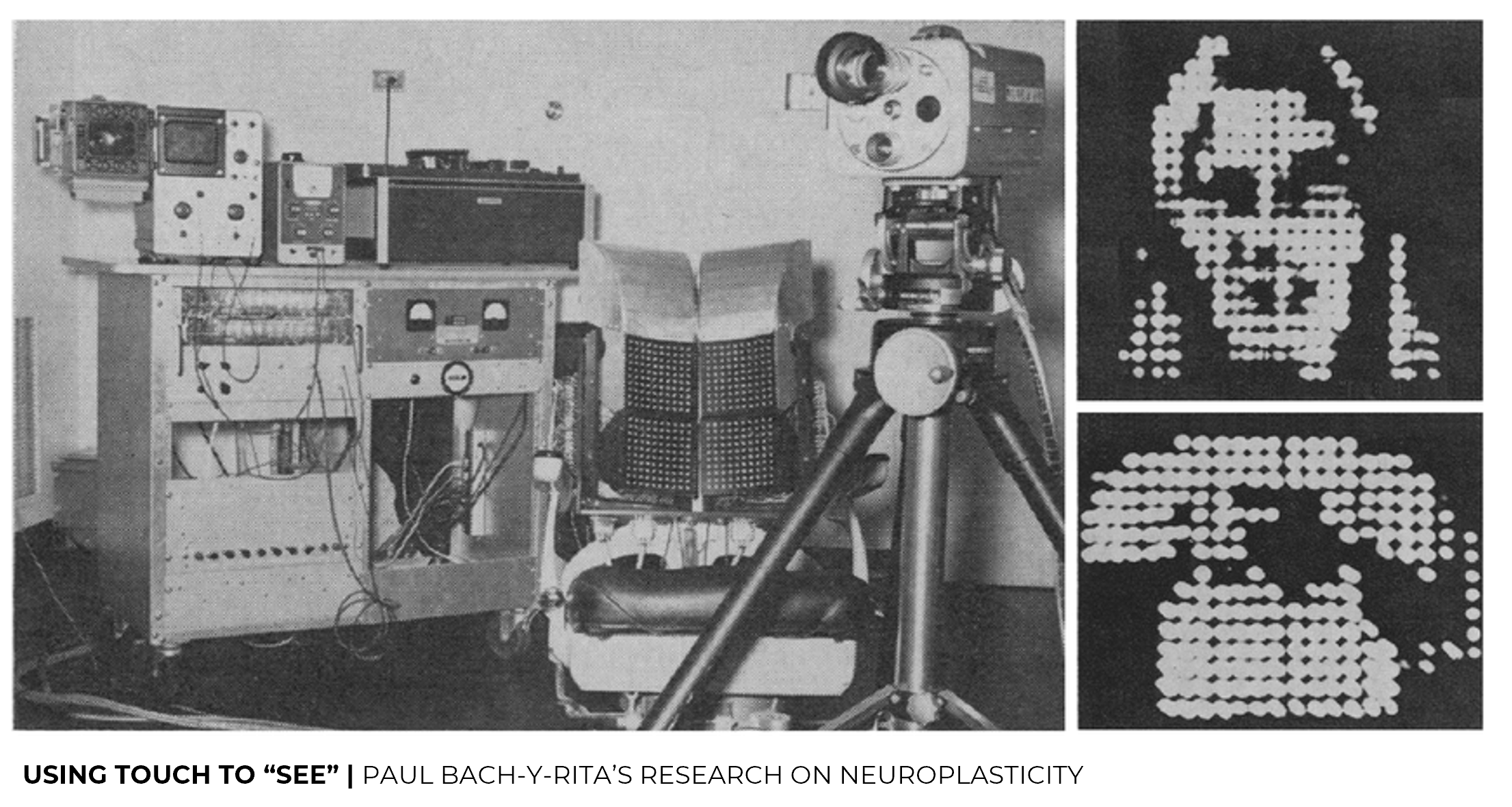

I referred to research by Paul Bach-y-Rita on neuroplasticity, which showed that the brain is far more flexible than we tend to think. For the visually impaired specifically, it showed that they can be taught to "see" through touch. In his "tactile television", captured images are projected onto the skin using haptic actuators, and studies showed that visually impaired people could perceive these images. This supported my idea of conveying visual information about deconstructed movement through haptics.

Prototyping

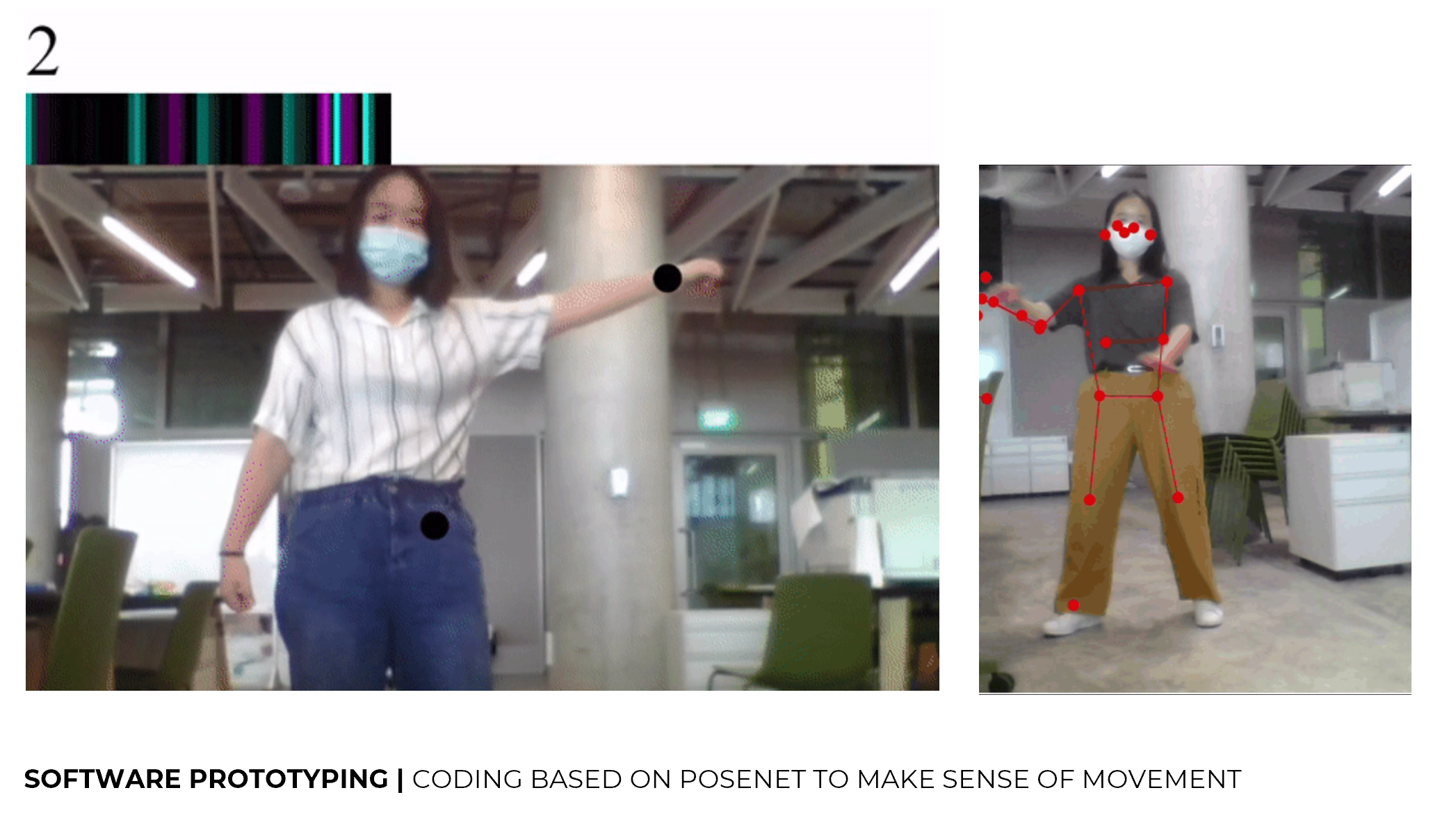

Another key approach was prototyping, covering both software and physical haptic prototypes.

I found PoseNet, a computer vision model that detects human figures in a video input and estimates the positions of key body joints. I explored how these key body joints could be interpreted to convey deconstructed movement. For example, the relations between points can be used to determine the distance between them, the range of motion, and the speed and direction of movement.
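
To make these relations more concrete, here is a minimal Python sketch of how distance, speed, direction and range of motion could be derived from keypoints. It assumes each frame has already been reduced to a dictionary mapping PoseNet-style part names (such as `leftWrist`) to (x, y) pixel coordinates, with low-confidence points filtered out, and that frames arrive at a fixed rate. The helper names are hypothetical and are not part of PoseNet itself.

```python
import math
from typing import Dict, List, Tuple

# Assumed format (hypothetical): one frame = {part name: (x, y) in pixels}.
Point = Tuple[float, float]
Frame = Dict[str, Point]


def distance(a: Point, b: Point) -> float:
    """Euclidean distance between two keypoints, e.g. shoulder to wrist."""
    return math.hypot(b[0] - a[0], b[1] - a[1])


def velocity(prev: Point, curr: Point, dt: float) -> Tuple[float, float]:
    """Speed (pixels per second) and direction (degrees) of one keypoint between two frames."""
    dx, dy = curr[0] - prev[0], curr[1] - prev[1]
    speed = math.hypot(dx, dy) / dt
    # Image y grows downwards, so dy is negated to make 90 degrees mean "upwards".
    direction = math.degrees(math.atan2(-dy, dx)) % 360
    return speed, direction


def range_of_motion(frames: List[Frame], part: str) -> Tuple[float, float]:
    """Horizontal and vertical extent covered by one keypoint across a sequence of frames."""
    xs = [f[part][0] for f in frames if part in f]
    ys = [f[part][1] for f in frames if part in f]
    if not xs:  # keypoint never detected in these frames
        return 0.0, 0.0
    return max(xs) - min(xs), max(ys) - min(ys)


fps = 30  # assumed camera frame rate
frames = [
    {"leftShoulder": (100.0, 100.0), "leftWrist": (100.0, 200.0)},
    {"leftShoulder": (100.0, 100.0), "leftWrist": (130.0, 160.0)},
]
print(distance(frames[0]["leftShoulder"], frames[0]["leftWrist"]))  # 100.0
print(velocity(frames[0]["leftWrist"], frames[1]["leftWrist"], 1 / fps))
print(range_of_motion(frames, "leftWrist"))  # (30.0, 40.0)
```

Because these values are measured in image pixels, they would likely need normalising in practice (for example by shoulder width) so that they stay comparable as a dancer moves closer to or further from the camera.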

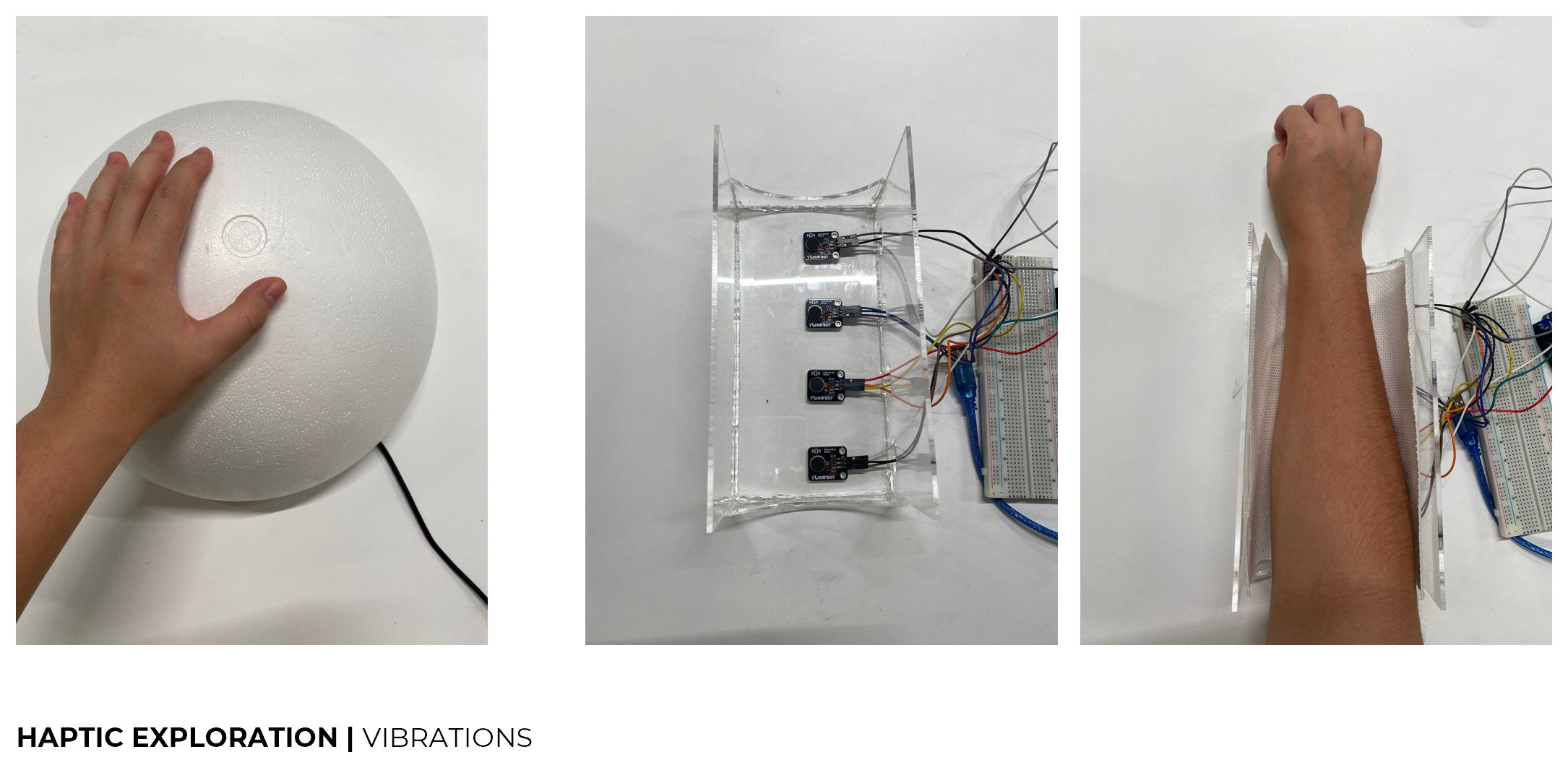

I explored two uses of vibration: one felt through the palms, and the other felt through different parts of the body, such as the arm.

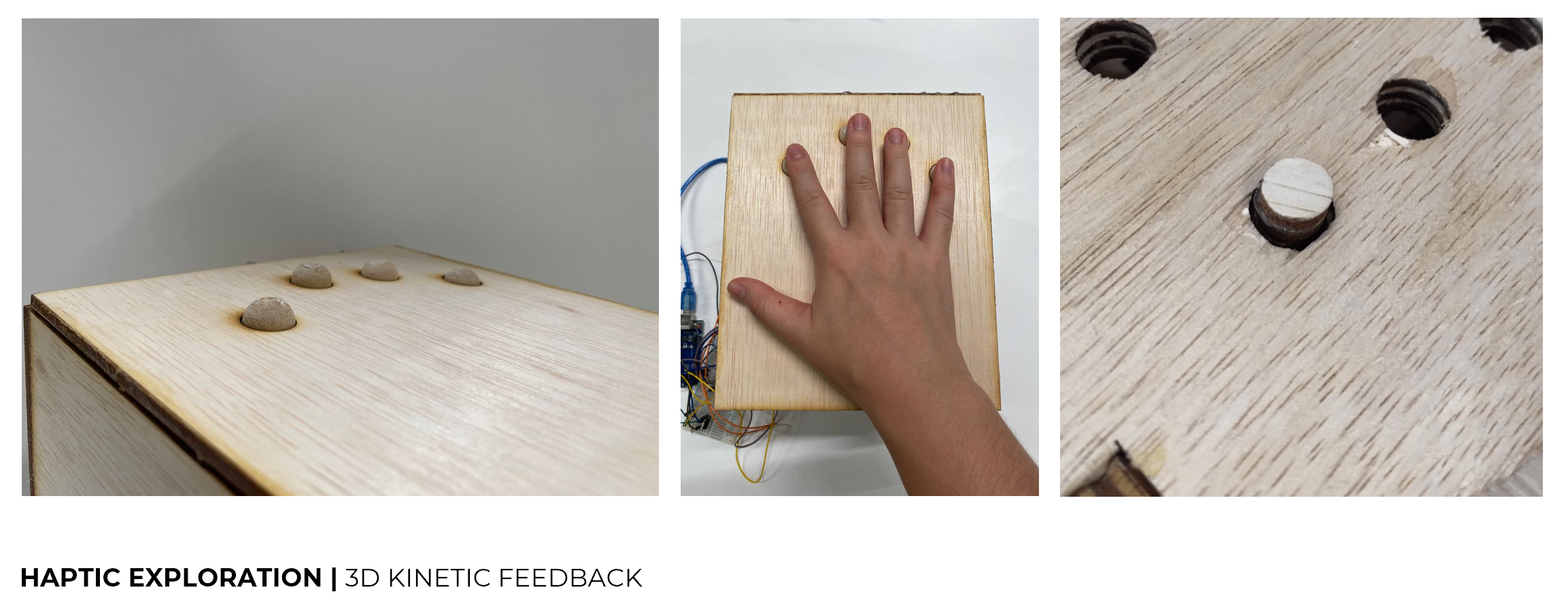

I also explored kinetic feedback using 3D forms, and noticed a difference in how the two were perceived. With 3D kinetic feedback, the visually impaired could perceive the feedback through touch more clearly and definitely. With vibrations, by contrast, it was hard for them to tell where each vibration was located within the same physical interface.

Human-centred

I also took a human-centred approach, connecting with a few visually impaired people to better understand them and their view of dance.

As I built my prototypes, I checked in with my target audience through workshops to gather their feedback.

They shared that they felt more comfortable and familiar with a touch experience like this, which has some similarities to reading Braille.

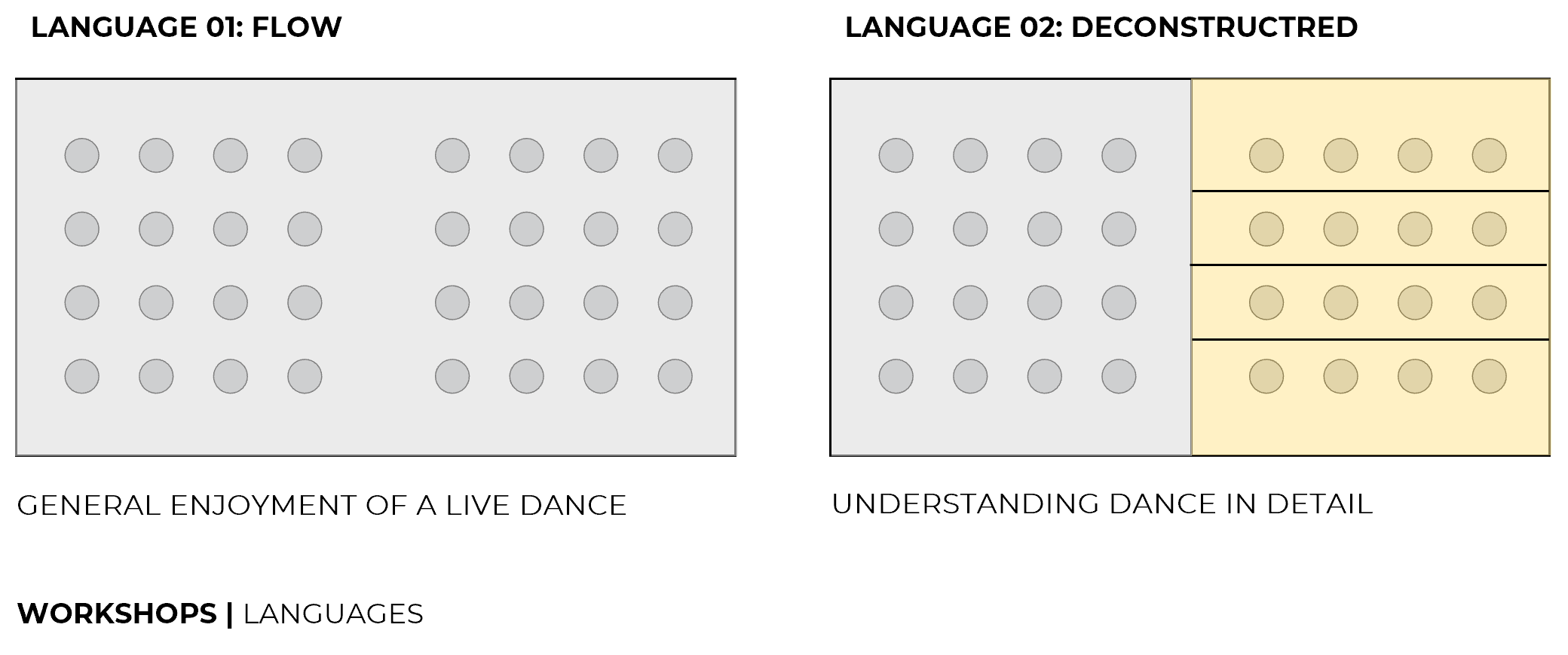

We discussed how dance should be communicated, and each participant had their own preferences. After a few discussions and tests, two main ways emerged in which they would like to experience dance, so I decided to offer two languages on this platform: language one communicates the flow of dance, and language two communicates its deconstructed details.

With language one, they felt they did not have to process too much information and could still get the overall feel of the dance. Language two is more structured and gave them more details about the dance, which a few of them appreciated more. To cater to the different purposes and preferences of my target audience, I am developing the platform to support both languages. Language one can be used to render a dance in real time, while language two can be used like subtitles: one can rewatch a dance to understand its details, including replaying or even slowing down a segment of the performance.
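
As an illustration of the subtitle-style use of language two, the following Python sketch treats it as a list of timestamped cues and re-times a selected segment for replay, stretching it by a slow-down factor. The `Cue` structure, the `replay_segment` function and the cue texts are hypothetical examples, not the platform's actual data format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Cue:
    """Hypothetical language-two 'subtitle': when it occurs and what it describes."""
    time: float        # seconds from the start of the performance
    description: str   # illustrative text, e.g. "right arm: fast, upward"


def replay_segment(cues: List[Cue], start: float, end: float, slowdown: float = 1.0) -> List[Cue]:
    """Return the cues between start and end, re-timed from 0 and stretched by `slowdown`.

    slowdown=2.0 plays the segment at half speed, so every gap between cues doubles.
    """
    if slowdown <= 0:
        raise ValueError("slowdown must be positive")
    return [
        Cue((c.time - start) * slowdown, c.description)
        for c in cues
        if start <= c.time <= end
    ]


cues = [
    Cue(0.0, "both arms: slow, outward"),
    Cue(1.5, "right arm: fast, upward"),
    Cue(2.0, "torso: turn left"),
    Cue(4.0, "legs: step forward"),
]
for cue in replay_segment(cues, start=1.5, end=2.0, slowdown=2.0):
    print(f"{cue.time:.1f}s  {cue.description}")
```

Keeping cue times relative to the start of the performance means the same list can serve both a full rewatch and a slowed-down replay of any chosen segment.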

-----------------------------

Moving forward, I aim to keep developing this concept and prototype through these three main approaches. Areas I am looking at include making the prototype more robust, improving the touch experience and refining the two languages.

Shi Yun
