After doing 3D LIDAR-Camera Sensor Fusion, we need to do the mathematical variation for 2D, as the assembled gadget is equipped with 2D RP LIDAR A1 to minimize the cost.

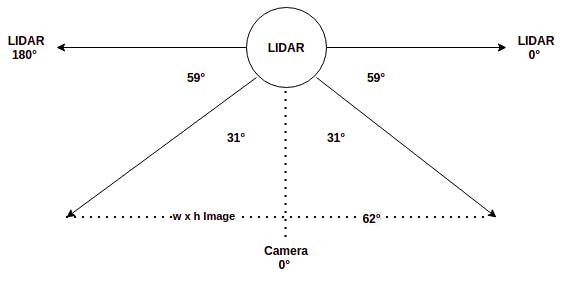

2D LIDAR scans the environment in a 2D plane, orthogonal to the camera plane. The rotating scan will estimate the distance to the obstacle, for each angle from 0° to 360°. Due to the placement of LIDAR w.r.t. Pi Cam in the gadget, the camera is at +90° on the LIDAR geometry. However, note that the Field of View of Pi cam V2 is 62°x48° in horizontal x vertical direction respectively.

The integrated front view of the device is as shown below.

As both the LIDAR and Camera sensor data is available in the frontal 62° arc, we need to fuse the data. In the LIDAR scan plane, the camera data starts from +59° to +59° + 62° = 121°. We can run object detection on the image to get bounding boxes for the objects of interest. Eg: human, car, bike, traffic light, etc. Since 2D LIDAR has only width information, consider only the x_min and x_max of each bounding box.

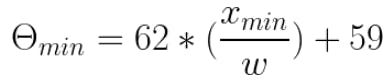

We need to compute the LIDAR angle corresponding to an image pixel, in order to estimate the distance to the pixel. To find the distance to the object inside the bounding box, compute θ_min and θ_max corresponding to x_min & x_max using the below formula, based on the above diagram,

Now you can find the distance to each angle between θ_min and θ_max based on the latest LIDAR scan data. Then compute the median distance of all LIDAR points that subtends the object bounding box to estimate the object depth. If the distance is below a threshold, then trigger a warning based on the angle. Repeat warning, if the box center shift by a significant distance in subsequent frames.

for detection in detections:

if detection.score > threshold:

class_id = int(detection.id)-1

# Potential Objects: person, bicycle, car, bus,

# truck, traffic light, street sign, stop sign

if class_id not in [0, 1, 2, 3, 5, 7, 9, 11, 12]:

continue

det_label = labels[class_id] if labels and

len(labels) >= class_id else '#{}'.format(class_id)

xmin = max(int(detection.xmin), 0)

ymin = max(int(detection.ymin), 0)

xmax = min(int(detection.xmax), x_width)

ymax = min(int(detection.ymax), y_height)

x_mid = np.mean([xmin, xmax])

y_mid = np.mean([ymin, ymax])

if not isAnnounced(det_label, x_mid, y_mid):

# theta min and max corresponds to Pi cam FoV angle

# Picam has 62 degrees horizontal FoV. Need to

# convert to LIDAR angles at LIDAR node.

theta_min = xmin / (x_width / 62) + 59

theta_max = xmax / (x_width / 62) + 59

now = time.localtime()

client.publish("object/getdistance", str(det_label) + '|' +

str(theta_min) + '|' + str(theta_max) + '|' +

str(now.tm_min * 60 + now.tm_sec))

objectsInFrame.append(det_label)

objectMidPts.append((x_mid, y_mid))

# List of objects and its mid points in last 30 frames will be stored in dqueue

if len(objectsInFrame) > 0:

objLastFrames.extend([objectsInFrame])

objMidsLastFrames.extend([objectMidPts])

noObjFrames = 0

else:

noObjFrames += 1

# If no objects found in last 30 frames, reset the queue

if noObjFrames >= 30:

objMidsLastFrames.clear()

objLastFrames.clear()

noObjFrames = 0

Anand Uthaman

Anand Uthaman

Discussions

Become a Hackaday.io Member

Create an account to leave a comment. Already have an account? Log In.