After object localization and tracking, we need to detect the gesture made with the object. The gesture should be simple so that it is cheap to detect. Let's define the gesture as a circular motion and then try to detect it.

i) Auto-Correlated Slope Matching

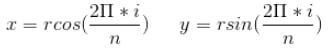

- Generate `n` points along the circumference of a reference circle with radius = r.

- Compute the slopes of the lines connecting consecutive points in this reference sequence.

- Generate a point cloud using any of the object localization methods above. For each frame, add the center of the localized object to the point cloud.

- Compute the slopes of the lines connecting consecutive points in the point cloud.

- Compute correlation of slope curves generated in the above steps.

- Find the index of the maxima of the correlation curve using np.argmax()

- Rotate the point cloud queue by index value for the best match

- Compute circle similarity = 1 - cosine distance between the point clouds

- If the circle similarity > threshold, then the circle is detected and the alert is triggered.
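The steps above can be sketched with NumPy roughly as follows. This is only a minimal sketch under assumptions that are not in the original write-up: the tracked points are assumed to be already resampled to the same count as the reference circle, the "slope" is represented as a direction angle from `np.arctan2` (to avoid dividing by zero on vertical segments), the correlation is taken as a circular one, and the names `circle_points`, `segment_angles` and `circle_similarity` are hypothetical helpers.

```python
import numpy as np

def circle_points(n, r=1.0):
    """n points on a circle of radius r, counter-clockwise (hypothetical helper)."""
    theta = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
    return np.column_stack((r * np.cos(theta), r * np.sin(theta)))

def segment_angles(points):
    """Direction angle of each segment, treating the sequence as a closed loop."""
    nxt = np.roll(points, -1, axis=0)   # nxt[i] is points[i + 1], wrapping around
    d = nxt - points
    return np.arctan2(d[:, 1], d[:, 0])

def circle_similarity(track):
    """Similarity (1 - cosine distance) between a track and a reference circle."""
    track = np.asarray(track, dtype=float)
    n = len(track)
    ref = circle_points(n)

    # Assumption: remove position and size so only the shape is compared.
    obs = track - track.mean(axis=0)
    scale = np.linalg.norm(obs, axis=1).mean()
    if scale == 0:
        return 0.0                      # all points identical: no motion
    obs = obs / scale

    ref_s = segment_angles(ref)
    obs_s = segment_angles(obs)

    # Circular correlation of the two slope curves, one value per shift.
    corr = np.array([np.dot(ref_s, np.roll(obs_s, -k)) for k in range(n)])
    shift = int(np.argmax(corr))

    # Rotate the point queue by the best shift, then compare the point clouds.
    aligned = np.roll(obs, -shift, axis=0)
    a, b = ref.ravel(), aligned.ravel()
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Usage: a circle traced from a different starting point, at another size and position.
track = 50.0 * np.roll(circle_points(36), -7, axis=0) + np.array([100.0, 200.0])
THRESHOLD = 0.9                         # hypothetical value, tune on real data
print(circle_similarity(track) > THRESHOLD)
```

Note that the reference here is traced counter-clockwise, so a clockwise gesture would need a mirrored reference (or a reversed point queue) to match.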

ii) Concavity Estimation using Vector Algebra

If all the vectors in the point cloud are concave, that is, they keep turning in the same direction, then they represent circular motion.

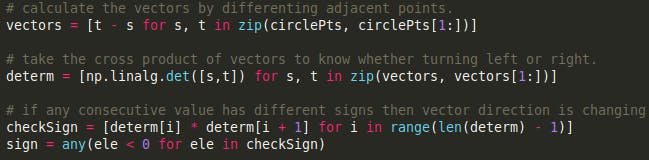

- Compute the vectors by differencing adjacent points.

- Compute vector length using np.linalg.norm

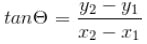

- Exclude outlier vectors by setting bounds on the distribution of vector lengths

- If the distance between points < threshold, then ignore the motion

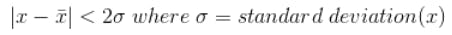

- Take the cross product of vectors to detect left or right turns.

- If two consecutive cross products have different signs, then the direction is changing. Hence, compute a rolling multiplication of adjacent values.

- Find the locations of direction changes (the indices where the rolling product is negative)

- Compute the variance of the negative indices

- If all rolling multiplication values > 0, then motion is circular

- If the variance of negative indices > threshold, then motion is non-circular

- If % of negative values > threshold, then motion is non-circular

- Based on the 3 conditions above, the circular gesture is detected.
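As a rough illustration, the checks above could be combined as in the sketch below. The way the three conditions are combined, the median-based outlier bound, all threshold values and the name `is_circular_motion` are assumptions made for this example, not values from the experiments.

```python
import numpy as np

def is_circular_motion(points, min_step=5.0, max_step_factor=3.0,
                       min_turns=10, max_neg_fraction=0.1, max_neg_variance=4.0):
    """Hypothetical helper: decide whether a tracked 2D path keeps turning one way."""
    pts = np.asarray(points, dtype=float)

    # Vectors between adjacent points and their lengths.
    vectors = np.diff(pts, axis=0)
    lengths = np.linalg.norm(vectors, axis=1)

    # Ignore insignificant motion (short vectors).
    keep = lengths > min_step
    vectors, lengths = vectors[keep], lengths[keep]
    if len(vectors) == 0:
        return False

    # Assumed outlier bound: drop vectors much longer than the median length.
    vectors = vectors[lengths <= max_step_factor * np.median(lengths)]
    if len(vectors) < 3:
        return False

    # z-component of the 2D cross product: the sign tells left turn vs right turn.
    cross = vectors[:-1, 0] * vectors[1:, 1] - vectors[:-1, 1] * vectors[1:, 0]

    # Rolling multiplication: negative where the turn direction flips.
    rolling = cross[:-1] * cross[1:]
    if len(rolling) < min_turns:
        return False                     # too little movement to judge

    # Condition 1: every product positive, so the path always turns the same way.
    if np.all(rolling > 0):
        return True

    # Assumption: a zero product (collinear points) counts like a direction change.
    bad_idx = np.flatnonzero(rolling <= 0)

    # Condition 2: direction changes spread widely along the path.
    if np.var(bad_idx) > max_neg_variance:
        return False

    # Condition 3: too large a share of direction changes.
    if len(bad_idx) / len(rolling) > max_neg_fraction:
        return False

    return True

# Usage: a circle of 40 points passes, a zigzag does not.
theta = np.linspace(0.0, 2.0 * np.pi, 40, endpoint=False)
circle = np.column_stack((100 * np.cos(theta), 100 * np.sin(theta)))
zigzag = [(10 * i, 10 * (i % 2)) for i in range(40)]
print(is_circular_motion(circle), is_circular_motion(zigzag))
```

Because only the sign of the products matters, the check works for both clockwise and counter-clockwise gestures.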

From the experiments, it became clear that we can use Object Color Masking (to detect the object) and efficient algebra-based concavity estimation (to detect the gesture). If the object is far away, only a small circle is seen, so we need to scale up the vectors based on object depth in order not to miss the gesture. For the purpose of the demo, we will deploy these algorithms on an RPi with a camera and see how they perform. Now let's see the gesture detection mathematical hack as code.

    # Excerpt; assumed to sit inside the per-frame loop (hence the final `continue`)

    # loop over the set of tracked points
    for i in range(1, len(pts)):
        # if either of the tracked points are None, ignore them
        if pts[i - 1] is None or pts[i] is None:
            continue

        # otherwise, compute the thickness of the line and draw the connecting lines
        thickness = int(np.sqrt(args["buffer"] / float(i + 1)) * 2.5)
        cv2.line(frame, pts[i - 1], pts[i], (0, 0, 255), thickness)

    isCirclePts = [p for p in pts if p is not None]

    if len(isCirclePts) > 30:
        vectors = [np.subtract(t, s) for s, t in zip(isCirclePts, isCirclePts[1:])]
        vectors = [vector for vector in vectors if np.linalg.norm(vector) > 5]

        # To find concavity, check vector direction using Right Hand rule
        determ = [np.linalg.det([s, t]) for s, t in zip(vectors, vectors[1:])]
        directionChange = [determ[i] * determ[i + 1] for i in range(len(determ) - 1)]

        # when the movement of the object is
        # insignificant, consider it stationary
        if len(directionChange) < 10:
            alarmTriggered = False
            continue

Anand Uthaman
