I implemented the algorithm below to solve a Depth AI use case, social distance monitoring, using monocular images. Its output is shown at the bottom.

a) Feed the input image frames, sampled every couple of seconds, to obtain a disparity map and the object detections for each frame.

b) Fuse the disparity map with the object detection output, similar to visual sensor fusion. More concretely, take the depth values of the pixels inside each object's bounding box and compute their median as the estimate of the object's depth.

c) Find the centroid of each object's bounding box and assign the object's median depth to it.

d) For each pair of objects in the image, find the depth difference and the (x, y) axis difference. Multiply the depth difference by a scaling factor.

e) Use the Pythagorean theorem to compute the Euclidean distance between each pair of bounding boxes, treating the scaled depth difference as one axis. The scaling factor for depth needs to be estimated during the initial camera calibration.
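Steps b) to e) can be sketched as small NumPy functions, kept separate from the drawing code so the logic can be checked on its own. This is a minimal sketch under assumptions: boxes are `(xmin, ymin, xmax, ymax)` pixel tuples, `disp` is a single-channel 2-D disparity array whose median inside a box serves as the depth estimate, and the helper names (`object_depths`, `centroids`, `calibrate_scaling_factor`, `violating_pairs`) are hypothetical. The calibration rule in `calibrate_scaling_factor` is also an assumption, since the write-up only says the factor is estimated during initial camera calibration: it picks the factor that makes one known real separation measure the same along the x axis and along the depth axis. As in the code listing, the distance combines the horizontal offset with the scaled depth offset.

```python
import numpy as np

def object_depths(disp, boxes):
    """Step b: median disparity inside each (xmin, ymin, xmax, ymax) box."""
    h, w = disp.shape[:2]
    depths = []
    for xmin, ymin, xmax, ymax in boxes:
        # Clamp the box to the image so the slice stays in bounds
        xmin, ymin = max(int(xmin), 0), max(int(ymin), 0)
        xmax, ymax = min(int(xmax), w), min(int(ymax), h)
        depths.append(float(np.median(disp[ymin:ymax, xmin:xmax])))
    return depths

def centroids(boxes):
    """Step c: centre (x, y) of each bounding box."""
    return [((xmin + xmax) / 2, (ymin + ymax) / 2)
            for xmin, ymin, xmax, ymax in boxes]

def calibrate_scaling_factor(pixel_dx, depth_diff):
    """Hypothetical calibration: a known real separation measures pixel_dx
    pixels side by side and depth_diff disparity units front to back;
    their ratio makes the two axes comparable."""
    return pixel_dx / depth_diff

def violating_pairs(centres, depths, scaling_factor, threshold=200.0):
    """Steps d-e: Euclidean distance from the x offset and the scaled
    depth offset; return index pairs closer than the threshold."""
    pairs = []
    for i in range(len(centres)):
        for j in range(i + 1, len(centres)):
            dx = centres[i][0] - centres[j][0]
            dz = (depths[i] - depths[j]) * scaling_factor
            if np.hypot(dx, dz) < threshold:  # sqrt(dx**2 + dz**2)
                pairs.append((i, j))
    return pairs

# Example with a synthetic disparity map (illustrative numbers only)
disp = np.zeros((100, 300))
disp[:, :100] = 0.50
disp[:, 100:200] = 0.52
disp[:, 200:] = 0.90
boxes = [(10, 10, 60, 90), (110, 10, 160, 90), (220, 10, 270, 90)]
depths = object_depths(disp, boxes)
scale = calibrate_scaling_factor(pixel_dx=200, depth_diff=0.2)
print(violating_pairs(centroids(boxes), depths, scale))  # [(0, 1)]
```

In the example, the two left-hand people are 100 px apart horizontally and 0.02 apart in disparity, which scales to 20, giving a distance of about 102, below the threshold of 200. The third person is more than 200 px away horizontally from both, so only the pair `(0, 1)` is flagged.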

```python
import cv2
import numpy as np

# `detections` holds the bounding boxes (xmin, ymin, xmax, ymax) from the object
# detection model, `disp` is the disparity map and `original_img` the input frame

boxcount = 0
depths = []
bboxMidXs = []
bboxMidYs = []

# Depth scaling factor, computed during the one-time camera calibration
# so that depth differences reflect real distances
scalingFactor = 1000

size = disp.shape[:2]
# Annotate a copy, so the drawn boxes don't alter the disparity values
# used for the medians of later (possibly overlapping) boxes
disp_vis = disp.copy()

for detection in detections:
    # Clamp the box to the image bounds before using it
    xmin = max(int(detection[0]), 0)
    ymin = max(int(detection[1]), 0)
    xmax = min(int(detection[2]), size[1])
    ymax = min(int(detection[3]), size[0])

    # The median disparity inside the box estimates the object depth
    depths.append(np.median(disp[ymin:ymax, xmin:xmax]))
    bboxMidXs.append((xmin + xmax) / 2)
    bboxMidYs.append((ymin + ymax) / 2)

    boxcount = boxcount + 1
    cv2.rectangle(disp_vis, (xmin, ymin), (xmax, ymax), (0, 255, 0), 2)
    cv2.putText(disp_vis, '{} {}'.format('person', boxcount),
                (xmin, ymin - 7), cv2.FONT_HERSHEY_COMPLEX, 0.6, (0, 255, 0), 1)

for i in range(len(bboxMidXs)):
    for j in range(i + 1, len(bboxMidXs)):
        # Squared distance: horizontal pixel offset plus scaled depth offset
        dist = (np.square(bboxMidXs[i] - bboxMidXs[j]) +
                np.square((depths[i] - depths[j]) * scalingFactor))

        # Check whether the distance is less than 200 to detect
        # social distance violations
        if np.sqrt(dist) < 200:
            color = (0, 0, 255)   # red (BGR), thick line: violation
            thickness = 3
        else:
            color = (0, 255, 0)   # green (BGR), thin line: safe distance
            thickness = 1

        cv2.line(original_img, (int(bboxMidXs[i]), int(bboxMidYs[i])),
                 (int(bboxMidXs[j]), int(bboxMidYs[j])), color, thickness)
```

Input Image:

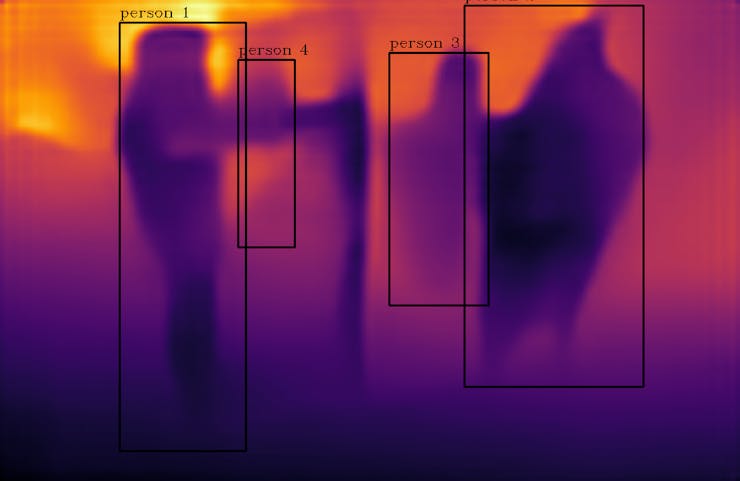

Disparity Map - Object Detection Fusion:

Output Image:

People who do not keep the minimum threshold distance from others are flagged as violating the social distancing norm, using only a monocular camera image.

Anand Uthaman
