I've been quite busy lately and I haven't touched this project since March. This weekend I finally found the time to give a few ideas a try and I managed to get everything working! weee!

My original idea was to modify my early Python script, which dumps the video stream to stdout, so that it would send the video over a UDP or TCP socket to ffplay or VLC instead. This didn't work very well: both players either crashed or didn't play any video at all (the video stream from the quadcopter is raw, it isn't packed in RTP or anything). Following these tests, I tried all sorts of things (mplayer, ffplay, gstreamer) and after a thousand random tries and lots of frustration I finally found a solution that works.
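For readers who haven't seen the script, the "dump the raw stream for another program to read" step can be sketched roughly as below. This is only a minimal sketch: the `relay` helper and `CHUNK_SIZE` are hypothetical names, and the real `pull_video.py` is not shown in this post, so whatever it does to connect to the quadcopter and request the video is left out.

```python
import socket
from typing import BinaryIO

CHUNK_SIZE = 4096  # assumed read size, not taken from the original script


def relay(sock: socket.socket, out: BinaryIO, chunk_size: int = CHUNK_SIZE) -> int:
    """Copy raw bytes from a connected socket to a binary stream until EOF.

    Returns the total number of bytes copied.
    """
    total = 0
    while True:
        chunk = sock.recv(chunk_size)
        if not chunk:  # an empty read means the peer closed the connection
            break
        out.write(chunk)
        out.flush()  # flush every chunk so the reader on the pipe is not starved
        total += len(chunk)
    return total
```

With a socket that is already connected to the quadcopter, calling `relay(sock, sys.stdout.buffer)` would write the untouched byte stream to stdout, which is what a shell pipe then hands to the player.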

The solution is to dump the video stream to stdout using my original script and to pipe it into GStreamer, which decodes and plays the stream. The magic GStreamer pipeline looks like this:

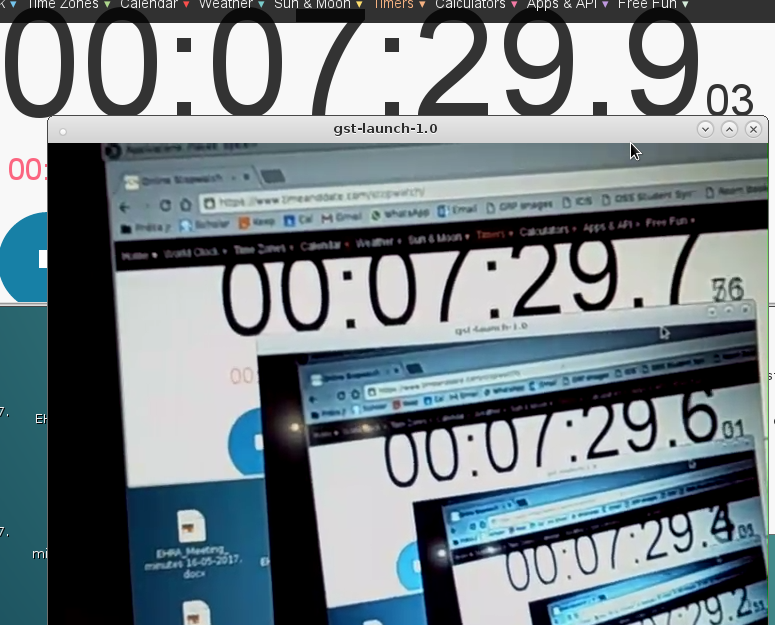

python ./pull_video.py | gst-launch-1.0 fdsrc fd=0 ! h264parse ! avdec_h264 ! xvimagesink sync=false

This works like a charm and the solution is so simple that I feel a bit stupid for not having tried it earlier.

I did a "feedback" test in order to measure the time delay between REALITY and the video output and this is what I got:

I believe I've achieved what I wanted to achieve when I started this project and therefore I will soon update the text in the description of the project and mark it as completed. I will perhaps make another "final words" post and point at other interesting "research" ideas for us JJRC Elfie nerds.
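As a side note, the same GStreamer pipeline could probably be started from Python instead of a shell pipe. The following is a minimal sketch under that assumption, not what this project actually does: `gst_command` and `feed_player` are hypothetical helper names, and the pipeline string is simply the one from the command in this post.

```python
import shutil
import subprocess
from typing import BinaryIO, List

# Same elements as the shell pipeline in this post.
PIPELINE = "fdsrc fd=0 ! h264parse ! avdec_h264 ! xvimagesink sync=false"


def gst_command(pipeline: str = PIPELINE) -> List[str]:
    """Build the argv list for gst-launch-1.0 (no shell is involved)."""
    return ["gst-launch-1.0"] + pipeline.split()


def feed_player(source: BinaryIO, chunk_size: int = 4096) -> int:
    """Start gst-launch-1.0 and copy `source` into its stdin.

    Returns the exit code of the player process.
    """
    if shutil.which("gst-launch-1.0") is None:
        raise FileNotFoundError("gst-launch-1.0 not found on PATH")
    # The child's fd 0 is the pipe created here, which is what fdsrc fd=0 reads.
    player = subprocess.Popen(gst_command(), stdin=subprocess.PIPE)
    try:
        while True:
            chunk = source.read(chunk_size)
            if not chunk:  # end of the input stream
                break
            player.stdin.write(chunk)
            player.stdin.flush()
    except BrokenPipeError:
        pass  # the player exited, e.g. its window was closed
    finally:
        try:
            player.stdin.close()
        except BrokenPipeError:
            pass
    return player.wait()
```

Here `source` can be any binary file-like object, for example a recorded dump opened with `open(path, "rb")`; feeding it the live stream would need a file-like wrapper around the socket.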

adria.junyent-ferre
