More accurately: many forks, many roads!

It's been an interesting month of tinkering. The video output question has led me down multiple simultaneous code forks, and I'm slowly gathering enough information to make some sort of decision. I'm not quite there yet, though... so let me recap the last few weeks.

Option 1: Figure out nested interrupts on the Teensy and keep the existing 16-bit display.

In theory, nested interrupts should save me the hassle of driving the display directly; if I could set them up right, the display updates could interrupt the CPU updates often enough that I could reclaim the display pulse time for the CPU. It doesn't feel like there's much pulse time to work with, though. My programming gut tells me I'd be robbing Peter to pay Paul: I might wind up with a small net gain in free CPU time, but the gain would be too small to use effectively. Consequently, I haven't spent much time on this; I consider it a last-resort option at this point.
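Since I keep tripping over this, here's the nesting rule as I understand it, written as a small host-side C++ model rather than Teensy code. On Cortex-M parts like the 180MHz Teensy 3.6's Kinetis chip, a lower priority number is more urgent, a handler only nests inside another if it is strictly more urgent, and only the top 4 bits of the priority byte are implemented, so levels come in steps of 16. This assumes the default priority grouping, and the priority values below are hypothetical; on the real hardware they'd be set with Teensyduino's `NVIC_SET_PRIORITY()` or `IntervalTimer::priority()`.

```cpp
#include <cassert>
#include <cstdint>

// Host-side model of Cortex-M preemption as it applies to a Teensy 3.x.
// Assumption: only the upper 4 priority bits exist, and the default
// grouping treats all of them as preemption priority.
constexpr uint8_t kImplementedBits = 0xF0;

// The priority the hardware actually stores for a requested 0-255 value.
constexpr uint8_t effectivePriority(uint8_t requested) {
  return static_cast<uint8_t>(requested & kImplementedBits);
}

// Can an interrupt at 'incoming' nest inside a handler running at 'active'?
// Lower numbers are more urgent, equal levels never nest, and while
// interrupts are globally masked (as with __disable_irq()), nothing does.
constexpr bool canPreempt(uint8_t incoming, uint8_t active, bool globallyMasked) {
  return !globallyMasked &&
         effectivePriority(incoming) < effectivePriority(active);
}

// Hypothetical priorities: display refresh more urgent than the CPU tick.
constexpr uint8_t kDisplayIsrPriority = 32;
constexpr uint8_t kCpuIsrPriority = 64;

static_assert(canPreempt(kDisplayIsrPriority, kCpuIsrPriority, false),
              "display handler may interrupt the CPU handler");
static_assert(!canPreempt(kCpuIsrPriority, kDisplayIsrPriority, false),
              "but not the other way around");
```

Two details matter in practice: priorities that differ only in their low 4 bits (say 64 and 70) are the same level and won't nest, and any code that globally masks interrupts blocks the display handler no matter how its priority is set.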

Option 2: NTSC output, from Entry 16. I think it would be possible to make a working B&W NTSC driver with nested interrupts. It might buy me enough free CPU time to get the current set of Aiie features running the way I want, though I'm not sure it buys me enough for what I want to add later. But there is one important potential win: I would be able to draw 80-column text correctly. Right now Aiie drops a pixel in the middle of each 80-column character to make them fit in 280 pixels, and the result is just barely passable. With NTSC output I'd be able to drive all 560 pixels and show the full 80-column character set cleanly.

On the downside, I don't have enough RAM in the Teensy to do that. I'm already within about 10K of its limit, so I can't double the video driver's memory. Solving that would lead me to the same complexity I'm considering for the next option, which I think is technically superior anyway. So this is, at least for the moment, a dead end.

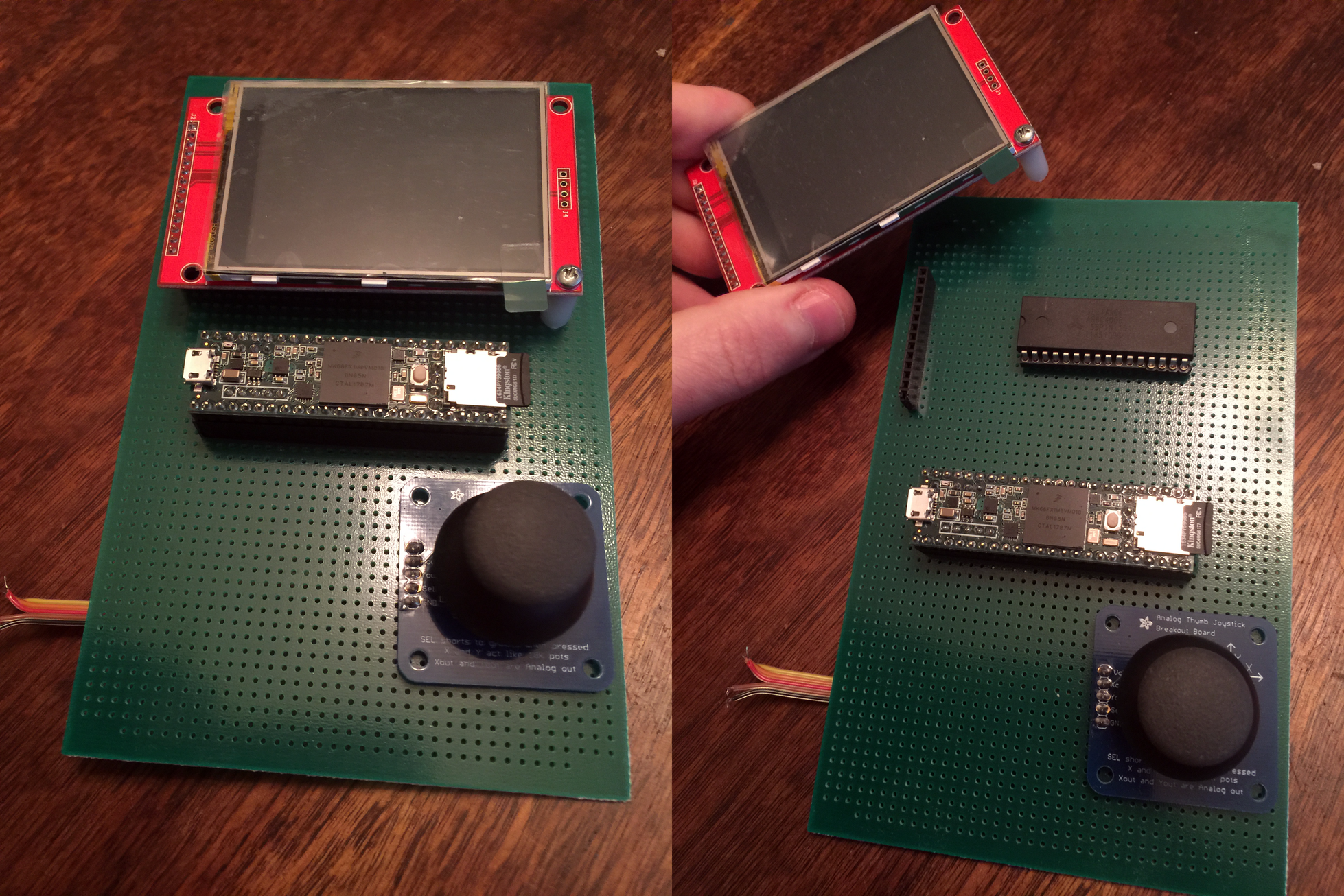

Option 3: DMA displays. I've got two 320x240 serial displays now. Ignoring, for the moment, that they're 2.8" instead of 3.2": if I can offload the work of banging data out to the LCD from the CPU, then I can reclaim all of that CPU time. Unfortunately, DMA costs RAM: I need a video buffer to hold what's going out to the display. And, once again, I'm staring at the 10K of RAM I've got free and wondering how the heck I'm gonna be able to do that. Which brings me to this prototype:

Under that display is an SRAM chip. This feels like a bad path, generally speaking, but if I use it as the RAM in Aiie itself, then I free up 128K of RAM in the Teensy, which I can easily use for DMA. That comes with some pretty serious side effects.
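Some back-of-the-envelope arithmetic shows why the free 10K is hopeless for a DMA buffer and why freeing 128K changes the picture. This is a sketch, assuming 16-bit pixels and a buffer that holds a whole frame; the real driver might buffer differently.

```cpp
#include <cassert>
#include <cstddef>

// Bytes for a packed framebuffer, rounding each row up to whole bytes.
constexpr std::size_t framebufferBytes(std::size_t width, std::size_t height,
                                       std::size_t bitsPerPixel) {
  return (width * bitsPerPixel + 7) / 8 * height;
}

// Assumption: 16 bits per pixel on the 320x240 serial panels.
constexpr std::size_t kWholePanel = framebufferBytes(320, 240, 16);
// Just the Apple II's 280x192 hi-res area at the same depth.
constexpr std::size_t kAppleScreen = framebufferBytes(280, 192, 16);

// Budgets from the text: ~10K free today, ~128K freed by moving the
// VM's RAM out to the external SRAM.
constexpr std::size_t kFreeToday = 10 * 1024;
constexpr std::size_t kFreedBySram = 128 * 1024;

static_assert(kWholePanel == 153600, "320 * 240 * 2 bytes");
static_assert(kAppleScreen == 107520, "280 * 192 * 2 bytes");
```

Under those assumptions, even the Apple-sized area is more than ten times what's free today, but it fits inside the 128K the SRAM would free up; a buffer for the whole 320x240 panel (150K) would not.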

Side effect A: code complication. There's now another layer of abstraction on top of the VM's RAM, so that I can hand it off to a driver that knows how to interface with this AS6C4008. I'm generally okay with this one. "There's no problem in computer science that can't be solved with another layer of abstraction," anyone?
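A minimal sketch of what that extra layer might look like, assuming the emulator funnels every memory access through one interface. The class names (`RamBackend`, `ArrayBackend`, `VmMemory`) are hypothetical, not Aiie's actual code, and the flat layout (the //e's 64K main bank followed by its 64K auxiliary bank) is my assumption.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical interface: the VM talks only to this, so the backing store
// can be on-chip RAM, an external SRAM driver, or a test double.
class RamBackend {
 public:
  virtual ~RamBackend() = default;
  virtual uint8_t read(uint32_t addr) = 0;
  virtual void write(uint32_t addr, uint8_t value) = 0;
  virtual uint32_t size() const = 0;
};

// Plain array backend. An external-SRAM backend (e.g. for an AS6C4008)
// would implement the same methods by driving the chip's address, data
// and control lines; that part is hardware-specific and not shown.
class ArrayBackend : public RamBackend {
 public:
  explicit ArrayBackend(uint32_t bytes) : cells_(bytes, 0) {}
  uint8_t read(uint32_t addr) override {
    assert(addr < cells_.size());
    return cells_[addr];
  }
  void write(uint32_t addr, uint8_t value) override {
    assert(addr < cells_.size());
    cells_[addr] = value;
  }
  uint32_t size() const override { return static_cast<uint32_t>(cells_.size()); }

 private:
  std::vector<uint8_t> cells_;
};

// The VM's view: two 64K banks (main and aux) mapped onto one flat store.
class VmMemory {
 public:
  explicit VmMemory(std::unique_ptr<RamBackend> backend)
      : backend_(std::move(backend)) {
    assert(backend_ && backend_->size() >= 0x20000);
  }
  uint8_t read(uint16_t addr, bool aux) { return backend_->read(flat(addr, aux)); }
  void write(uint16_t addr, uint8_t value, bool aux) {
    backend_->write(flat(addr, aux), value);
  }

 private:
  static uint32_t flat(uint16_t addr, bool aux) {
    return (aux ? 0x10000u : 0u) + addr;
  }
  std::unique_ptr<RamBackend> backend_;
};
```

Swapping `ArrayBackend` for an external-chip backend then doesn't touch the emulator core. The price is an indirect call on every memory access.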

Side effect B: performance. Every time I want to read or write some bit of RAM, the code now has to twiddle a bunch of registers and I/O lines to fetch or set the data. My instant access (at 180MHz) is reduced to a crawl. I've built some compensating controls into a hybrid RAM model: the pages of RAM the 6502 uses often are kept in Teensy RAM, and the rest are stored on the AS6C4008. Even so, whenever I'm reading or writing the external chip, I have to turn off interrupts so I'm not disturbed. So now the RAM driver is competing with the display interrupts, and I'm back to needing to understand nested interrupts on the Teensy better. This damn spectre keeps dogging me, and I think I see where it's going to lead: I really need to write some test programs around nested interrupts to figure out my fundamental misunderstanding of them.
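The hybrid model and its interrupt masking might be sketched like this, as a host-side model rather than Teensy code. The hot-page choice (zero page and the 6502 stack page, $00xx and $01xx) is my assumption, and `g_irqsMasked`/`IrqGuard` are hypothetical stand-ins for the chip's real interrupt mask (on the Teensy, `__disable_irq()`/`__enable_irq()`).

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <functional>
#include <initializer_list>
#include <utility>
#include <vector>

// Stand-in for the CPU's global interrupt mask (PRIMASK on Cortex-M).
bool g_irqsMasked = false;

// RAII guard: masks interrupts for its lifetime, then restores the previous
// state rather than blindly re-enabling, so nested guards behave correctly.
class IrqGuard {
 public:
  IrqGuard() : wasMasked_(g_irqsMasked) { g_irqsMasked = true; }
  ~IrqGuard() { g_irqsMasked = wasMasked_; }
  IrqGuard(const IrqGuard&) = delete;
  IrqGuard& operator=(const IrqGuard&) = delete;

 private:
  bool wasMasked_;
};

constexpr int kNotHotPage = -1;

// 6502 address space as 256 pages of 256 bytes. Hot pages live in fast
// on-chip storage; every other page goes through the slow external calls.
class HybridRam {
 public:
  using SlowRead = std::function<uint8_t(uint16_t)>;
  using SlowWrite = std::function<void(uint16_t, uint8_t)>;

  HybridRam(std::initializer_list<uint8_t> hotPages, SlowRead r, SlowWrite w)
      : slowRead_(std::move(r)), slowWrite_(std::move(w)) {
    slot_.fill(kNotHotPage);
    for (uint8_t page : hotPages) {
      if (slot_[page] != kNotHotPage) continue;  // ignore duplicates
      slot_[page] = static_cast<int>(fast_.size() / 256);
      fast_.resize(fast_.size() + 256, 0);
    }
  }

  uint8_t read(uint16_t addr) {
    int s = slot_[addr >> 8];
    if (s != kNotHotPage) return fast_[s * 256 + (addr & 0xFF)];
    IrqGuard guard;  // the external bus cycle must not be interrupted
    ++slowAccesses;
    return slowRead_(addr);
  }

  void write(uint16_t addr, uint8_t value) {
    int s = slot_[addr >> 8];
    if (s != kNotHotPage) {
      fast_[s * 256 + (addr & 0xFF)] = value;
      return;
    }
    IrqGuard guard;
    ++slowAccesses;
    slowWrite_(addr, value);
  }

  unsigned long slowAccesses = 0;  // how often the slow path was taken

 private:
  std::array<int, 256> slot_{};  // page -> index into fast_, or kNotHotPage
  std::vector<uint8_t> fast_;    // storage for hot pages only
  SlowRead slowRead_;
  SlowWrite slowWrite_;
};
```

The fast path never touches the interrupt mask, so only accesses to cold pages hold off the display interrupts; but every one of those does, for the whole external bus cycle.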

I built this prototype and watched it boot Aiie using DMA to drive the display. There are bugs in the external RAM handling; assuming I iron those out, this could be feasible. But is it worth it? I'm not sure. At this point I'm fundamentally limited by the RAM available in the Teensy. It's just the wrong chip for the job. Which brings me to...

Option 4: switch hardware. What I want is an ARM variant with at least 256K of RAM; peripherals that can drive a display without wasting CPU time; and enough CPU to run the basic emulator plus a half-dozen timing-sensitive interrupt sources for the cards I want to emulate.

And now we're headed down the rabbit hole that I've been exploring for the last month.

After looking at a pile of different ARM chips and vendors, I found it impossible to ignore the Raspberry Pi Zero W. It's a 1GHz ARMv6 (ARM11) with 512MB of RAM for roughly $10, on a board, instrumented, with a fully functioning reference OS (Raspbian Linux).

Something about this has bothered me from the moment I first dreamt it up. I don't want this to be just another software package. The hardware is important to me. I want the joystick. I want it to be usable for the things I used to do on my //e. That was the original point, and going down this road could end with "Yeah, it's just some other software package."

I can ignore that for the moment, though, and just do a feasibility study... to add to my growing pile of prototypes...

Jorj Bauer
