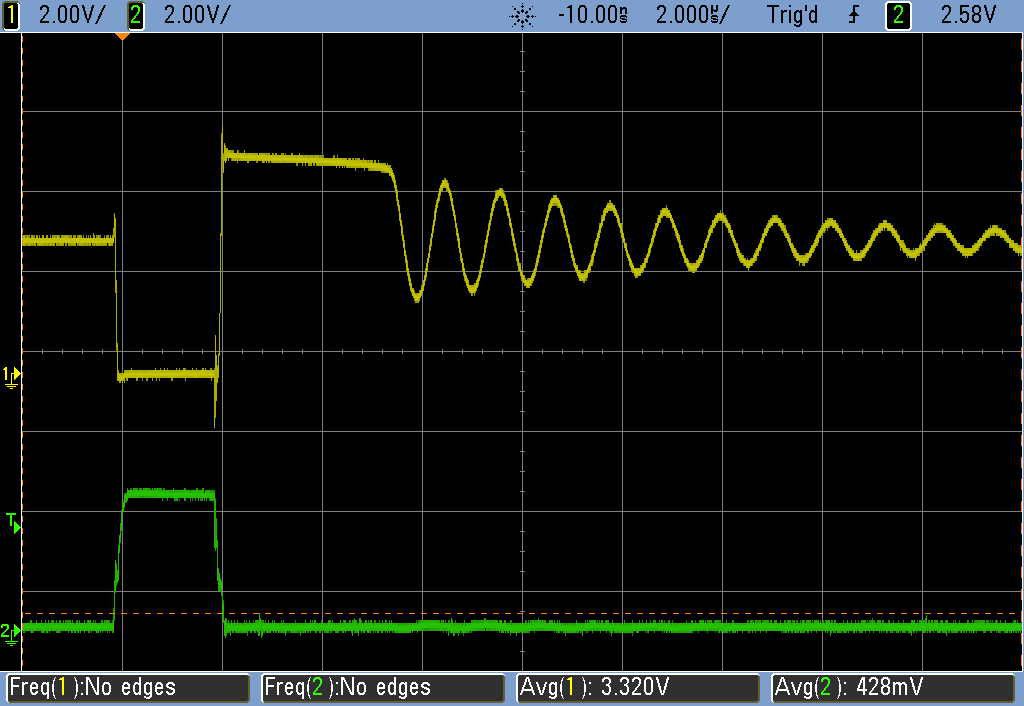

So here's how this works. The yellow trace is the voltage at the + pin of the LED (the low side of the inductor), and the green trace is the "one microsecond" pulse from the AVR, which really looks more like two microseconds, huh?

The AVR turns on the MOSFET, dropping the low side of the inductor from 3.3 V (battery voltage) to zero. Current ramps up through the inductor. When the FET turns off, the current wants to keep flowing through the inductor, so the voltage on its low side builds up until it's high enough (here a ~2 V LED + 3 V battery) to light the LED.

The LED lights up for about 3.5 µs, then the voltage dips under the LED's threshold and does that funny oscillating thing. Would an LED with a lower threshold have smaller-amplitude oscillations, and waste less energy? I have no idea how much energy is represented by the wiggles -- my guess is that, with a low-resistance inductor, they're doing very little work, so not much.
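One way to put a rough number on the wiggles is sketched below. It assumes they are ringing between the inductor and some stray capacitance on the LED node, which this log does not confirm. The inductance and capacitance are hypothetical placeholders, and the ring amplitude is taken to be about the LED threshold, so treat the result as an order-of-magnitude guess only.

```python
# Rough comparison of ringing energy vs. energy stored per pulse.
# L_HENRY and C_STRAY are hypothetical placeholders, not measured values.
V_BATT = 3.3        # battery voltage from the post, volts
T_ON = 2e-6         # FET on-time read off the scope, seconds
RING_AMPL = 2.0     # assumed ring amplitude (about the LED threshold), volts
L_HENRY = 100e-6    # hypothetical inductance, henries
C_STRAY = 50e-12    # hypothetical stray capacitance, farads


def inductor_energy(v, t_on, l):
    """Energy in an ideal inductor after a linear current ramp: 0.5 * L * I^2."""
    i_peak = v * t_on / l
    return 0.5 * l * i_peak ** 2


def ring_energy(c, amplitude):
    """Energy in the stray capacitance at the start of the ring: 0.5 * C * V^2."""
    return 0.5 * c * amplitude ** 2


e_pulse = inductor_energy(V_BATT, T_ON, L_HENRY)
e_ring = ring_energy(C_STRAY, RING_AMPL)
print(f"stored per pulse: {e_pulse * 1e9:.1f} nJ")
print(f"left in ringing:  {e_ring * 1e9:.2f} nJ "
      f"({100 * e_ring / e_pulse:.2f} % of the pulse)")
```

With these placeholder values the ringing holds well under one percent of the energy stored per pulse, which is in line with the "not much" guess. Real values of L and C could shift that figure considerably.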

The AVR sleeps for 16 milliseconds -- an eternity on this timescale -- and it all repeats.

For a given inductor/LED, what is the optimal pulse width? What I want, but haven't figured out yet, is to measure the current through the inductor and figure out when it's "full". You can do that empirically by changing the AVR's on-time and noticing how the LED's on-time responds, because that corresponds to the energy stored in the inductor's field. Beyond something like 4 microseconds, the LED's on-time doesn't seem to lengthen all that much, so maybe 2 microseconds is a lucky guess.
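As a sanity check on the scope readings, here is a minimal sketch of the ideal relationship between the FET's on-time and the LED's on-time. It assumes a lossless inductor that never saturates, a constant LED voltage of about 2 V while it conducts, and the 3.3 V battery figure from above. The function name `led_on_time` is made up for illustration.

```python
def led_on_time(t_on, v_batt, v_led):
    """Ideal LED conduction time from volt-second balance.

    Charging:    di/dt = +v_batt / L  for t_on seconds.
    Discharging: di/dt = -v_led / L   until the current reaches zero.
    L cancels out, leaving t_led = t_on * v_batt / v_led.
    """
    return t_on * v_batt / v_led


for t_on_us in (1.0, 2.0, 4.0):
    t_led_us = led_on_time(t_on_us, v_batt=3.3, v_led=2.0)
    print(f"FET on {t_on_us:.1f} us -> LED on about {t_led_us:.2f} us")
```

For a 2 µs pulse this gives about 3.3 µs, close to the roughly 3.5 µs seen on the scope. The ideal model keeps growing linearly with on-time, so the flattening beyond about 4 µs is a departure from it. Core saturation or series resistance could explain that, but nothing measured here shows which.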

I could add in a resistor and measure voltage drop, I suppose.

Putting two LEDs in series, the max voltage goes up to ~7-8 V, or 3 V + 2x LED threshold voltages.
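The arithmetic behind that figure can be sketched as follows, assuming the node is clamped at the battery voltage plus the LEDs' forward voltages. The per-LED voltages in the loop are illustrative guesses, not measurements.

```python
def flyback_peak(v_batt, v_led, n_leds):
    """Voltage the inductor's low side must reach before n series LEDs conduct."""
    return v_batt + n_leds * v_led


for v_led in (2.0, 2.25, 2.5):   # illustrative per-LED forward voltages, volts
    peak = flyback_peak(3.0, v_led, n_leds=2)
    print(f"LED at {v_led:.2f} V each -> peak about {peak:.1f} V")
```

A per-LED voltage anywhere from 2.0 V to 2.5 V covers the observed 7-8 V range, which fits an LED's forward voltage rising somewhat above its threshold once real current flows.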

If you use a larger inductor but a fixed pulse length, the current draw drops, which makes sense because it "charges up" more slowly -- equivalently it displays more reactance. I'm not sure if I should be thinking in the time or frequency domains here...
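In the time domain, the effect can be sketched with an ideal inductor and triangular current pulses. The inductor values below are hypothetical, and the model ignores winding resistance, saturation, and the AVR's own sleep current, so only the trend is meaningful.

```python
V_BATT = 3.3      # volts, from the post
V_LED = 2.0       # volts, approximate LED threshold from the post
T_ON = 2e-6       # seconds, FET on-time
PERIOD = 16e-3    # seconds, AVR sleep interval


def average_current(l_henry):
    """Average battery current for an ideal inductor with triangular pulses."""
    i_peak = V_BATT * T_ON / l_henry          # linear ramp while the FET is on
    t_led = T_ON * V_BATT / V_LED             # ramp back down through the LED
    charge = 0.5 * i_peak * (T_ON + t_led)    # area under the current triangle
    return charge / PERIOD


for l_uh in (47, 100, 220):   # hypothetical inductor values, microhenries
    i_avg = average_current(l_uh * 1e-6)
    print(f"{l_uh:4d} uH -> {i_avg * 1e6:.1f} uA average")
```

With a fixed pulse length the peak current scales as 1/L, so doubling the inductance halves both the peak and the average draw in this model.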

Elliot Williams

Discussions

I found that if you use multiple pulses per interrupt, you can recapture some of the energy in the ringing. Timing the subsequent pulses so they coincide with the down-swing of the ring can reduce the current draw by a few percent. I found it tough to tune exactly, and the "ideal" timing probably drifts with temperature as the capacitance of the LED and/or the inductance drifts, but you can definitely see the effect.

I also found that using multiple pulses per timeout was generally more efficient than increasing the timeout frequency, if you are trying to increase brightness.
