Introduction

How can people alert their friends that they have been attacked, or are in danger, without alerting the attacker? We need a way to discreetly communicate with a smartphone or other communications device! The communications device can then notify a network of trusted friends of the attack.

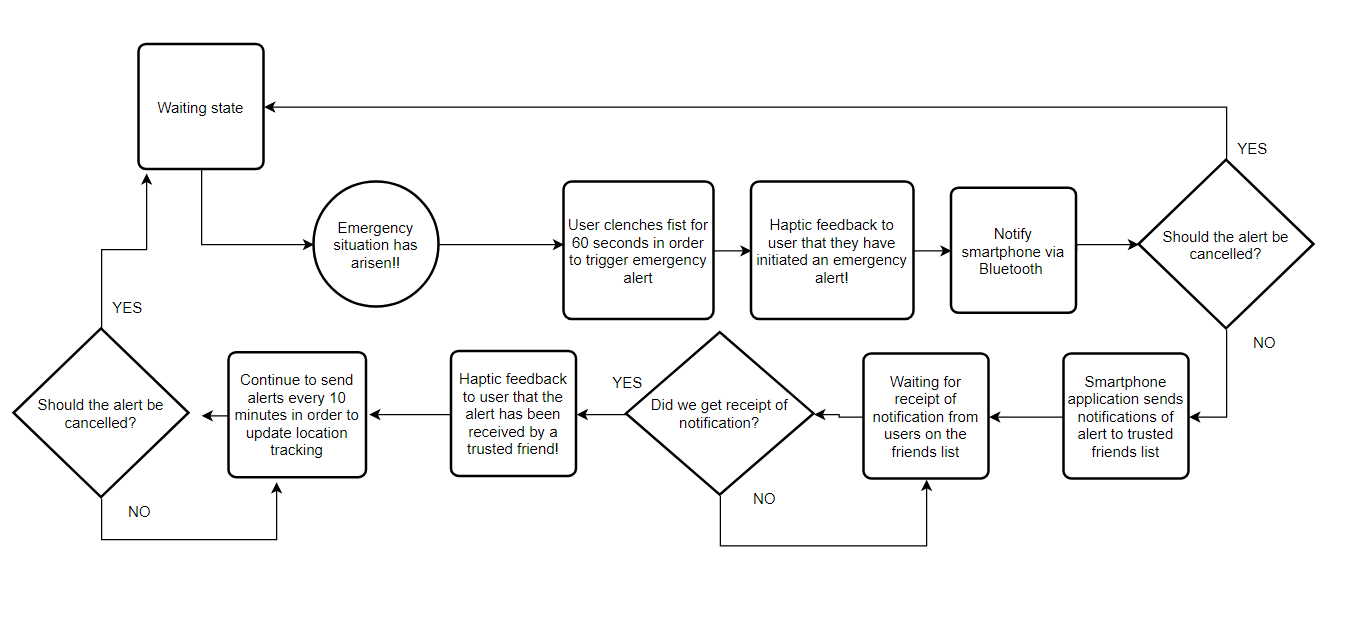

We want to trigger an alert of an emergency situation using a discreet trigger, and then notify the person in danger that the alert has been sent to their network of trusted friends. In the case of a false positive, the person in danger should be able to cancel the alert by entering a passcode on their smartphone.

Solution

The alert trigger in this solution is clenching the fist for 10 seconds. We use EMG acquired from the forearm to detect this. The user is informed that the alert has been triggered by the smartphone vibrating, or by a vibrating motor on an armband. The user then has a set amount of time (e.g. 60 seconds) to cancel by entering a passcode into the smartphone software. If the user doesn't cancel, the alert is sent to the network of trusted friends, and the user gets further haptic feedback from the armband once the alert has been received by one of the trusted friends. The alerts include location data from GPS and continue to be sent until cancelled by the user, so each alert carries location data for tracking (e.g. if the user is kidnapped).

General overview of solution:

1. Trigger: The trigger is clenching the fist for 10 seconds. The sensor is an EMG sensor attached to the forearm via a fabric band.

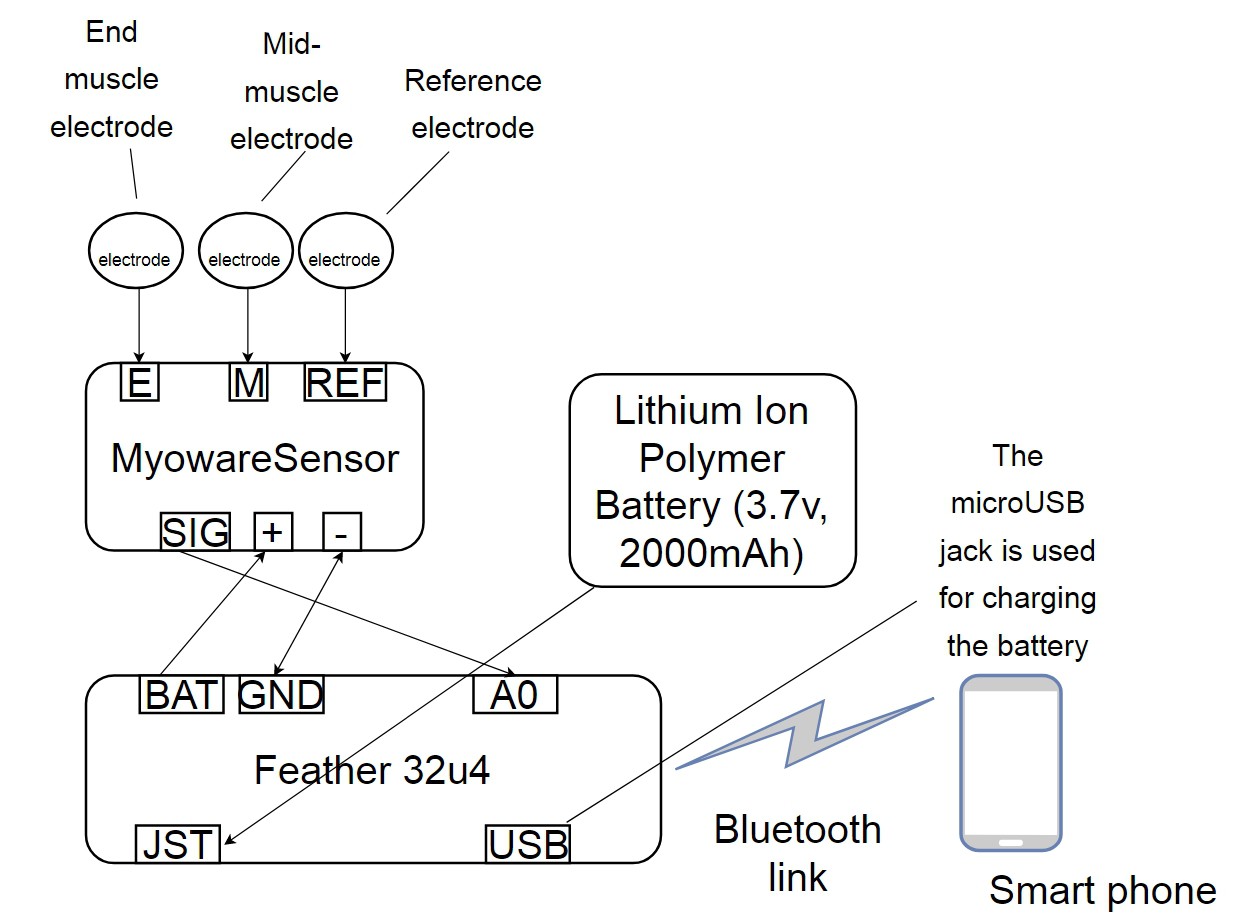

2. EMG sensor to Feather (wired)

[EMG sensor: supply voltage +3.3V or +5V; output is the EMG envelope, so already rectified; using MyoWare™ Muscle Sensor (AT-04-001)]

[Adafruit Feather 32u4 Bluefruit (mostly referred to as the Arduino)]

All we are doing is reading the voltage from the EMG sensor using the Feather, e.g.

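    void setup() {
      // open the serial port so the readings can be printed
      Serial.begin(9600);
    }

    void loop() {
      // read the input on analog pin 0:
      int sensorValue = analogRead(A0);
      // convert the analog reading (0 - 1023) to a voltage (0 - 3.3V);
      // this assumes the Feather's default 3.3V analog reference
      float voltage = sensorValue * (3.3 / 1023.0);
      // print out the value you read:
      Serial.println(voltage);
    }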

We are going to get different voltages for the fist-clenched and non-fist-clenched conditions. Once we see the voltage we expect for a clenched fist sustained for the full 10 seconds, we know to trigger the alert. Unfortunately, whilst the voltages for fist-clenched and non-fist-clenched (relaxed hand) are likely to be significantly different, the voltages for other hand actions (e.g. shaking hands, holding a pen, gripping a mouse) might not be so different from the voltages we get for fist-clenched! See the Problems section for more on this.
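To make the "held for 10 seconds" idea concrete, here is a minimal sketch of how the check could look. It is written as portable C++ so the logic can be tried on a desktop first; on the Feather the standard headers may need swapping for their Arduino equivalents, and the timestamp would come from something like millis(). The class name ClenchDetector and the threshold value are my own assumptions, not measured values.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: reports a trigger once the EMG envelope voltage has
// stayed at or above threshold_v for hold_ms milliseconds without a break.
class ClenchDetector {
public:
    ClenchDetector(float threshold_v, uint32_t hold_ms)
        : threshold_v_(threshold_v), hold_ms_(hold_ms) {
        assert(hold_ms > 0);
    }

    // Feed one voltage sample together with its timestamp in milliseconds.
    // Returns true exactly once per sustained clench, when the hold time is reached.
    bool update(float voltage, uint32_t now_ms) {
        if (voltage < threshold_v_) {
            clenched_ = false;  // any drop below the threshold restarts the count
            fired_ = false;
            return false;
        }
        if (!clenched_) {
            clenched_ = true;
            start_ms_ = now_ms;  // first sample of a new clench
        }
        // Unsigned subtraction stays correct if the millisecond counter wraps.
        if (!fired_ && now_ms - start_ms_ >= hold_ms_) {
            fired_ = true;
            return true;
        }
        return false;
    }

private:
    float threshold_v_;
    uint32_t hold_ms_;
    uint32_t start_ms_ = 0;
    bool clenched_ = false;
    bool fired_ = false;
};
```

In use, something like ClenchDetector detector(1.5f, 10000) would be created once, and update() called with every new reading. The 1.5V threshold is only a placeholder until we have real measurements.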

3. It's just some code on the Feather to decide whether we got that fist-clench. If we did, we can give haptic feedback from the actuator on the fabric band that the alert is now triggered, and the Feather will tell the smartphone app over Bluetooth that the alert has been triggered. The smartphone can go ahead and vibrate too. Say we have a 60-second cool-down for the user to deactivate the alert if it is a false positive. Deactivation would be via the smartphone app, after which the Feather goes back to waiting for the fist-clench voltage.

4. Now the smartphone will go ahead and see what kind of connections it has, and send out the alert!

5. Then the smartphone can notify the Feather via Bluetooth that the alert was received successfully by trusted friends, and the Feather turns on the motor for haptic feedback to let the user know (discreetly) that the alert has been sent out and received!
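The steps above boil down to a small state machine: idle, waiting out the cancel window, sending alerts, and acknowledged by a friend. The following is only a sketch of that flow in portable C++. The names (AlertController, on_trigger, on_cancel, on_ack, tick) are hypothetical, and where this logic lives (Feather or smartphone app) is still open.

```cpp
#include <cassert>
#include <cstdint>

enum class AlertState { Idle, Pending, Alerting, Acknowledged };

// Hypothetical controller for the alert flow; names and timings are illustrative.
class AlertController {
public:
    explicit AlertController(uint32_t cancel_window_ms)
        : cancel_window_ms_(cancel_window_ms) {
        assert(cancel_window_ms > 0);
    }

    AlertState state() const { return state_; }

    // Fist clench detected: start the cancel window (ignored if already active).
    void on_trigger(uint32_t now_ms) {
        if (state_ == AlertState::Idle) {
            state_ = AlertState::Pending;
            pending_since_ms_ = now_ms;
        }
    }

    // Correct passcode entered in the smartphone app: stop everything.
    void on_cancel() { state_ = AlertState::Idle; }

    // A trusted friend has received the alert.
    void on_ack() {
        if (state_ == AlertState::Alerting) state_ = AlertState::Acknowledged;
    }

    // Call periodically. Returns true while alerts (with location) should be
    // sent, which continues after an acknowledgement until the user cancels.
    bool tick(uint32_t now_ms) {
        if (state_ == AlertState::Pending &&
            now_ms - pending_since_ms_ >= cancel_window_ms_) {
            state_ = AlertState::Alerting;
        }
        return state_ == AlertState::Alerting ||
               state_ == AlertState::Acknowledged;
    }

private:
    uint32_t cancel_window_ms_;
    uint32_t pending_since_ms_ = 0;
    AlertState state_ = AlertState::Idle;
};
```

With a 60-second window this would be AlertController controller(60000). The haptic feedback would then be fired on the transitions into Pending and Acknowledged.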

Overview of hardware:

Outline of system flow

Haptic Feedback

The idea is to provide haptic feedback for the user when an alert is triggered, and again when the alert has been received by a trusted friend. I found some very small vibrating motor discs which could be used to deliver the feedback (10mm diameter, 2.7mm thick; 11000 RPM at 5V; 0.9 grams). These could just be switched on and off. Alternatively, we could use a small motor driver chip, e.g. the DRV2605 from TI, which has a built-in library of various motor effects! You can see these waveform library effects in the datasheet here https://cdn-shop.adafruit.com/datasheets/DRV2605.pdf . That would be pretty cool, since it would let us provide a different type of haptic feedback for each situation! Adafruit already put the chip on a board (18mm x 17mm x 2mm, 1 gram) and provides a schematic for it.
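As a rough sketch of "different feedback for each situation", the mapping from event to effect sequence could look like the following. It is portable C++ for trying out on a desktop. The effect numbers are placeholders I have not tested on hardware, so the real ones should be picked from the waveform table in the datasheet. The only hardware detail relied on is that a 0 entry ends the sequence on the DRV2605. On the actual board, each entry would be loaded into the driver, for example with setWaveform() and go() from the Adafruit_DRV2605 library.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

enum class HapticEvent { AlertTriggered, AlertReceived };

// Up to three effect numbers, ended by 0 (the DRV2605 sequencer stops at 0).
using EffectSequence = std::array<uint8_t, 4>;

// PLACEHOLDER effect numbers: choose real ones from the datasheet's waveform table.
constexpr uint8_t kEffectTriggered = 1;
constexpr uint8_t kEffectReceived = 10;

// Returns the effect sequence to play for a given event.
EffectSequence haptic_sequence(HapticEvent event) {
    switch (event) {
        case HapticEvent::AlertTriggered:
            return {kEffectTriggered, 0, 0, 0};               // one effect
        case HapticEvent::AlertReceived:
            return {kEffectReceived, kEffectReceived, 0, 0};  // same effect twice
    }
    assert(false && "unknown haptic event");
    return {0, 0, 0, 0};  // with asserts disabled: stay silent
}
```

Keeping the mapping in one place means the patterns can be changed later, once we have tried them on an arm, without touching the alert logic.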

Housing for the device

It needs to be somewhere discreet, and I think all the components should be together. Thus, we need to place the Adafruit Feather 32u4 Bluefruit, the DRV2605L Haptic Motor Controller, the battery, the vibrating motor disc, and the MyoWare EMG sensor + electrodes into some kind of neoprene-type armband! It can't be too small, because we need to have the electrodes in different places, and we don't want them all close to the motor! That said, it's not a big problem, since we won't be collecting data from the electrodes once the alert is triggered and the motor is in use.

Problems

How do we send alerts when there is no cellular connectivity?

Unfortunately, whilst the voltages for fist-clenched and non-fist-clenched (relaxed hand) are likely to be significantly different, the voltages for other hand actions (e.g. shaking hands, holding a pen, gripping a mouse) might not be so different from the voltages we get for fist-clenched! So, first of all, we need to see what kind of voltages we get from the EMG sensor for different hand positions!

Additionally, different people will produce different voltages! So we need to try this for several people too. It will be a fun experiment to get data for, I think! And the data might be useful for others!

Now, of course, there is another thing we could do! We could get each HelpMe! to learn the correct voltages corresponding to fist-clenched for each individual user. To be honest, I think this is the only approach, since there is no one-size-fits-all voltage for fist-clenched! So I guess we will have to do this with software on the Feather and the smartphone app (to direct the user what to do, e.g. clench your fist for training purposes, and to instruct the Feather which code to run). We can save the results to the MicroSD on the Feather.
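One simple way the per-user training could work: record some samples with the hand relaxed, record some with the fist clenched, and put the threshold halfway between the two averages. This is only a sketch under that assumption, not a tested method. The names calibrate and min_gap_v are hypothetical. It is portable C++ for the desktop, and on the Feather plain arrays would likely replace std::vector.

```cpp
#include <cassert>
#include <vector>

// Mean of a non-empty list of voltage samples.
float mean_voltage(const std::vector<float>& samples) {
    assert(!samples.empty());
    float sum = 0.0f;
    for (float v : samples) sum += v;
    return sum / static_cast<float>(samples.size());
}

struct Calibration {
    bool ok;            // false if the two recordings are too close to separate
    float threshold_v;  // only meaningful when ok is true
};

// Hypothetical per-user calibration: the threshold sits halfway between the
// relaxed and clenched means. min_gap_v is an assumed minimum separation.
Calibration calibrate(const std::vector<float>& relaxed,
                      const std::vector<float>& clenched,
                      float min_gap_v) {
    const float relaxed_mean = mean_voltage(relaxed);
    const float clenched_mean = mean_voltage(clenched);
    if (clenched_mean - relaxed_mean < min_gap_v) {
        return {false, 0.0f};  // ask the user to repeat the training
    }
    return {true, (relaxed_mean + clenched_mean) / 2.0f};
}
```

A midpoint threshold does nothing about the look-alike actions (holding a pen, gripping a mouse), so the training would probably need recordings of those as well.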

I guess everyone is thinking: isn't there some machine learning thing we can do instead? Yeah, I did think of that. We could collect a large dataset of voltages for different hand positions. Maybe it is something to think about in the future.

Open Source

Any software written for the HelpMe! project is free software; you can redistribute it and/or modify it under the terms of the GNU General Public License version 3. See http://www.gnu.org/licenses/

This is the license for Python, since Python will be used in the project https://www.python.org/download/releases/3.4.0/license/

Any hardware plans for the HelpMe! project, including 3d design files and stl files, etc. are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. See http://creativecommons.org/licenses/by-sa/4.0/

Neil K. Sheridan
