High time for another log update...

I'm currently on vacation. I took most of the prototype parts and a computer with me, hoping to get some additional work done, only to find out that the vacation schedule was a little too dense for that. Well, you need to get some relaxing done during the holidays, I guess :)

Breadboard prototype - current iteration

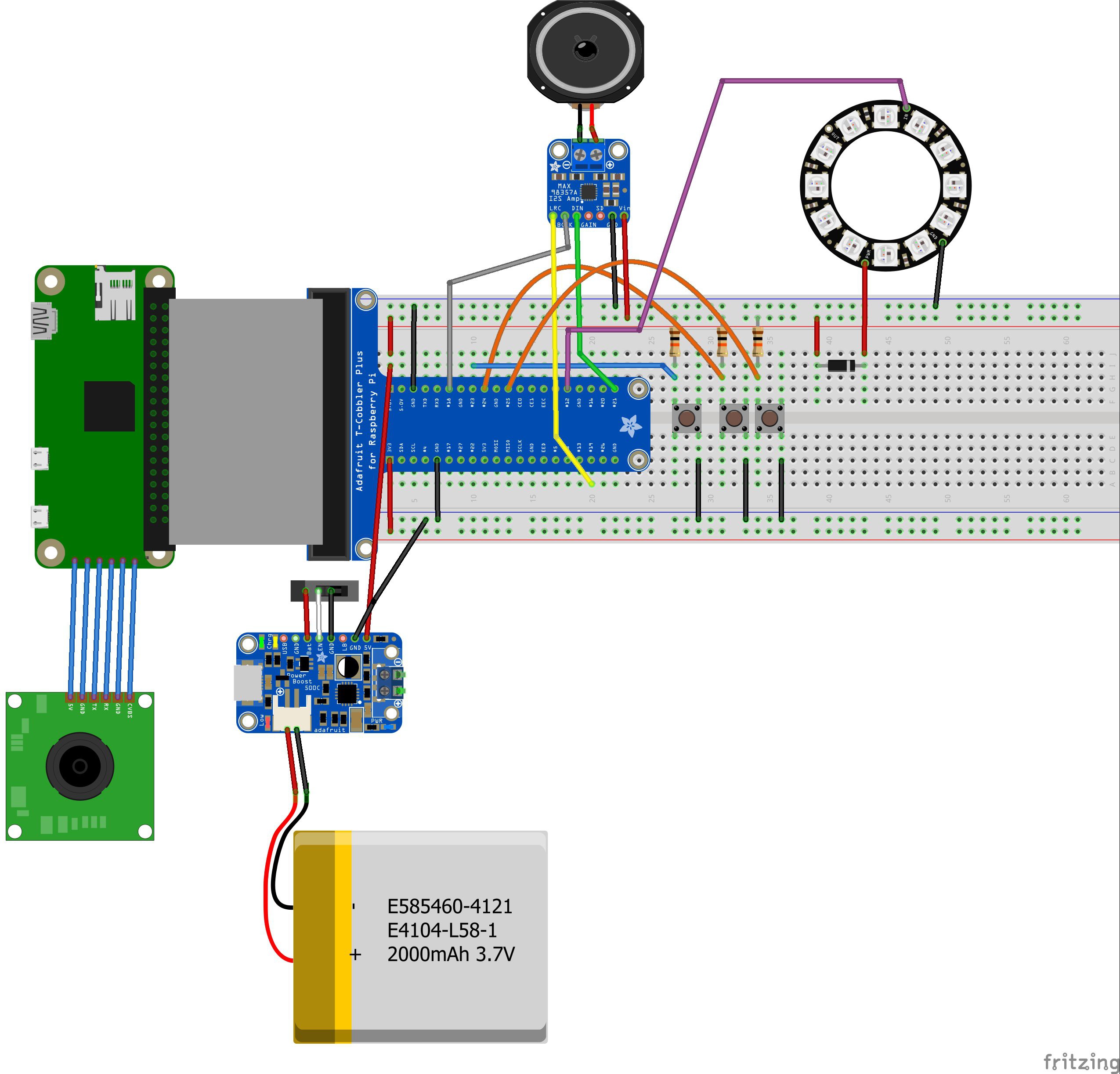

First of all, let's start with my latest Fritzing diagram for the breadboard wiring of the TextEye prototype:

Since I currently cannot find Fritzing parts for the newer version of the Raspberry Pi Zero or for any Raspberry Pi camera module, I took the older Pi Zero and a similar-looking part and connected them with some direct line connections (although there is no actual connector on that Pi Zero part right now). At least it is a somewhat realistic depiction of the actual prototype setup.

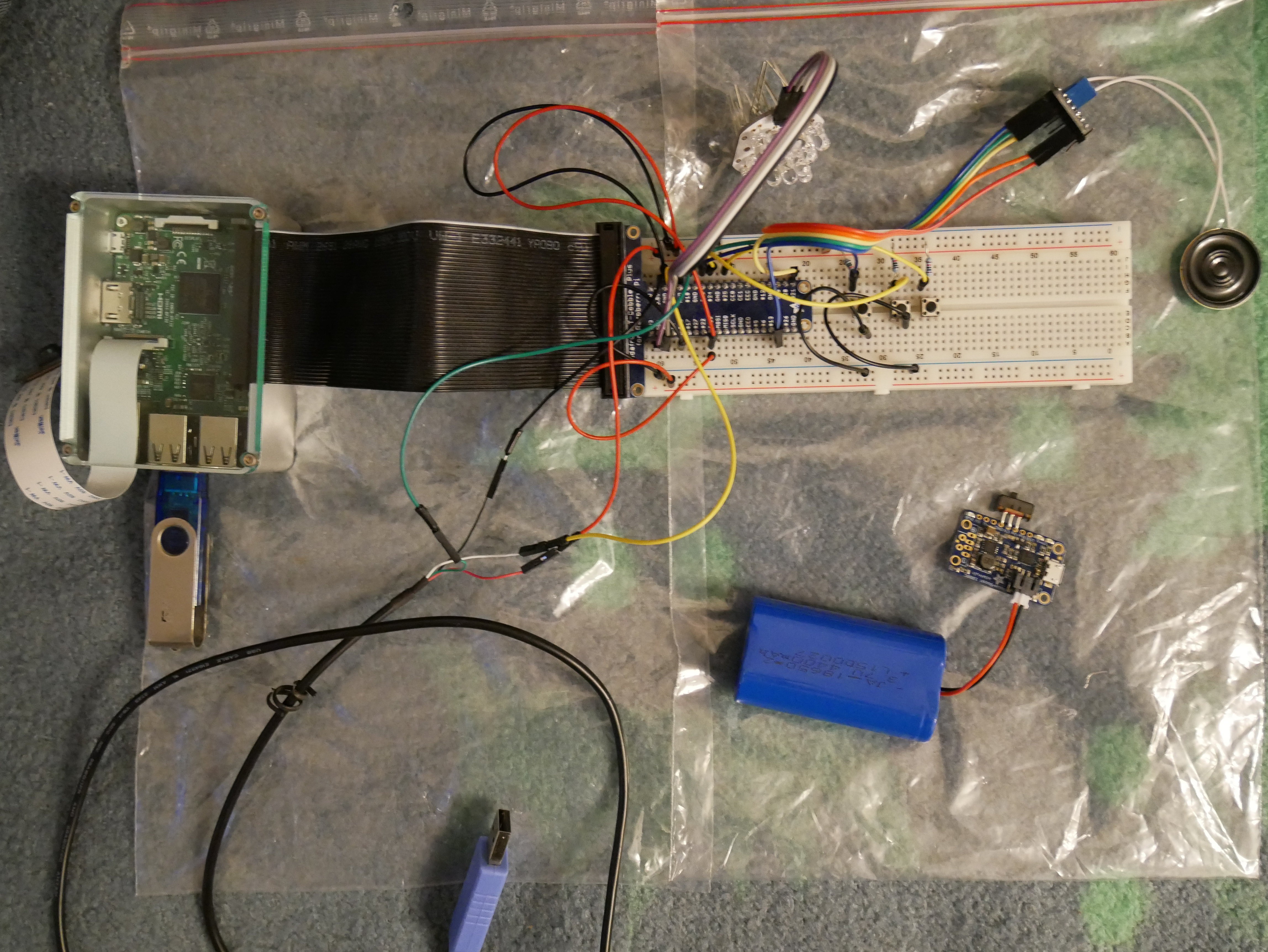

Now this looks pretty usable, but the physical prototype ended up being a little different:

If you compare the physical prototype and the Fritzing diagram, you'll notice the following differences:

- the physical prototype uses a Raspberry Pi Model 3 instead of the Pi Zero as the additional connectors simplify the development (updates, testing etc.)

- the PowerBoost 1000C is not yet connected to the prototype setup - mainly because I did not get around to the additional soldering before my vacation

- a TTL to USB serial cable (https://www.adafruit.com/products/954) was added for a direct serial connection to the Raspberry Pi

- since I did not bring enough breadboard jumper cables with me, one of the pushbuttons is currently not connected to ground (not a big problem right now)

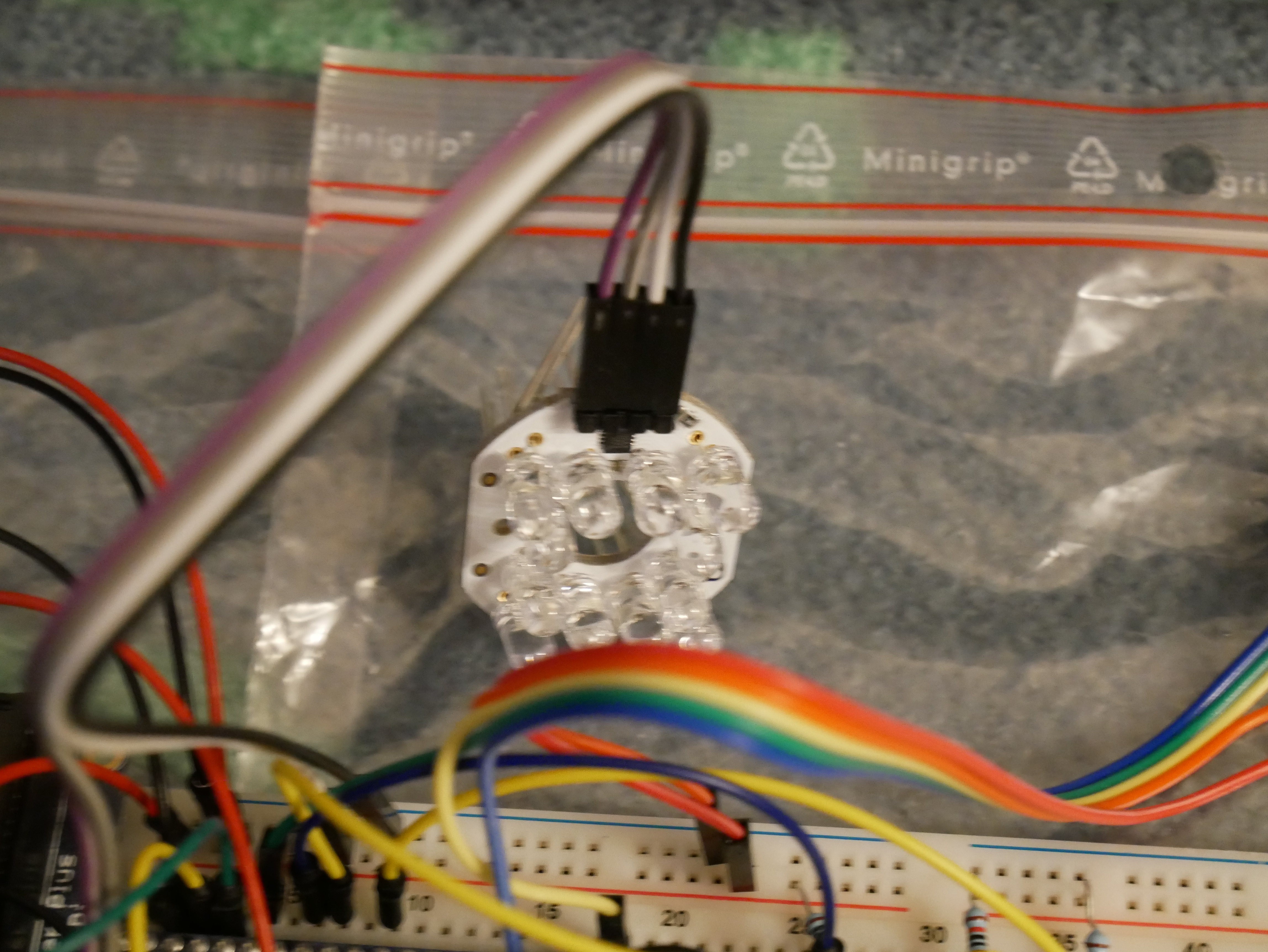

- last but not least: the NeoPixel ring has disappeared and has been replaced by the "Bright Pi" board:

The Bright Pi board uses simple white and IR LEDs instead of the RGBW LEDs of the NeoPixel ring or strip: https://www.pi-supply.com/product/bright-pi-bright-white-ir-camera-light-raspberry-pi/

Software (again)...

The main reason for using this "fallback" option for the lighting lies within the software development.

Since I'm using the C/C++ programming language for software development, I had already decided to use the "WiringPi" library for GPIO access. When I looked at how to use the NeoPixel elements, however, I found that the current option for this would be to use another library. Even though the other library would not access all GPIO pins, I could not be sure that the functions of both libraries would work alongside each other.

My programming experience related to hardware access tells me that a scenario like this, with functions from different libraries probably "fighting" over control of the same GPIO channels, is a potential recipe for disaster - unless you wrote both libraries yourself and know how to properly tweak them and make them work together.

From my research, I know that NeoPixel control mainly needs sufficiently fast PWM access. With some additional time, it should be possible to use WiringPi to create a usable NeoPixel control library in C/C++. In order to get closer to a working prototype sooner, I chose to use the Bright Pi board instead.

The Bright Pi board is actually an I2C device with a simple 4-pin connection. Once I2C has been enabled on the Raspberry Pi through the advanced options in "raspi-config", you can pretty much follow the code examples on the Bright Pi web pages and combine them with the WiringPi I2C examples in order to control the lights on the board from your C/C++ code.
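
To give a rough idea of what such code could look like, here is a minimal sketch in C. It is not my actual test program. The I2C address (0x70), the register numbers and the LED bit masks are assumptions based on the i2cset examples in the Bright Pi documentation, so please check them against the current docs. The callback type and all `brightpi_*` names are made up for this illustration. The register writes go through a callback so the logic can be tried without any hardware attached:

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* ASSUMED Bright Pi register map (from the vendor's i2cset examples;
   verify against the current Bright Pi documentation). */
enum {
    BRIGHTPI_I2C_ADDR   = 0x70, /* 7-bit I2C address of the board */
    BRIGHTPI_REG_STATUS = 0x00, /* one on/off bit per LED channel */
    BRIGHTPI_REG_GAIN   = 0x09, /* overall gain, 0x00..0x0f */
    BRIGHTPI_MASK_WHITE = 0x5a, /* the white LEDs */
    BRIGHTPI_MASK_IR    = 0xa5, /* the IR LEDs */
    BRIGHTPI_GAIN_MAX   = 0x0f
};

/* Hypothetical callback: write one byte to one register, return 0 on success. */
typedef int (*brightpi_write_fn)(void *ctx, uint8_t reg, uint8_t value);

/* Build the status register value for the requested LED groups. */
static uint8_t brightpi_status_mask(int white_on, int ir_on)
{
    uint8_t mask = 0;
    if (white_on) mask = (uint8_t)(mask | BRIGHTPI_MASK_WHITE);
    if (ir_on)    mask = (uint8_t)(mask | BRIGHTPI_MASK_IR);
    return mask;
}

/* Limit a requested gain to the range the gain register accepts. */
static uint8_t brightpi_clamp_gain(int gain)
{
    if (gain < 0) return 0;
    if (gain > BRIGHTPI_GAIN_MAX) return BRIGHTPI_GAIN_MAX;
    return (uint8_t)gain;
}

/* Set the gain first, then switch the LED groups on or off.
   Returns 0 on success, -1 as soon as a write fails. */
static int brightpi_set_lights(brightpi_write_fn write_reg, void *ctx,
                               int white_on, int ir_on, int gain)
{
    assert(write_reg != NULL);
    if (write_reg(ctx, BRIGHTPI_REG_GAIN, brightpi_clamp_gain(gain)) != 0)
        return -1;
    if (write_reg(ctx, BRIGHTPI_REG_STATUS,
                  brightpi_status_mask(white_on, ir_on)) != 0)
        return -1;
    return 0;
}

/* Dry-run back end: records the writes instead of touching the I2C bus. */
struct brightpi_log {
    uint8_t reg[8];
    uint8_t value[8];
    size_t count;
};

static int brightpi_record_write(void *ctx, uint8_t reg, uint8_t value)
{
    struct brightpi_log *log = ctx;
    if (log->count >= 8) return -1; /* log is full */
    log->reg[log->count] = reg;
    log->value[log->count] = value;
    log->count++;
    return 0;
}
```

On the Raspberry Pi itself, the callback would be a small wrapper around WiringPi's `wiringPiI2CWriteReg8(fd, reg, value)`, with `fd` coming from `wiringPiI2CSetup(0x70)`. Keeping that wrapper separate means the rest of the code never calls WiringPi directly and can be tested on any computer.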

While I have not finished the software yet (maybe I'm complicating things more than necessary...), I did get around to writing a quick and dirty test program today. I hope to properly test the hardware setup with it during the next few days.

Video todo

Due to my vacation (and a distracting bad cold) I'm still not as far along as I would like to be, but it's definitely no standstill.

For the Hackaday Prize, I still need to produce a short video showing off the project and a working prototype. Since the deadline for the main prize is October 17th, I had hoped to get around to doing a video sometime next week. But I've also entered the project into the "Assistive Technologies" competition, which has a deadline on October 10th. I've already received an email telling me that the judging will start on October 10th, so I may not be ready to fulfill all requirements for the main prize in time.

That's a bit of a shame, but of course it does not mean that I will stop working on the project. The Hackaday Prize provides a nice additional motivation, but this project is important enough to be continued even without that. Apart from that, I already carried on with it after finishing the Raspberry Pi Zero contest, before someone nudged me to enter the project into the Hackaday Prize 2016 (hello Sophie ;) ).

With almost 400 projects that have been entered into the competition, several being already completed and fully working, I'm not too optimistic about finishing in one of the top spots anyway. There are a lot of equally good and interesting projects, and I don't envy the judges - some tough decisions have to be made...

For the moment, I'm just concentrating on getting the prototype to work with the complete workflow from taking a picture to the text-to-speech conversion of the OCR output. And that's all for now....

Markus Dieterle
