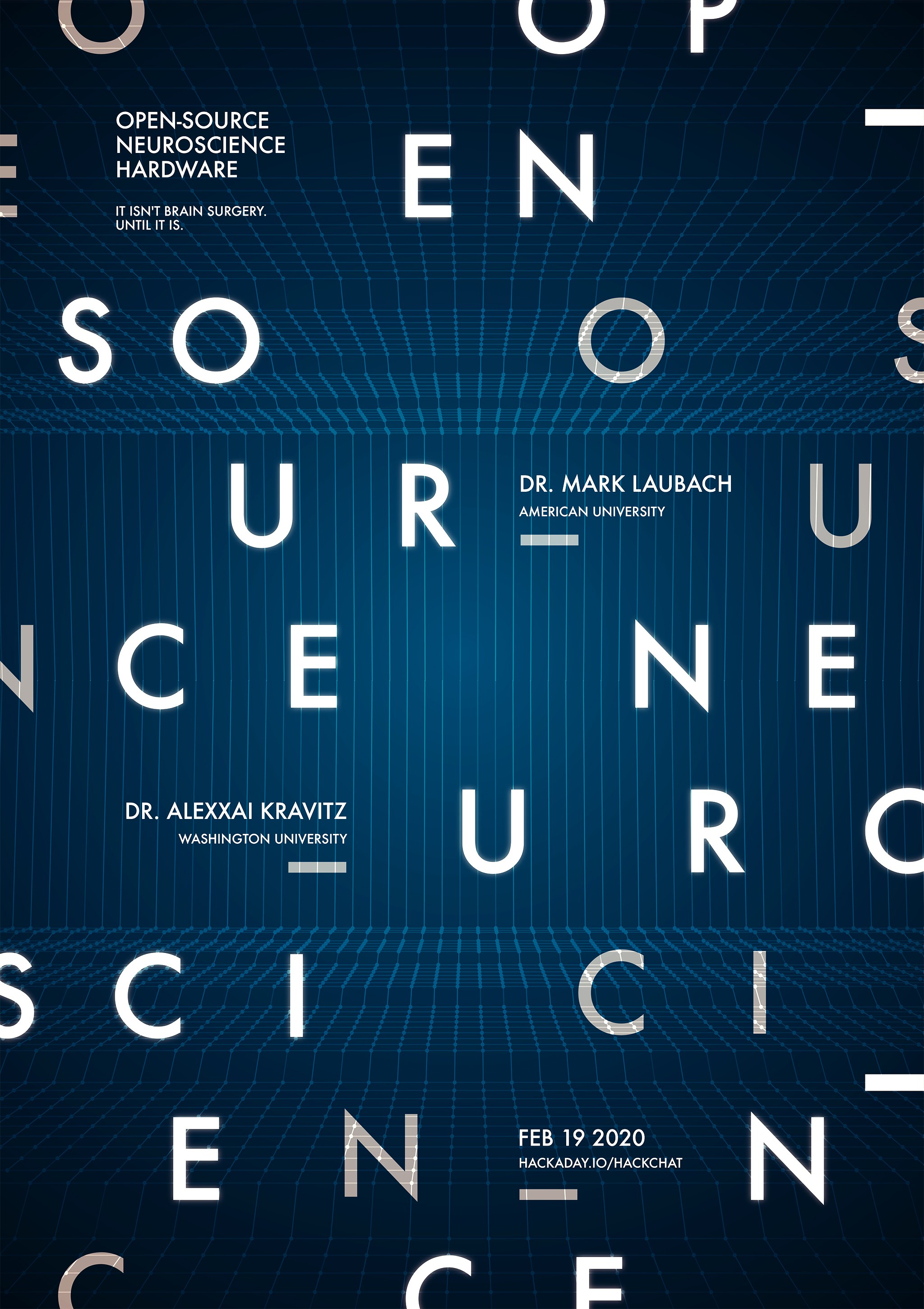

Lex Kravitz and Mark Laubach will host the Hack Chat on Wednesday, February 19, 2020 at noon Pacific Time.


There was a time when our planet still held mysteries, and pith-helmeted or fur-wrapped explorers could sally forth and boldly explore strange places for what they were convinced was the first time. But with every mountain climbed, every depth plunged, and every desert crossed, fewer and fewer places remained to be explored, until today there's really nothing left to discover.

Unless, of course, you look inward to the most wonderfully complex structure ever found: the brain. In humans, the 86 billion neurons contained within our skulls make trillions of connections with each other, weaving the unfathomably intricate pattern of electrochemical circuits that make you, you. Wonders abound there, and anyone seeing something new in the space between our ears really is laying eyes on it for the first time.

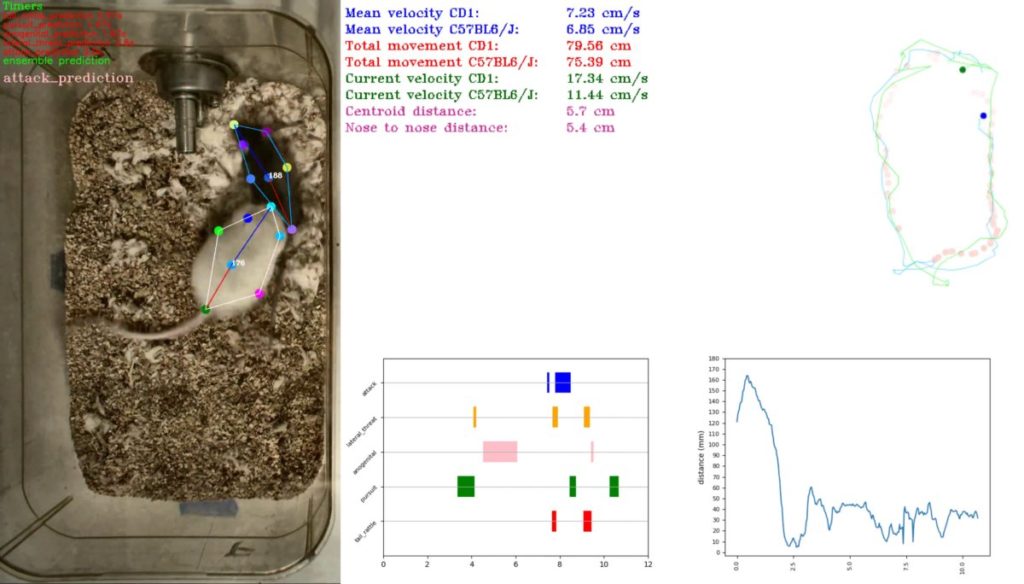

But the brain is a difficult place to explore, and specialized tools are needed to learn its secrets. Lex Kravitz, from Washington University, and Mark Laubach, from American University, are neuroscientists who've learned that sometimes you have to invent the tools of the trade on the fly. While exploring topics as wide-ranging as obesity, addiction, executive control, and decision making, they've come up with everything from simple jigs for brain sectioning to full feeding systems for rodent cages. They incorporate microcontrollers, IoT, and tons of 3D printing to build what they need to get the job done, and they share these designs on OpenBehavior, a collaborative space for the open-source neuroscience community.

Join us for the Open-Source Neuroscience Hardware Hack Chat this week where we'll discuss the exploration of the real final frontier, and find out what it takes to invent the tools before you get to use them.

So that was a super-fast hour, and we'll have to let Mark and Lex get back to work. Feel free to continue the discussion, though; this was really fascinating stuff. I really want to thank both Lex and Mark for their time and the great discussion.
