I've just picked this project back up. Most of the earlier logs are out of date, but I'll leave them up to show my first thoughts. Anyway, here's the general outline for the project! I'm starting with just a daytime detector. Most of the work for the basics is already done, so I hope to have this up and running in the next couple of weeks.

BASICS

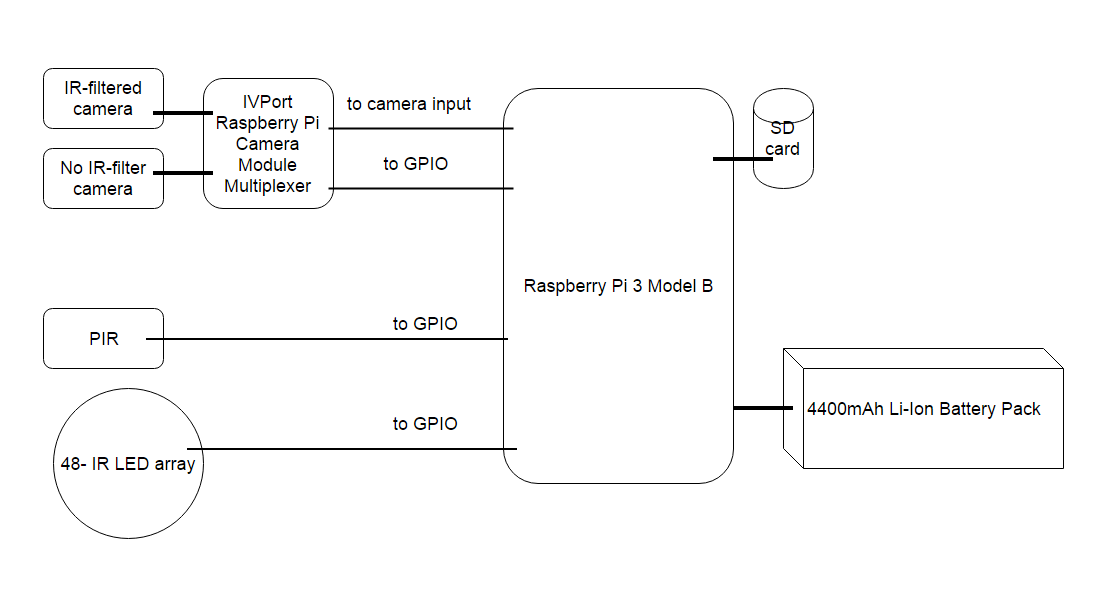

The daytime detector consists of the following hardware: one Raspberry Pi, a USB dongle for mobile connectivity, a Raspberry Pi camera, a PIR sensor, a LiPo battery, and a waterproof case. I'll use an ABS case for now, but we will 3D print a waterproof case eventually. We'll put a waterproof switch on the outside to switch it on (this just connects the battery). And we'll have a second waterproof switch for safe shutdown (shutting down the OS, rather than just killing the power and risking SD card corruption).

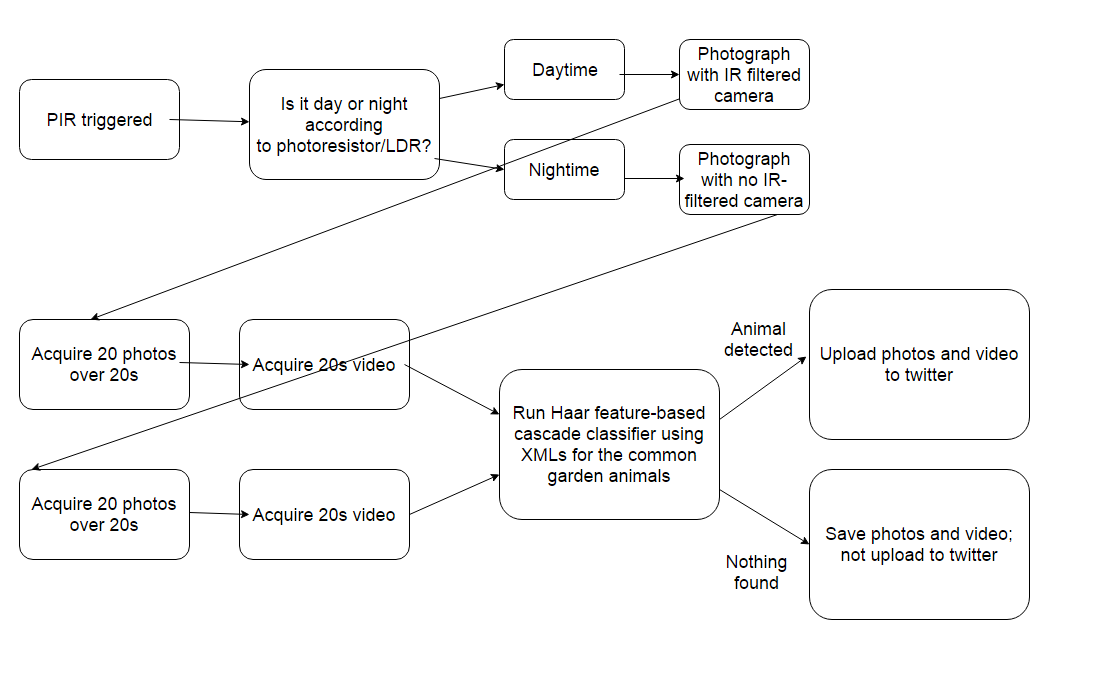

So, if we get HIGH on our PIR, we will take a series of images using the PiCamera: say 1 image, wait 3 seconds, then 1 more image, looping like that for say 5 minutes. After each pair of images we check whether the PIR went LOW. If it did, we break out and wait for it to go HIGH again. You need to be careful with the code for this, or else the PiCamera will raise an error saying it has run out of resources after your first round of images. I got stuck with that when building ElephantAI.
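Here's a rough sketch of that capture loop, just to pin down the timing. It is hardware-free on purpose: `read_pir` and `capture` are hypothetical callables that I'm assuming you would wire up to the PIR pin and the camera yourself, and the 3 second / 5 minute values are only the "say" figures from above.

```python
import time


def capture_while_motion(read_pir, capture, interval_s=3.0, max_duration_s=300.0,
                         sleep=time.sleep, clock=time.monotonic):
    """Take image pairs while the PIR stays HIGH, for at most max_duration_s.

    read_pir: hypothetical callable returning True while the PIR output is HIGH.
    capture:  hypothetical callable taking an image index and returning the
              saved file path (on a Pi it would wrap the camera).
    """
    paths = []
    start = clock()
    while clock() - start < max_duration_s:
        # One pair: image, wait, image.
        paths.append(capture(len(paths)))
        sleep(interval_s)
        paths.append(capture(len(paths)))
        # Check the PIR after each pair and stop early if it went LOW.
        if not read_pir():
            break
        sleep(interval_s)
    return paths
```

On the Pi, `capture` could wrap a single `picamera.PiCamera` object that is opened once (for example in a `with` block) and closed when the round ends. Releasing the camera between rounds is one plausible way to stay clear of the out-of-resources error, but treat that as an assumption rather than the confirmed fix.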

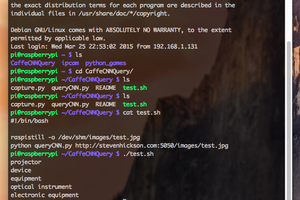

Now we pass these images to the wildlife detector, which just uses TensorFlow with the off-the-shelf InceptionV3 model. So it's just this code, adjusted to put our top 5 detected animals into a list and return it from the wildlife detector function: https://github.com/tensorflow/tensorflow/blob/master/tensorflow/examples/image_retraining/label_image.py
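The "top 5 into a list" adjustment might look something like this. It is only the ranking step, not the TensorFlow part: I'm assuming the model output has already been flattened into a plain list of scores lined up with the label list, and `top_detections` is a made-up name.

```python
def top_detections(labels, scores, k=5):
    """Return the k highest-scoring (label, score) pairs, best first."""
    if len(labels) != len(scores):
        raise ValueError("labels and scores must have the same length")
    # Pair each label with its score, then sort by score, highest first.
    ranked = sorted(zip(labels, scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]
```

Returning (label, score) pairs rather than bare names keeps the confidence around in case we want a cut-off later.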

Right, well if we didn't get any animals in our top 5 detections, we can just delete the images, go back to waiting for the PIR to go HIGH, and do the above all over again.
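A minimal sketch of that keep-or-delete decision, assuming the detections arrive as (label, score) pairs. The `ANIMAL_LABELS` set here is a hypothetical whitelist for illustration; the real one would have to be picked out of the model's own label file.

```python
import os

# Hypothetical whitelist; the real set would come from the model's label file.
ANIMAL_LABELS = {"hedgehog", "red fox", "badger", "grey squirrel"}


def animals_in(detections, animal_labels=ANIMAL_LABELS):
    """Keep only the detected labels that are in the animal whitelist."""
    return [label for label, _score in detections if label.lower() in animal_labels]


def keep_or_delete(image_paths, detections, animal_labels=ANIMAL_LABELS):
    """Return the animal names if any were detected; otherwise delete the images."""
    names = animals_in(detections, animal_labels)
    if not names:
        # Nothing of interest in the top detections, so free up the SD card.
        for path in image_paths:
            if os.path.exists(path):
                os.remove(path)
    return names
```

An empty list coming back means the images are gone and we return to waiting on the PIR.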

But if we did, then we can go ahead and tweet those images with their suspected names, using our 3G USB dongle!

That's all really!

ADJUSTMENTS

Could we cut down the time we spend taking images to, say, 1 minute? Then, if the wildlife detector finds any animals in those images, we start taking video for 30 seconds? Then we go ahead and upload the images + video to Twitter with their suspected names?

Or we could try to detect from video, but I'm not really sure about doing this on a Raspberry Pi.

FURTHER

What I'd like to do, and it's the same thing as with ElephantAI, is to get Twitter users to help with identifying the spotted animals, and use this data either to build a CNN for common garden animals/common animals from scratch, or to retrain an existing model with some new, more accurate classes of common animals.

So it goes something like this:

1. Twitter users will tweet back with hashtags #yescorrect or #nowrong according to whether the image they see corresponds to the suspected animal tagged by our wildlife detector.

2. Now this is not done on the Raspberry Pi at all! The replies will be monitored by a server. All the Raspberry Pis do is send out their images with suspected animals tagged.

3. If an image gets a designated number of #yescorrect replies back from Twitter users, the server will store that image in a directory named correct_animalname. If an image gets a designated number of #nowrong replies back from Twitter users, the server will store that image in a directory named wrong_animalname.

4. Now, if a large number of people are using the automated wildlife detection systems, and there is a lot of Twitter interaction, we could be getting 100s of correct_animalname images per week!

So, as you might have guessed, we've got an easy way to do supervised machine learning!

5. After a few weeks, our server can go ahead and retrain an off-the-shelf model with all these images of common animals that have been labelled according to their class (name) by Twitter users!

6. We could go ahead and send the new graph to the automated wildlife detection systems if we wanted via...
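The server-side vote counting in steps 1 to 3 could be sketched roughly like this. Fetching the replies from Twitter is left out entirely, so `replies` is just a list of reply texts. The threshold of 3, the tie handling (undecided unless one side is ahead), and turning spaces in the animal name into underscores are all my own assumptions for illustration.

```python
from collections import Counter

YES_TAG = "#yescorrect"
NO_TAG = "#nowrong"


def tally_replies(replies):
    """Count replies containing each hashtag (case-insensitive)."""
    votes = Counter()
    for text in replies:
        lowered = text.lower()
        if YES_TAG in lowered:
            votes["yes"] += 1
        if NO_TAG in lowered:
            votes["no"] += 1
    return votes


def target_directory(animal_name, votes, threshold=3):
    """Return the directory name for an image, or None if still undecided."""
    slug = animal_name.lower().replace(" ", "_")
    # A side wins only if it reaches the threshold and is ahead of the other.
    if votes["yes"] >= threshold and votes["yes"] > votes["no"]:
        return "correct_" + slug
    if votes["no"] >= threshold and votes["no"] > votes["yes"]:
        return "wrong_" + slug
    return None
```

Images that come back as `None` would simply stay in a pending pile until more replies arrive.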

Neil K. Sheridan

Brenda Armour

Steven Hickson
