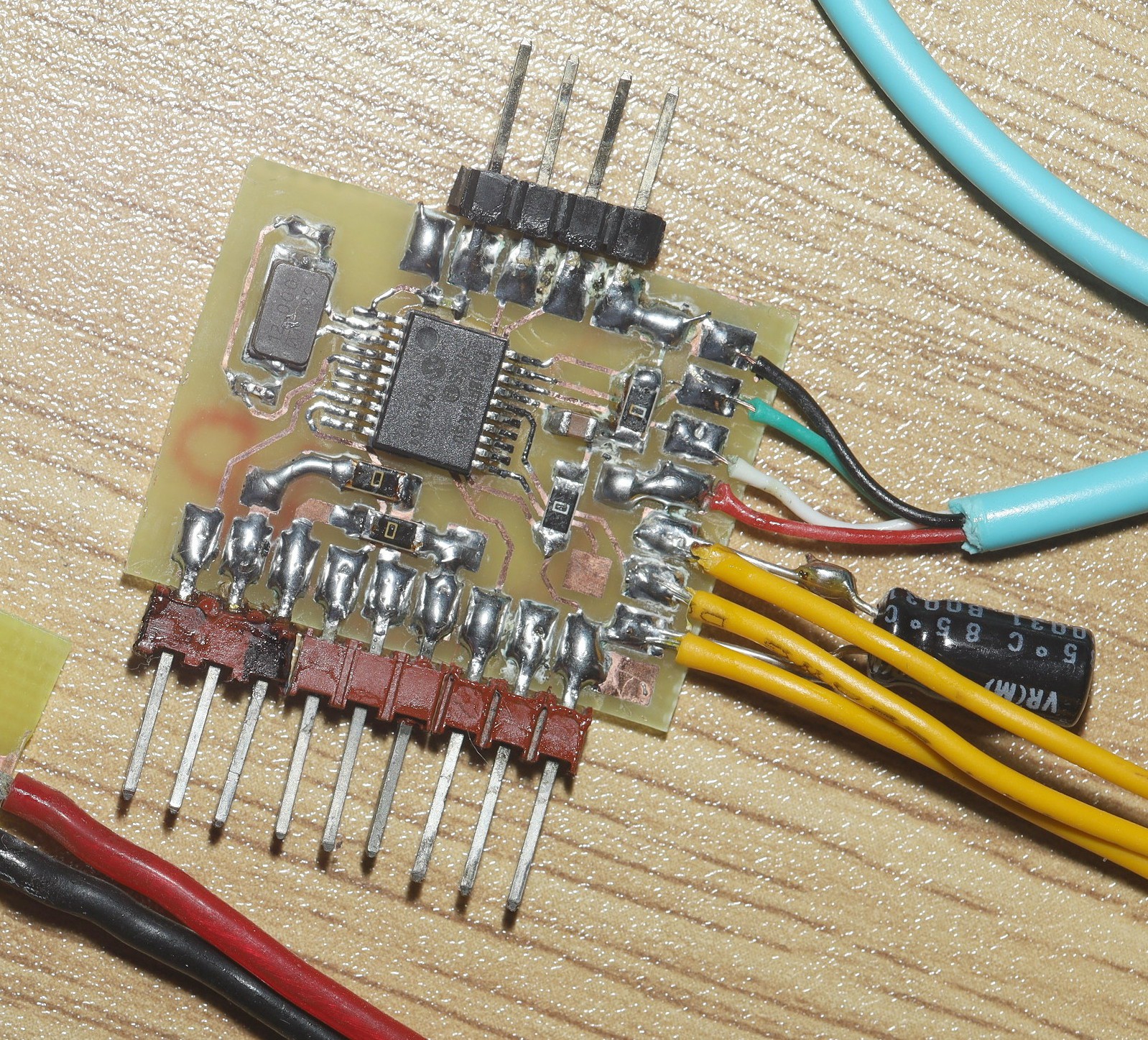

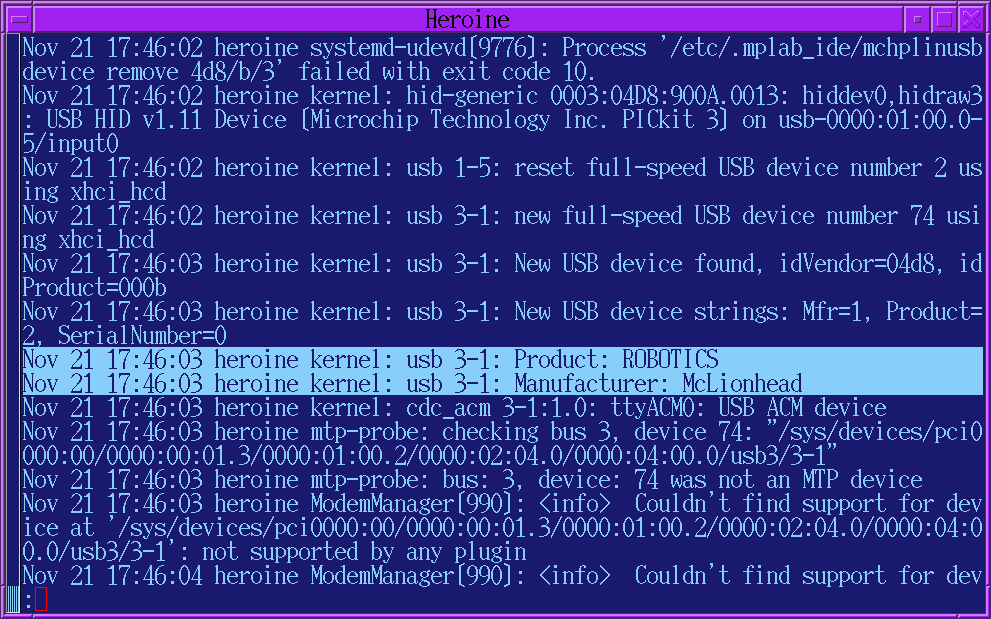

The journey began with a new servo output/IR input board. Sadly, after dumping a boatload of money on the LCD panel, HDMI cables, & spinning this board, it became clear that the last IR remote in the apartment wouldn't work. It was a voice remote for a Comca$t/Xfinity Flex, which would require a lot of hacking to send IR, if not a completely new board.

The idea returned to using a phone as the user input. If the phone is the user input, it might as well be the GUI, so the plan headed back to a 2nd attempt at a wireless phone GUI. It didn't go so well years ago with repcounter: wifi dropped out constantly & even H.264 couldn't fit video over the intermittent connection.

Eliminating the large LCD & IR remote is essential for portable gear. GoPros somehow made wireless video reliable & screen sharing for Android has evolved somewhat since then. Android may slow down programs which aren't getting user input.

Screen sharing for Android seems to require a full Chrome installation on the host & a way to phone home to the goog mothership, though. Their corporate policy is to require a full Chrome installation for as little as hello world.

The best solution for repcounter is still a dedicated LCD panel, camera, & confuser in a single-piece assembly. It doesn't have to be miniaturized & would benefit from an IR remote. The same Jetson could run both programs by detecting what was plugged in. The user interfaces are different enough that supporting both wouldn't be a horrible duplication of effort.

Anyways, it was rewarding to see yet another homemade piece of firmware enumerate as a legit CDC ACM device.

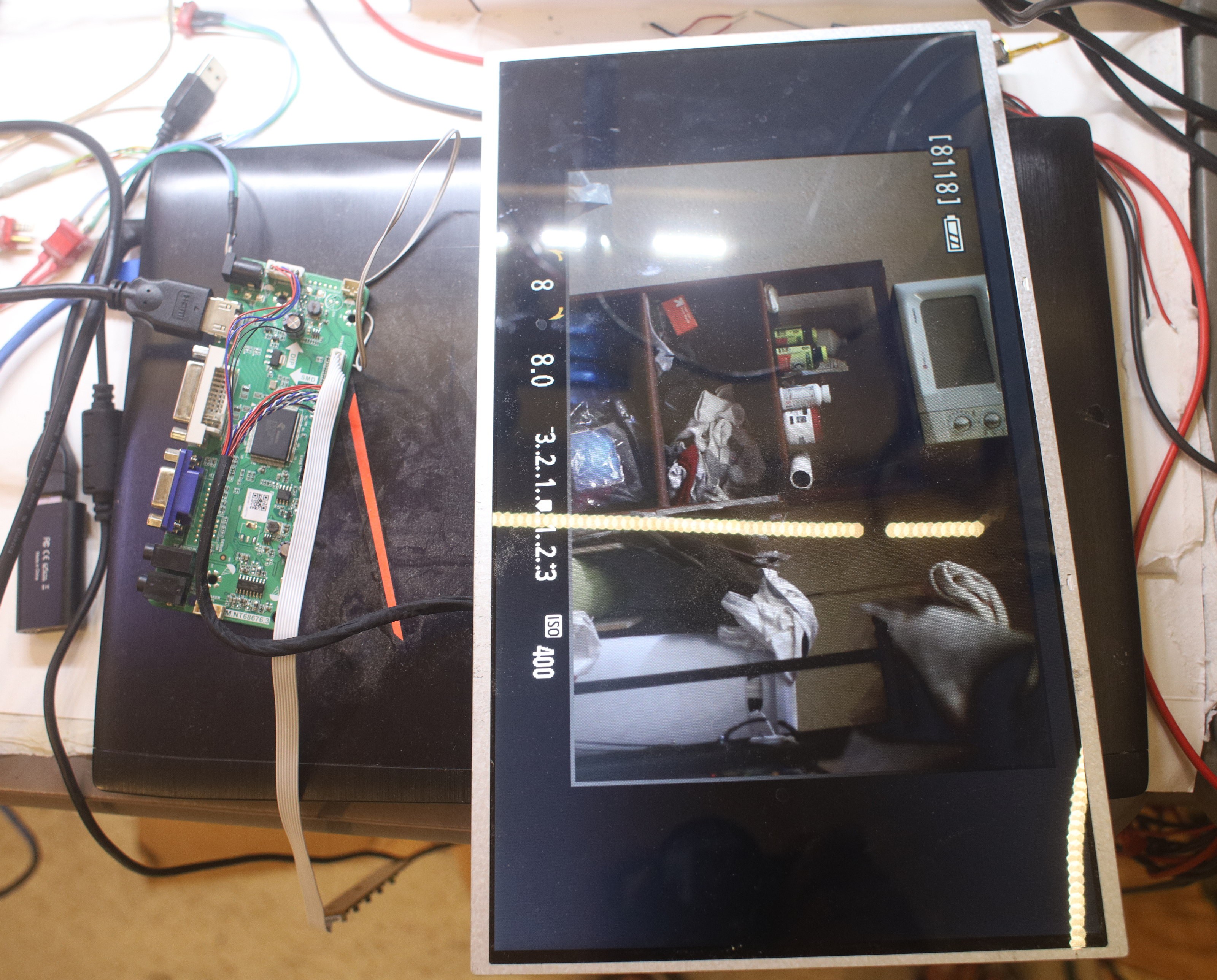

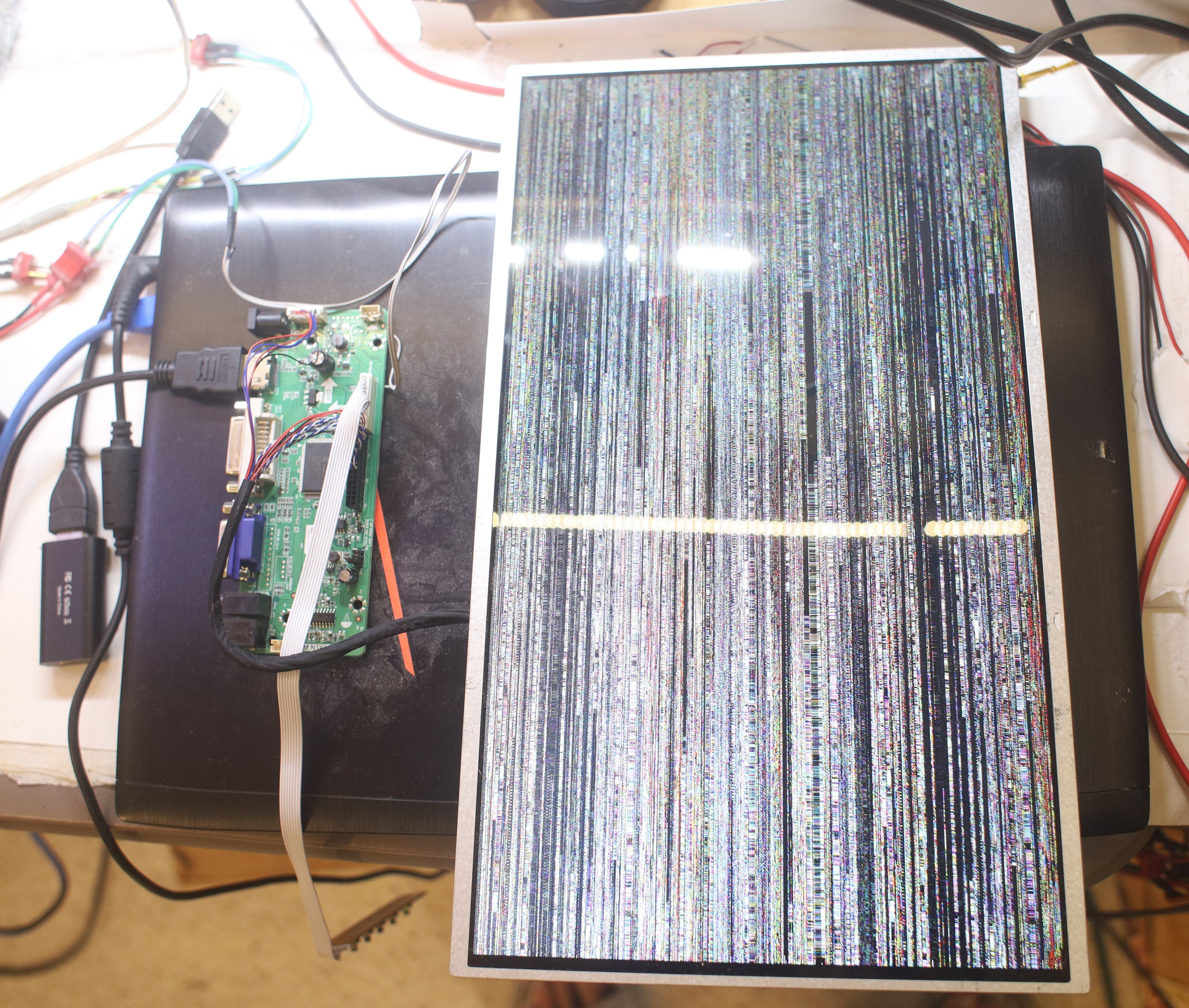

The new LCD driver from China had a defective cable. Pressing it just the right way got it to work.

Pressing ahead with the wireless option evolved into sending H.264 frames to a VideoView widget on a phone. Decoding a live stream in the VideoView requires setting up an RTSP server with ffserver & a video encoder with ffmpeg. The config file for the server (ffserver.conf) is:

    Port 8090
    BindAddress 0.0.0.0
    RTSPPort 7654
    RTSPBindAddress 0.0.0.0
    MaxClients 40
    MaxBandwidth 10000
    NoDaemon

    <Feed feed1.ffm>
    File /tmp/feed1.ffm
    FileMaxSize 2000M
    # Only allow connections from localhost to the feed.
    ACL allow 127.0.0.1
    </Feed>

    <Stream mystream.sdp>
    Feed feed1.ffm
    Format rtp
    VideoFrameRate 15
    VideoCodec libx264
    VideoSize 1920x1080
    PixelFormat yuv420p
    VideoBitRate 2000
    VideoGopSize 15
    StartSendOnKey
    NoAudio
    AVOptionVideo flags +global_header
    </Stream>

    <Stream status.html>
    Format status
    </Stream>
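As a rough sanity check on those stream settings, here is a minimal Java sketch of the arithmetic the config implies. It assumes VideoBitRate is in kbit/s, as the ffserver docs describe it. At 15 fps & 2000 kbit/s each frame averages about 133 kbit. With VideoGopSize 15 a keyframe arrives once per second, so StartSendOnKey can make a new client wait up to a second for its first picture. The class & method names are hypothetical.

```java
/** Hypothetical helper: back-of-envelope numbers for the ffserver stream settings. */
public class StreamBudget {
    /** Average bits per frame for a bitrate in kbit/s and a frame rate in fps. */
    static double bitsPerFrame(int kbitPerSec, int fps) {
        return kbitPerSec * 1000.0 / fps;
    }

    /** Seconds between keyframes for a GOP size (in frames) and a frame rate (fps). */
    static double keyframeIntervalSec(int gopSize, int fps) {
        return (double) gopSize / fps;
    }

    public static void main(String[] args) {
        int fps = 15;          // VideoFrameRate
        int kbitPerSec = 2000; // VideoBitRate (assumed kbit/s)
        int gop = 15;          // VideoGopSize
        System.out.printf("%.0f bits per frame%n", bitsPerFrame(kbitPerSec, fps));
        // With StartSendOnKey a new client waits for the next keyframe,
        // so this is the worst-case wait before the first picture.
        System.out.printf("%.1f s between keyframes%n", keyframeIntervalSec(gop, fps));
    }
}
```

Shortening VideoGopSize would cut that startup wait at the cost of more bits spent on keyframes.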

The command to start ffserver is:

    ./ffserver -f /root/countreps/ffserver.conf

The command to feed webcam video into ffserver (over HTTP on port 8090) is:

    ./ffmpeg -f v4l2 -i /dev/video1 http://localhost:8090/feed1.ffm

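The Android code to start the VideoView is:

    import android.net.Uri;
    import android.widget.VideoView;

    // 10.0.0.20 is the ffserver host; 7654 matches RTSPPort in ffserver.conf
    VideoView video = binding.videoView; // view binding for the layout
    video.setVideoURI(Uri.parse("rtsp://10.0.0.20:7654/mystream.sdp"));
    video.start();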

The configure options used to build ffserver & ffmpeg were:

    ./configure --enable-libx264 --enable-pthreads --enable-gpl --enable-nonfree

The only version of ffmpeg tested was 3.3.3. ffserver was removed from FFmpeg in version 4.0, so this setup needs a 3.x release.

This yielded horrendous latency & required a few tries to work.

The next step was a software decoder using ffmpeg-kit & a raw socket. On the server side, the encoder needs a named FIFO as its sink, created with:

    mkfifo("/tmp/mpeg_fifo.mp4", 0777);

The encoder is then started with:

    ffmpeg -y -f rawvideo -pix_fmt bgr24 -r 30 -s:v 1920x1080 -i - -vf scale=640:360 -f hevc -b:v 1000k -an - > /tmp/mpeg_fifo.mp4

That ingests raw 1920x1080 BGR (bgr24) frames on stdin, downscales them to 640x360 & writes HEVC frames into the FIFO. From there, the HEVC frames are written to a socket.
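Here is a minimal Java sketch of the server-side plumbing. It assumes the frame producer writes each 1920x1080 bgr24 frame (1920 × 1080 × 3 = 6,220,800 bytes) to ffmpeg's stdin. It also assumes a separate loop copies whatever HEVC bytes appear in the FIFO straight to the phone's socket. The class, method names & 16 KiB chunk size are hypothetical, not taken from the original program. Flushing after every read keeps the relay from adding buffering latency of its own.

```java
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

/** Hypothetical sketch of the encoder feed and the FIFO-to-socket relay. */
public class HevcRelay {
    static final int WIDTH = 1920, HEIGHT = 1080;
    // bgr24 packs 3 bytes per pixel with no row padding
    static final int FRAME_BYTES = WIDTH * HEIGHT * 3;

    /** Writes one raw bgr24 frame to the encoder's stdin, rejecting partial frames. */
    static void writeFrame(OutputStream encoderStdin, byte[] bgr) throws IOException {
        if (bgr.length != FRAME_BYTES) {
            throw new IllegalArgumentException(
                    "expected " + FRAME_BYTES + " bytes, got " + bgr.length);
        }
        encoderStdin.write(bgr);
        encoderStdin.flush();
    }

    /**
     * Copies HEVC bytes from the FIFO to the socket as soon as they arrive.
     * Returns the number of bytes relayed once the FIFO reaches end of stream.
     */
    static long relay(InputStream fifo, OutputStream socketOut) throws IOException {
        byte[] buf = new byte[16 * 1024]; // hypothetical chunk size
        long total = 0;
        int n;
        while ((n = fifo.read(buf)) != -1) {
            socketOut.write(buf, 0, n);
            socketOut.flush(); // forward partial data now instead of waiting for a full buffer
            total += n;
        }
        return total;
    }
}
```

In use, `fifo` would be a FileInputStream on /tmp/mpeg_fifo.mp4 & `socketOut` the output stream of an accepted java.net.Socket. Opening the FIFO blocks until ffmpeg opens it for writing.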

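On the client side, ffmpeg-kit requires creating 2 named pipes, one for ffmpeg's input & one for its output:

    // One pipe carries HEVC into ffmpeg, the other carries decoded frames out
    String stdinPath = FFmpegKitConfig.registerNewFFmpegPipe(getContext());
    String stdoutPath = FFmpegKitConfig.registerNewFFmpegPipe(getContext());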

The named pipes are passed to the ffmpeg-kit invocation.

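    // Decode HEVC from the input pipe into raw rgb24 frames on the output pipe
    FFmpegKit.executeAsync("-probesize 65536 -vcodec hevc -y -i " + stdinPath
            + " -vcodec rawvideo -f rawvideo -pix_fmt rgb24 " + stdoutPath,
        new ExecuteCallback() {
            @Override
            public void apply(Session session) {
                // Runs when the ffmpeg session finishes
            }
        });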

Then the pipes are accessed with Java streams. The stream constructors have to come after ffmpeg is running or they lock up, because opening a named pipe blocks until the other end has been opened too.

    OutputStream ffmpeg_stdin = new FileOutputStream(stdinPath);
    InputStream ffmpeg_stdout = new FileInputStream(new File(stdoutPath));

HEVC frames from the socket are written to ffmpeg_stdin & RGB frames are read from ffmpeg_stdout. This successfully decoded raw frames from the socket. The resolution had to be downscaled before Java could read the RGB frames from stdout.
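Here is a minimal Java sketch of the client-side read loop. It assumes the 640x360 rgb24 output from the ffmpeg-kit command above, which is 640 × 360 × 3 = 691,200 bytes per frame, a ninth of a 1920x1080 frame. InputStream.read may return fewer bytes than requested, so each frame is read with DataInputStream.readFully. Packing into ARGB ints matches the layout android.graphics.Bitmap.setPixels expects. Writing socket data into ffmpeg_stdin should happen on a different thread than this loop, or the pipe buffers can fill & stall both sides. The class & method names are hypothetical.

```java
import java.io.DataInputStream;
import java.io.EOFException;
import java.io.IOException;
import java.io.InputStream;

/** Hypothetical sketch: pull fixed-size rgb24 frames out of ffmpeg's stdout pipe. */
public class Rgb24FrameReader {
    final int width, height;
    private final DataInputStream in;
    private final byte[] rgb;

    Rgb24FrameReader(InputStream ffmpegStdout, int width, int height) {
        this.width = width;
        this.height = height;
        this.in = new DataInputStream(ffmpegStdout);
        this.rgb = new byte[width * height * 3]; // rgb24: 3 bytes per pixel, no padding
    }

    /**
     * Blocks until one whole frame has arrived, then returns it as ARGB ints
     * (the layout Bitmap.setPixels expects). Returns null at end of stream.
     */
    int[] nextFrame() throws IOException {
        try {
            in.readFully(rgb); // a plain read() may return only part of a frame
        } catch (EOFException e) {
            return null;
        }
        int[] argb = new int[width * height];
        for (int i = 0, j = 0; i < argb.length; i++, j += 3) {
            int r = rgb[j] & 0xff, g = rgb[j + 1] & 0xff, b = rgb[j + 2] & 0xff;
            argb[i] = 0xff000000 | (r << 16) | (g << 8) | b; // opaque alpha
        }
        return argb;
    }
}
```

On Android, each returned array could go to `bitmap.setPixels(argb, 0, width, 0, 0, width, height)` on a 640x360 ARGB_8888 Bitmap.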

This too suffered from unacceptable latency but was more reliable than RTSP through VideoView. The next step would be old-fashioned JPEG encoding. JPEG requires too many bits to be reliable over wifi, but it could work over USB. The whole affair made the lion kingdom wonder how video conferencing & screen sharing manage to make video responsive.
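For the JPEG idea, here is a minimal Java sketch of one plausible framing. It assumes each frame is JPEG-encoded with the standard javax.imageio encoder & sent with a 4-byte big-endian length prefix, so the receiver knows where each image ends. The framing & class name are hypothetical. On Android the decode side would use BitmapFactory.decodeByteArray instead of ImageIO.

```java
import java.awt.image.BufferedImage;
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import javax.imageio.ImageIO;

/** Hypothetical sketch: length-prefixed JPEG frames over any byte stream (e.g. USB). */
public class JpegFraming {
    /** Encodes one frame as JPEG and writes [int length][jpeg bytes]. Returns the JPEG size. */
    static int writeFrame(DataOutputStream out, BufferedImage frame) throws IOException {
        ByteArrayOutputStream jpeg = new ByteArrayOutputStream();
        if (!ImageIO.write(frame, "jpg", jpeg)) {
            throw new IOException("no JPEG writer for this image type");
        }
        out.writeInt(jpeg.size()); // big-endian length prefix
        jpeg.writeTo(out);
        out.flush();
        return jpeg.size();
    }

    /** Reads one length-prefixed JPEG and decodes it. */
    static BufferedImage readFrame(DataInputStream in) throws IOException {
        byte[] jpeg = new byte[in.readInt()];
        in.readFully(jpeg); // wait for the whole image before decoding
        return ImageIO.read(new ByteArrayInputStream(jpeg));
    }
}
```

Every frame is self-contained, so a dropped or late frame costs one picture instead of corrupting everything until the next keyframe. The price is the bit rate the post worries about.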

lion mclionhead
