I'll use my existing software:

https://github.com/JamesNewton/esp8266WebSerial

ported to ESP32, for easy setup of the WiFi router connection, the pause between pics, the URL to post each pic to, etc.

A few low cost hardware platforms were suggested at

https://hackaday.io/page/5754-lowest-possible-wifi-camera-with-low-power-sleep

but I went with

because at $12 for a development version, the extra cost is well worth the convenience. Hopefully the code can be ported to lower-cost hardware, or even custom hardware, in the future.

The overall flow after setup is:

1. Wake up on the RTC and check the external pin to see if an event happened during sleep. If not, go back to sleep. If this feature isn't desired, it can be disabled in config, or the external pin can simply be pulled high. The idea here is that we can wait for a light to flash on a control panel, or for some other detectable event, before powering all the way up to take a pic.

2. If we are waking, try to connect to the configured WiFi. If there is no connection after a few tries, and this was our first power-on, go into AP mode and wait for someone to connect and feed us a configuration. (See the esp8266WebSerial project above for more details.)

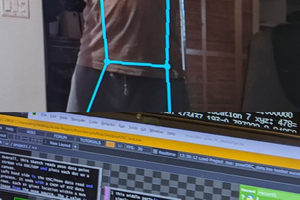

3. If we are waking and connected to WiFi, turn on the LEDs, take a picture, turn off the LEDs, and upload the picture to the configured server. In the future, some form of image processing might be added here. For example, mask out and transmit only the parts of the picture where the dials, digits, and indicators are, or subtract this picture from the last one taken and send only the difference.

4. Also upload any other data, e.g. from a serial port connected to a peripheral device, the status of our external event pin, battery status, etc. A "trigger" string to be sent to the peripheral can also be configured. This allows an external sensor / data logger to be used in addition to or instead of the camera. An sscanf format string can be configured to extract up to 3 values from the returned data for local display, with linear conversion to engineering units ( Y = mX + b ).

5. If the upload succeeds and there is no return data from the server, sleep. On failure, retry a limited number of times before giving up. If we have a display, flag the error. If the server returned data, parse it for settings changes (e.g. a faster or slower interval) and respond to those. The server can also set a message on the local display if there is one. Any additional data is sent to the peripheral device and a few seconds are allowed for a response, which then takes us back to step 4. This allows the user to request that the server get additional data from, or change settings in, the peripheral the next time the device checks in.
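The value extraction in step 4 could look roughly like the sketch below. It is only an illustration in plain C++, not the code from the esp8266WebSerial project: the names `Calibration`, `Reading`, and `parseReply` are made up here, and it assumes the configured pattern is an sscanf format string with at most three `%f` conversions.

```cpp
#include <cassert>
#include <cstdio>

// Hypothetical per-value calibration for Y = m * X + b.
struct Calibration {
    float m;  // slope
    float b;  // offset
};

// Hypothetical result of parsing one reply from the peripheral.
struct Reading {
    int count;       // how many values sscanf matched (0 to 3)
    float value[3];  // matched values converted to engineering units
};

// Extract up to three floats from `reply` using an sscanf format string,
// then apply the linear conversion to each matched value.
// Assumption: `format` contains at most three %f conversions.
Reading parseReply(const char* reply, const char* format, const Calibration cal[3]) {
    assert(reply != nullptr && format != nullptr);
    float raw[3] = {0.0f, 0.0f, 0.0f};
    int matched = std::sscanf(reply, format, &raw[0], &raw[1], &raw[2]);
    Reading r{};
    r.count = matched > 0 ? matched : 0;  // sscanf returns a negative EOF on empty input
    for (int i = 0; i < r.count; ++i) {
        r.value[i] = cal[i].m * raw[i] + cal[i].b;
    }
    return r;
}
```

With a format of `"T=%f H=%f P=%f"`, a reply of `"T=10.0 H=40.0 P=110.0"` yields three raw values, each scaled by its own m and b before display. A reply that doesn't match the format gives a count of 0, which the caller can treat as "nothing to show".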

Most of that is already in the ESP8266 code above, but will need to be ported / re-written. The camera / image upload will be new. Not sure if the porting or the camera would be the better starting point.
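One possible starting point for the port is to isolate the wake-up decisions from steps 1 to 3 in a small hardware-independent function that can be tested off the board. This is a sketch only: `WakeContext`, `Action`, and `decide` are hypothetical names, and the choice to go back to sleep when WiFi fails on a later wake-up is an assumption, since the flow above only covers the first power-on case.

```cpp
// What the device should do after waking up.
enum class Action { Sleep, StartAccessPoint, CaptureAndUpload };

// Hypothetical snapshot of everything the decision depends on.
struct WakeContext {
    bool eventCheckEnabled;  // config: gate wake-ups on the external pin
    bool eventPinActive;     // external pin reports an event during sleep
    bool wifiConnected;      // result of the WiFi connection attempts
    bool firstPowerOn;       // true on initial power-up, false on RTC wake
};

Action decide(const WakeContext& c) {
    // Step 1: no event while asleep means there is nothing to do.
    if (c.eventCheckEnabled && !c.eventPinActive) return Action::Sleep;
    // Step 3: connected, so take the picture and upload it.
    if (c.wifiConnected) return Action::CaptureAndUpload;
    // Step 2: no WiFi on first power-on, so wait for a configuration in AP mode.
    if (c.firstPowerOn) return Action::StartAccessPoint;
    // Assumption: WiFi failed on a later wake-up, so sleep and retry next time.
    return Action::Sleep;
}
```

Keeping this logic free of ESP32 calls means the same function could be reused if the code later moves to lower-cost or custom hardware.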

James Newton

Arthur Guy

Martin Fasani

John Grant

Jerry Isdale

QUESTION: Has anyone tried a library that detects movement by comparing images?

I would like to send images from one of these cheap cameras, but only when something changes. Every JPEG image weighs about 10 to 12 KB, and if I build a security cam that sends a picture every second, I will end up with:

12 KB * 60 * 60 * 24 = 1036.8 megabytes per day. That's far too much (and of course I probably won't even be able to send at that rate).
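To see how the daily total changes with the capture interval, the same arithmetic can be wrapped in a small helper. This is just a sketch: `bytesPerDay` is a made-up name, and it uses decimal units (1 KB = 1000 bytes) to match the estimate above.

```cpp
#include <cassert>
#include <cstdint>

// Bytes uploaded per day for a fixed image size and capture interval.
// Decimal units are assumed (1 KB = 1000 bytes, 1 MB = 1,000,000 bytes).
std::uint64_t bytesPerDay(std::uint64_t imageBytes, std::uint32_t intervalSeconds) {
    assert(intervalSeconds > 0);
    const std::uint64_t secondsPerDay = 60ULL * 60ULL * 24ULL;  // 86400
    const std::uint64_t capturesPerDay = secondsPerDay / intervalSeconds;
    return imageBytes * capturesPerDay;
}
```

`bytesPerDay(12000, 1)` gives 1,036,800,000 bytes, i.e. the 1036.8 MB above, which is a sustained 96 kbit/s. Stretching the interval to one picture per minute, `bytesPerDay(12000, 60)`, drops that to 17.28 MB per day.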

Has anyone tried to do something like this? The goal would be to compare two JPEGs and, if the difference is above a threshold X, upload the new image.
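A very simple version of that comparison might look like the sketch below. It is not the pixelmatch algorithm from the links, and it rests on one big assumption: both frames are already available as decoded 8-bit grayscale buffers of the same size, because compressed JPEG bytes can't be compared meaningfully one by one. The names `changedFraction` and `shouldUpload` are made up for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Fraction (0.0 to 1.0) of pixels whose brightness differs by more than
// `pixelTolerance` between two decoded 8-bit grayscale frames.
double changedFraction(const std::vector<std::uint8_t>& previous,
                       const std::vector<std::uint8_t>& current,
                       int pixelTolerance) {
    assert(previous.size() == current.size());
    if (current.empty()) return 0.0;
    std::size_t changed = 0;
    for (std::size_t i = 0; i < current.size(); ++i) {
        int delta = std::abs(static_cast<int>(current[i]) - static_cast<int>(previous[i]));
        if (delta > pixelTolerance) ++changed;  // small deltas are treated as sensor noise
    }
    return static_cast<double>(changed) / static_cast<double>(current.size());
}

// Upload only when more than `threshold` of the frame has changed.
bool shouldUpload(const std::vector<std::uint8_t>& previous,
                  const std::vector<std::uint8_t>& current,
                  int pixelTolerance, double threshold) {
    return changedFraction(previous, current, pixelTolerance) > threshold;
}
```

The per-pixel tolerance is there to absorb noise and JPEG artifacts, while the threshold on the changed fraction plays the role of X. Both values would need tuning against real frames, and downscaling the frames before comparing would cut memory use and work on a small microcontroller.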

Research links:

https://stackoverflow.com/questions/3395872/image-comparison-algorithm

https://github.com/mapbox/pixelmatch-cpp (This one looks interesting)