The network of superconducting gravimeters has been collecting data for decades. About 95 percent of what these instruments record is the sun-moon tidal signal. The attached image shows one month of data as an example. You can just see a few spots of blue where the measured signal peeps out from underneath the calculated signal.

The calculation is quite simple if you have a ready source for precise positions of the sun, moon and earth in station-centered coordinates. Luckily, NASA's online Horizons system, provided by the Jet Propulsion Laboratory (JPL) Solar System Dynamics group, makes that easy. I will post how to generate and download the data needed to calculate the expected signal, and post some programs on GitHub that take the positions, calculate the tidal signal, and help you do the linear regression needed for each axis of the instrument.

This is a very forgiving method for getting started. If you have gaps in the data, periodic noise, earthquakes or other random interruptions, it does not matter much, because each measurement on each of the three axes can be compared individually, at its own time, to what is expected. Try to align your instrument carefully with the North, East and Vertical unit vectors, and get the exact GPS location of the instrument. If you are off a bit, you will see that in the regression calculations, and you can use the regressions to solve for the position and orientation. That is a bit advanced, but I will try to simplify it and post it on GitHub and here.
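
As a rough illustration of the per-axis comparison described above, here is a minimal sketch in Python. It assumes you already have, for one axis, an array of predicted tidal values and an array of instrument readings at the same timestamps, with gaps stored as NaN. The function name `fit_axis` and the synthetic data are hypothetical and only for illustration; this is not the program that will be posted on GitHub.

```python
import numpy as np

def fit_axis(predicted, measured):
    """Fit measured ~= scale * predicted + offset for one axis.

    Samples where either series is NaN or infinite (gaps, dropouts)
    are simply left out of the fit.
    """
    predicted = np.asarray(predicted, dtype=float)
    measured = np.asarray(measured, dtype=float)
    ok = np.isfinite(predicted) & np.isfinite(measured)
    # np.polyfit with degree 1 returns [slope, intercept]
    scale, offset = np.polyfit(predicted[ok], measured[ok], 1)
    # Residual is NaN wherever the measurement is missing
    residual = measured - (scale * predicted + offset)
    return scale, offset, residual

# Synthetic example: a made-up periodic "prediction" and a reading
# that is a scaled, shifted copy of it with a gap in the middle.
t = np.linspace(0.0, 10.0, 200)
predicted = np.sin(t)
measured = 2.5 * predicted + 0.3
measured[20:30] = np.nan  # simulated data gap
scale, offset, residual = fit_axis(predicted, measured)
print(scale, offset)
```

The scale and offset convert raw instrument units to the predicted units for that axis. What is left in the residual is whatever the sun-moon calculation does not explain. Earthquakes and similar interruptions could be masked the same way as gaps before fitting, for example by setting those samples to NaN.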

I have a few ideas myself. I would like to try some of the MEMS, atom chip and other gravimeters that are getting sensitive enough to be called "gravimeter" rather than accelerometer. In fact, "seeing" the sun-moon signal is a good indication that a new technology has reached a certain level of capability. Large networks can potentially provide feedback to the JPL ephemeris process to help refine the values for GMsun and GMmoon. Longer term, these "second" instruments can probably try to track Jupiter and Venus.

My more immediate goal is to find instruments that are sensitive enough and can be logged at high sampling rates, so that "time of flight" and correlation methods can be applied to arrays of gravimeters for things like 3D imaging of earthquakes, the earth's interior, ocean currents, and atmospheric currents. It will probably take one or two generations of people learning about and using gravitational fields on a daily basis for these things to be possible. I thought I would try to share what I have learned by calibrating the superconducting gravimeter (SG) and seismometer networks this way, and what I am learning from the many new device manufacturers.

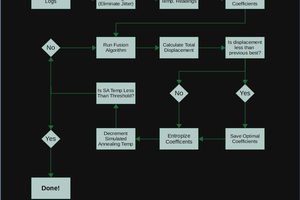

The vector tidal acceleration at an instrument location on the earth is a simple Newtonian gravity calculation. For now, it only uses the sun and moon. The gradient of the sun's gravitational potential at the instrument is an acceleration. But because the sun also accelerates the earth as a whole, you take the sun's acceleration at the instrument location and subtract the sun's acceleration at the center of the earth. Then add to that the moon's acceleration at the instrument, minus the moon's acceleration at the center of the earth. There are x, y and z values for each of these, so you are taking, for example, the x value of the sun's acceleration at the instrument and subtracting the x value of the sun's acceleration at the center of the earth. You also have to calculate the vector centrifugal acceleration at the instrument. It sounds complicated, but it is mostly software and keeping data organized.
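
The subtraction described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: all positions are in meters in an earth-centered frame whose z axis is the rotation axis, the sun and moon positions are expressed in that same frame, and the constants are rounded standard values. The function names and the example positions are hypothetical.

```python
import numpy as np

# Rounded standard values (assumptions for this sketch)
GM_SUN = 1.32712440018e20   # m^3/s^2
GM_MOON = 4.9028e12         # m^3/s^2
OMEGA_EARTH = 7.292115e-5   # rad/s, earth rotation rate

def body_acceleration(gm, body_pos, point_pos):
    """Newtonian acceleration at point_pos caused by a body at body_pos."""
    d = np.asarray(body_pos, dtype=float) - np.asarray(point_pos, dtype=float)
    return gm * d / np.linalg.norm(d) ** 3

def tidal_acceleration(gm, body_pos, station_pos):
    """Acceleration at the station minus acceleration at the earth's center.

    The earth's center is the origin of the frame used here.
    """
    at_station = body_acceleration(gm, body_pos, station_pos)
    at_center = body_acceleration(gm, body_pos, np.zeros(3))
    return at_station - at_center

def centrifugal_acceleration(station_pos, omega=OMEGA_EARTH):
    """Centrifugal acceleration, directed away from the rotation (z) axis."""
    x, y, _ = np.asarray(station_pos, dtype=float)
    return omega ** 2 * np.array([x, y, 0.0])

# Example with made-up geometry: station on the equator, moon and sun
# both directly overhead along the x axis at roughly mean distances.
station = np.array([6378137.0, 0.0, 0.0])
moon = np.array([3.844e8, 0.0, 0.0])
sun = np.array([1.496e11, 0.0, 0.0])

tide = (tidal_acceleration(GM_SUN, sun, station)
        + tidal_acceleration(GM_MOON, moon, station))
print("sun + moon tidal acceleration (m/s^2):", tide)
print("centrifugal acceleration (m/s^2):", centrifugal_acceleration(station))
```

In this geometry the moon's term comes out near one millionth of a meter per second squared and the sun's near half of that, which gives a feel for the sensitivity an instrument needs to see the signal. With real data, the station, sun and moon positions would come from the ephemeris at each timestamp, and the resulting vector would be projected onto the instrument's North, East and Vertical axes before the regression.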

Once an instrument is calibrated by comparing it to what is expected, then it can begin reporting on what it measures. The measurements can be solved for the position of the sun, assuming standard values for the moon, and solved for the position of the moon, assuming standard values for the sun. These...


RichardCollins

Debargha Ganguly


Hugh Brown (Saint Aardvark the Carpeted)


Supplyframe DesignLab


Caleb W.


For a high school student who is interested in something a bit simpler to start with, do you have a recommendation for an accelerometer that might be suitable for measuring, for example, the difference in gravity across one degree of latitude? I had a look on SparkFun, but there are so many factors (range, sensitivity, resolution) that it's difficult to know which to choose.