It is my deep belief that knowledge brings about positive change.

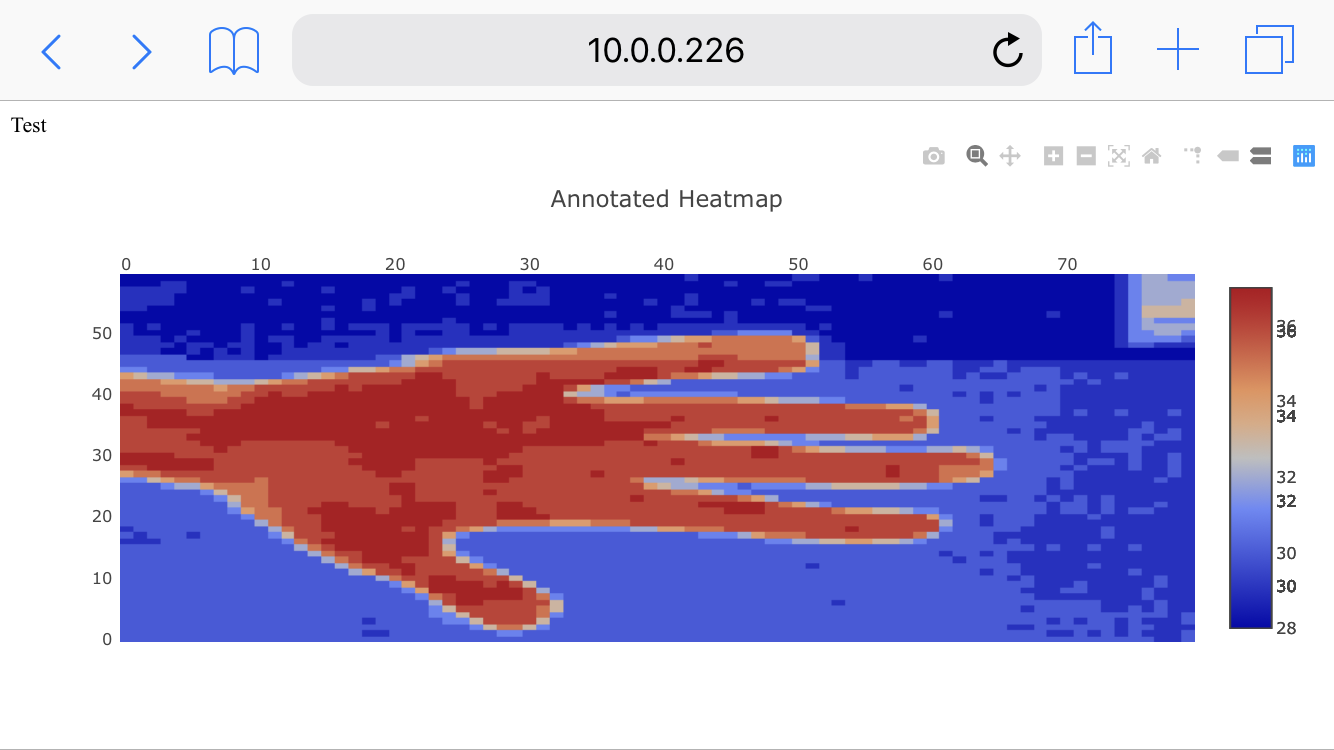

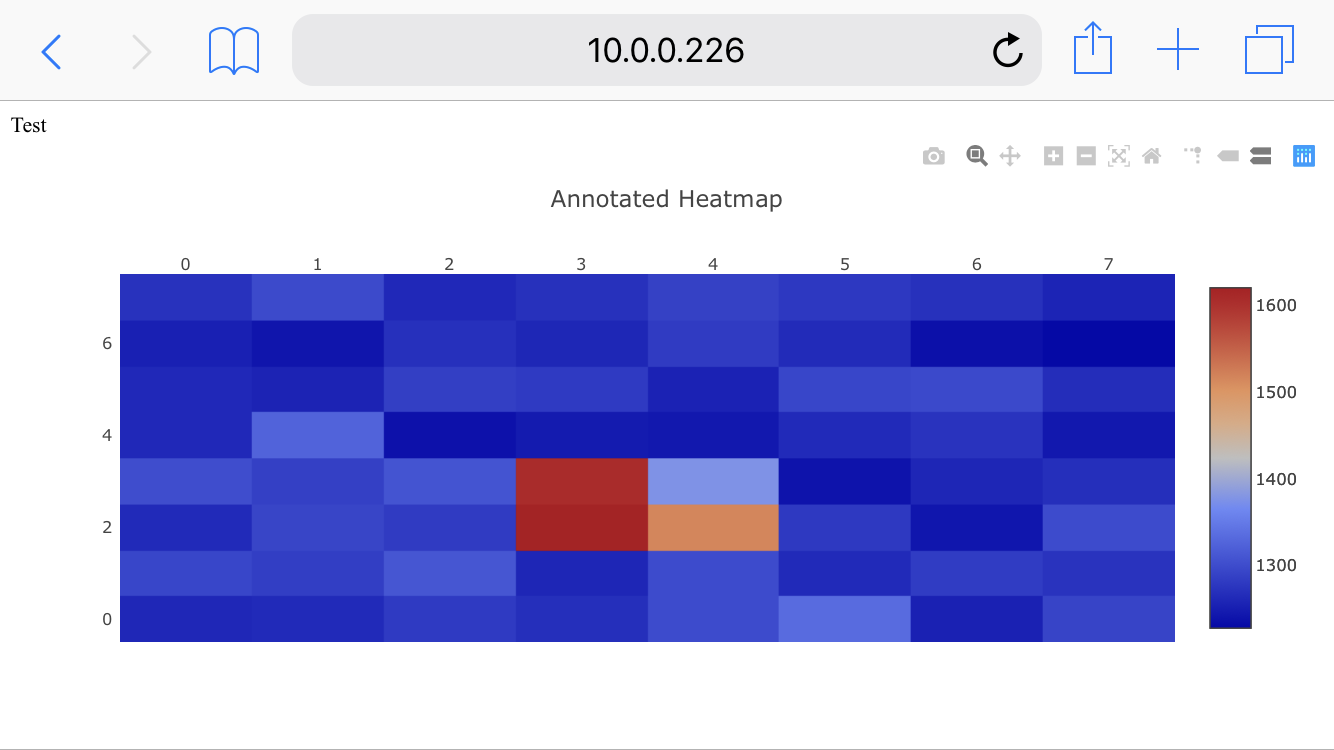

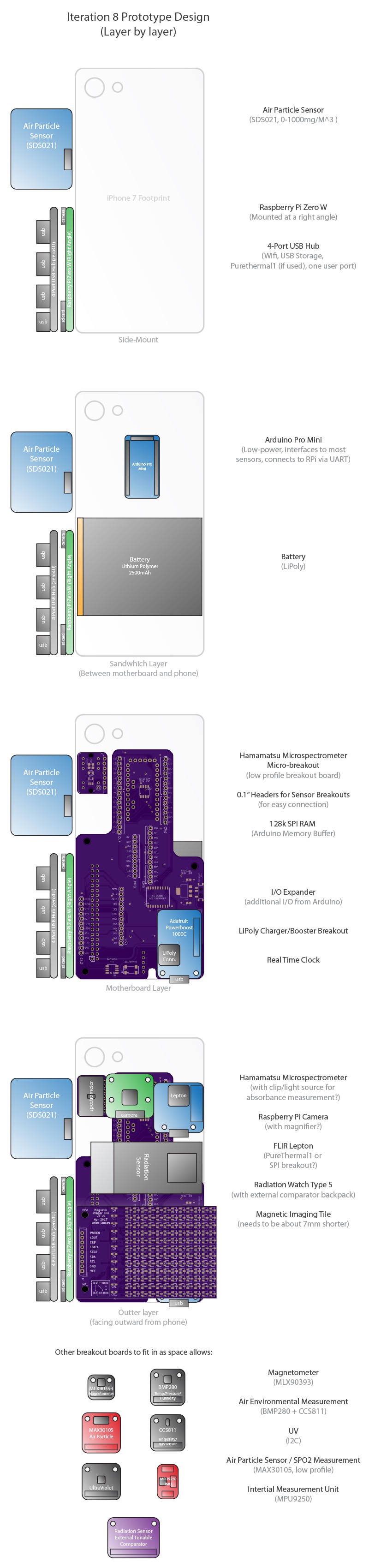

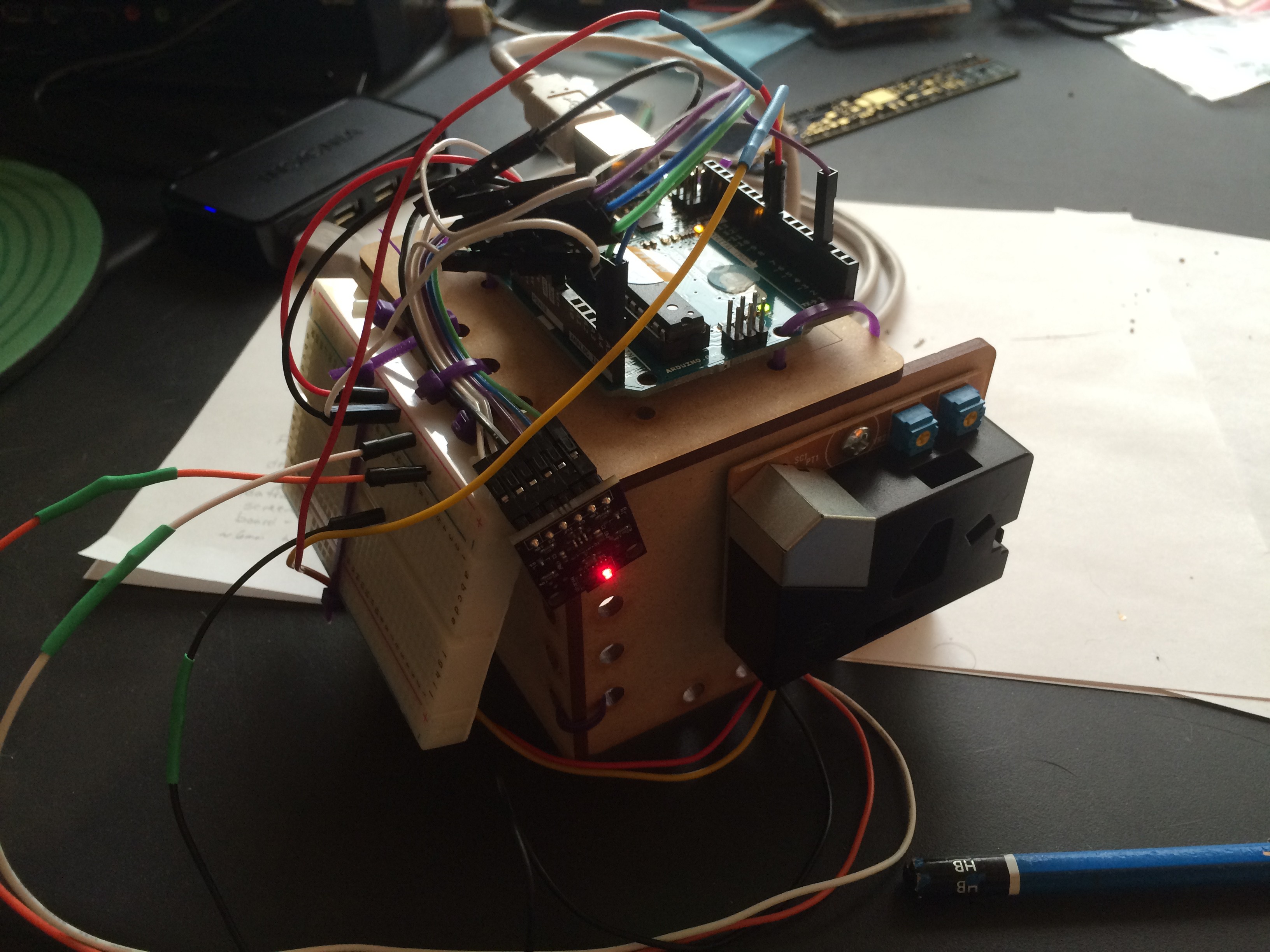

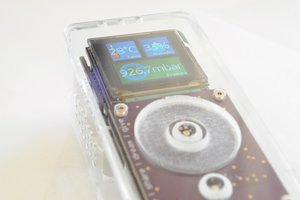

We could live in a world where the same instrument that can show a child how much chlorophyll is in a leaf could also show them how much pollution is in the air around us, or given off by one's car. As an educator and a researcher, I feel that if people could easily discover things about their worlds that were also important social topics, they would then make positive social choices, like reducing their emissions or working towards cleaner industry in their communities.

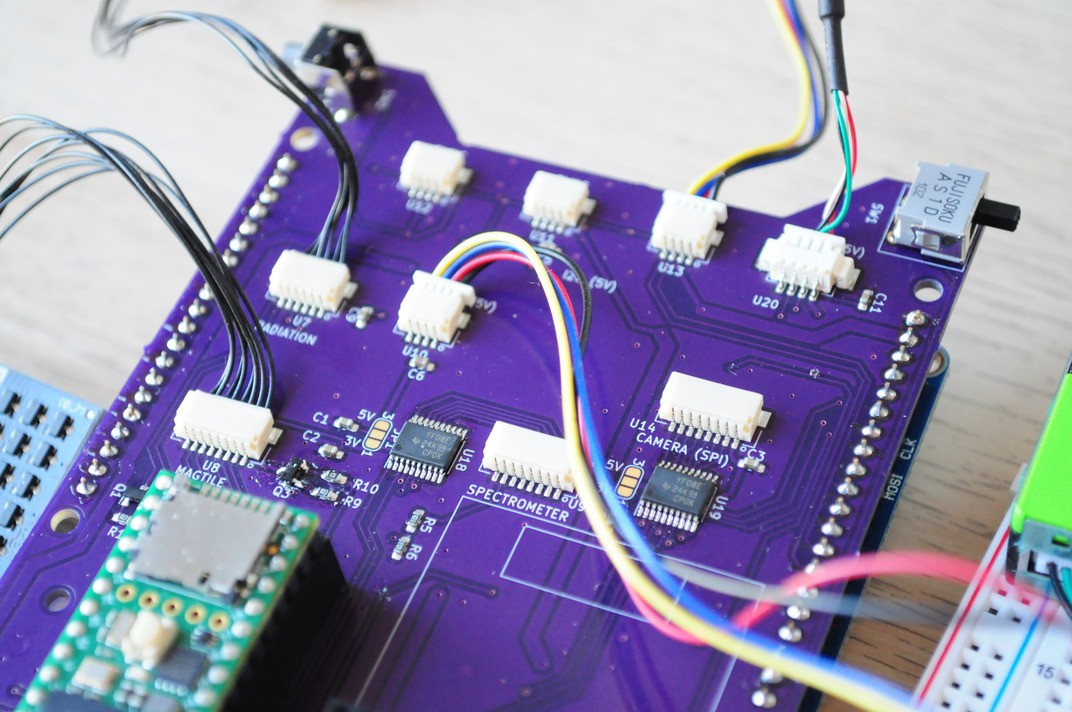

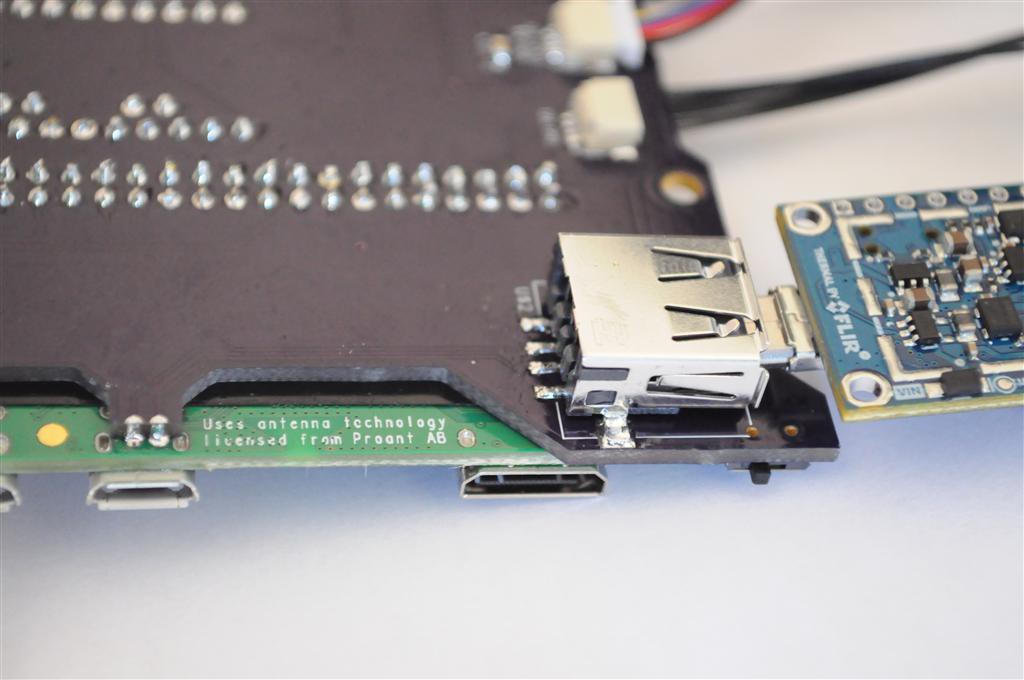

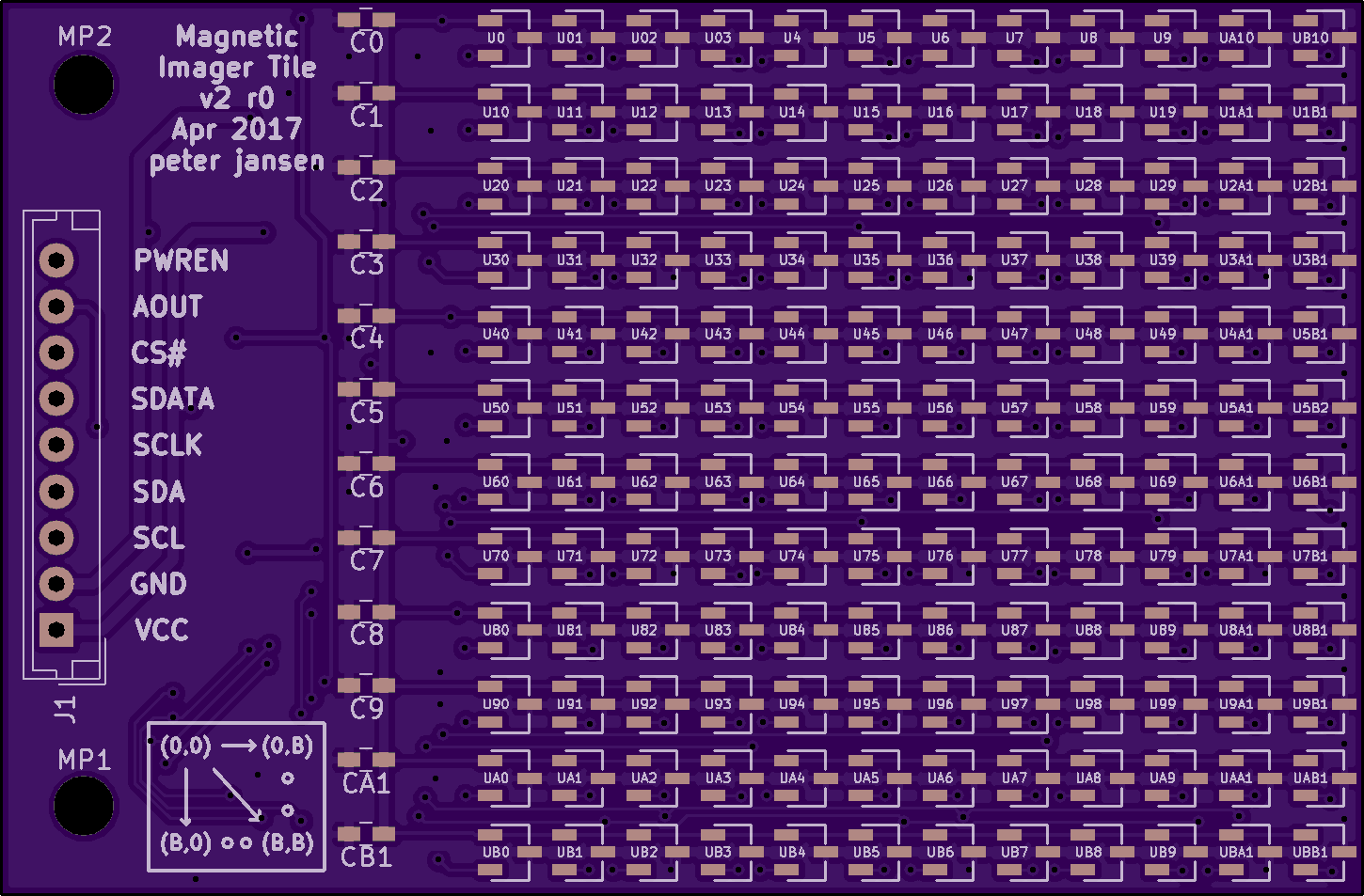

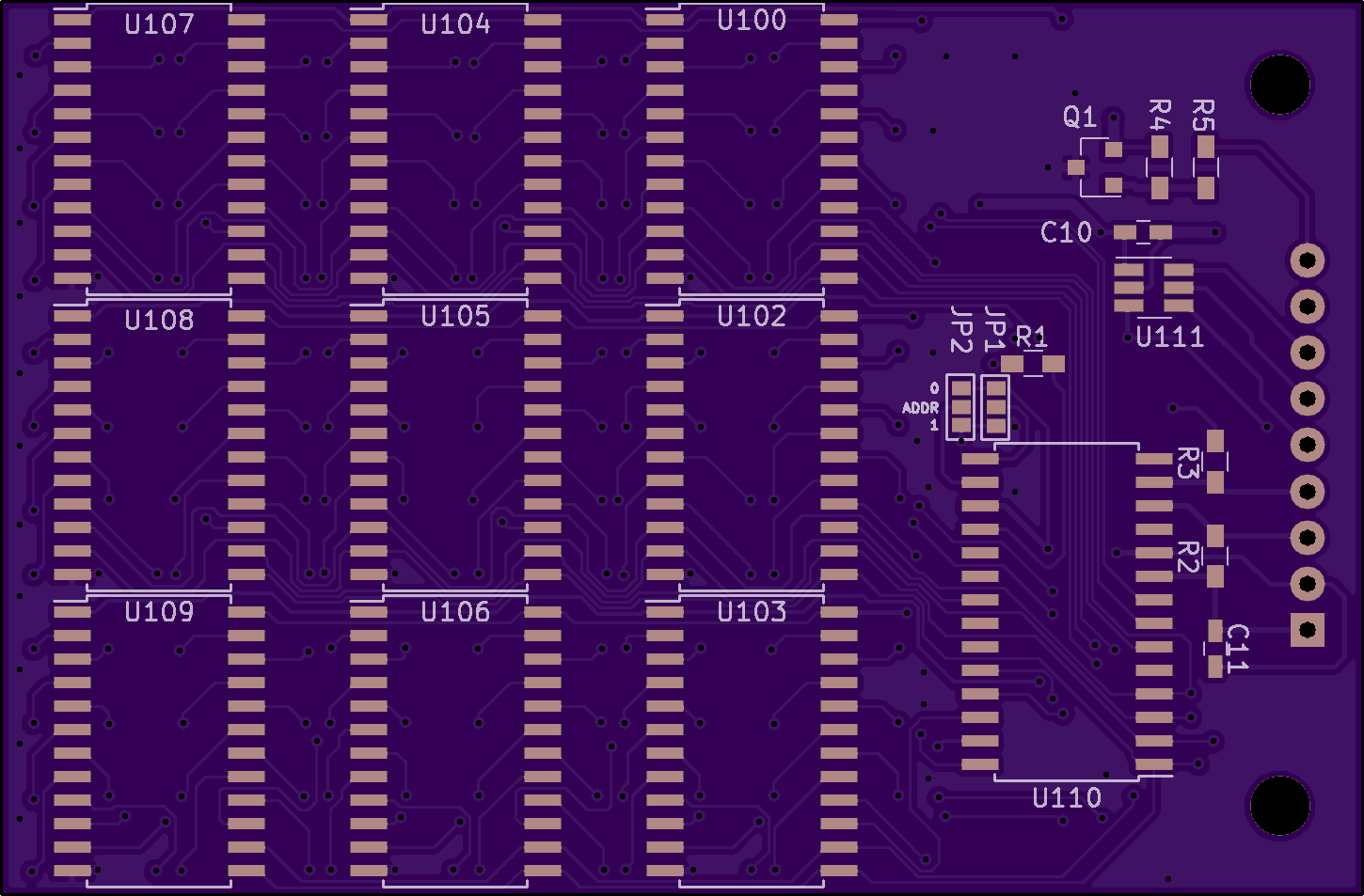

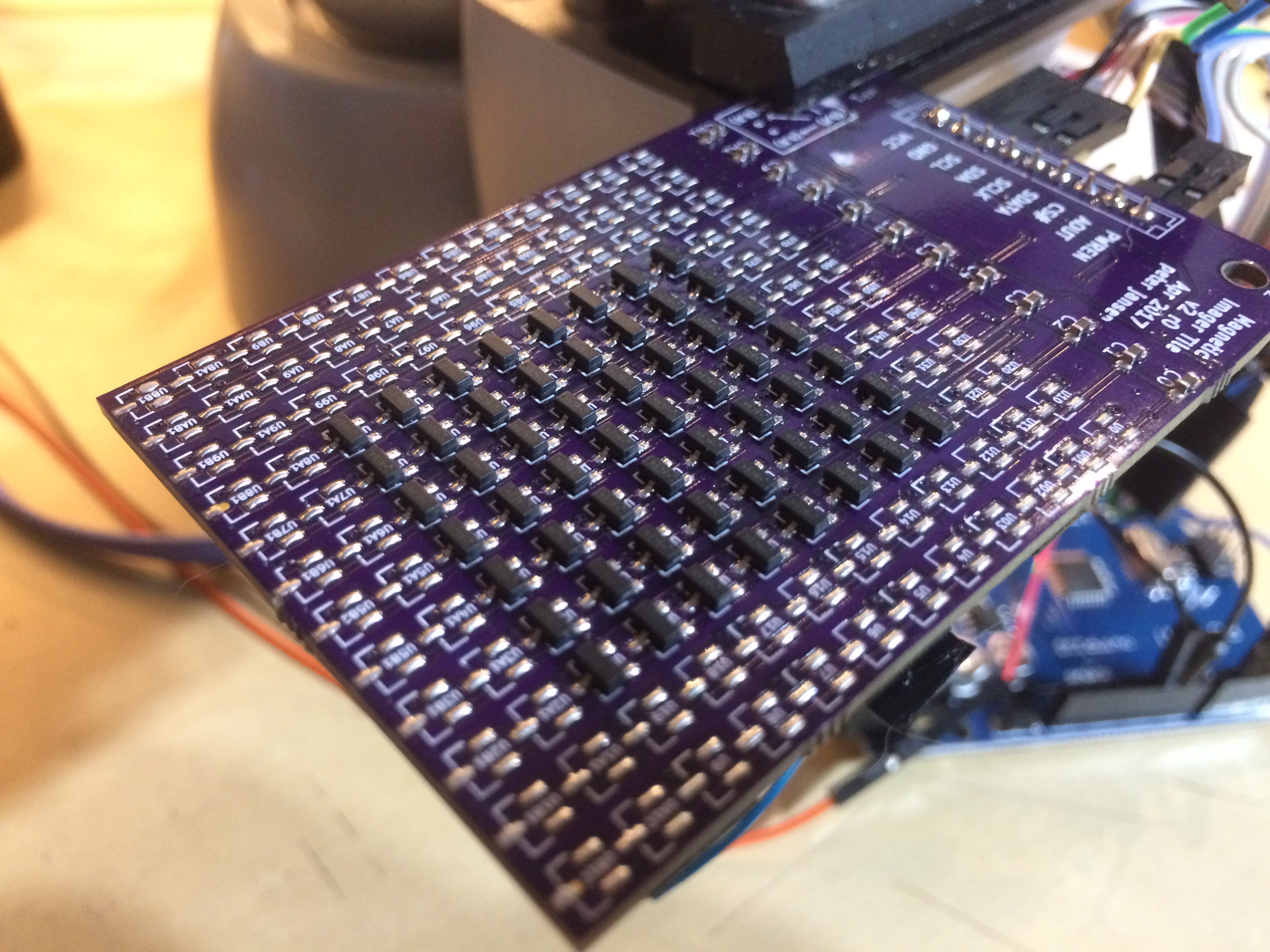

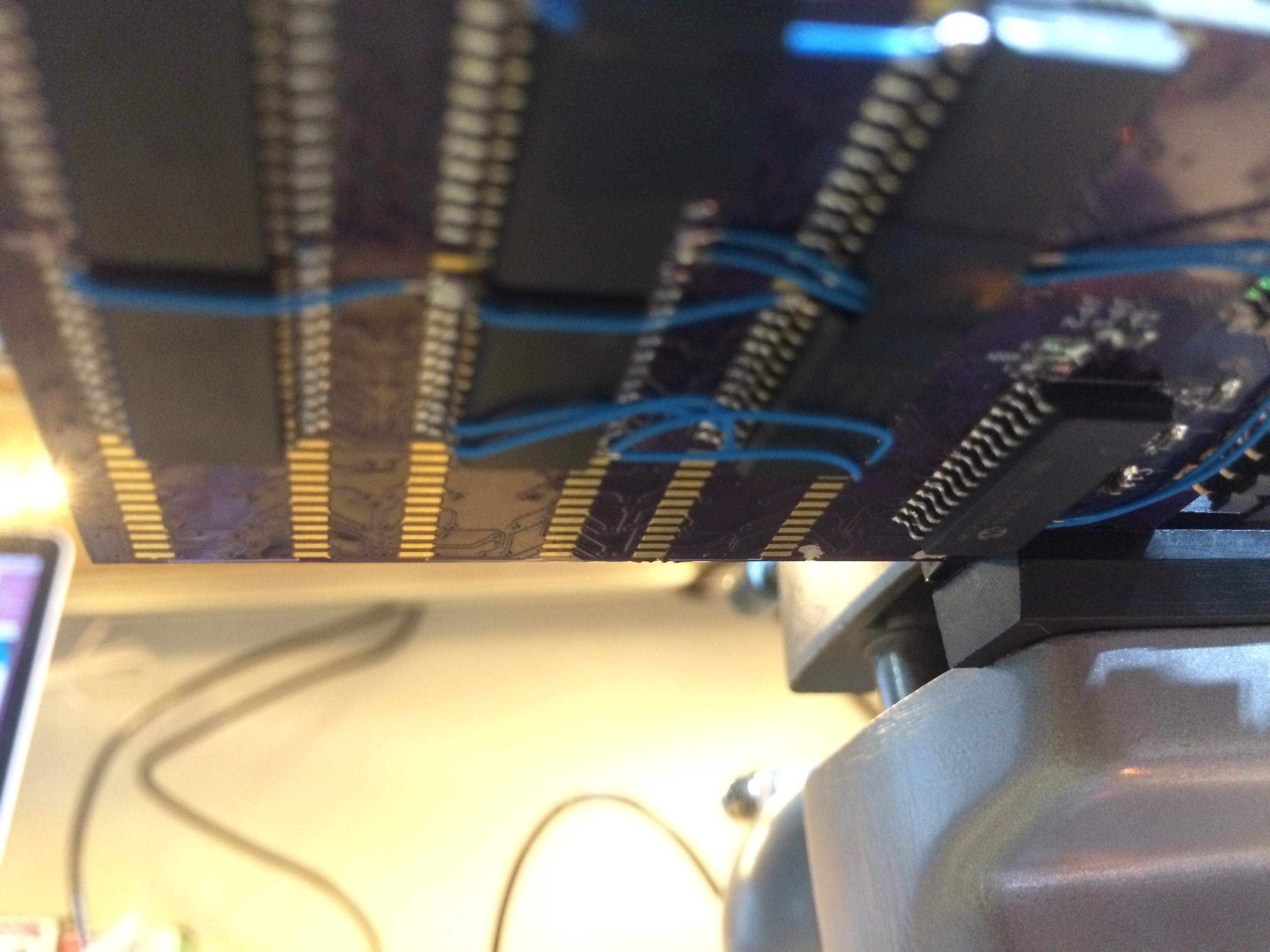

By having access to general-purpose, inexpensive sensing tools, people can learn about healthy leaves, clean air, clouds and the water cycle, and energy-efficient homes, and can visualize abstract concepts like spectra or magnetism.

With a tool for exploration, we can discover things around us that we don't already know. And that's what it's about. Little discoveries, everywhere.

peter jansen

pastcompute

Samuel Wantman

sparks.ron

Have there been any updates? Has anyone had success with building one? If so, how did it go?