Okay, this will be another smorgasbord update. Again, it's not that I haven't been doing anything; it's just that the work doesn't fall into neat little buckets like "changing the source" or "putting it on the truck" the way it did in the first weeks. So:

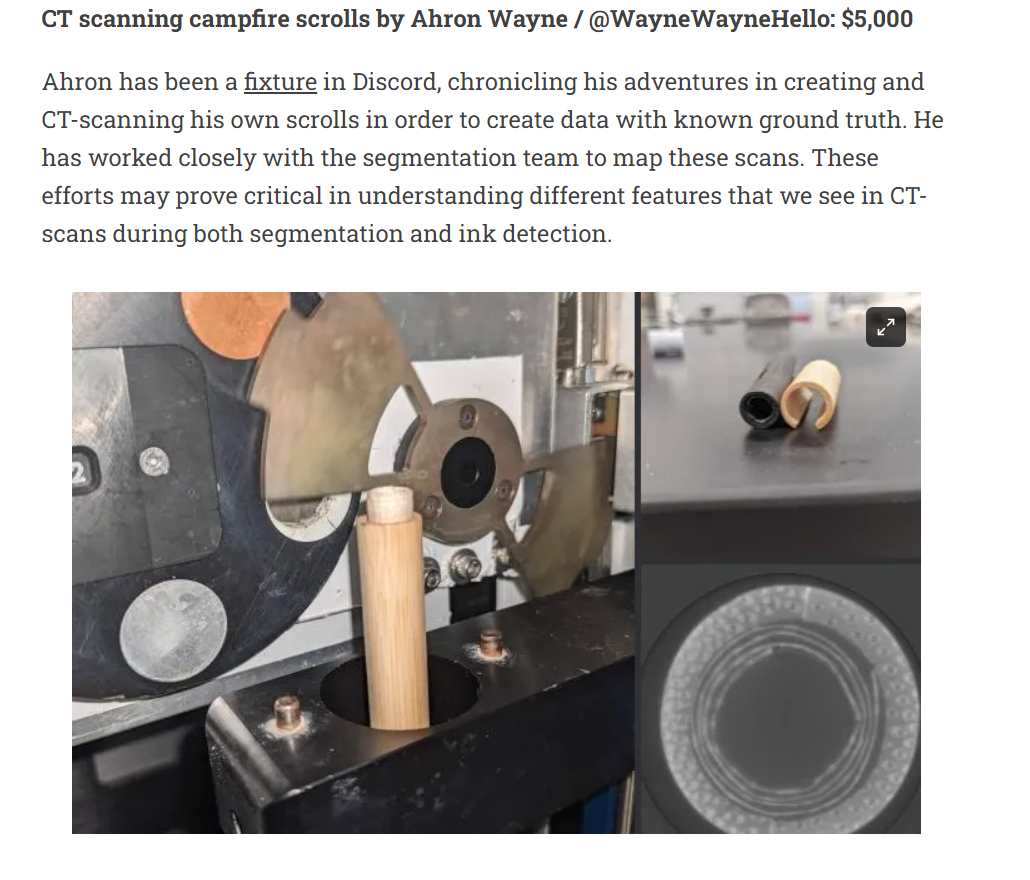

0: Guess who won a thing! https://scrollprize.substack.com/p/segmentation-tooling-2-winners Woohoo!

That just about pays for the scanner, the source, and all the other random little doodads!

1: The super blurry scans we saw earlier are a thing of the past because of... the SHORT SCAN!

From XRayPhysics.com:

In most lab CT, just like in the gif above, the object or source/detector rotate a full 360 degrees to scan the object. Which kind of makes sense, because that's what you would do with structured light scanning, or photogrammetry. But in reality, the minimum angle of rotation for a complete reconstruction is actually just 180 degrees! (+ fan angle). This is because, the x-rays go through the object. The projection of the object is the same 180 degrees apart (ignoring geometric magnification differences). You can see this in the reconstruction in the gif above; the reconstructed circle is complete at about the half way mark for the rotating source.
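To put numbers on "180 degrees + fan angle": for a flat detector centered on the central ray, the full fan angle is 2·atan(half the detector width / source-to-detector distance). The sketch below assumes that centered flat-panel geometry and only describes the central plane; the 50 mm detector and 300 mm distance in the usage line are made-up values, not this machine's.

```python
import math

def fan_angle_deg(detector_width_mm: float, source_to_detector_mm: float) -> float:
    """Full fan angle subtended by a flat detector centered on the central ray."""
    half = math.atan((detector_width_mm / 2) / source_to_detector_mm)
    return math.degrees(2 * half)

def short_scan_range_deg(detector_width_mm: float, source_to_detector_mm: float) -> float:
    """Minimum rotation for a complete fan-beam dataset: 180 degrees plus the fan angle."""
    return 180.0 + fan_angle_deg(detector_width_mm, source_to_detector_mm)

# Hypothetical geometry: a 50 mm wide detector 300 mm from the focal spot.
print(round(short_scan_range_deg(50.0, 300.0), 1))
```

A wider detector or a source closer to it increases the fan angle, so the short scan needs a bit more than a half turn.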

The rest of the 360 degrees is actually somewhat redundant! Ideally, more samples increase the signal-to-noise ratio, but it's still kind of weird to me that the full rotation is the standard, because by default some of the object is sampled twice and some is only sampled once.
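Short-scan reconstructions typically handle that uneven sampling by weighting each ray so that rays measured twice add up to a total weight of one. The classic choice is Parker weighting; the sketch below is the textbook form for an equiangular fan beam, with `beta` the source angle in [0, π + 2δ], `gamma` the ray's angle inside the fan, and `delta` half the fan angle. It is a generic illustration, not necessarily what the reconstruction software used here does internally.

```python
import math

def parker_weight(beta: float, gamma: float, delta: float) -> float:
    """Parker short-scan weight (all angles in radians).

    beta:  source rotation angle, valid in [0, pi + 2*delta]
    gamma: angle of the ray within the fan, in [-delta, delta]
    delta: half of the full fan angle
    """
    if beta < 0 or beta > math.pi + 2 * delta:
        return 0.0  # outside the short-scan range
    if beta < 2 * (delta - gamma):
        # Start of the scan: ramp up, because these rays are measured again near the end.
        return math.sin(math.pi / 4 * beta / (delta - gamma)) ** 2
    if beta <= math.pi - 2 * gamma:
        return 1.0  # rays measured only once get full weight
    # End of the scan: ramp down, complementing the ramp-up of the conjugate rays.
    return math.sin(math.pi / 4 * (math.pi + 2 * delta - beta) / (delta + gamma)) ** 2

# The ray (beta, gamma) is measured again as (beta + pi + 2*gamma, -gamma); the two weights sum to 1.
d = math.radians(5)
print(parker_weight(0.05, 0.02, d) + parker_weight(0.05 + math.pi + 0.04, -0.02, d))
```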

The problem is that in our system, the rotary axis is super wonky in an as-yet-undetermined way. This means that after the 180-degree rotation, the object does not actually return to the exact same position, and the extra projections are even worse than redundant: you've got two good datasets fighting each other. I noticed this when I chose the "short scan" reconstruction method and the reconstruction got way better, even though I was throwing away nearly half of the projections.
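One generic way to put a number on a misbehaving axis is the 0/180 check: a projection taken 180 degrees later should be the mirror image of the first one (under the same "ignoring magnification" approximation as the quote above), so the shift that best aligns the mirrored view tells you where the rotation axis sits on the detector. This is a sketch, not how the problem here was diagnosed; it uses plain cross-correlation on a single detector row and assumes attenuation profiles that are near zero outside the object, and the synthetic rows are made up. Getting different answers from different rows or angle pairs would point at a tilted or wobbling axis rather than a simple offset.

```python
import math

def best_shift(a, b, max_shift):
    """Integer shift s that maximizes sum(a[i] * b[i + s]) over the overlapping samples."""
    n = len(a)
    best_s, best_score = 0, -math.inf
    for s in range(-max_shift, max_shift + 1):
        lo, hi = max(0, -s), min(n, n - s)
        score = sum(a[i] * b[i + s] for i in range(lo, hi))
        if score > best_score:
            best_s, best_score = s, score
    return best_s

def rotation_center(row0, row180):
    """Estimate the rotation axis position (in detector pixels) from the same detector
    row at 0 and 180 degrees, using a parallel-beam approximation."""
    n = len(row0)
    mirrored = row180[::-1]  # the 180-degree view is the mirror image of the 0-degree view
    s = best_shift(row0, mirrored, n - 1)
    # A feature at c + x (0 deg) lands at n - 1 - c + x after mirroring, so s = n - 1 - 2c.
    return (n - 1 - s) / 2

def gaussian_row(n, centers, width=1.5):
    """Synthetic detector row with a Gaussian bump at each center (hypothetical test data)."""
    return [sum(math.exp(-((p - c) / width) ** 2) for c in centers) for p in range(n)]

# Simulate an axis sitting at pixel 10 of a 32-pixel row, with two point features.
n, axis, xs = 32, 10, (5, -3)
row0 = gaussian_row(n, [axis + x for x in xs])
row180 = gaussian_row(n, [axis - x for x in xs])
print(rotation_center(row0, row180))  # a perfectly centered axis would give 15.5
```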

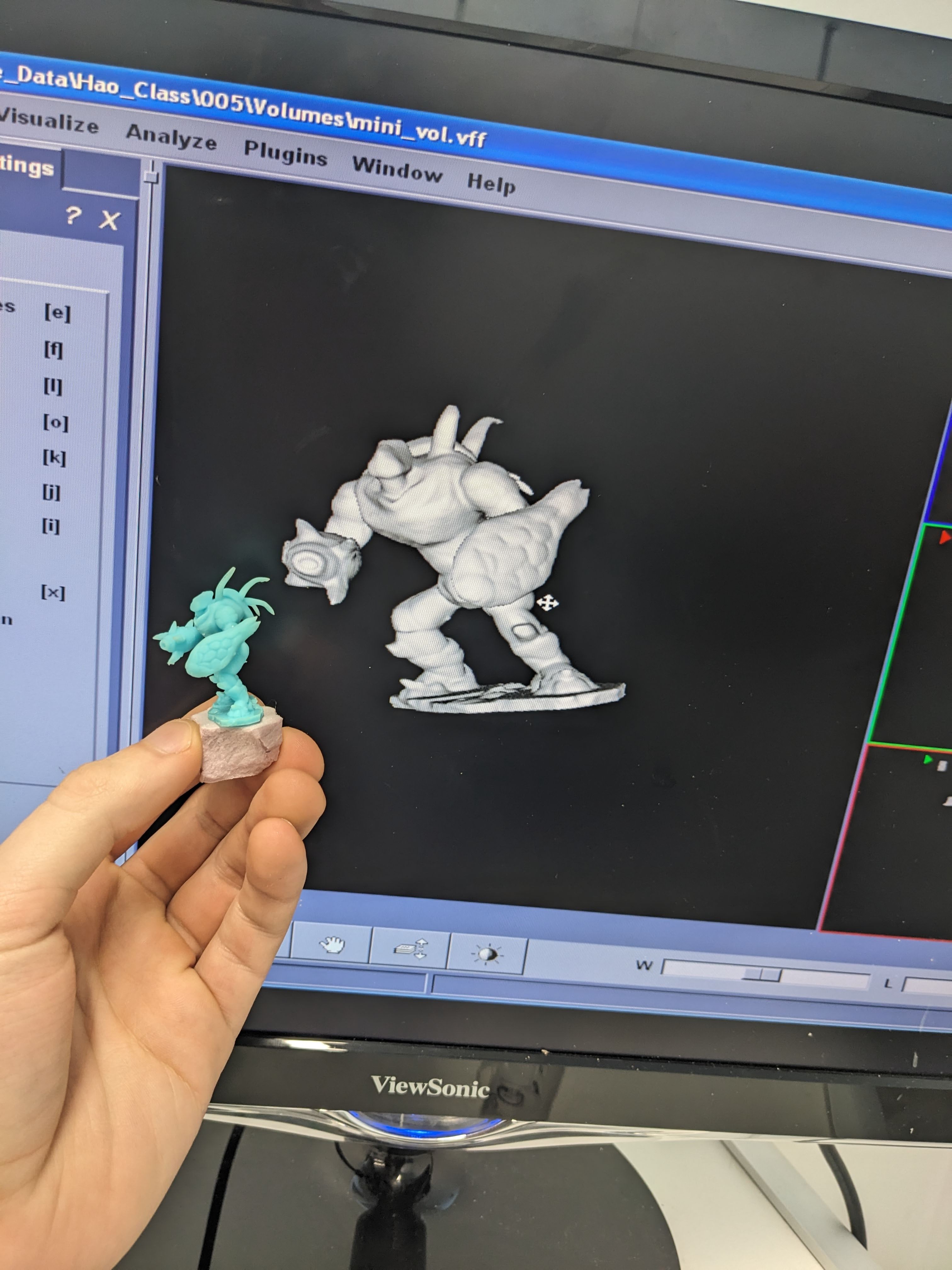

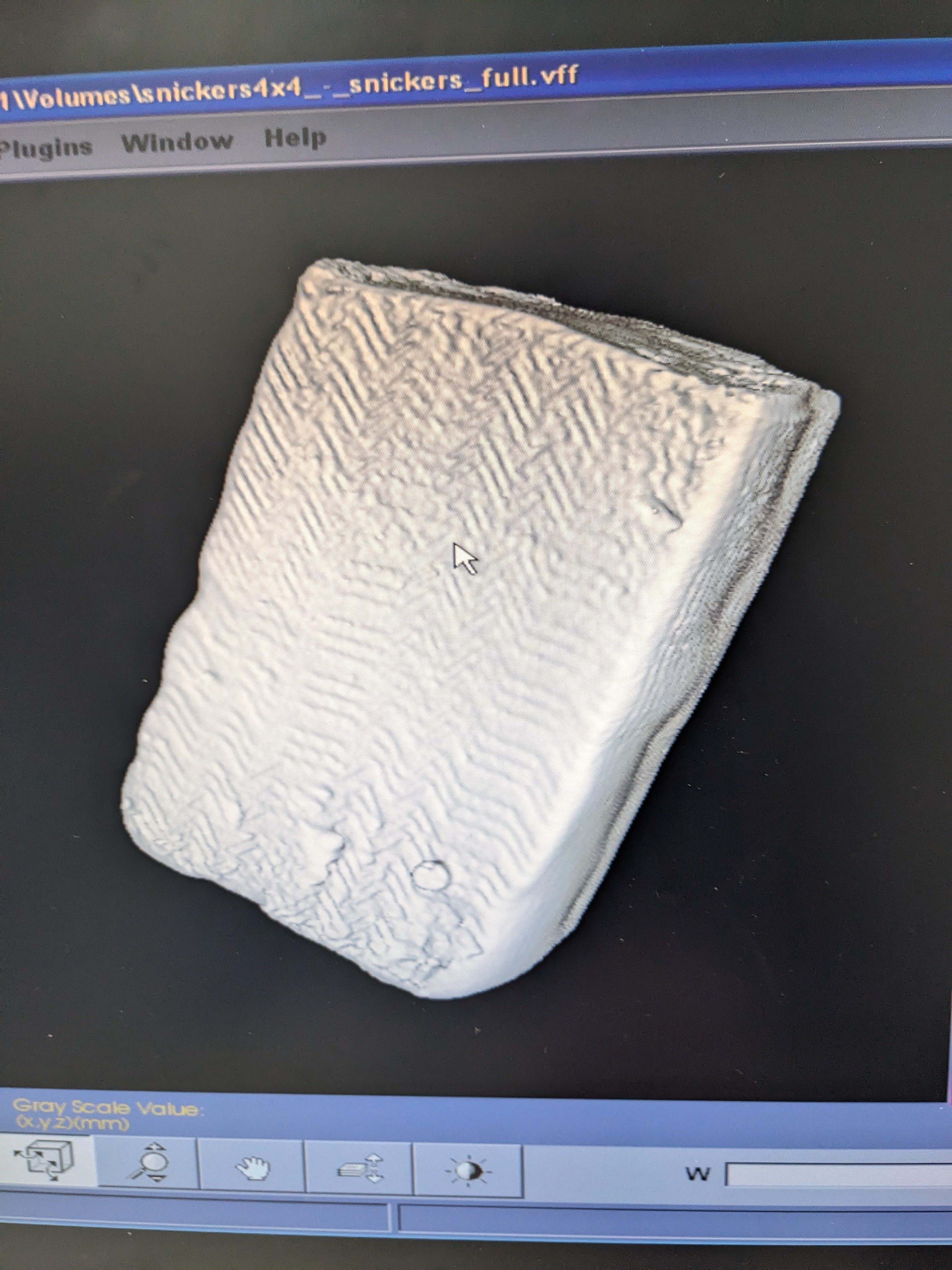

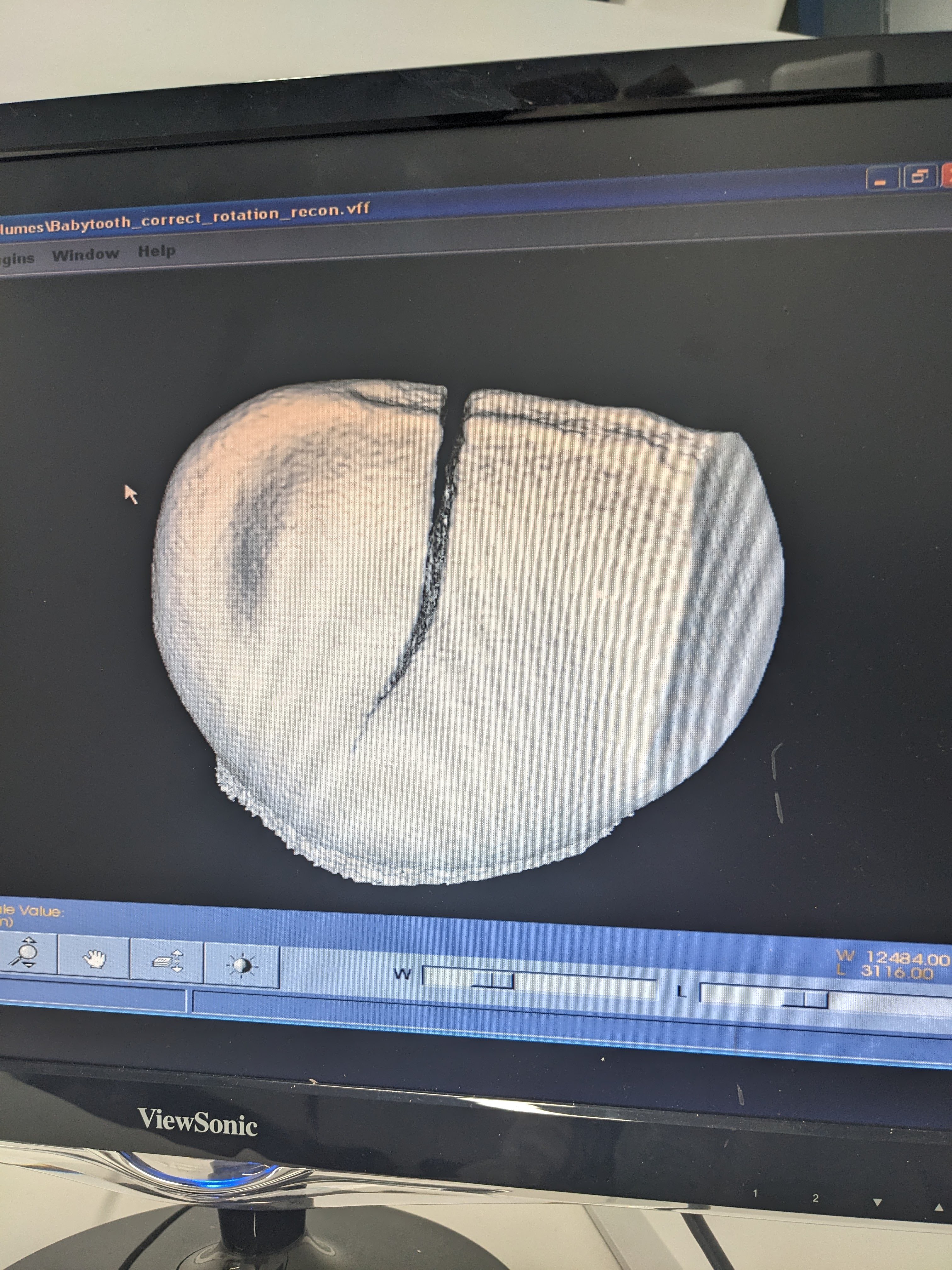

So now the scans are way, way better! And I can scan and make STLs of really important things like:

A tiny resin print, a professor's son's baby tooth, and a Snickers.

2: I've been using the scanner for 2D images a lot!

Here's a picture of an old foil Pokémon card! It's definitely not part of a project I've been working on for 2 years. I certainly haven't been collecting a large dataset so I can look inside unopened packs with simple 2D projection images. Incidentally, if you have experience with supervised convolutional neural networks for pix2pix, please reach out.

(guess who it is in the comments below!)

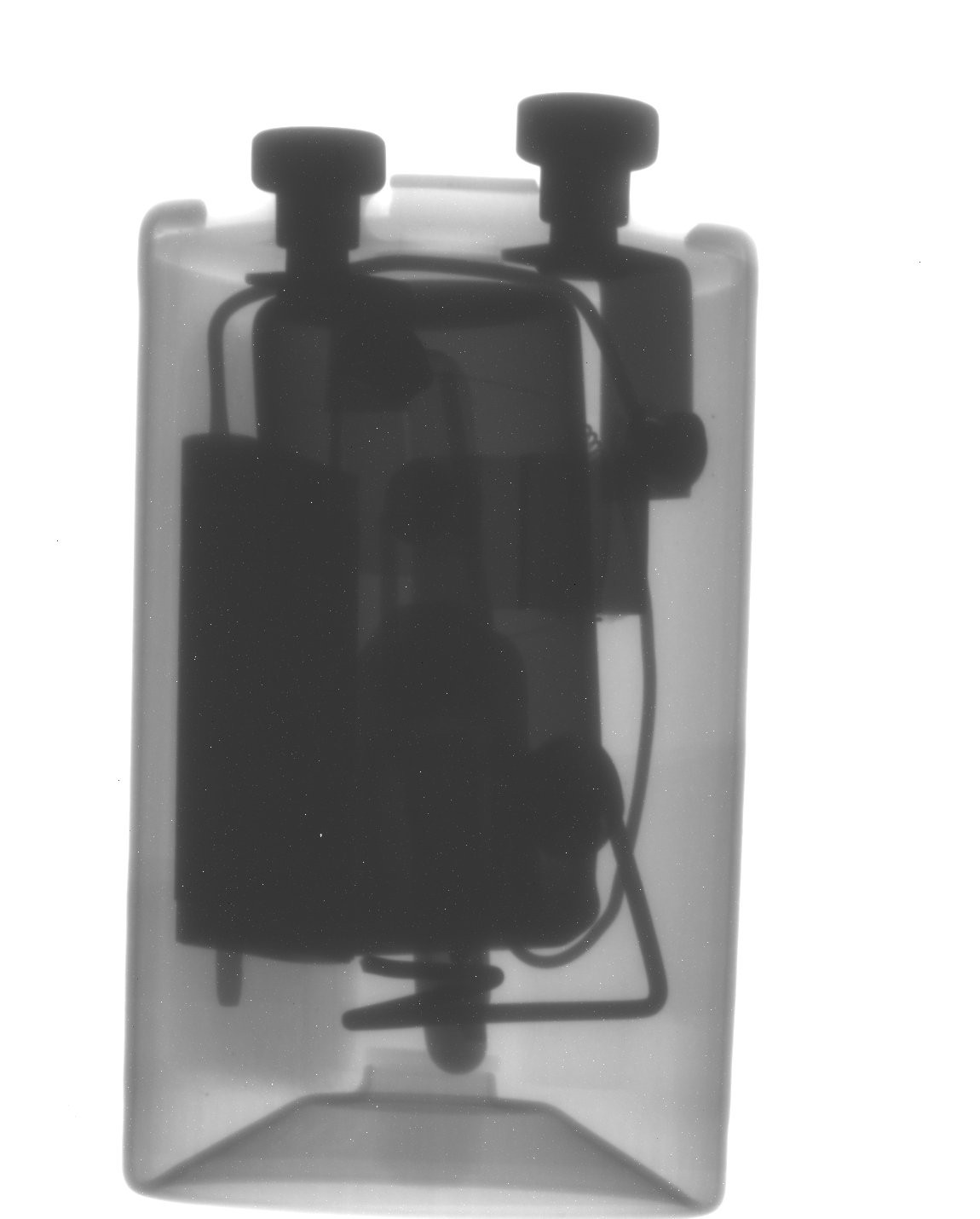

Or look at this starter thing! That's neat.
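A common first processing step for 2D radiographs like these is flat-field correction: subtract a dark frame (source off), divide by an open-beam "flat" frame, and take −log to turn transmission into an attenuation image. The sketch below assumes you have dark and flat frames from the same detector; it works on nested lists so it runs without dependencies, and it is a generic example rather than a description of the pipeline used for these images.

```python
import math

def flat_field(raw, dark, flat, eps=1e-6):
    """Return -ln(transmission) per pixel, with transmission = (raw - dark) / (flat - dark)."""
    out = []
    for r_row, d_row, f_row in zip(raw, dark, flat):
        row = []
        for r, d, f in zip(r_row, d_row, f_row):
            t = (r - d) / max(f - d, eps)  # guard against dead pixels where flat == dark
            t = min(max(t, eps), 1.0)      # clamp so the log stays finite and non-negative
            row.append(-math.log(t))
        out.append(row)
    return out

# Tiny example: one pixel sees the open beam, one sees half the beam.
print(flat_field([[110.0, 60.0]], [[10.0, 10.0]], [[110.0, 110.0]]))
```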

3: I came up with a scheme to extend the life of my source, especially when taking a series of 2D pictures, where you would otherwise have to turn the source off, open the door, put the thing in, and turn it back on. The source basically has a filament light bulb inside, and just like visible-light bulbs, the harshest thing you can do to it is turn it off and on. I'd also noticed that the power has gotten even lower than before, so I try not to push it much past 0.5 watts!
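For a sense of scale: tube power is accelerating voltage times beam current, so a 0.5 W ceiling becomes a current limit that depends on the kV setting. The voltages in the loop are example values, not this source's actual operating points.

```python
def max_current_ua(power_w: float, kv: float) -> float:
    """Beam current in microamps that reaches power_w at kv, from P = V * I."""
    # W / (kV * 1000 V/kV) gives amps, and * 1e6 gives microamps: net factor 1000 / kV.
    return power_w * 1000.0 / kv

for kv in (30, 50, 80):  # example settings only
    print(f"{kv} kV -> {max_current_ua(0.5, kv):.1f} uA")
```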

Daniel said something like "you can use the position of the tungsten shutter to know when it's safely blocking the source, and have an Arduino move it there whenever you open the door." I took this to mean: monitor the position of the tungsten shutter with an encoder and, when it's blocking the source, quickly change the voltage to 2.5 V to make it stop rotating.
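Daniel's version (park the shutter automatically whenever the door opens) boils down to a small control loop. The sketch below is in Python against a simulated shutter so it can run anywhere; the encoder counts per revolution, the blocking window, the 3.0 V "run" voltage, and the `FakeShutter` class are all hypothetical, and only the idea of commanding 2.5 V to stop the motor comes from the text. On real hardware this logic would live on the Arduino, and you'd want to allow for the wheel coasting past the window.

```python
def in_window(count: int, lo: int, hi: int) -> bool:
    """True if an encoder count lies in [lo, hi], allowing the window to wrap past zero."""
    if lo <= hi:
        return lo <= count <= hi
    return count >= lo or count <= hi

def park_shutter(read_count, set_volts, lo, hi, stop_volts=2.5, run_volts=3.0, max_reads=100_000):
    """Drive the shutter wheel until the encoder says it blocks the beam, then stop it."""
    for _ in range(max_reads):
        if in_window(read_count(), lo, hi):
            set_volts(stop_volts)  # stop rotating with the tungsten in the beam
            return
        set_volts(run_volts)       # keep turning toward the blocking position
    set_volts(stop_volts)
    raise RuntimeError("shutter never reached the blocking window; check the encoder")

class FakeShutter:
    """Hypothetical stand-in for the motor + encoder: advances one step per read while driven."""
    def __init__(self, count, counts_per_rev=1000, step=7, stop_volts=2.5):
        self.count, self.cpr, self.step = count, counts_per_rev, step
        self.stop_volts = stop_volts
        self.volts = stop_volts

    def read_count(self):
        if self.volts != self.stop_volts:
            self.count = (self.count + self.step) % self.cpr
        return self.count

    def set_volts(self, v):
        self.volts = v

# Usage: the blocking window wraps past zero (950..50); call this from a door-open handler.
shutter = FakeShutter(count=300)
park_shutter(shutter.read_count, shutter.set_volts, lo=950, hi=50)
print(shutter.count, shutter.volts)
```

The `max_reads` guard matters more than it looks: if the encoder fails, you want a loud error rather than a motor spinning forever with the door open.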

Hey, it works! Maybe I'll do Daniel's idea eventually. Something like this is clearly what the original designers intended when they put an encoder on the tungsten shutter. It's so much better than turning the source off and on, and it REALLY works: the radiation meter reads no more than background inside the machine when the source is covered (compared to ~10,000x more when it's uncovered). Unfortunately, full CT scans still force you to turn the source off and on, but I'll figure out a way around that.

Anyway, that's where we are now. I haven't even touched on how I'm using external image processing with ImageJ for despeckling or deep-learning noise reduction, or how the machine was used to teach a guest lab for a medical imaging class. But yeah! The machine is getting a lot of use! A good buy!
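For reference, ImageJ's Despeckle command is a 3×3 median filter: each pixel is replaced by the median of itself and its eight neighbors, which knocks out isolated hot or dead pixels while keeping edges fairly sharp. A dependency-free sketch of the same idea follows; it handles the image border by clamping coordinates to the nearest edge pixel, which is an assumption and may differ from ImageJ's own border handling.

```python
from statistics import median

def despeckle(img):
    """3x3 median filter on a 2D list of numbers; border pixels reuse the nearest edge values."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            neighborhood = [
                img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            row.append(median(neighborhood))
        out.append(row)
    return out

# A flat image with one hot pixel: the outlier disappears.
img = [[10] * 5 for _ in range(5)]
img[2][2] = 1000
print(despeckle(img)[2][2])
```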

Ahron Wayne

Discussions

Is this project essentially over? I haven't seen an update in months and I'm on the edge of my seat!!

It's not over by any means, but my updates are definitely a lot less frequent! That's not because I've stopped using the machine or trying to improve it; it's because things have settled in rather comfortably and are far past the easy "here's what I fixed in the last week" phase. I've kind of accepted that the machine will never really be a modern hardware powerhouse, and a lot of the gainz now come from modern software magic and careful application.

Is that... Nidoking (Base Set 11) (or its Base Set 2 reprint)?

Ding Ding Ding! Nice job!

Woohoo indeed! Really neat legacy data rescue project!