List of changes:

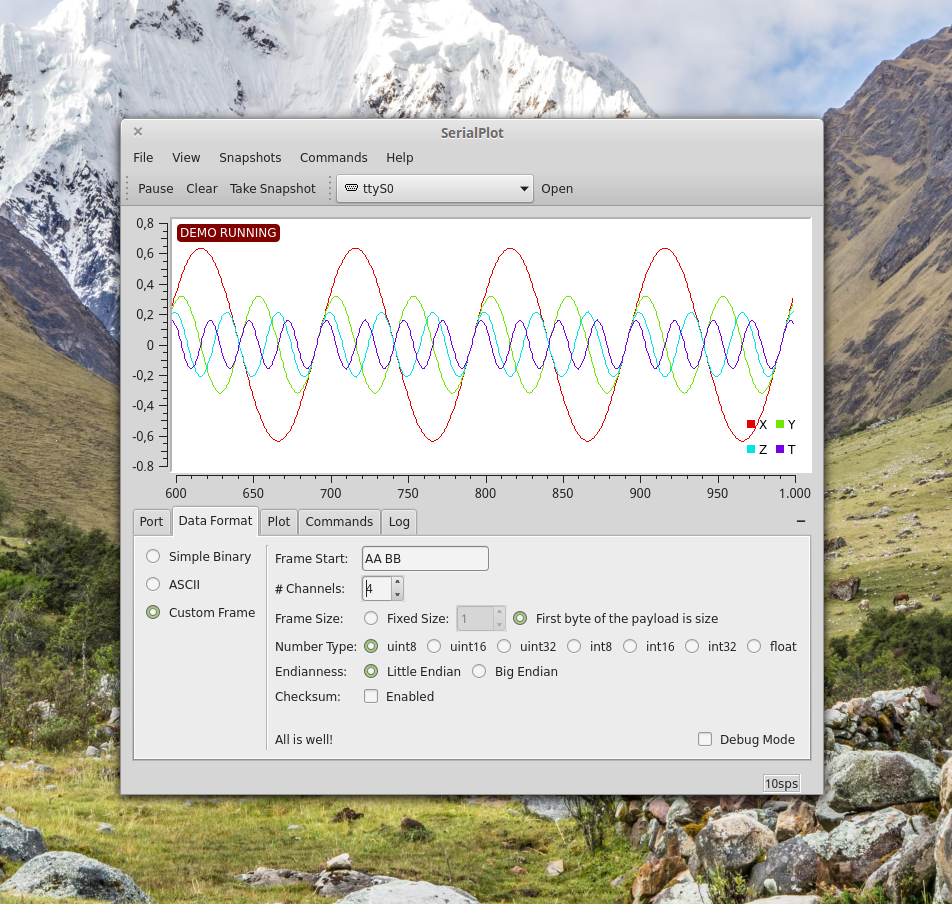

- Custom frame format support added.

- Command labels and commands menu added.

- Legend added.

- Skip sample button (for Simple Binary reader) added.

- ASCII mode can determine number of channels automatically.

- Changed shortcut for "Open Port" to F12 (was F2).

- Numerous GUI improvements and bug fixes.

The most important addition in this release is the "Custom Frame" data format selection. With this option you can define your own binary data format. A custom frame format is defined as follows:

- Define a frame start sequence (sync word). This is defined as an array of bytes and should be at least 1 byte long. You have to have a sync word!

- Define number of channels.

- Define the frame size. It can be a fixed number, or you can transfer the frame size at the beginning of each frame, right after the sync word. Note that the frame size byte doesn't count itself.

- Define number type.

- Define endianness.

- Enable/disable the checksum byte. When enabled, a checksum byte is sent at the end of each frame. It should be the least significant byte of the sum of all the sample bytes, that is, all bytes between the frame size byte and the checksum byte.
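As a rough illustration of the layout described above, here is a minimal Python sketch that assembles one frame on the sending side. The function name `build_frame`, the example sync word `0xAA 0xBB`, and the use of `struct` format strings to express number type and endianness are assumptions made for this example; none of them come from serialplot itself. The sketch also assumes that the size byte counts only the sample bytes and not the checksum.

```python
import struct


def build_frame(samples, sync=b"\xAA\xBB", number_format="<h",
                send_size=True, send_checksum=True):
    """Assemble one custom frame: sync word, optional size byte,
    samples, optional checksum byte.

    number_format is a struct format string for ONE sample, e.g.
    "<h" = little-endian int16, ">f" = big-endian float32.
    """
    if len(sync) < 1:
        raise ValueError("a sync word of at least 1 byte is required")

    # One sample per channel, packed with the chosen type and endianness.
    payload = b"".join(struct.pack(number_format, s) for s in samples)

    frame = bytearray(sync)
    if send_size:
        if len(payload) > 255:
            raise ValueError("payload does not fit in a single size byte")
        # Assumption: the size byte counts the sample bytes only.
        frame.append(len(payload))
    frame += payload
    if send_checksum:
        # Least significant byte of the sum of all sample bytes.
        frame.append(sum(payload) & 0xFF)
    return bytes(frame)
```

For two little-endian 16-bit samples `1` and `2`, `build_frame([1, 2])` yields the bytes `AA BB 04 01 00 02 00 03`.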

I plan to write a detailed tutorial on how to use this feature, with some code examples, in the near future.

Installation

New for this release, I've created an Ubuntu PPA, which makes installing the software and its updates very easy. Here is how:

    sudo add-apt-repository ppa:hyozd/serialplot
    sudo apt-get update
    sudo apt-get install serialplot

If you want to access the .deb packages directly, you can find them in the sidebar links.

Here is the Windows setup, by the way. Still 64-bit only at the moment.

Discussions


How about 100,000 cycles? 86,400 cycles would give you 24 hours. A workaround that could also be considered an enhancement would be to have the program automatically take a snapshot once the end of the current cycle is reached, and to keep doing so as long as the program is open. This feature could be toggled on and off as desired. You already have a button that creates a snapshot when pressed. I envision a timer function that would correspond in length to the number of cycles selected. Once the time expires, it triggers a snapshot and the timer restarts. The reason I call it an enhancement is that you would have more resolution with each individual snapshot than with one very long set of data, unless you have the ability to zoom in on a particular area of the graph. If the snapshot could be converted into an image file, a photo editor could stitch the individual snapshots together.

And if I can have everything! I would like the program to be able to manipulate the data. As an example, let's say I'm monitoring the voltage on a battery as it discharges through a 10 ohm resistor. One trace would plot voltage, and a second trace would be a math function of the first trace (in this case power would be voltage squared divided by the resistance of 10 ohms). Then if power could be integrated over time, that would tell you how many watt-hours a battery can hold.
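Until something like this exists in the program, the calculation can be done on exported data. The following is a small Python sketch of the arithmetic described above, assuming evenly spaced voltage samples; the function name `energy_wh` and the trapezoidal integration are choices made for this example, not a serialplot feature.

```python
def energy_wh(voltages, resistance_ohm, sample_period_s):
    """Energy dissipated in a resistor, in watt-hours.

    voltages: evenly spaced voltage samples (volts)
    resistance_ohm: load resistance (ohms)
    sample_period_s: time between two samples (seconds)
    """
    # Second "trace": instantaneous power P = V^2 / R for every sample.
    powers = [v * v / resistance_ohm for v in voltages]

    # Integrate power over time with the trapezoidal rule (result in joules).
    joules = sum((a + b) / 2.0 * sample_period_s
                 for a, b in zip(powers, powers[1:]))

    # 1 Wh = 3600 J
    return joules / 3600.0
```

For example, a constant 10 V across 10 ohms is 10 W, so one hour of samples at 1 sample per second gives 10 Wh.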

Another powerful option, at least for battery testing, would be the ability to load multiple snapshots into the same window. For example, load the snapshot of a battery's first discharge cycle into a window and then load the snapshot of the same battery at discharge cycle number 500 into the same window, so that the change in battery performance can be readily determined.

I hope you find these suggestions useful. I have no idea how difficult they would be to implement for someone versed in coding. For me, it would take ages. I'm not a programmer, but I attempted it anyway.


@joegrowswell I already had some stuff implemented, so I just released a new version. If you are on Windows, download it from here: https://bitbucket.org/hyOzd/serialplot/downloads .

The 'auto snapshots' idea sounds easy to implement, but I don't like it from a UI perspective. Especially with a high sample rate, the snapshot list could quickly get out of control.

'math' is another thing that I'm planning to implement. But it's going to take a while before I'm there : )

What you are describing in your last suggestion is more related to plot manipulation. At the moment my priority is real time functionality. Remember you can export snapshots as CSV files. Afterwards you can use Excel/Calc to create charts as you wish. There is also more specific software such as https://kst-plot.kde.org/

Thanks a lot for the suggestions.


The new version is working for me, so thanks! I like your suggestion to use the CSV file in Excel. I have one last question. Will there be a version of this software I can run on a Raspberry Pi?


@joegrowswell What distro are you using on the Pi?


I just got my Raspberry Pi 3 Model B and haven't set it up yet. What distro do you recommend?


@joegrowswell I don't have any experience with the Pi. I would probably use something Debian based, because I only have experience with Ubuntu/Debian based distros : ) I asked this question because I can enable the ARM builds on the Launchpad PPA and you can install the Debian packages from there. For other distros (not Debian based) you will have to compile serialplot from the source code (it's not that hard).


I would find it useful if the number of samples could be expanded beyond 10,000. At 1 cycle per second 10,000 cycles gives me 2.78 hours of data capture. I would like something more like a week's worth of data capture. Is this reasonable? Otherwise, I'm very pleased with your program. I spent an entire day and a half trying to create a program that would do what this does (and I failed) before I stumbled on this. I learned a lot in the process, though.
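The numbers in this thread are easy to check. Here is a tiny Python sketch of the arithmetic; the helper names `capture_hours` and `samples_for_duration` are made up for this example.

```python
def capture_hours(num_samples, samples_per_second):
    """How many hours of data a buffer of num_samples holds."""
    return num_samples / samples_per_second / 3600.0


def samples_for_duration(hours, samples_per_second):
    """How many samples are needed to cover the given number of hours."""
    return round(hours * 3600 * samples_per_second)
```

At 1 sample per second, `capture_hours(10_000, 1)` is about 2.78 hours, a full day needs 86,400 samples, and a week needs `samples_for_duration(7 * 24, 1)`, which is 604,800 samples.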


@joegrowswell You read my mind. "Capturing a week's worth of data" is my vision for this software. But it's not easy. I'm still in the planning stage. I want to do it right.

In the short term I could increase the maximum number of samples. What do you think a good number would be? I thought 10,000 would be enough for most purposes, guess I was wrong : )

Removing the limit is out of the question though (at least with the current code). If the user accidentally enters a very big number, the application could try to allocate too much memory and mess up the system.


OK, after some experimentation I've increased the maximum number of samples to 1 million. Plotting gets a little slow, but at a low sample rate such as 1 sps it shouldn't be an issue.

Can you compile from the source?


No, I don't know what that means. I tried uninstalling the program and reinstalling it. The limit is still 10,000.


You could use dynamic memory allocation to accommodate the data size. That means using pointers, keeping track of the data size etc.

E.g. use 10,000 for the initial fixed size. Read in data. Once it reaches that size, reallocate the memory block for 20,000. Repeat.
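The growth strategy suggested here can be sketched in a few lines. The example below is Python rather than C for brevity, so the copy that `realloc()` would perform is written out by hand; the class name `GrowableBuffer` and its members are hypothetical and only illustrate the idea of doubling the capacity when the buffer is full.

```python
class GrowableBuffer:
    """Sample buffer that doubles its capacity whenever it runs full."""

    def __init__(self, initial_capacity=10_000):
        if initial_capacity < 1:
            raise ValueError("initial_capacity must be at least 1")
        self._data = [0.0] * initial_capacity  # pre-allocated storage
        self._size = 0                         # number of samples in use
        self.reallocations = 0                 # how often the buffer grew

    @property
    def capacity(self):
        return len(self._data)

    def __len__(self):
        return self._size

    def append(self, sample):
        if self._size == len(self._data):
            self._grow()
        self._data[self._size] = sample
        self._size += 1

    def _grow(self):
        # Allocate a block twice as large and copy the old contents over,
        # which is roughly what realloc() does when it cannot grow in place.
        new_data = [0.0] * (2 * len(self._data))
        new_data[:self._size] = self._data[:self._size]
        self._data = new_data
        self.reallocations += 1

    def samples(self):
        return self._data[:self._size]
```

Because the capacity doubles each time, appending ten samples to a buffer that starts at four slots needs only two reallocations (4 to 8, then 8 to 16).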


That's the tip of the iceberg : ) I don't want to just capture data in snapshots. I also want to display it seamlessly. And I want to do this on the scale of GBs. Saving to and loading from disk should be supported as well. It's actually a must if we are going to have GBs worth of data collected and displayed.


It doesn't mean that the processing is done in batches. The memory reallocation can happen transparently while you are reading in data. It just means that instead of giving up when the array is full, your program simply asks for more memory. The library call gets the memory and copies the contents over. Pretty much all programs and new languages out there use dynamic memory allocation. Very rarely would you see a program (outside of crippled demos) running out of memory.

I have written programs that do that using the standard C library and they scale quite nicely. Mine can index an HDD with dynamic allocation and do so without pause.


> The library call gets the memory and copies the contents over.

That's not a good idea. Copying would take a considerable amount of time. To achieve the level of scalability I want, I have to divide the data into chunks.

There are also some UI considerations I have been thinking about. I don't want the user to be surprised by the memory usage. I'm thinking about setting a soft limit for RAM usage, something around a couple hundred megabytes. There would also be a 'recording option'. When the user enables recording, they would choose where to store the recorded data. Data is recorded in chunks, each chunk to a separate file, with an 'index file' containing the list of chunks and some information about the recording. Moreover, the user should be able to easily open a recording for browsing, and maybe to continue recording.
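To make the chunk-plus-index idea more concrete, here is a minimal Python sketch. It is only an illustration of the plan described above, not how serialplot works: the class name `ChunkedRecorder`, the `chunk_NNNNNN.bin` file names, the JSON `index.json` file, and the choice of little-endian 64-bit floats are all assumptions made for the example.

```python
import json
import struct
from pathlib import Path


class ChunkedRecorder:
    """Writes samples to fixed-size chunk files plus an index file."""

    def __init__(self, directory, chunk_samples=1000):
        self.directory = Path(directory)
        self.directory.mkdir(parents=True, exist_ok=True)
        self.chunk_samples = chunk_samples
        self.pending = []   # samples not yet written to disk
        self.chunks = []    # index entries, one per chunk file

    def add(self, sample):
        self.pending.append(float(sample))
        if len(self.pending) >= self.chunk_samples:
            self.flush()

    def flush(self):
        """Write pending samples as a new chunk and update the index."""
        if not self.pending:
            return
        name = f"chunk_{len(self.chunks):06d}.bin"
        data = struct.pack(f"<{len(self.pending)}d", *self.pending)
        (self.directory / name).write_bytes(data)
        self.chunks.append({"file": name, "samples": len(self.pending)})
        self.pending = []
        index = {"chunks": self.chunks}
        (self.directory / "index.json").write_text(json.dumps(index))


def load_recording(directory):
    """Read all samples of a recording back, chunk by chunk."""
    directory = Path(directory)
    index = json.loads((directory / "index.json").read_text())
    samples = []
    for entry in index["chunks"]:
        raw = (directory / entry["file"]).read_bytes()
        samples.extend(struct.unpack(f"<{entry['samples']}d", raw))
    return samples
```

Only the pending chunk lives in memory, so RAM usage stays bounded by the chunk size no matter how long the recording runs, and no existing data is ever copied when the recording grows.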


You do realize that serial I/O is very, very slow compared to modern CPU memory access? 115,200 baud is only about 11.52 kB/s. Memory bandwidth on a modern CPU is on the order of thousands of megabytes per second (if not more), so the copying is not even going to be an issue. The increment size can be increased to reduce copying. Memory copy is sequential access, so it works well with caches and prefetching.
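The 11.52 kB/s figure follows if each byte costs 10 bits on the wire (1 start bit, 8 data bits, 1 stop bit), which is an assumption about the port settings. A short Python sketch of that arithmetic, with hypothetical helper names:

```python
def serial_bytes_per_second(baud, bits_per_byte=10):
    """Payload throughput of a serial link.

    bits_per_byte=10 assumes 8N1 framing: 1 start + 8 data + 1 stop bit.
    """
    return baud / bits_per_byte


def seconds_to_fill(buffer_bytes, baud):
    """Time a saturated serial link needs to fill a buffer of the given size."""
    return buffer_bytes / serial_bytes_per_second(baud)
```

At 115,200 baud this gives 11,520 bytes per second, so even a fully saturated link would need roughly 2.4 hours to fill a 100 MB buffer.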

The library function I am talking about is the standard C library's realloc(), which was already used for memory management back in the late 80's. We outgrew the old Fortran memory limitations a long time ago. I just want you to know that there are better alternatives than old fixed arrays that limit what the user can do.

The old memory is released and new memory is allocated at the current size plus a minor expansion, so it shouldn't use more memory than what is *actually* needed. I'm not sure why you are even worrying about memory usage and copying overhead when practically every program dynamically allocates memory and the OS uses virtual memory, which copies memory blocks all the time.

I use it for indexing an HDD, which is easily a few orders of magnitude faster than a slow serial download and involves a lot more data. I wrote the program for speed. If that were an issue, I would not bother with it.


Indeed, serial IO is slow compared to hard disk IO. I'm not worried about being unable to keep up with the serial IO. What I'm worried about is moving the collected data around when resizing the array. Let's say I have allocated 100 MB of memory. When this is filled and I try to allocate a bigger block and move the data there, this will cause the application to hang. Even if it's only for a second, I don't want it. This is a GUI application and the user shouldn't notice anything happening under the hood.

I'm worried about memory usage because I want people to be able to use this on small computers such as the Raspberry Pi, maybe even on headless systems.


When I started out with the reply, you were only talking about 10,000 or 1 million samples, so what I said is specifically about that. I have taken it to 50 or so MB and kept everything as an array to speed up searches in my program. I wrote the program back when my PC had a single core and a total of 768 MB of RAM, so I did have to conserve memory for other programs. It is not that drastically different from a Raspberry Pi.

When you are dealing with larger amounts of data, of course the same method won't scale. There are other ways of dealing with that amount of data, e.g. a memory-mapped file.
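For readers unfamiliar with the term, a memory-mapped file lets a program treat a file on disk like a block of memory while the OS pages data in and out as needed. Below is a minimal Python sketch using the standard `mmap` module; the function names and the choice of little-endian 64-bit floats as the on-disk sample format are assumptions for this example only.

```python
import mmap
import struct

SAMPLE = struct.Struct("<d")  # one sample = little-endian 64-bit float


def write_samples(path, samples):
    """Store samples in a file through a writable memory mapping."""
    if not samples:
        raise ValueError("cannot map an empty file")
    size = len(samples) * SAMPLE.size
    with open(path, "w+b") as f:
        f.truncate(size)  # reserve the space on disk first
        with mmap.mmap(f.fileno(), size) as mm:
            for i, value in enumerate(samples):
                SAMPLE.pack_into(mm, i * SAMPLE.size, value)
            mm.flush()  # make sure the changes reach the file


def read_sample(path, index):
    """Read a single sample without loading the whole file."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            return SAMPLE.unpack_from(mm, index * SAMPLE.size)[0]
```

Reading sample `index` only touches the page that contains it, which is what makes this approach attractive for recordings far larger than the available RAM.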

Change the requirement, change the solution... I would never suggest using the same solution for drastically different scales.

---

It is 130 MB of data on my current PC with a faster HDD, and the dynamic memory allocation hasn't slowed it down. My PC is about 5 years out of date and due for an upgrade.


I'm currently investigating memory-mapped files as a solution. Thanks for the tip.
