Now it was time to tackle the software side of things (meaning the code running on the computer, not the firmware). The very first thing we did was load our under-powered PC (a 32-bit system, by the way) with the latest version of Ubuntu (14.04.3 LTS).

With the system 'sudo apt-get' updated, upgraded, and dist-upgraded, we were finally ready to install the 'Robot Operating System (ROS)'. For those who are not familiar with ROS, Wikipedia calls it:

"Robot Operating System (ROS) is a collection of software frameworks for robot software development, (see also Robotics middleware) providing operating system-like functionality on a heterogeneous computer cluster."

Meanwhile, we call it kick-ass software that ties the various components of a robot together. It has a large, open-source, community-supported library to boot. Anyone who is serious about robotics should take a look at it. This is also our first time getting our feet wet in the 'ROS lake'.

For our system we installed the most current version, 'ROS Jade Turtle' (detailed instructions here), IN FULL (so as not to leave out any important packages). The ROS website provides a series of excellent tutorials that really helped us... in the beginning (more on this later). In a nutshell, what we want to do in ROS is implement something called Hector SLAM (no, it is not a WWE thing). First, SLAM is an abbreviation of Simultaneous Localization and Mapping. It refers to the process by which a robot (or any number of similar things) maps out an unknown area while at the same time determining its location in that map. This is helpful in getting a robot autonomously from point A to point B.

Now Hector SLAM is something more advanced. It can build up a map without the need for odometry. Let us explain. Imagine the lidar sensor we featured earlier. It outputs a reading of distance versus polar angle. When the sensor moves, the readings change, a bit like going from scene A to scene B. The Hector SLAM algorithm is able to match the components of A in B and so work out how the sensor moved. Thus it doesn't require odometry input as a reference, although it can be augmented by it.
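To make the "match A in B" idea a bit more concrete, here is a toy sketch in Python. It is not the Hector SLAM algorithm (which aligns each scan against its grid map with a far more capable optimization); it only assumes a sensor that has turned on the spot, so the same range values reappear at shifted beam positions. The function names and the eight-beam "room" are made up for illustration.

```python
import math

def polar_to_points(ranges, angle_step_deg):
    """Convert evenly spaced range readings into (x, y) points in the sensor frame."""
    points = []
    for i, r in enumerate(ranges):
        theta = math.radians(i * angle_step_deg)
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points

def best_rotation_shift(scan_a, scan_b):
    """Brute-force search for the circular shift (in beams) that best lines up
    scan_b with scan_a, using the sum of squared range differences as the error."""
    n = len(scan_a)
    best_shift, best_error = 0, float("inf")
    for shift in range(n):
        error = sum((scan_a[i] - scan_b[(i + shift) % n]) ** 2 for i in range(n))
        if error < best_error:
            best_shift, best_error = shift, error
    return best_shift

# Hypothetical scan "A": 8 beams, 45 degrees apart, ranges in metres.
scan_a = [1.0, 1.4, 2.0, 2.8, 3.0, 2.2, 1.5, 1.1]
# Scan "B": the same room after the sensor turned by two beam positions.
scan_b = scan_a[-2:] + scan_a[:-2]

shift = best_rotation_shift(scan_a, scan_b)
print(shift, "beams, i.e.", shift * 45, "degrees")  # → 2 beams, i.e. 90 degrees
```

The recovered shift is the sensor's rotation, found from the scans alone with no wheel encoders involved, which is the gist of why odometry is optional.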

Now ROS rears its baffling head. It is well known to have a steep learning curve (or maybe that is only for us). While there are a multitude of examples of successful implementations of RPLIDAR with Hector SLAM, there are no step-by-step instructions on how to do it. After much toil we got it to work, and we will document the steps here. We hope it is helpful:

First, one needs to get RPLIDAR to talk to ROS (we are making a major assumption that you are using Ubuntu). The steps for this procedure are:

1. Go to https://github.com/robopeak/rplidar_ros

2. Clone the repository listed on the site into the src folder of your catkin workspace (assuming you are using Jade Turtle here)

3. Go to the catkin directory: cd catkin

4. Source the setup.bash file (we found that we need to do this for every command prompt that we open to run ROS stuff): source /opt/ros/jade/setup.bash

5. Compile: catkin_make

6. After that is done, connect the RPLIDAR and run your spanking new rplidar ros node (that is what it is called): roslaunch rplidar_ros view_rplidar.launch

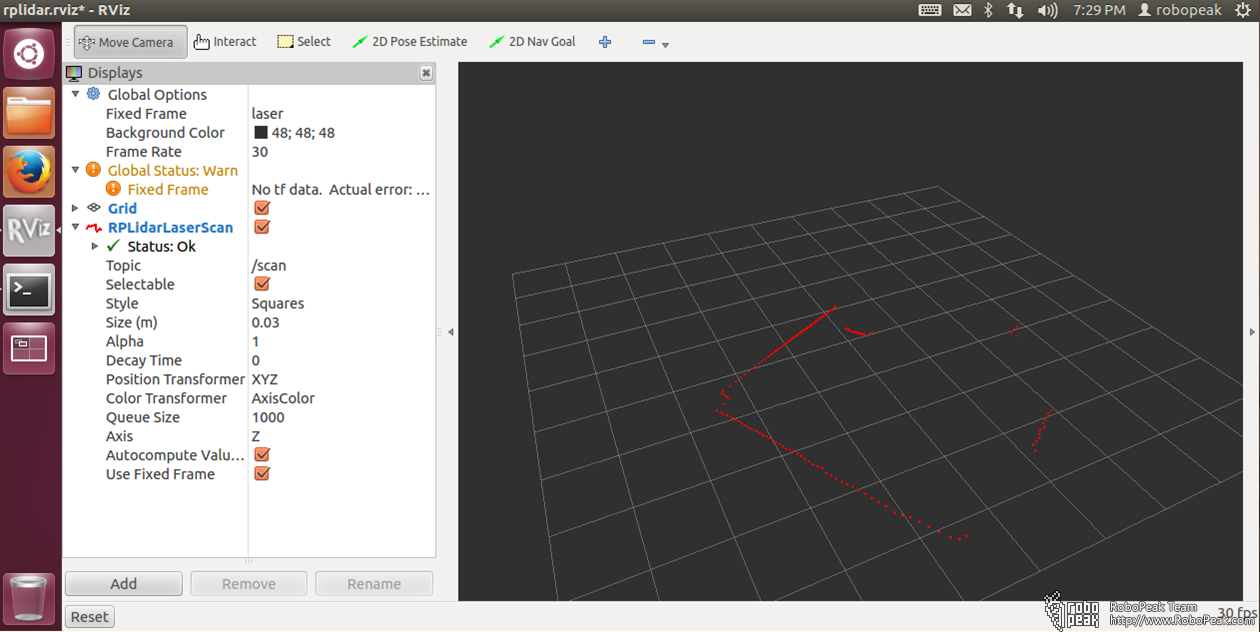

The last step open up what is call RVIZ which a ROS visualization program. What you should see is something like this:

What you will immediately notice on the left is a warning about 'No tf data'. To put it simply, it is saying that a defined reference frame is missing. This will be a problem later on when trying to run Hector SLAM.

Alternatively, you can quit RVIZ and run the following two commands in separate command prompts (remember to source your setup.bash in both):

roslaunch rplidar_ros rplidar.launch

rosrun rplidar_ros rplidarNodeClient

to see the data stream.

The next step is to implement the Hector SLAM node itself. Here are the steps:

1. Clone the Hector SLAM package (https://github.com/tu-darmstadt-ros-pkg/hector_slam) into the src directory.

2. From the catkin directory, source the setup.bash, then run catkin_make hector_slam (if that fails with "No rule to make target 'hector_slam'", plain catkin_make builds the whole workspace, hector_slam included)

3. Make the following modification to the file ~/catkin_ws/src/hector_slam-catkin/hector_mapping/launch/mapping_default.launch

Change the second-to-last line:

<!--<node pkg="tf" type="static_transform_publisher" name="map_nav_broadcaster" args="0 0 0 0 0 0 map nav 100"/>-->

to:

<node pkg="tf" type="static_transform_publisher" name="base_to_laser_broadcaster" args="0 0 0 0 0 0 base_link laser 100" />

and save.

4. To start mapping, do the following:

1. Start a command prompt and issue the following commands from the catkin directory

source devel/setup.bash

roslaunch rplidar_ros rplidar.launch

2. Start another command prompt and issue the following commands from the catkin directory

source devel/setup.bash

roslaunch hector_slam_launch tutorial.launch

5. Now just move the lidar around... slowly... and it should build up a map of the surroundings.

If you were to start the mapping without making the modification in step 3, no map would be generated.
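For anyone wondering what the line added in step 3 actually declares, here is a small Python sketch of how we read it. The tf static_transform_publisher arguments are, in order, x y z yaw pitch roll, then the parent frame, the child frame, and the publishing period in milliseconds. The helper functions below are our own illustration (not part of ROS), and the maths is simplified to the flat 2D case using only x, y and yaw.

```python
import math

def parse_static_transform(args):
    """Split the args string 'x y z yaw pitch roll parent child period_ms'."""
    parts = args.split()
    x, y, z, yaw, pitch, roll = (float(p) for p in parts[:6])
    return {
        "x": x, "y": y, "z": z,
        "yaw": yaw, "pitch": pitch, "roll": roll,
        "parent": parts[6], "child": parts[7],
        "period_ms": float(parts[8]),
    }

def laser_to_base(point, tf):
    """Express a 2D point measured in the child (laser) frame in the parent
    (base_link) frame. Planar simplification: z, pitch and roll are ignored."""
    px, py = point
    c, s = math.cos(tf["yaw"]), math.sin(tf["yaw"])
    return (tf["x"] + c * px - s * py, tf["y"] + s * px + c * py)

# The exact args from step 3: all zeros, so laser and base_link coincide.
tf = parse_static_transform("0 0 0 0 0 0 base_link laser 100")
print(tf["parent"], "->", tf["child"])   # → base_link -> laser
print(laser_to_base((1.0, 2.0), tf))     # → (1.0, 2.0)
```

With all six numbers at zero the lidar is declared to sit exactly at the robot's base with the same heading. If your lidar is mounted off-centre or rotated, those first six values are presumably where that offset would go.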

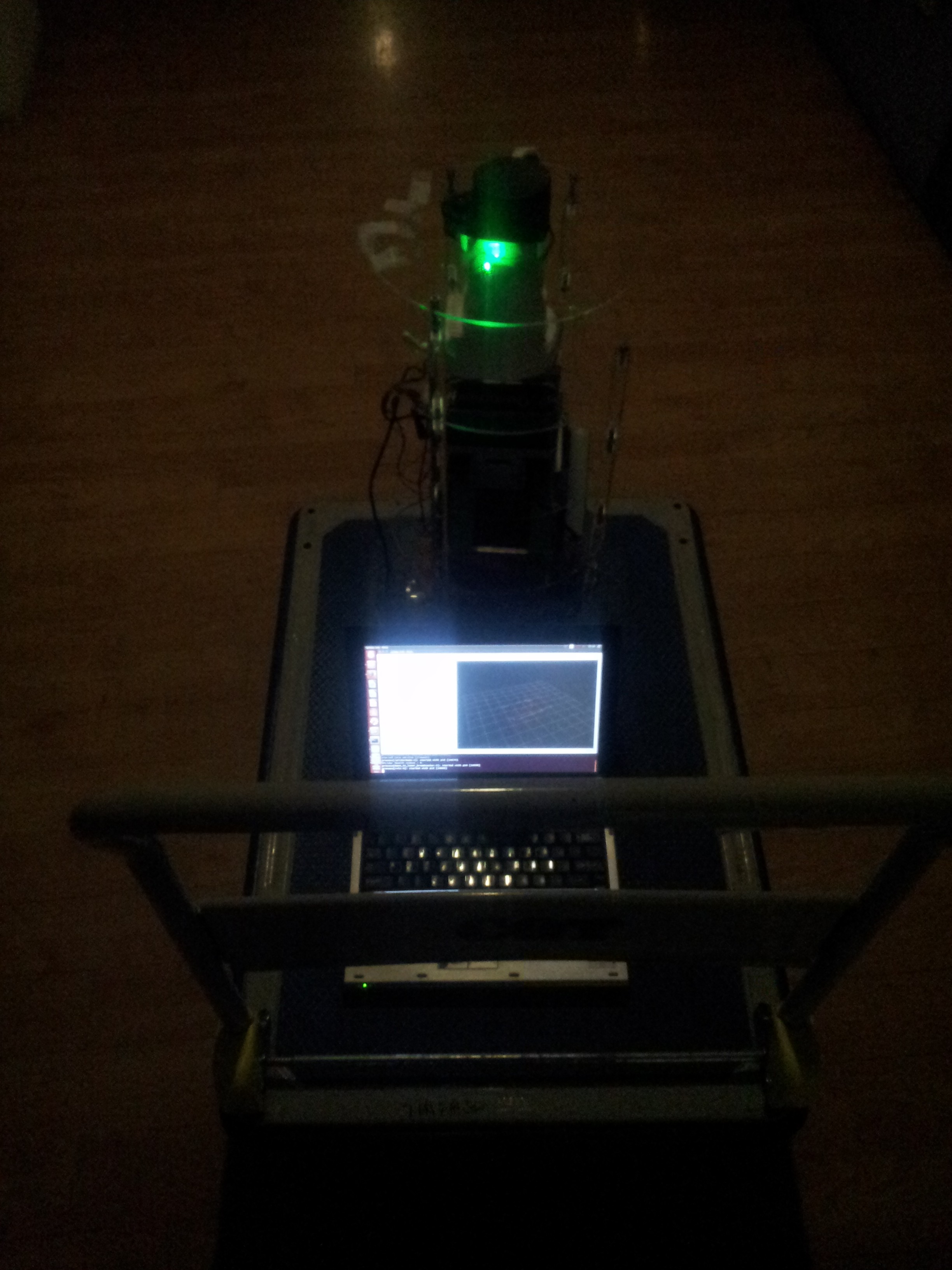

Here was our test set-up for the first mapping: the lidar was connected to a laptop and the platform was moved around on a trolley. The problem here is that the lidar was picking up the trolley push bar. Since that structure stayed fixed relative to the lidar as the platform was moved around, the algorithm got confused and did not manage to build up a map. Maybe there is a way of excluding certain data points from the mapping.
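One way such an exclusion could work, sketched in Python: blank out the angular sector where the push bar sits before the scan reaches the mapper. We did not try this ourselves. The function name and the sector angles are hypothetical, and it assumes whatever consumes the scan ignores infinite ranges. On the ROS side, the laser_filters package looks like the place to start for this sort of thing.

```python
import math

def mask_sector(ranges, angle_step_deg, blocked_start_deg, blocked_end_deg):
    """Return a copy of the scan with every beam inside the blocked sector
    replaced by infinity (meaning 'no return'). Angles are in degrees, and a
    sector with start > end is treated as wrapping through 0."""
    masked = []
    for i, r in enumerate(ranges):
        angle = (i * angle_step_deg) % 360
        if blocked_start_deg <= blocked_end_deg:
            blocked = blocked_start_deg <= angle <= blocked_end_deg
        else:
            blocked = angle >= blocked_start_deg or angle <= blocked_end_deg
        masked.append(math.inf if blocked else r)
    return masked

# Hypothetical 8-beam scan (45 degrees apart) with the push bar seen
# at a constant 0.4 m behind the sensor, around 180 degrees.
scan = [2.0, 2.1, 2.5, 0.4, 0.4, 0.4, 2.4, 2.2]
clean = mask_sector(scan, 45, 135, 225)
print(clean)  # → [2.0, 2.1, 2.5, inf, inf, inf, 2.4, 2.2]
```

The obvious cost is a permanent blind spot in that direction, so the sector should be kept as narrow as the obstruction allows.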

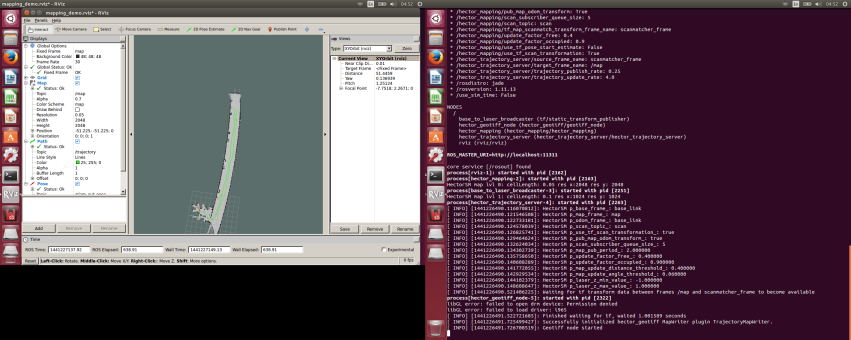

We then connected the lidar directly to the platform onboard pc and started the mapping via ssh over wifi. This ensure no part of the lidar was blocked. We drove the platform slowly up and down the corridor outside of our office and obtain this:

The corridor was in fact straight. It appears curved here possibly because the wall on that side was exceptionally featureless, so the Hector SLAM algorithm may not have been matching up the features accurately. Adding odometry input might help in this case.
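Here is a toy Python sketch of why a featureless wall is hard on scan matching. It is only an idealized illustration (an infinite, perfectly flat wall and a made-up function name), not a diagnosis of what happened in our corridor: the ranges to such a wall depend on the distance from it but not on the position along it, so two scans taken at different points along the wall look identical.

```python
import math

def wall_scan(sensor_x, sensor_y, wall_y, beam_angles_deg):
    """Ranges from a sensor at (sensor_x, sensor_y) to an infinite flat wall
    along y = wall_y (assumed to be above the sensor). Note that sensor_x
    never enters the calculation, which is the whole point."""
    gap = wall_y - sensor_y
    ranges = []
    for deg in beam_angles_deg:
        s = math.sin(math.radians(deg))
        # Beams pointing away from (or parallel to) the wall never hit it.
        ranges.append(gap / s if s > 0 else math.inf)
    return ranges

angles = [30, 60, 90, 120, 150]
scan_start = wall_scan(0.0, 0.0, 2.0, angles)
scan_later = wall_scan(5.0, 0.0, 2.0, angles)   # 5 m further down the corridor
scan_closer = wall_scan(0.0, 0.5, 2.0, angles)  # moved towards the wall

print(scan_start == scan_later)   # → True
print(scan_start == scan_closer)  # → False
```

With nothing in the scan to say how far the sensor has travelled along the wall, the matcher has to lean on whatever small features remain, and this is the kind of situation where odometry would give it a second opinion.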

Discussions

Hi,

I have a problem when running catkin_make hector_slam; there is always an error that says:

make: *** No rule to make target 'hector_slam'. Stop.

Invoking "make hector_slam -j4 -l4" failed

I don't know what is wrong; if you have any idea, please let me know.

Thank you

Hi,

Thank you very much for this information.

When you ssh into the onboard computer of the robot, do you have to do this from an Ubuntu machine? And will this also open an rviz window? If not, how do you manage to do that?

I have another question regarding autonomous navigation.

Do you have an idea how I could use the data that is generated to make my robot navigate autonomously through a building?

Greetings

Jonathan

MAAAAAAAAAAAAAAAAAAAAAAAAAAN!! GENIUS!!! I spent a lot of time trying to do that and it finally worked!!! Using Indigo and making the modifications mentioned in the comments got everything working fine!! Thannnnnxxxxxxxx !!!!!!!!!

I had issues getting it running using ros-lunar on Ubuntu 16.10 (yakkety).

So I finally figured out the following working steps:

sudo sh -c 'echo "deb http://packages.ros.org/ros/ubuntu $(lsb_release -sc) main" > /etc/apt/sources.list.d/ros-latest.list'

sudo apt-key adv --keyserver hkp://ha.pool.sks-keyservers.net:80 --recv-key 421C365BD9FF1F717815A3895523BAEEB01FA116

sudo apt-get update

sudo apt-get install ros-lunar-desktop-full

sudo rosdep init

rosdep update

echo "source /opt/ros/lunar/setup.bash" >> ~/.bashrc

source ~/.bashrc

sudo apt-get install python-rosinstall

mkdir -p ~/catkin_ws/src

cd ~/catkin_ws/src

catkin_init_workspace

git clone https://github.com/tu-darmstadt-ros-pkg/hector_slam

git clone https://github.com/robopeak/rplidar_ros

cd rplidar_ros

git checkout slam

cd ~/catkin_ws

catkin_make

source devel/setup.bash

roslaunch rplidar_ros view_slam.launch

Awesome write up and got me going. Thanks!

Can you write an article or guide me on how to implement ROS navigation with Hector SLAM?

Hi! I have a problem generating the map: I made the change in step 3, but no map is generated.

Can you help?

@aldo1993 In the tutorial.launch file, set "/use_sim_time" to "false" instead of "true". Then relaunch hector_slam by running the command "roslaunch hector_slam_launch tutorial.launch". If it is still not working, edit the mapping_default.launch file and change the default values of both base_frame and odom_frame to base_link.

Basically, make the changes below.

<arg name="base_frame" default="base_link"/>

<arg name="odom_frame" default="base_link"/>

Hi, I'm trying to follow your example (it almost works), but I'm encountering a little issue: no map is built in rviz. I get this message on the terminal: "lookupTransform base_footprint to laser timed out. Could not transform laser scan into base_frame." Do you have an idea how to fix it? Thanks.

@grunbaum In the tutorial.launch file, set "/use_sim_time" to "false" instead of "true". Then relaunch hector_slam by running the command "roslaunch hector_slam_launch tutorial.launch". If it is still not working, edit the mapping_default.launch file and change the default values of both base_frame and odom_frame to base_link.

Basically, make the changes below.

<arg name="base_frame" default="base_link"/>

<arg name="odom_frame" default="base_link"/>
