Oculus Rift, VR One, Samsung Gear VR, Sony PlayStation VR… All those virtual reality headsets are great, but they are missing something! They do not have a position tracking system! This means you can look up, down, left and right, but you can't stand up and walk around your room. Too bad, because the best experiences happen when you can actually move around by yourself in your virtual environment.

How do I know? Well, I have been working with many of those headsets as a consultant for an augmented reality company, and I have developed a 3D position tracking system compatible with all of them! And let me tell you, I have tested this tracking system with Samsung Gear VR headsets, and the result was AWESOME!!! I was able to move around a 5 m by 8 m room, and therefore around my virtual environment.

What is so great about position tracking? The range of applications is much wider! For example, you could create a museum experience where visitors to an Egyptian exhibition explore a pyramid using VR headsets. Or you could visit an apartment or a building before it has even been built! You could also create multiplayer games… The only limit is your imagination!

Now you might be wondering how this all works! You can check out this short video for a quick introduction:

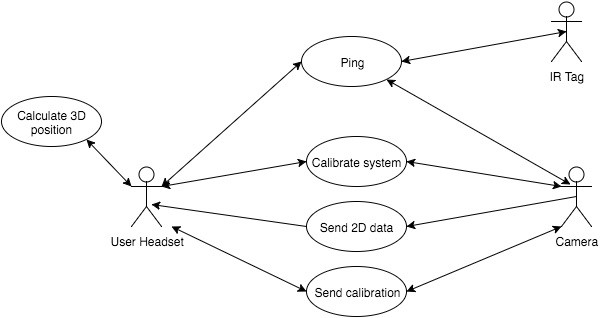

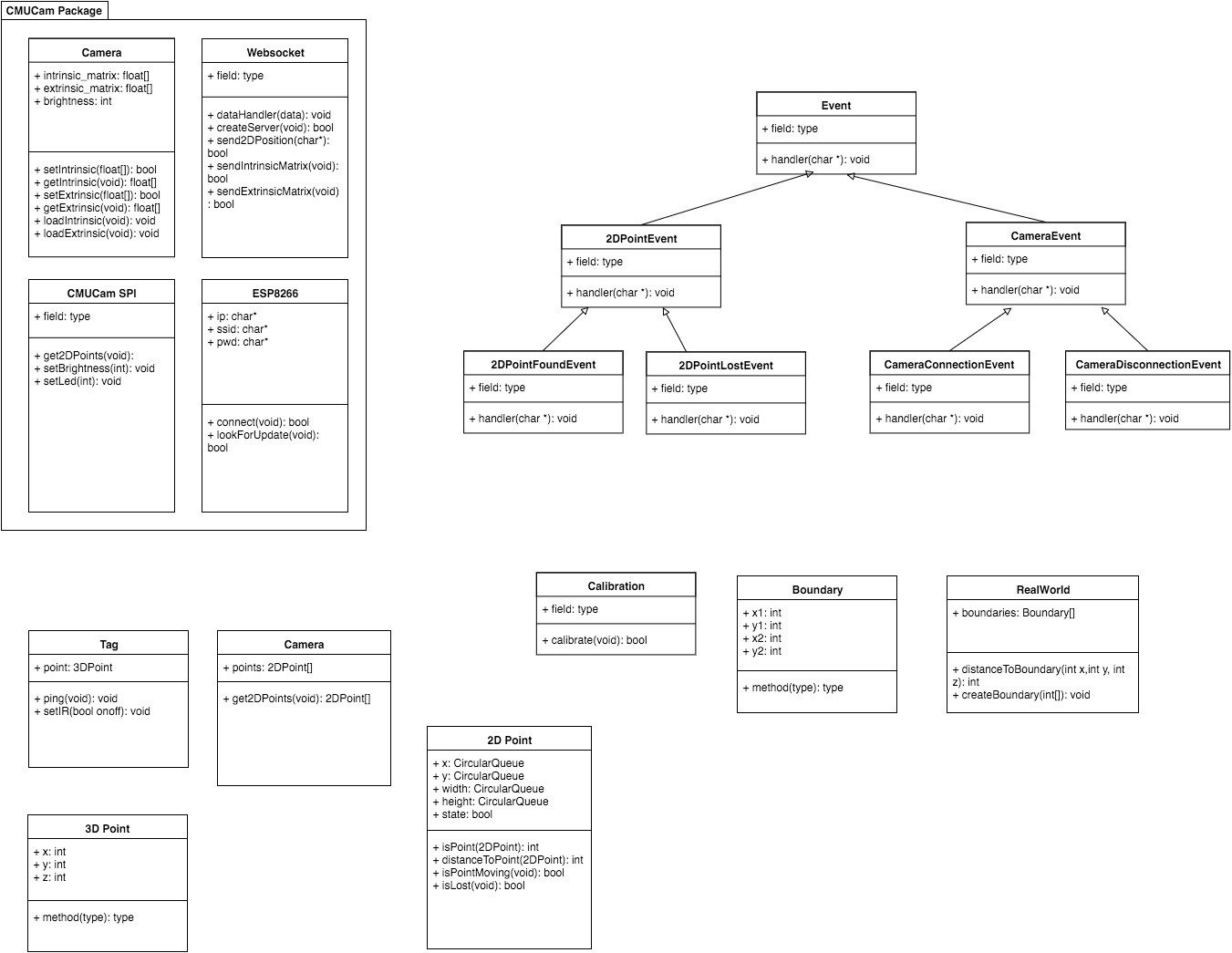

Here, very simply, is how the different components interact with each other:

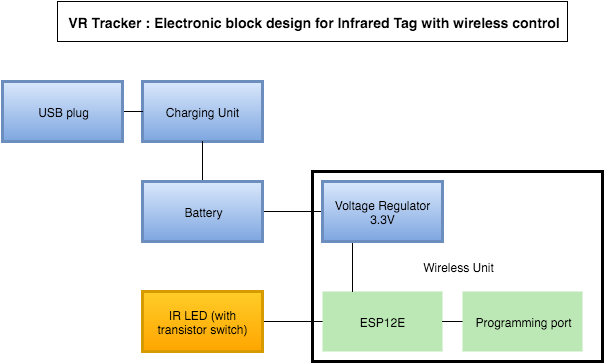

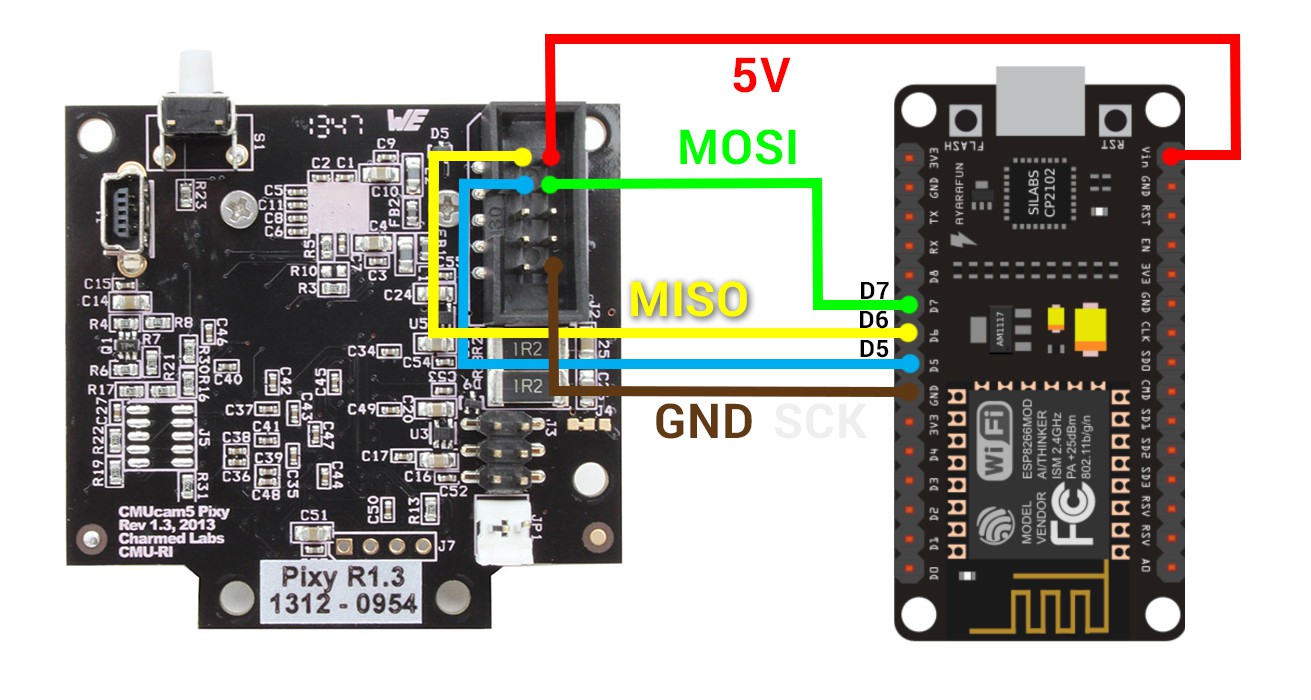

To sum up, the system is composed of at least 2 cameras (CMUcam5) that can track infrared light; an Arduino board with an nRF24L01 wireless transceiver to send the cameras' data over the air; a Raspberry Pi, which acts as a gateway and uses the OpenCV library to calculate the 3D position from the 2D positions received from the cameras; and finally an infrared emitter, which is the object being tracked.
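The key step on the Raspberry Pi is turning several 2D blob positions into one 3D point, which is called triangulation. The snippet below is not the author's code: it is a minimal numpy sketch of linear (DLT) triangulation, the same linear approach behind OpenCV's `cv2.triangulatePoints`. It assumes each camera has already been calibrated, so its 3x4 projection matrix is known. The function name `triangulate_dlt`, the helper `project`, and the intrinsics and camera positions in the usage example are all hypothetical illustration values.

```python
import numpy as np


def triangulate_dlt(projections, pixels):
    """Estimate one 3D point from its 2D pixel positions in two or more cameras.

    projections: list of 3x4 projection matrices (assumed known from calibration).
    pixels: list of (u, v) blob coordinates, one per camera, in the same order.
    """
    if len(projections) < 2 or len(projections) != len(pixels):
        raise ValueError("need one (u, v) observation per camera, at least 2 cameras")
    rows = []
    for P, (u, v) in zip(projections, pixels):
        # u = (P[0] . X) / (P[2] . X) gives the linear equation (u * P[2] - P[0]) . X = 0,
        # and similarly for v, where X is the homogeneous 3D point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.array(rows)
    # Least-squares solution of A X = 0: the right singular vector
    # associated with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]  # back from homogeneous to Euclidean coordinates


def project(P, point):
    """Project a 3D point into a camera's pixel coordinates (used here to fake data)."""
    x = P @ np.append(point, 1.0)
    return x[:2] / x[2]


# Hypothetical intrinsics (focal length in pixels, image centre) shared by both cameras.
K = np.array([[300.0, 0.0, 160.0], [0.0, 300.0, 100.0], [0.0, 0.0, 1.0]])
# Camera 1 at the origin; camera 2 placed 1 m to the right (translation t = -C).
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-1.0], [0.0], [0.0]])])

emitter = np.array([0.2, 0.1, 3.0])  # true IR emitter position, in metres
observed = [project(P1, emitter), project(P2, emitter)]
estimate = triangulate_dlt([P1, P2], observed)
print(estimate)  # close to [0.2, 0.1, 3.0]
```

With noise-free synthetic data the estimate matches the true position up to floating-point error. With real camera readings, every extra camera simply adds two more rows to `A`, which is one reason a linear formulation fits a system built from "at least 2" cameras.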

Jules Thuillier

Yes! A goddamn floppy disk!!!

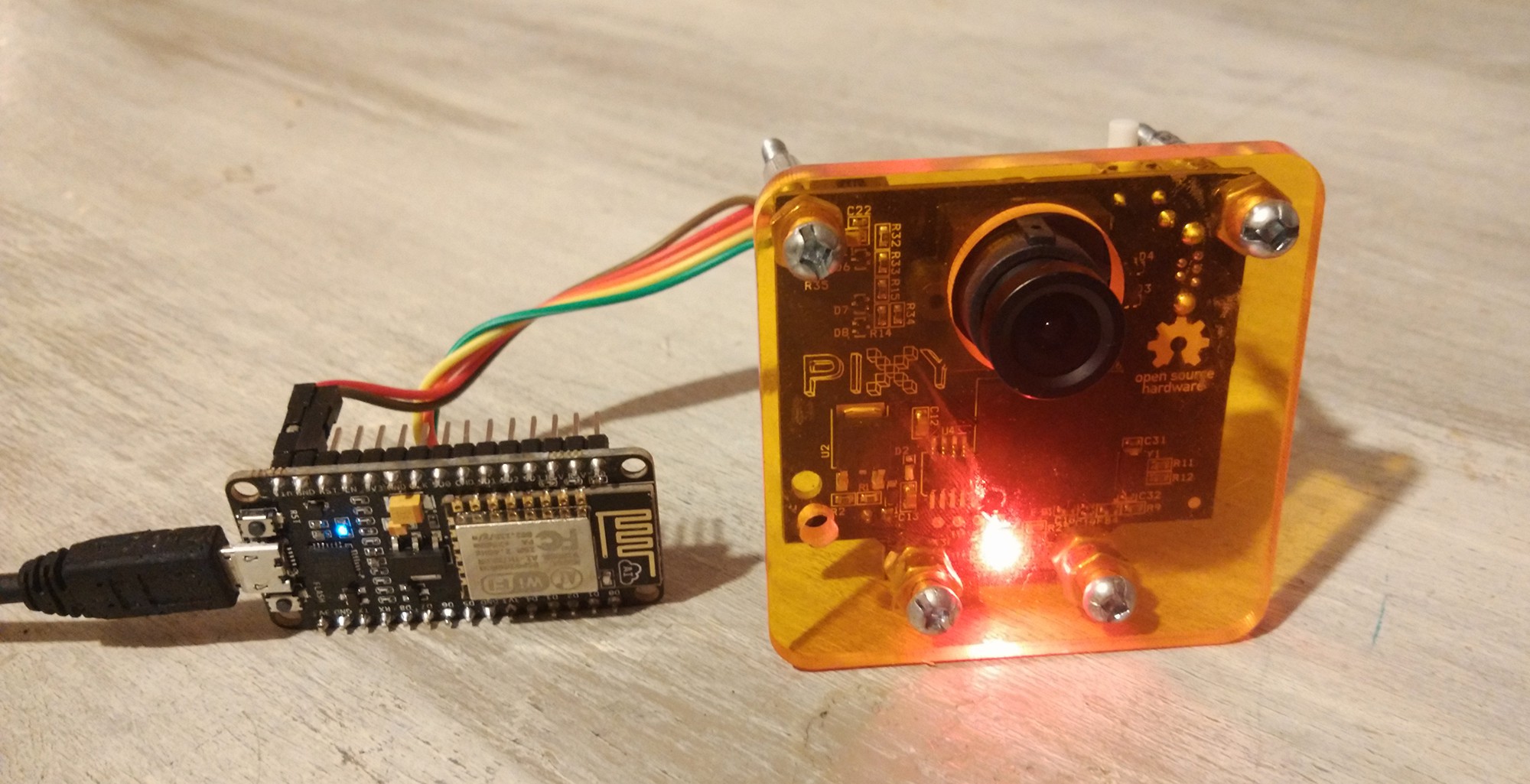

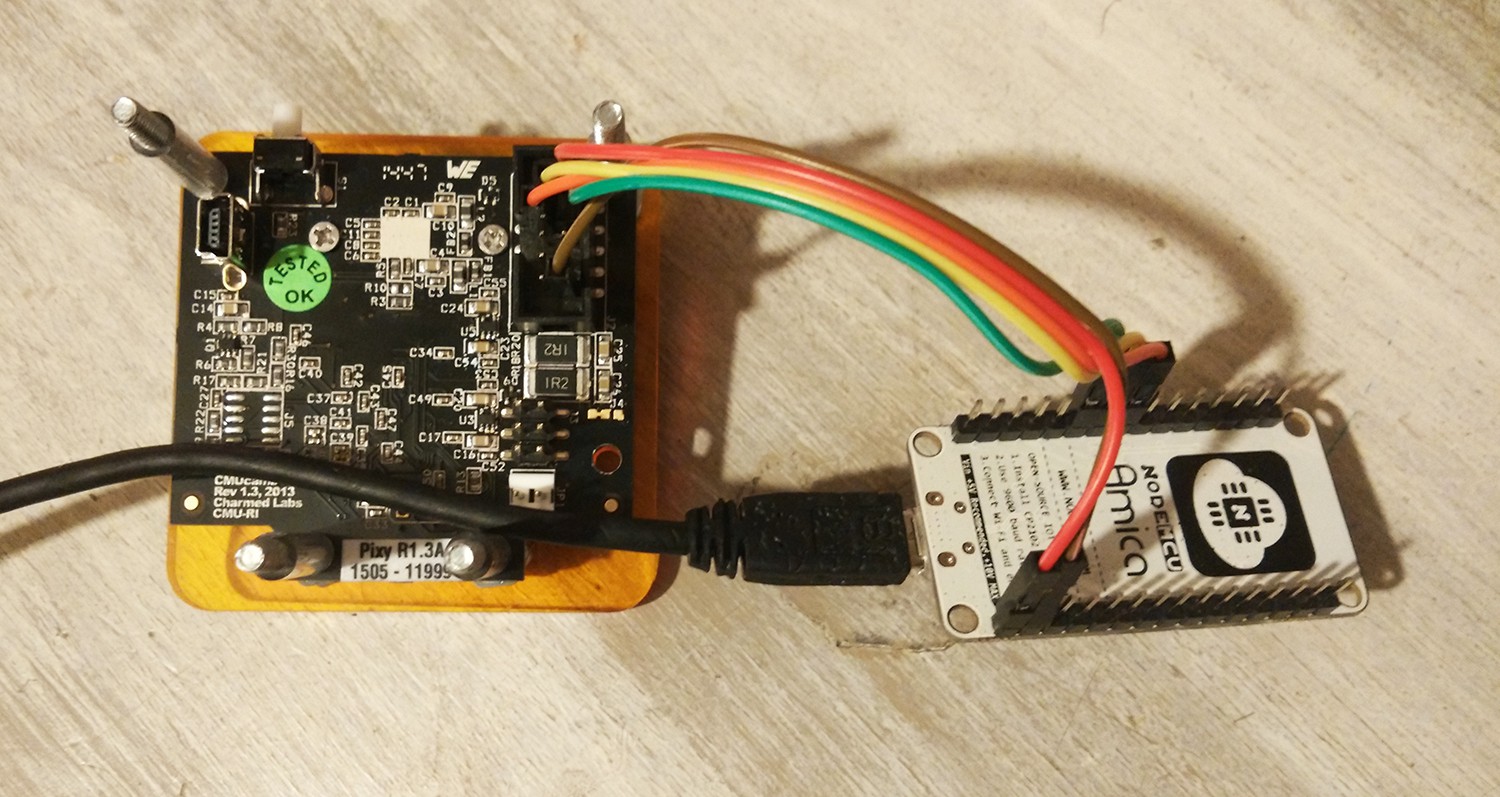

The camera and its wireless transceiver are sandwiched between the two PVC parts, as you can see here:

Martin Fasani

Ulrich

Charitha Jayaweera

So I actually want to use this to set the rotation and orientation of a game world to match the real world, similar to The Void: put the camera on the headset itself, fix the trackers to the wall, and use their coordinates to set various reference points. That way I could have multiple level setups, yet still reset the tracking to the environment. Do you think that is possible?