-

Good Luck to All Hackaday Prize Competitors

10/22/2018 at 13:57 • 0 comments

It's been a crazy last few months, and I put in the time when I could. Starting a new job didn't help, but overall it's been good. Thanks to everyone who helped out, and to everyone who followed this project, commented, and starred the GitHub page. It's been a lot of fun!

-

Mouse Tracking working!

10/22/2018 at 13:54 • 0 comments

Just finished getting mouse tracking working, so you can now control your mouse with your eyes. Unfortunately, there's no time to put it into the video. I'll post photos and details soon. Check out the MachineControl branch on GitHub for more details.

-

Timestamped Head Motion Corrected Eye Tracking Data Available

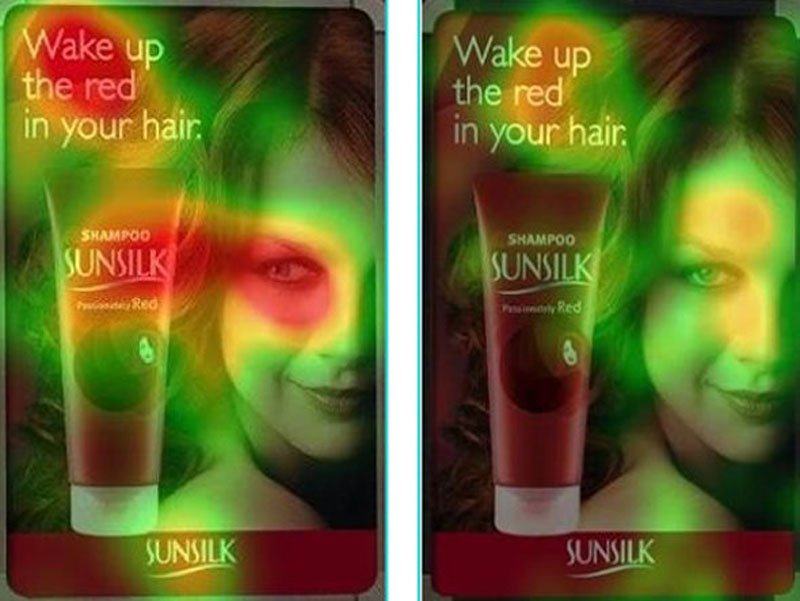

10/22/2018 at 11:10 • 0 comments

Finally, a long-requested feature is available: timestamped eye tracking data that can be used for heat maps and other static-image eye tracking analysis. Heat maps are a useful way to visualize data for websites and design: they show how much attention a user pays to each element of a design and can help reveal issues.

A casual search on Google Images for "eye tracking heat maps" turns up all sorts of interesting information about this technique.

For example, this image shows the effect of where the model looks in an advertisement. Notice how much more attention is paid to the product in the left image.
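As a rough illustration of how timestamped gaze samples could be turned into a heat map, here is a minimal Python sketch. It is not the project's code: the `(timestamp, x, y)` tuple layout, the function name `gaze_heatmap`, and the cell size are all assumptions made for the example.

```python
def gaze_heatmap(samples, width, height, cell=50):
    """Count gaze samples per grid cell.

    samples: iterable of (timestamp, x, y) tuples in screen pixels
             (assumed layout, not the project's log format).
    Returns a 2D list (rows x cols) of hit counts.
    """
    cols = (width + cell - 1) // cell   # ceiling division
    rows = (height + cell - 1) // cell
    grid = [[0] * cols for _ in range(rows)]
    for _timestamp, x, y in samples:
        # Ignore samples that fall outside the screen.
        if 0 <= x < width and 0 <= y < height:
            grid[int(y) // cell][int(x) // cell] += 1
    return grid


# Hypothetical samples: three fixations near the top left, one mid-screen.
samples = [(0.00, 120, 80), (0.03, 125, 82), (0.06, 400, 300), (0.09, 130, 90)]
heat = gaze_heatmap(samples, width=800, height=600, cell=100)
```

The counts in `heat` can then be colored and overlaid on the image being studied; cells with higher counts received more attention.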

-

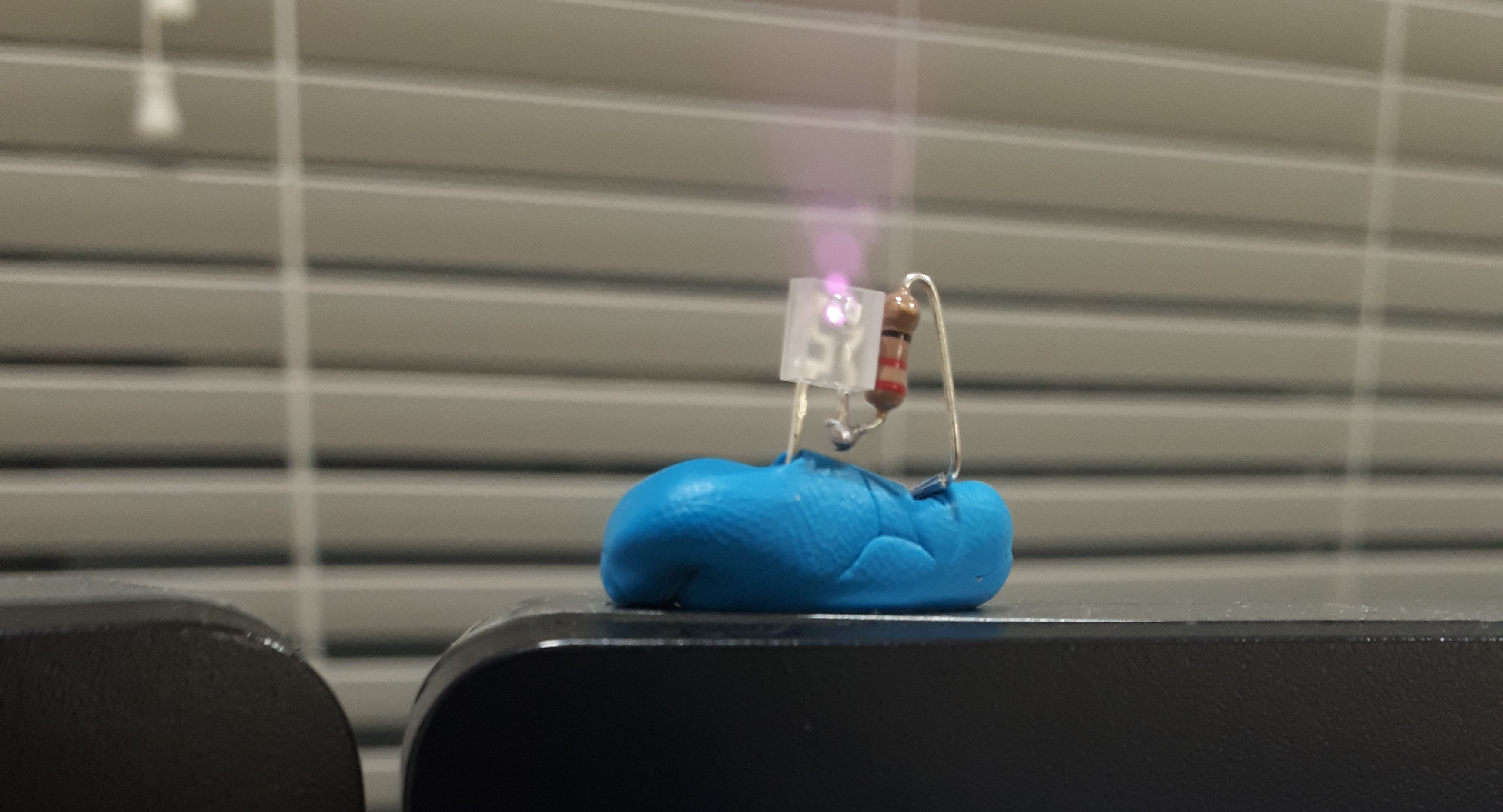

Laser Beacons Replaced with IR LEDs

10/22/2018 at 10:56 • 0 comments

To provide a reliable and non-intrusive experience for the user, the beacons used for the RANSAC approximation were switched from a red laser diode design to 940 nm IR LEDs.

Additionally, an IR filter was added to the front-facing camera, turning its output from a typical camera image into a tool for localization and mapping.

-

RANSAC Homography Correction Success!

10/22/2018 at 02:33 • 0 comments

Today we succeeded in correcting for head movement relative to the screen. This was accomplished using Accord CV's RANSAC implementation.

Homography is a powerful tool because it is relatively light on the CPU but can be used to do incredible things like estimating movement and perspective and, in this case, remapping points after the camera has moved. This technique is what powers the ability to map the computer mouse to the user's eyes.
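To make the "remapping points" step concrete, here is a small Python sketch of applying a 3x3 homography to a single point. It only illustrates the math; it is not the Accord-based code in the repository, and the matrix `H_shift` and the function name are made up for the example.

```python
def apply_homography(H, x, y):
    """Map point (x, y) through a 3x3 homography H (nested lists)."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("point maps to infinity")
    # Divide by w to return from homogeneous to pixel coordinates.
    return u / w, v / w


# Hypothetical case: the head moved so the scene shifted 12 px right, 5 px up.
H_shift = [[1.0, 0.0, 12.0],
           [0.0, 1.0, -5.0],
           [0.0, 0.0, 1.0]]
corrected = apply_homography(H_shift, 100.0, 200.0)
```

A pure shift is the simplest case. A matrix estimated from real beacon positions would generally also have non-zero entries in the bottom row, which is what models perspective change.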

All of this new functionality is available on the MachineControl branch: https://github.com/J-east/JevonsCameraViewer/tree/machineControl

-

Development Plans over the next few days:

10/18/2018 at 22:07 • 0 comments

TCP/IP sockets: Added a TCP socket project for communicating with other applications over the network. This allows great flexibility in communicating with different systems, including wireless ones.
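For anyone wanting to experiment with the socket idea, a gaze sample could be streamed as one JSON message per line. This Python sketch is only an illustration: the message fields (`t`, `x`, `y`), the function names, and the newline framing are assumptions, not the format used by the project's TCP socket DLL.

```python
import json
import socket


def encode_gaze(timestamp, x, y):
    """Serialize one gaze sample as a newline-terminated JSON message."""
    return (json.dumps({"t": timestamp, "x": x, "y": y}) + "\n").encode("utf-8")


def decode_gaze(line):
    """Inverse of encode_gaze: bytes for one line -> (timestamp, x, y)."""
    msg = json.loads(line.decode("utf-8"))
    return msg["t"], msg["x"], msg["y"]


def send_gaze(host, port, samples):
    """Open one TCP connection and stream samples to a listening application."""
    with socket.create_connection((host, port), timeout=2.0) as conn:
        for t, x, y in samples:
            conn.sendall(encode_gaze(t, x, y))
```

Newline-delimited text keeps the receiving side simple: any platform that can open a TCP socket and read a line can consume the stream.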

Greater separation of Projects: A lot of work has been done to separate the eye tracking logic from the operating-system-specific machine vision. Camera and machine vision logic is held in one project (.exe), while EyeTracking, TCP Sockets, and Logging are held in separate DLLs. This should allow great flexibility to eventually expand this project to different systems.

Perspective Transformation: A big goal has been to move away from a monitor-based system so that the project can be used to control various machines, such as robotic arms, via systems such as the tinyG stepper motor controller. Other platforms may be used, as long as they can attach to a TCP socket on the network and interpret the commands sent.

By introducing perspective transformation, known fixed points can be identified with the front-facing camera, and their shift used to generate a transformation matrix. This matrix will be used to transform the eye tracking location in order to correct for head rotation (Yaw/Pitch/Roll) as well as X/Y/Z Cartesian movement relative to the known points. IR will be used on the front-facing camera to make these points easier to identify.
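The idea of generating a transformation matrix from the shift of known points can be sketched in plain Python. This is an exact four-point solve, not RANSAC: a RANSAC estimator repeats a fit like this on random subsets of the matches and keeps the result that agrees with the most points. The beacon coordinates and function names below are made up for illustration.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def homography_from_4_points(src, dst):
    """Find H (with H[2][2] fixed to 1) so that each src point maps to its dst point."""
    A, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        A.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        A.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(A, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def map_point(H, x, y):
    """Apply homography H to (x, y), dividing out the homogeneous scale."""
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)


# Hypothetical beacon positions: at calibration time and in the current frame.
reference = [(0, 0), (100, 0), (100, 100), (0, 100)]
now = [(12, 8), (108, 15), (101, 109), (5, 96)]
H = homography_from_4_points(now, reference)
gaze_corrected = map_point(H, 60.0, 55.0)  # gaze point moved back into the calibration frame
```

Mapping from the current frame back to the calibration frame means the gaze calibration only has to be done once, as long as all four beacons stay visible.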

Decawave Time of Flight Location System: Matt will be integrating a Decawave-based location system for a better understanding of head location in Cartesian space. We're hoping for ±50 mm of resolution.

BNO055 9-Axis IMU: Matt will also be integrating a BNO055 for a better understanding of the Yaw/Pitch/Roll of the user's head. This may be added to a rudimentary data fusion model to correct the fixed-point beacon system we are attempting with the front-facing camera and IR light sources. The team has a fairly limited understanding of homography and more advanced machine vision techniques, so this may end up being essential for properly calibrating the user's gaze relative to the machine being controlled.

TinyG Based Machine Control: Another big goal is to interface with a tinyG-based CNC system, the end goal being to draw a picture using the user's gaze.
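As a thought experiment for the drawing goal, gaze points could be converted into standard G-code moves, which a G-code controller such as the tinyG can interpret. Everything specific in this Python sketch is an assumption: the 200 mm work area, the feed rate, the normalized 0..1 gaze coordinates, and the function name.

```python
def gaze_to_gcode(points, bed_w_mm=200.0, bed_h_mm=200.0, feed=1000):
    """Convert normalized gaze points (0..1, 0..1) into G-code moves.

    The work area size and feed rate are placeholder values.
    """
    lines = ["G21", "G90"]  # millimetre units, absolute positioning
    for i, (nx, ny) in enumerate(points):
        # Clamp so a stray gaze sample cannot drive the machine off the bed.
        nx = min(max(nx, 0.0), 1.0)
        ny = min(max(ny, 0.0), 1.0)
        x = nx * bed_w_mm
        y = (1.0 - ny) * bed_h_mm  # screen y grows downward, machine y upward (assumed)
        if i == 0:
            lines.append(f"G0 X{x:.2f} Y{y:.2f}")           # rapid move to the start
        else:
            lines.append(f"G1 X{x:.2f} Y{y:.2f} F{feed}")   # drawing move
    return lines


program = gaze_to_gcode([(0.0, 1.0), (0.5, 0.5), (1.2, 0.0)])
```

In practice the raw gaze stream would need smoothing and some kind of pen-up/pen-down signal before it produced a recognizable picture.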

More Comfortable Head Gear: My 3D printer is back up and running, so an attempt will be made to print a better head-mounted unit for carrying the cameras and the additional hardware used in this project.

MVP: allow user to interface with computer screen for better ease of use: The minimum we can hope for is to allow the user to type a few words using only their eyes. This could be a massive feat if it is accomplished in a way that is easy for the individual to use. It's unclear what this interface may eventually look like, but it should be (1) accurate, (2) low on eye strain, and (3) easy to learn.

-

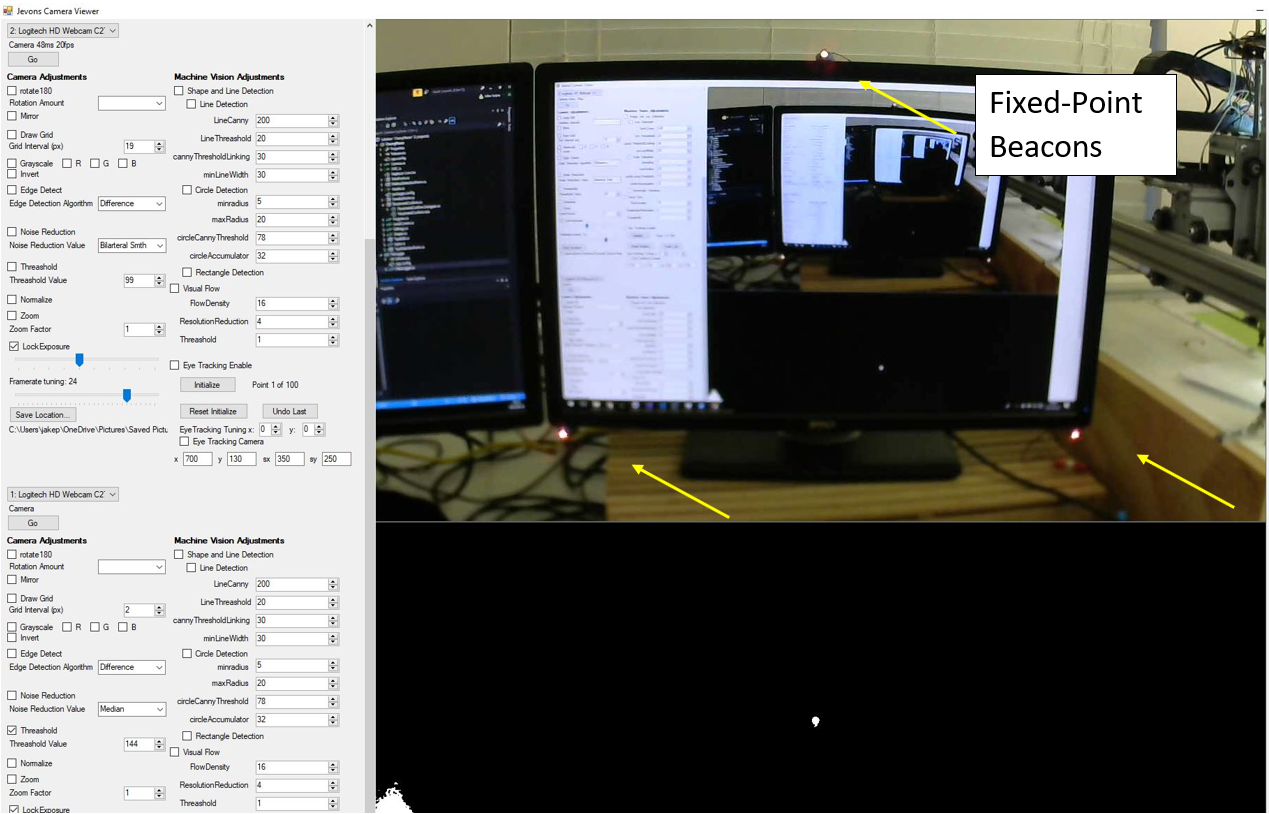

Setting Up the Fixed-Point Laser Beacons

08/26/2018 at 22:54 • 0 comments

A few different light sources were tried before settling on lasers as the best option. These lasers are simple 3 volt red dot lasers that have been diffused with a piece of masking tape. The lasers were bright enough to be easily detected by the forward camera even in a brightly lit room.

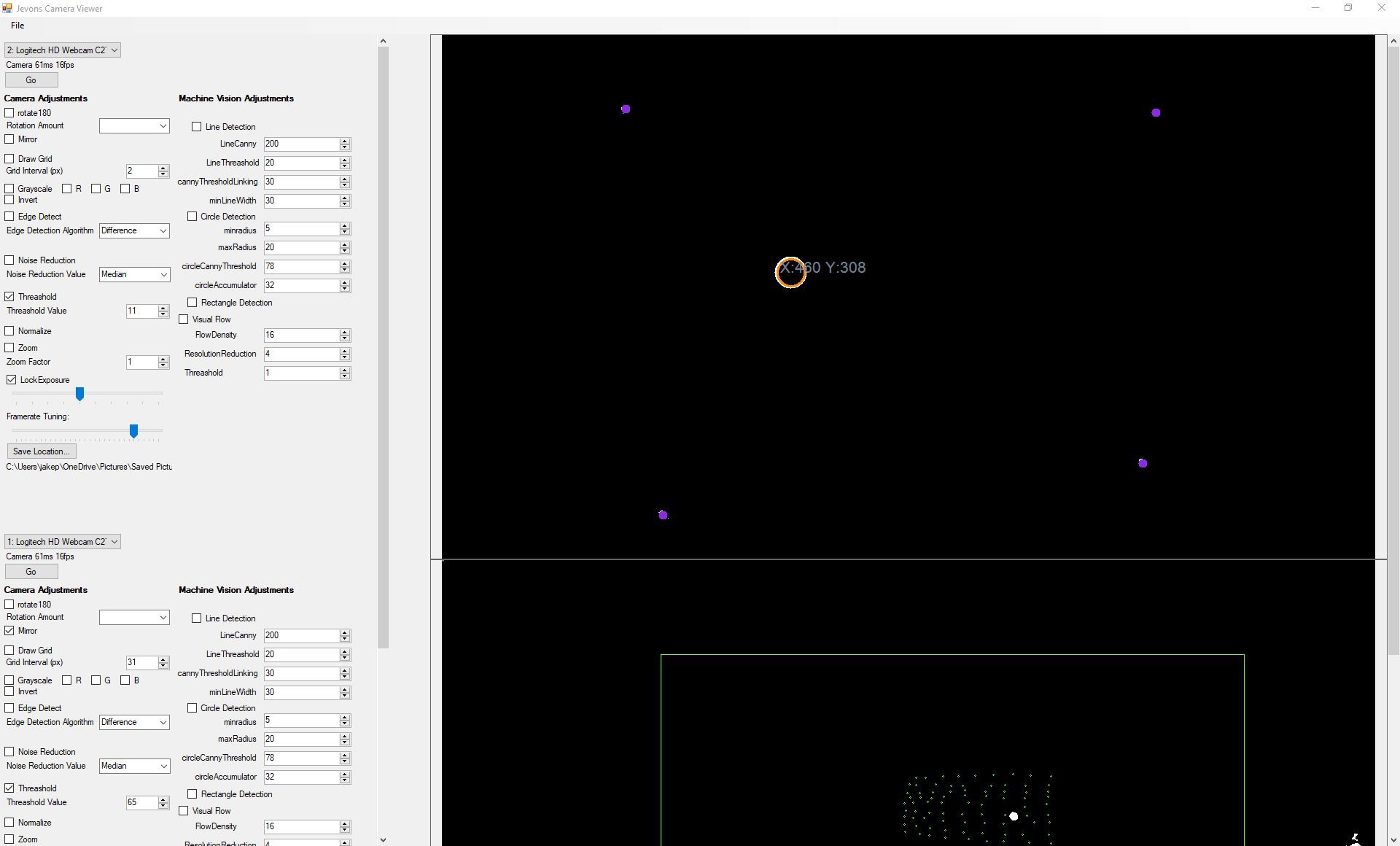

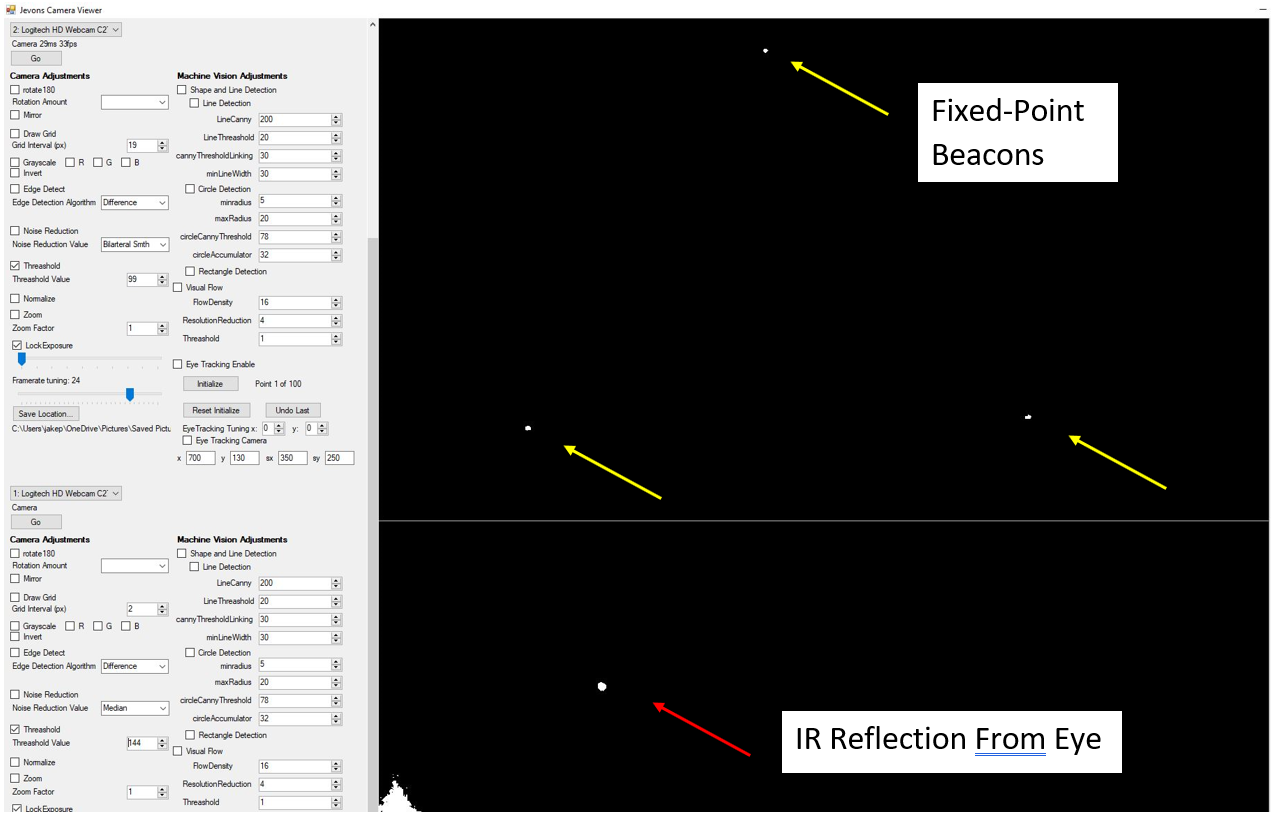

Below is a screenshot showing what the forward camera is looking at (top half) and the reflection of the IR LED on the eye (bottom half).

To recognize the beacons in software, we apply some filters using the Jevons Camera Viewer software.
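The general idea behind picking out bright beacons can be sketched as a brightness threshold followed by finding the center of each bright region. This Python sketch is a generic illustration, not the actual filter chain in Jevons Camera Viewer; the threshold, minimum blob size, and test frame are made-up values.

```python
def find_bright_blobs(image, threshold=200, min_pixels=2):
    """Return (cx, cy) centroids of connected bright regions.

    image: 2D list of grayscale values (0-255), indexed image[row][col].
    """
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    centroids = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or image[r0][c0] < threshold:
                continue
            # Flood fill to collect every pixel of this bright region.
            stack, pixels = [(r0, c0)], []
            seen[r0][c0] = True
            while stack:
                r, c = stack.pop()
                pixels.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and not seen[nr][nc] and image[nr][nc] >= threshold):
                        seen[nr][nc] = True
                        stack.append((nr, nc))
            # Drop regions too small to be a beacon (likely noise).
            if len(pixels) >= min_pixels:
                cx = sum(c for _, c in pixels) / len(pixels)
                cy = sum(r for r, _ in pixels) / len(pixels)
                centroids.append((cx, cy))
    return centroids


# Tiny synthetic frame: two 2x2 beacons and one single-pixel speck of noise.
frame = [[0] * 8 for _ in range(6)]
for r, c in [(1, 1), (1, 2), (2, 1), (2, 2), (3, 5), (3, 6), (4, 5), (4, 6)]:
    frame[r][c] = 255
frame[0][7] = 255
beacons = find_bright_blobs(frame)
```

The resulting centroids are the kind of point list a homography estimator needs as input.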

-

Mounting and Structural Changes

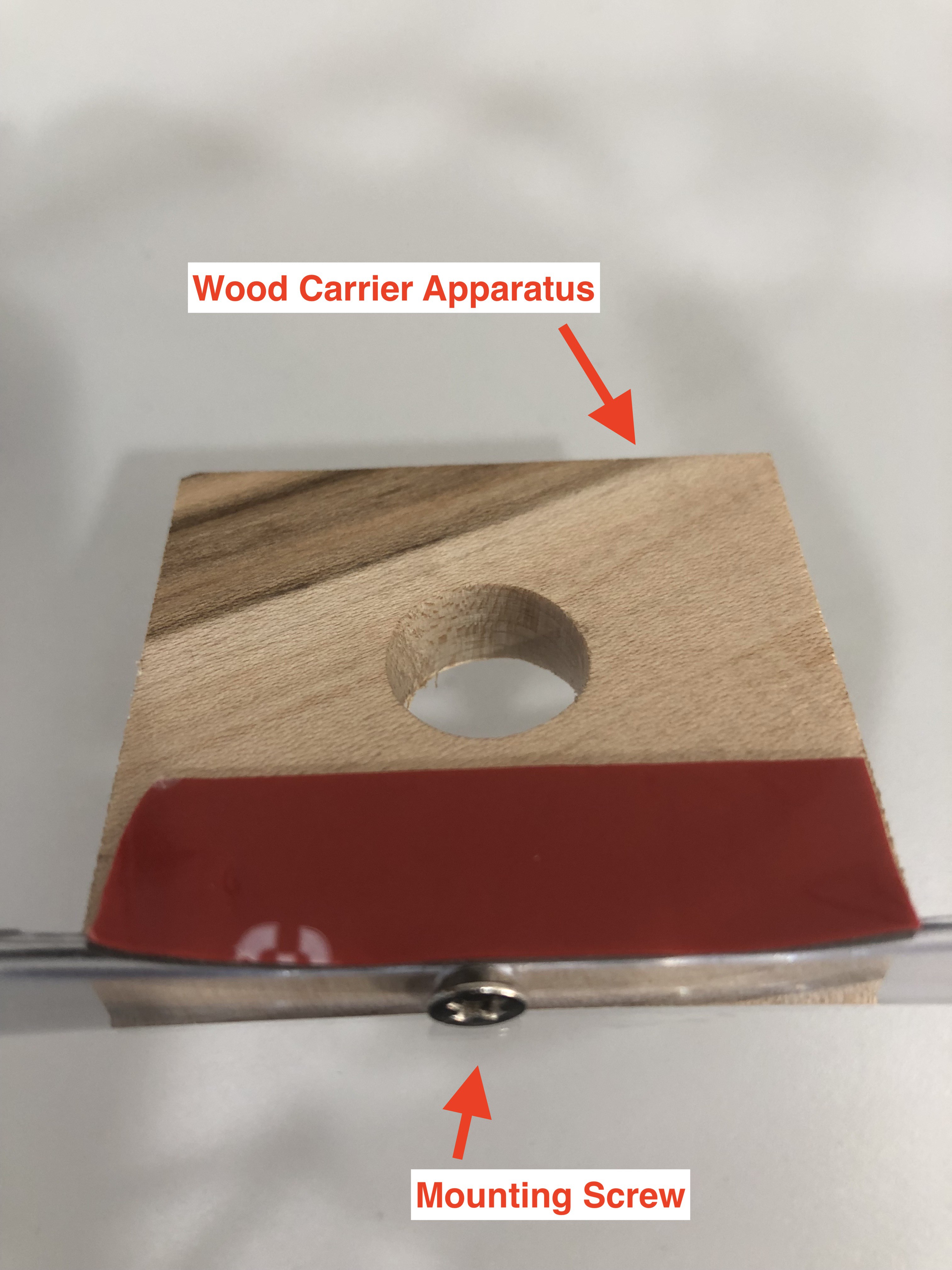

08/26/2018 at 15:45 • 0 comments

To better stabilize the forward-facing camera, we utilized a carrier apparatus that is mounted onto the top of the glasses using screws. We chose to use a wood carrier apparatus, although plastic would be a suitable material as well. This change eliminated the zip tie mounting.

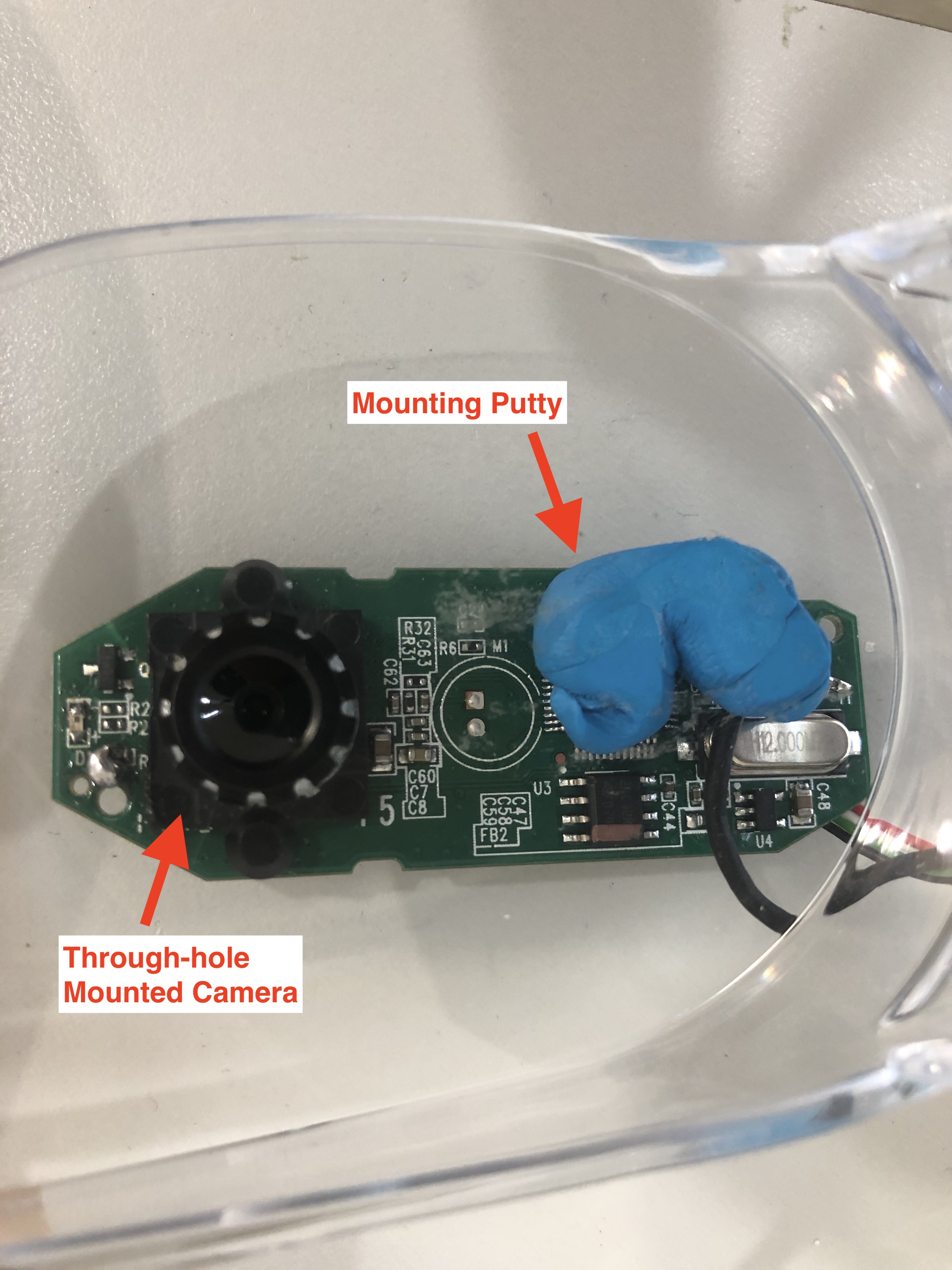

We changed the eye-tracking camera's mounting to a through-hole mounting, drilled directly into the glasses. The new mounting increased the resolution of the eye-tracking camera, since the lens is closer to the eye and the image is no longer distorted by the plastic lens of the safety glasses. The blue Loctite Fun Tak mounting putty holds the camera more securely.

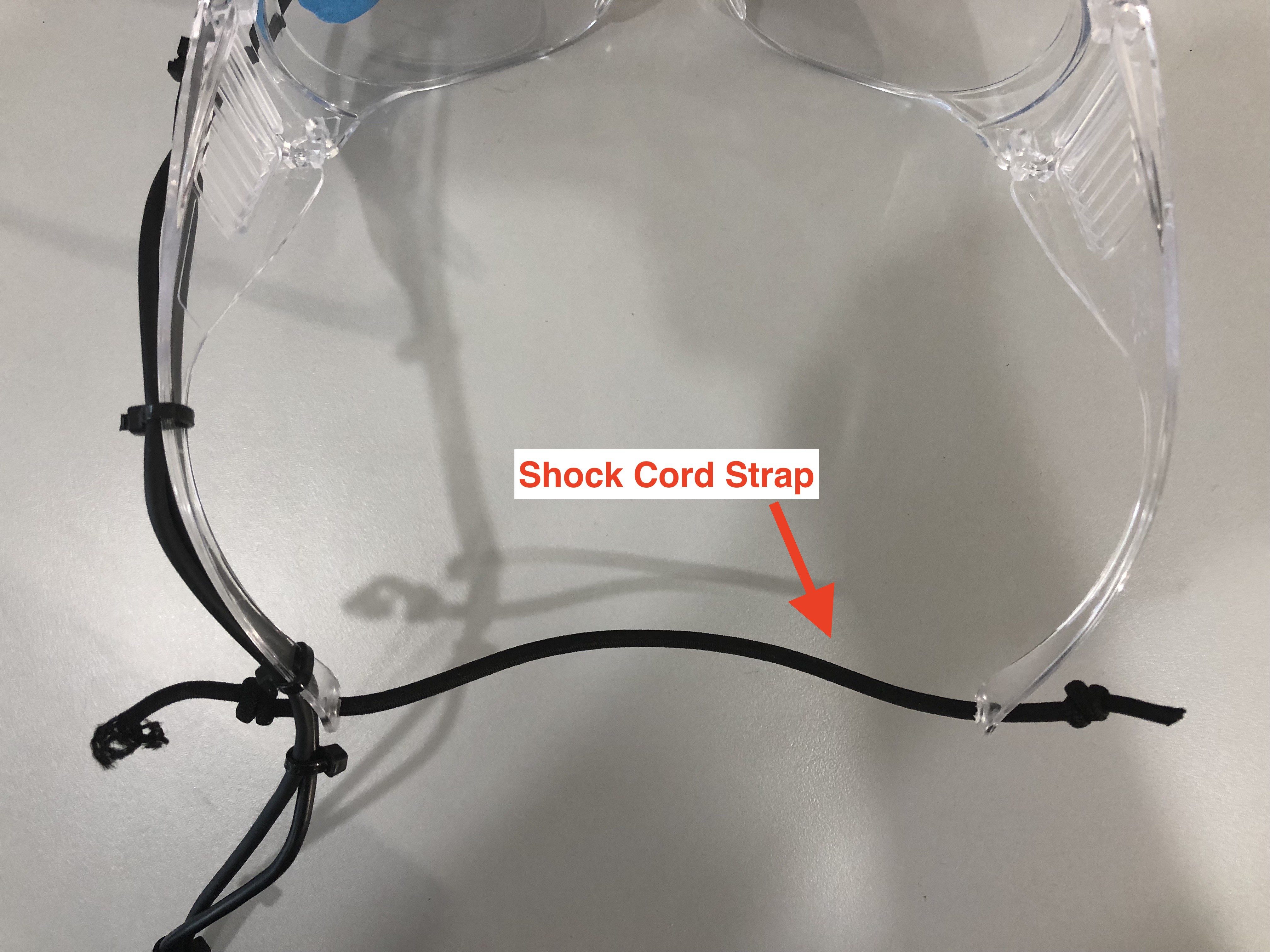

For additional usability, we changed the adjustable strap in the back of the glasses to a shock cord strap.

-

Converting to an Infrared Eye-Tracking Camera

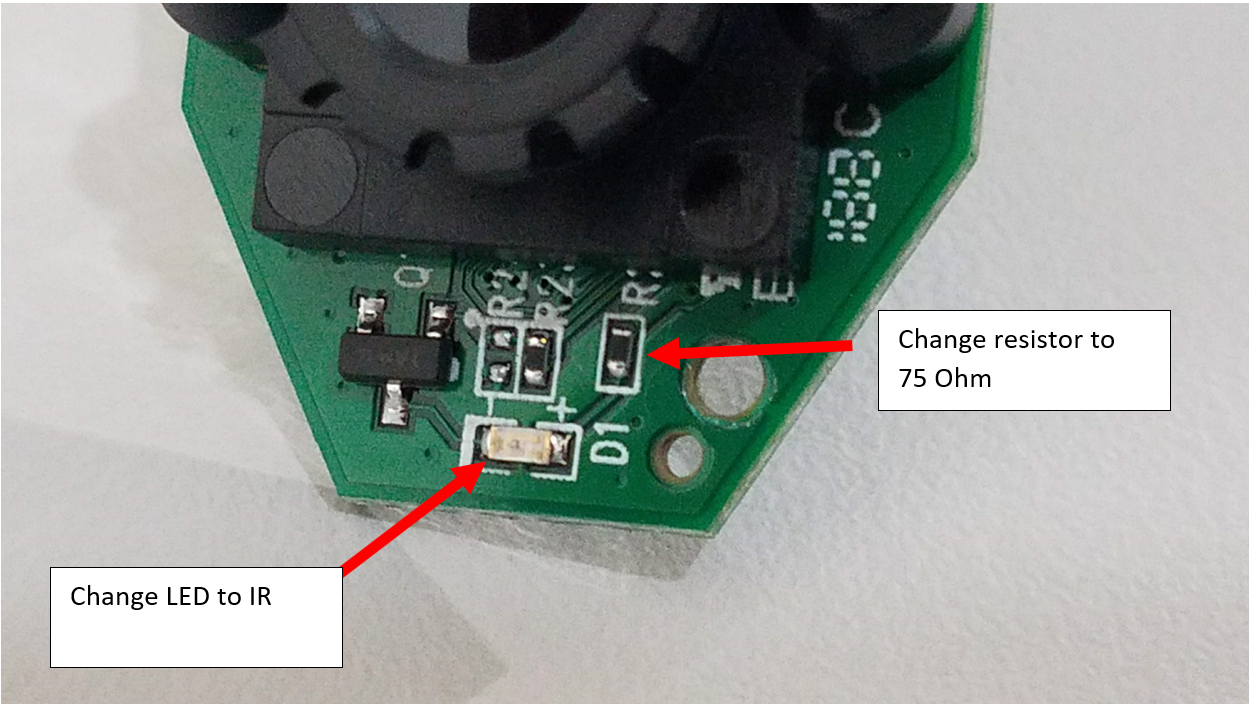

08/25/2018 at 20:59 • 0 comments

Previously, the eye tracking glasses used a green LED for tracking eye movement. Noise from other light sources was interfering with the system. Plus, the green light shining in the user's eye was distracting.

Trading the green LED for an IR LED solves both of these problems. To fully convert the system to IR tracking, we inserted an IR film to filter the eye tracking camera. Because the C270 camera is not very sensitive to IR light, we more than doubled the brightness of the IR LED by swapping the 200 ohm chip resistor for a 75 ohm chip resistor.
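The "more than doubled" figure follows from Ohm's law applied to the series resistor. The Python sketch below works it through; the 5 V supply and 1.3 V LED forward voltage are assumed values not given in this log, and it treats brightness as roughly proportional to current.

```python
def led_current_ma(supply_v, forward_v, resistor_ohm):
    """LED current in mA for a simple series resistor: I = (Vs - Vf) / R."""
    return (supply_v - forward_v) / resistor_ohm * 1000.0


SUPPLY_V = 5.0    # assumed supply voltage, not stated in the log
FORWARD_V = 1.3   # assumed IR LED forward voltage, not stated in the log

before = led_current_ma(SUPPLY_V, FORWARD_V, 200)
after = led_current_ma(SUPPLY_V, FORWARD_V, 75)
ratio = after / before  # equals 200 / 75 whatever the two voltages are
```

Because the voltages cancel in the ratio, the current rises by a factor of 200/75, or about 2.7, under this simple model. It is still worth checking the new current against the LED's rated maximum before making the same swap.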

-

Looking into designing and manufacturing Camera PCB

05/13/2018 at 18:54 • 0 comments

2018-05-13: After a very exhaustive search for USB-capable image sensors, I've pretty much given up.

Things I've looked into to date:

I've been very impressed with the On-Semi MIPI CSI-2 interface sensors such as the MT9M114. My original plan was to design a tiny circuit board around one of their sensors. But after a very exhaustive search, I was unable to find anything that can convert that data to Motion-JPEG and put it on the USB. There is a very large and expensive chip made by Cypress, the EZ-USB® CX3, which looks very cool but is way more than this project requires.

Additionally, I looked into several 12-bit parallel interface sensors such as the AR0130CSSC00SPBA0-DP1, with the plan being to use a Realtek USB 2.0 camera controller. Unfortunately, I do not believe these are available to the public, and I do not want to invest the time in a design with almost no support. It's difficult to find datasheets for these devices; the RTS5822 is one example. If anyone has additional information about these devices, please let me know.

I have looked into using a Raspberry Pi Zero as an IP camera with a MIPI camera, such as the MT9M114, and a tiny PCB. Unfortunately, the IP stream appears as though it may be delayed by several milliseconds. However, I need to do some experimentation to confirm this. (The latency may be extremely low in real terms, just requiring some optimization.)

What I'd really like to do is set up a Raspberry Pi Zero as a USB camera device per this guide and stream the data from a MIPI camera through the USB: https://learn.adafruit.com/turning-your-raspberry-pi-zero-into-a-usb-gadget?view=all#other-modules

If anyone would like to take on this project, I'd love to help out. But in the meantime, I believe we're going to be stuck with the C270.

Low Cost Open Source Eye Tracking

A system designed to be easy to build and set up. Uses two $20 webcams and open source software to provide accurate eye tracking.

John Evans
