Tornado Server

I used Amazon Web Services (AWS) to set up an EC2 instance that hosts a Tornado web server. This server lets all the components in our drone system communicate with one another. It uses the WebSocket protocol to communicate with the Android application, the ESP32 camera modules, and the ESP32 development board.

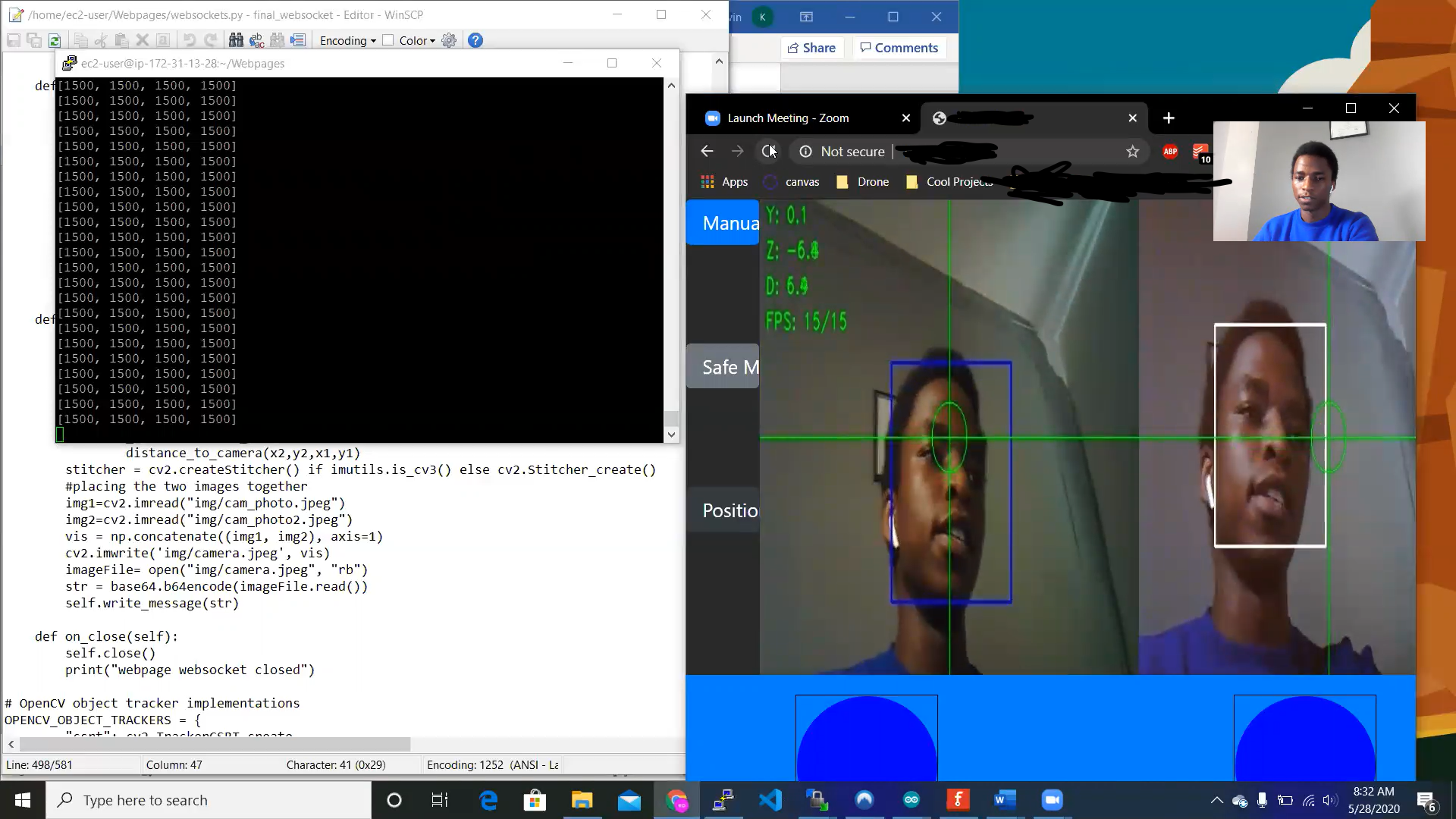
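To make the relay role of the server concrete, here is a minimal sketch of the routing logic in plain Python. The role names, the `Hub` class, and its methods are all hypothetical and used only for illustration; the original server code is not shown here, so this is one possible arrangement rather than the actual implementation.

```python
from typing import Callable, Dict

# Hypothetical role names for the kinds of clients described above.
ROLES = {"android", "camera_left", "camera_right", "dev_board", "web"}


class Hub:
    """Tracks connected clients by role and relays messages between them."""

    def __init__(self) -> None:
        # Maps a role to a callable that delivers a message to that client.
        self._senders: Dict[str, Callable[[str], None]] = {}

    def register(self, role: str, send: Callable[[str], None]) -> None:
        """Remember how to reach the client that just connected."""
        if role not in ROLES:
            raise ValueError(f"unknown role: {role}")
        self._senders[role] = send

    def unregister(self, role: str) -> None:
        """Forget a client that disconnected (no error if it was absent)."""
        self._senders.pop(role, None)

    def relay(self, target: str, message: str) -> bool:
        """Deliver a message to the target; return False if it is offline."""
        send = self._senders.get(target)
        if send is None:
            return False
        send(message)
        return True
```

In a Tornado `tornado.websocket.WebSocketHandler` subclass, `open`, `on_message`, and `on_close` could call `register`, `relay`, and `unregister` respectively, passing `self.write_message` as the `send` callable.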

Computer vision

Computer vision is performed in the cloud. Using a combination of Haar cascade classifiers and a CSRT tracker, we implement a face-tracking algorithm and build on it so that the system recognizes a face and follows it. Once a face is recognized and tracking begins, we compute the distance between the tracked object and the camera. Based on this distance, we send different commands to our ESP32 development board to make the drone follow the object.

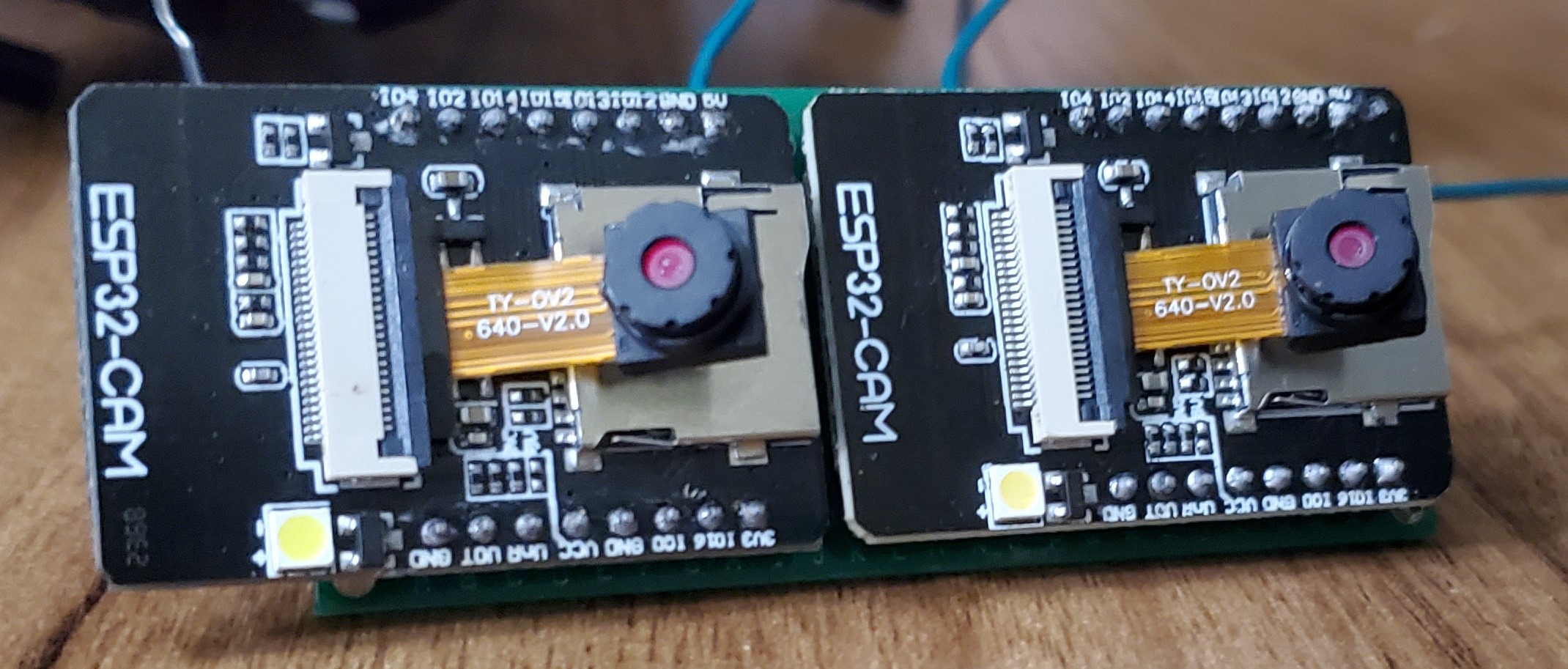
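The last step, turning a measured distance into a command, could look something like the sketch below. The target distance, the deadband, and the command names are assumptions chosen for illustration; the actual thresholds and command format used by the FunkyFollow are not given in this write-up.

```python
def follow_command(distance_m: float,
                   target_m: float = 2.0,
                   deadband_m: float = 0.3) -> str:
    """Pick a follow command from the measured distance to the face.

    target_m and deadband_m are illustrative values, not the project's.
    """
    error = distance_m - target_m
    # Inside the deadband the drone stays put, which avoids jitter
    # from small measurement noise.
    if abs(error) <= deadband_m:
        return "hold"
    # Too far away: move toward the subject. Too close: back off.
    return "forward" if error > 0 else "backward"
```

A deadband is used here so that small fluctuations in the distance estimate do not cause the drone to oscillate between moving forward and backward.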

ESP32 Camera Module

The ESP32 camera module consists of a two-megapixel image sensor and an ESP32-S chip, which has Wi-Fi and Bluetooth communication capabilities. The images from the camera are sent to the web server through WebSockets. The drone carries two camera modules so that the system can achieve stereo vision and better estimate the distance between the drone and the object being tracked. An image of the stereo camera is shown below.
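As a rough illustration of how two cameras can yield a distance, the sketch below uses the standard rectified pinhole stereo relation, depth = focal length × baseline / disparity. This is a textbook model and an assumption on my part about how such an estimate might be made; the function name, parameters, and example numbers are hypothetical, and it presumes the two images are already calibrated and rectified.

```python
def stereo_depth_m(focal_px: float,
                   baseline_m: float,
                   x_left_px: float,
                   x_right_px: float) -> float:
    """Estimate depth from the horizontal shift of one feature.

    focal_px   : focal length in pixels (from calibration, assumed known)
    baseline_m : distance between the two camera centers, in meters
    x_left_px  : feature's x coordinate in the left image
    x_right_px : same feature's x coordinate in the right image
    """
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        # Zero or negative disparity means the match is wrong or the
        # object is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity
```

For example, with an assumed focal length of 800 pixels, a 0.1 m baseline, and a face centered at x = 420 in the left image and x = 380 in the right, the disparity is 40 pixels and the estimated depth is 2 m. Nearer objects produce larger disparities, so accuracy degrades with distance.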

ESP32 Development Board

The ESP32 development board sends PWM signals to our flight controller. It receives the values for these signals from our Tornado web server.

CC3D Flight Controller

The CC3D flight controller receives PWM signals from the ESP32 development board. These signals are processed and then sent to the electronic speed controllers to make the drone fly. The FunkyFollow has three different flight modes: position hold, follow mode, and self-leveling mode. Altitude hold was not achieved because the flight controller does not support it. The flight controller performs PID control and sends different PWM signals based on the current flight mode. It sends pulses with a width between 1000 and 2000 microseconds at a frequency of 50 Hz. Firmware installation was done with the open-source flight controller software LibrePilot.
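To put the PWM numbers in context, the sketch below maps a normalized control value to a pulse width in the 1000 to 2000 microsecond range and computes the corresponding duty cycle at 50 Hz. The function names and the [-1, 1] input convention are assumptions for illustration only.

```python
PWM_MIN_US = 1000   # shortest pulse, in microseconds
PWM_MAX_US = 2000   # longest pulse, in microseconds
PWM_FREQ_HZ = 50    # pulse repetition rate


def stick_to_pulse_us(value: float) -> int:
    """Map a normalized stick value in [-1, 1] to a pulse width in us."""
    # Clamp out-of-range input so the output never leaves 1000..2000.
    clamped = max(-1.0, min(1.0, value))
    span = PWM_MAX_US - PWM_MIN_US
    return round(PWM_MIN_US + (clamped + 1.0) / 2.0 * span)


def duty_cycle(pulse_us: float) -> float:
    """Fraction of each period that the signal is high."""
    period_us = 1_000_000 / PWM_FREQ_HZ  # 20,000 us at 50 Hz
    return pulse_us / period_us
```

At 50 Hz each period lasts 20 ms, so a 1000 microsecond pulse corresponds to a 5% duty cycle and a 2000 microsecond pulse to 10%. A centered stick (value 0) maps to 1500 microseconds.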

Web Client

The FunkyFollow also includes a web client where the user can view the image stream from the drone. Images are first received from the camera modules, then processed and sent to the web page through WebSockets every 50 ms. In the future, I will remove the web interface and stream the drone images to our Android app instead.

This screenshot shows the images received on the website as well as some of the communication occurring on the server.

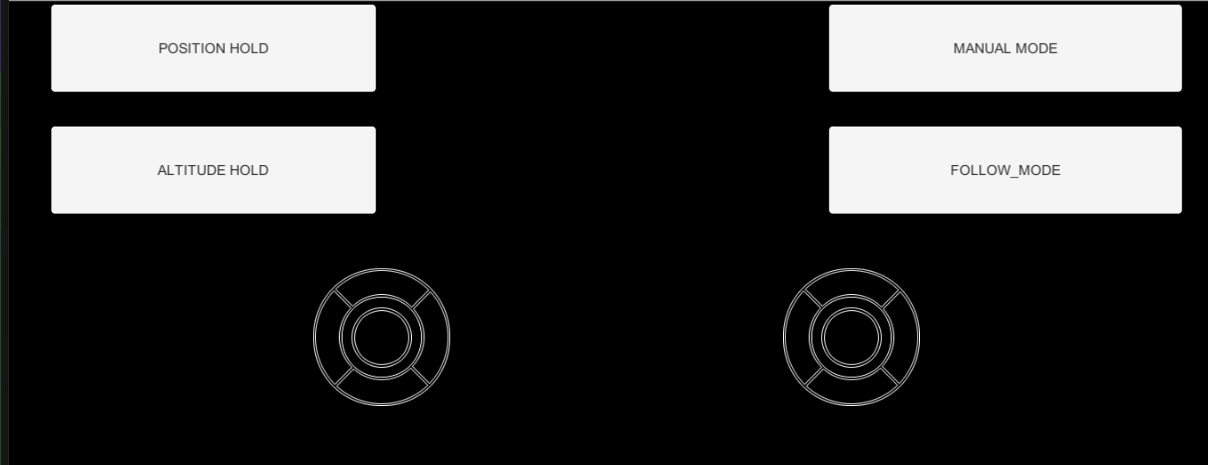

Android Application

I developed an Android app to control the drone. The app currently consists of two joysticks and four buttons. The joysticks send different signals to our server through WebSockets. The server then forwards each message to the ESP32 development board, which sends it to the flight controller as a PWM signal. A screenshot of the application is shown in the picture below.

In the future, I will change the background to show the image stream from the drone. Additionally, I will make the buttons semitransparent so they obstruct the image stream as little as possible.
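The joystick messages described in this section could be carried as small JSON payloads. The sketch below shows one hypothetical format; the field names, the `"sticks"` message type, and the four-axis layout are assumptions for illustration, since the actual message format used by the app is not documented here.

```python
import json

# Hypothetical axis names for two two-axis joysticks.
AXES = ("throttle", "yaw", "pitch", "roll")


def encode_sticks(throttle: float, yaw: float,
                  pitch: float, roll: float) -> str:
    """Serialize joystick positions into a JSON text message."""
    return json.dumps({
        "type": "sticks",
        "throttle": throttle,
        "yaw": yaw,
        "pitch": pitch,
        "roll": roll,
    })


def decode_sticks(message: str) -> dict:
    """Parse a joystick message and return the axis values as floats."""
    data = json.loads(message)
    if data.get("type") != "sticks":
        raise ValueError("not a joystick message")
    # A missing axis raises KeyError, so malformed input fails loudly.
    return {axis: float(data[axis]) for axis in AXES}
```

A text format like this is easy to inspect in server logs while debugging; a more compact binary encoding would also work if bandwidth became a concern.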

Nathaniel Wong

Hi @Kevin Omanwa, how do you achieve the distance estimates to a visual feature / surface using the stereo camera?