-

On masto

02/02/2023 at 00:20 • 0 comments

-

Timeline object completed

07/25/2022 at 14:45 • 0 comments

It's been a while!

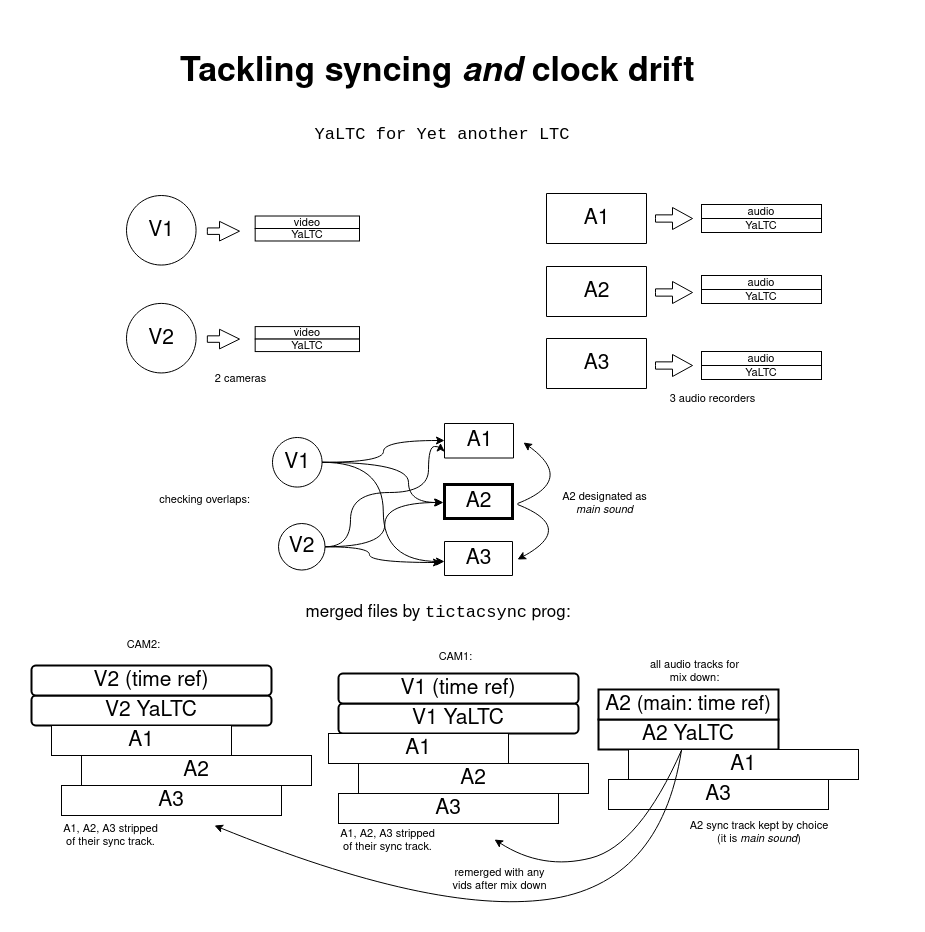

The Timeline object detects time overlaps between audio recordings and videos, then sync-merges each audio recording into the corresponding video.

Next step: processing multi-file recordings (they are recognized but not merged yet).
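A minimal sketch of how such time coincidences could be detected, assuming each recording carries a UTC start time and a duration. The names `Recording`, `overlap` and `pair_up` are hypothetical and are not taken from the dualsoundsync code:

```python
from datetime import datetime, timedelta
from typing import NamedTuple, Optional


class Recording(NamedTuple):
    """Hypothetical container: a file name, its UTC start time and its length."""
    name: str
    start: datetime
    duration: timedelta

    @property
    def end(self) -> datetime:
        return self.start + self.duration


def overlap(a: Recording, b: Recording) -> Optional[timedelta]:
    """Return how long a and b were recording at the same time, or None."""
    latest_start = max(a.start, b.start)
    earliest_end = min(a.end, b.end)
    if earliest_end > latest_start:
        return earliest_end - latest_start
    return None


def pair_up(videos, audios):
    """List (video, audio) name pairs whose recordings overlap in time."""
    return [
        (v.name, a.name)
        for v in videos
        for a in audios
        if overlap(v, a) is not None
    ]
```

Two recordings that never ran at the same time produce no pair, so an unrelated take is simply left out of the merge.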

-

This is getting serious

03/10/2022 at 00:25 • 0 comments

The project has its own domain name.

-

Dedrifting

02/19/2022 at 03:35 • 0 comments

Before any audio is merged to a video (or to a reference audio track, called the main sound), each track is dedrifted with the pysox tempo transform if the detected clock drift results in a delay longer than 5 ms for sound tracks merged together, or 10 ms for audio merged to video.
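The decision rule above can be sketched as follows. This is only an illustration under stated assumptions: the function names are hypothetical, and the drift is assumed to be measured by comparing the duration of a track (according to its own sample clock) with the duration of the same span on the reference. Only the 5 ms and 10 ms limits come from this log.

```python
AUDIO_AUDIO_LIMIT_S = 0.005  # 5 ms: sound tracks merged together
AUDIO_VIDEO_LIMIT_S = 0.010  # 10 ms: audio merged to video


def needs_dedrift(track_duration_s, reference_duration_s, merging_with_video):
    """True if the accumulated drift exceeds the limit for this kind of merge."""
    limit = AUDIO_VIDEO_LIMIT_S if merging_with_video else AUDIO_AUDIO_LIMIT_S
    return abs(track_duration_s - reference_duration_s) > limit


def tempo_factor(track_duration_s, reference_duration_s):
    """Speed ratio that brings the track to the reference duration.

    A factor above 1 speeds the track up (it was too long), a factor
    below 1 slows it down. With pysox, this value would presumably be
    passed to sox.Transformer().tempo(factor).
    """
    return track_duration_s / reference_duration_s
```

For example, a track measuring 600.008 s against a 600 s reference has drifted by 8 ms: enough to be corrected when merged with another sound track, but within tolerance when merged to video.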


-

Code rewrite

02/03/2022 at 20:48 • 0 comments

Pushing the latest updates to Source Hut.

-

Cabling

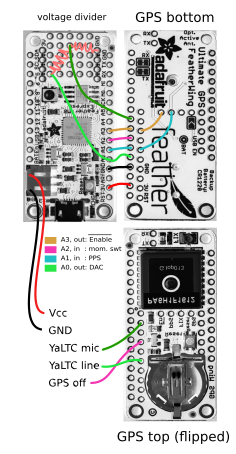

01/22/2022 at 19:42 • 0 comments

Using Adafruit Feather boards.


-

It is now called the Tic Tac Sync!

12/24/2021 at 23:05 • 0 comments

-

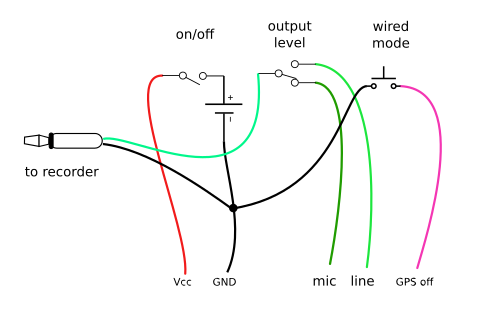

Do you have any reference? I don't want to block any DC

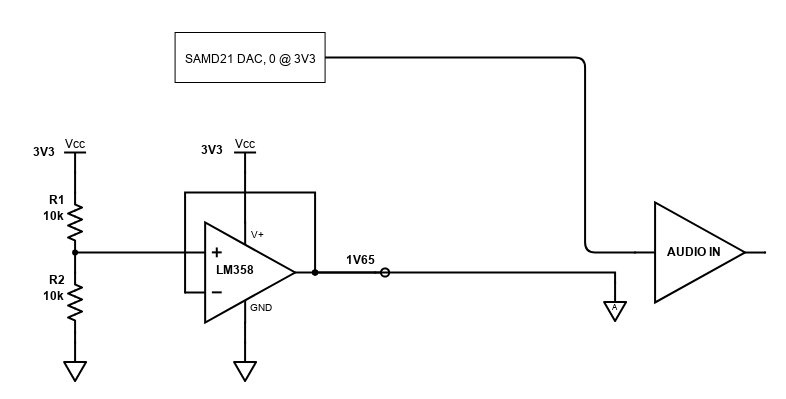

12/20/2021 at 13:04 • 0 comments

The prototyping area of the Feather M0 is handy for building a voltage reference (aka virtual ground) around an LM358:


I know there is a DC-blocking capacitor built into the recorder's audio input stage (or perhaps a DC-servo circuit that tracks the signal mean over time). I fed the 0-3.3 V signal directly into it (hence a signal with a 1.65 V DC offset), but this resulted in ringing and, more importantly, in a lag of almost 500 μs! I'll repeat the measurements with an onboard cap to see if it makes any difference. I'll post data later.

-

Packaging crappy code

11/07/2021 at 02:13 • 0 comments

There's even a PyPI package for my dualsoundsync script!

Repositories are here:

- for postprocessing: dualsoundsync on source hut

- for flashing your own atomicsynchronator: ppssyncYears.ino

How bad is my code?

- It is not commented yet

- test coverage is zero, for now

At least:

- it doesn't crash

- it works (syncing dual system sound recordings)

- it doesn't use print() for logging

-

To do (software side, for dualsoundsync):

- comments and tests

- optional logging

- implement syncing of videos with intermittent audio recordings

- implement syncing of audio with intermittent video recordings

In the long run, build a standalone GUI program (with Qt?).

-

Syncing in the kitchen

10/14/2021 at 13:17 • 0 comments

For now, enjoy this dual-system sound demo. Stay tuned for a multicam demo with Olive and OpenTimelineIO.

Here is the camera recording: image + sync signal (the finger sound is absent; remember, this is a dual-system sound set-up):

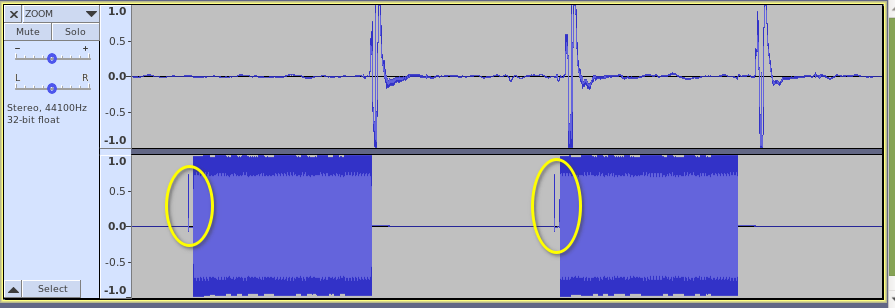

These are the ZOOM H4n Pro recorder tracks. Note the thin spikes in the right channel (the yellow ovals): they are the sync pulses from the GPS, right on the UTC second (the left channel is the finger snapping). Those sync spikes are present in the movie too (by construction).
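To illustrate how such spikes can be located in a channel, here is a small rising-edge detector. It is a sketch only, not the YaLTC decoder: the function name, the threshold and the normalized sample values are assumptions made for the example.

```python
def pulse_onsets(samples, sample_rate, threshold=0.5):
    """Return the times, in seconds, where the signal rises above threshold.

    samples:     sequence of sample values (assumed normalized to -1.0..1.0)
    sample_rate: samples per second
    threshold:   hypothetical detection level, to be tuned on real recordings
    """
    onsets = []
    above = False  # was the previous sample above the threshold?
    for index, value in enumerate(samples):
        is_above = value >= threshold
        if is_above and not above:
            onsets.append(index / sample_rate)
        above = is_above
    return onsets
```

On a clean sync track, successive onsets should sit one second apart, since the pulses fall on the UTC second.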


Postprocessing with dualsoundsync.py, aligning the spikes in the audio recorder file with those in the camera file:

    $ dualsoundsync /media/shooting_files_rep
    scanning rushes_folder
    splitting audio from video...
    splitting audio R and L channels
    trying to decode YaLTC in audio files
    1 of 4: nope
    2 of 4: ZOOM_Rchan.wav started at 2021-10-13 01:45:08.263145+00:00
    3 of 4: nope
    4 of 4: fuji_a_Lchan.wav started at 2021-10-13 01:45:14.361967+00:00
    looking for time overlaps
    forming pairs...
    joining back synced audio to video: dualsoundsync/synced/fuji.mp4


Tadaam, fuji.mp4 automagically synced!
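Once both start times are decoded, the alignment boils down to a subtraction. The sketch below uses the two timestamps printed in the log; the function name `trim_offset_s` is hypothetical and this is not necessarily how dualsoundsync does it internally.

```python
from datetime import datetime

# Start times as printed in the dualsoundsync log
zoom_start = datetime.fromisoformat("2021-10-13 01:45:08.263145+00:00")
fuji_start = datetime.fromisoformat("2021-10-13 01:45:14.361967+00:00")


def trim_offset_s(audio_start, video_start):
    """Seconds to skip at the head of the audio file to line it up with the video.

    A positive value means the recorder started before the camera.
    """
    return (video_start - audio_start).total_seconds()


offset = trim_offset_s(zoom_start, fuji_start)
```

Here the recorder started about 6.1 s before the camera, so that much audio is skipped before joining it to fuji.mp4.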

Atomic Synchronator => TicTacSync

Beyond '67 SMPTE timecode: YaLTC is a new GPS based AUX syncing track format for dual-system sound based on affordable hardware

Raymond Lutz
