-

Not Dead. Really.

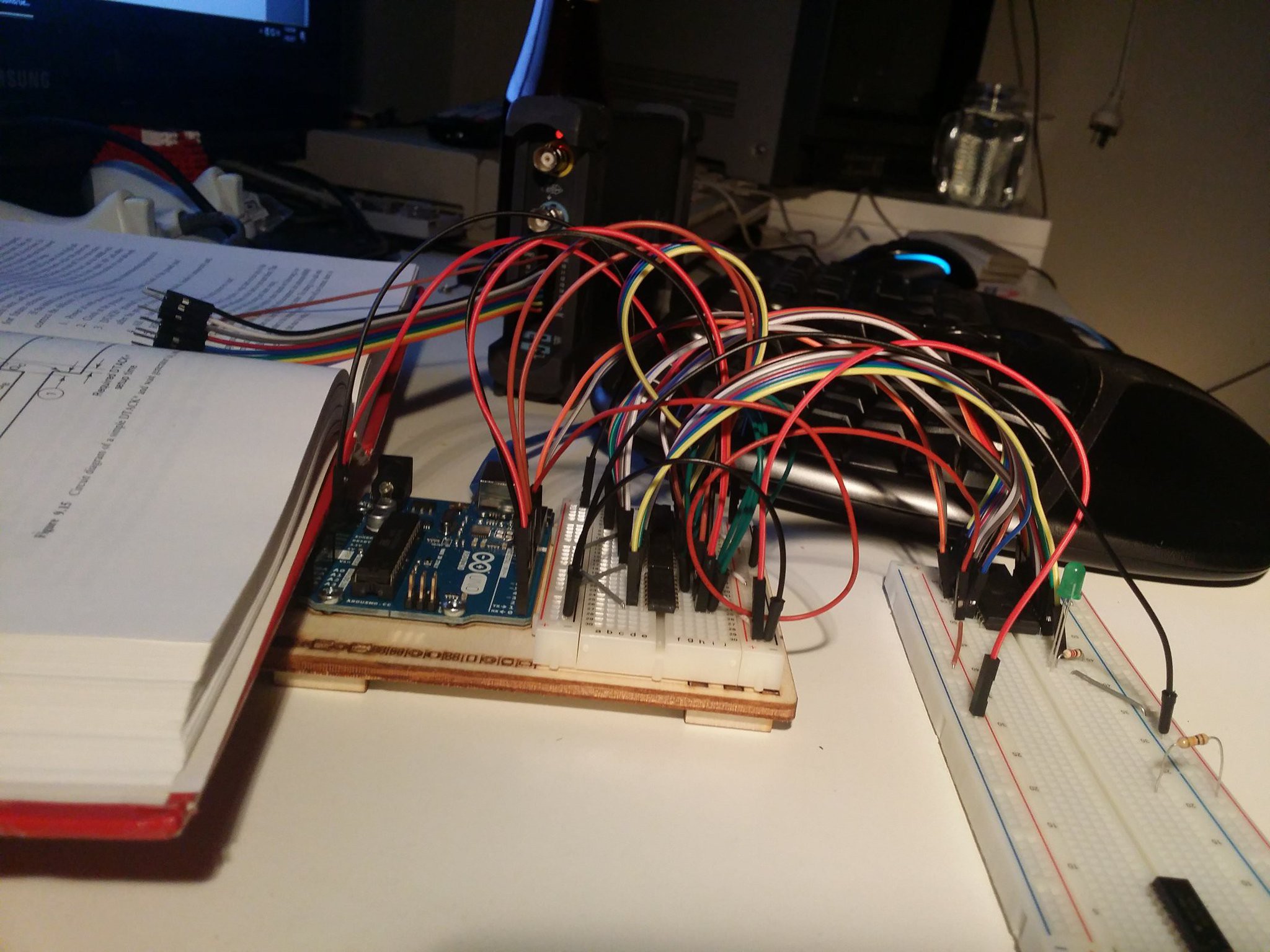

01/29/2019 at 08:55

So this project did stall, but it's not dead. It's still sitting on my desk where it was the last time I really tinkered with it, and it's not been torn down. It stalled because I hit a bit of a bump with it, and then life in general prevented me from addressing things further, until now.

What Caused the Stall?

So after getting the DTACK sorted out properly (see the last project log) I had a kind of working system. I wrote a simple program to the EEPROM which would read and write from RAM in a loop so that I could analyse what was going on with my Logic Analyser and ensure everything was working properly. Where it got confusing is that it appeared to work correctly for a few bus cycles, and then go off the reservation; everything would be fine and then out of nowhere it'd be processing illegal instructions.

Trying to work out what was happening with a 16 bit address bus and 16 bit data bus with an 8 channel analyser definitely proved tricky, but eventually, with the help of an online friend, I realised that I'd crossed a couple of wires on the data bus between the CPU and ROM. Once that hurdle was cleared I was feeling pretty good about things, and instead of writing up something here (which I should have done) I started trying to add an MC68681P DUART to the breadboard, and at that point the whole setup just got 'too flaky' for want of a better term. I've thought about trying to find where the problems on the breadboard circuit are but it felt like a hefty obstacle, and with work and life in general leaving me little free time, the stall happened.

Next Step

It seems to me that getting away from the breadboard will be the key to getting this going again. I've played around with some crystal oscillators to replace the CPLD I was using for a clock, and have started playing around with various tools to generate schematics and some PCBs. I'm planning to simply use pin headers for bus connections and try and get the basic CPU, RAM & ROM combination working on a card or two before I start adding serial again. Having a bus setup will help modularise things and make it easier to add new functionality without impacting old.

Right now I'm wasting a little time trying out different tools again. I liked DipTrace but thought I should make the effort to learn something more "standard", and it's likely a toss-up between KiCad and Eagle, both of which seem to have their pros and cons in terms of UI friendliness. That said, I've tripped over Upverter and it's also showing promise, so who knows.

More to come soon!

-

Fixing DTACK

07/31/2017 at 14:04

So in my last log I said my DTACK# generation was "dumb, but working"; however, it turns out that it was just dumb. Yes, it did work in the sense that it would pull DTACK# low after a configurable delay based on the shift register, but the problem was that it didn't go high again when it should. It simply delayed the signal (we'll call this BCYCLE# as per Alan D. Wilcox's 68000 Microcomputer Systems):

    BCYCLE#: ‾‾‾‾\______/‾‾‾‾‾‾‾‾‾‾‾‾‾‾‾
    DTACK#:  ‾‾‾‾‾‾‾‾‾\______/‾‾‾‾‾‾‾‾‾‾

This might not look too bad on the surface, but really DTACK# should be negated (made high) as soon as the CPU has indicated that it's stored the data on the bus. The effect of not doing so can be seen on sequential bus reads/writes:

                        A  B
    BCYCLE#: ‾‾‾‾\______/‾‾\______/‾‾‾‾‾‾‾‾‾‾‾‾‾
    DTACK#:  ‾‾‾‾‾‾‾‾‾\______/‾‾\______/‾‾‾‾‾‾‾‾

In this second scenario, the first bus cycle completes at time A, but because DTACK# doesn't follow suit it's still asserted low for some time into the next cycle, which starts at time B. The upshot of this was that it appeared continuously grounded after the first cycle in any given run, and using later and later outputs from the shift register had no effect on the operation.
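To see the overlap concretely, here is a minimal Python sketch of the delay-only behaviour. It is a toy model, not a capture from the board: the sample values, the `DELAY` of 5 clock ticks and the helper name `delayed_copy` are all illustrative assumptions.

```python
# Toy model: DTACK# is nothing more than BCYCLE# delayed by a fixed number of ticks.
# 1 = high (negated), 0 = low (asserted). DELAY is an assumed, illustrative value.
DELAY = 5

def delayed_copy(signal, delay):
    """Return the signal shifted right by `delay` samples, idle-high before that."""
    return [1] * delay + signal[:len(signal) - delay]

# Two back-to-back bus cycles, roughly following the diagram above.
bcycle = [1] * 4 + [0] * 7 + [1] * 3 + [0] * 7 + [1] * 9
dtack = delayed_copy(bcycle, DELAY)

# Ticks at which a bus cycle starts (falling edges of BCYCLE#).
cycle_starts = [i for i in range(1, len(bcycle))
                if bcycle[i - 1] == 1 and bcycle[i] == 0]

# Is DTACK# already (still) asserted at the moment each cycle starts?
stale_at_start = [dtack[i] == 0 for i in cycle_starts]
print(cycle_starts, stale_at_start)
```

The second entry of `stale_at_start` is the bug: the second cycle begins with DTACK# still low from the first, so the CPU sees an acknowledge it never waited for.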

Clearing DTACK on Time

I studied the circuit diagram in Wilcox's book and pondered the apparent complexity of it. Initially I'd not seen a good reason for negating various signals and the like, but having encountered and diagnosed the issue just detailed I finally realised what was going on. The circuit in the book (which my solution is now based on; not identical, but close) uses the shift register in a different manner. The core concept revolves around using the clear input of the shift register to reset its output at the moment required.

By holding the serial data input (SDATA) high, the chip's output pins will always be high, except for the first few clock cycles after a reset, during which the 1 values need to propagate through from QA to QH, one clock at a time. This gives us the delay control needed, and an immediate reset to 0 is available by asserting CRCLR# low. The final piece of the puzzle is to realise that these values are the opposite of what's required: by running BCYCLE# through an inverter and the output of the shift register through another, we get the values we desire.

Talking it through: when BCYCLE# goes low, CRCLR# on the shift register will go high, starting the propagation of 1's through the output pins. This gives us a delay based on which pin we tap in to, and by inverting that 1 we'll get a delayed 0 which we can use for DTACK#. When BCYCLE# returns high, indicating the end of the bus cycle, CRCLR# will be driven low, resetting the shift register so that all pins output 0. Again, inverting this drives DTACK# high at essentially the same time as BCYCLE#, rather than after the delay that caused the previous bug.
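The sequence just described can be modelled in a few lines of Python. This is a sketch under assumptions, not the circuit itself: one list element stands for one clock tick, the register is treated as a plain serial-in shift register with an asynchronous active-low clear, `TAP = 2` picks the third output (QC), and the tick counts in `bcycle` are made up for illustration.

```python
# Toy model of a serial-in shift register with an asynchronous active-low clear.
# 1 = high, 0 = low. TAP and the tick counts below are illustrative assumptions.
TAP = 2       # index of the tapped output: 0 = QA, 1 = QB, 2 = QC, ...
STAGES = 8    # QA..QH

def dtack_from_bcycle(bcycle, tap=TAP):
    """Return the DTACK# level for each clock tick of the BCYCLE# samples."""
    stages = [0] * STAGES              # start cleared, for simplicity
    dtack = []
    for level in bcycle:
        clr_n = 1 - level              # inverter: BCYCLE# low releases the clear
        if clr_n == 0:
            stages = [0] * STAGES      # clear asserted: every output drops at once
        else:
            stages = [1] + stages[:-1] # serial input tied high, shift one place
        dtack.append(1 - stages[tap])  # inverter on the tapped output
    return dtack

# Two back-to-back bus cycles.
bcycle = [1] * 3 + [0] * 7 + [1] * 2 + [0] * 7 + [1] * 5
dtack = dtack_from_bcycle(bcycle)

# DTACK# must never be low while BCYCLE# is high.
never_stale = all(d == 1 for b, d in zip(bcycle, dtack) if b == 1)
print(never_stale)
```

Changing `TAP` moves the falling edge of DTACK# later into the cycle, while the rising edge stays locked to the end of BCYCLE#, which is the whole point of using the clear input.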

    BCYCLE#: ‾‾‾\______/‾‾\______/‾‾‾‾‾‾‾
    CRCLR#:  ___/‾‾‾‾‾‾\__/‾‾‾‾‾‾\_______
    QC:      XXXX__/‾‾‾\_____/‾‾‾\_______
    DTACK#:  XXXX‾‾\___/‾‾‾‾‾\___/‾‾‾‾‾‾‾

The key lines to look at above are BCYCLE# and DTACK#; with those alone it's easier to see the difference between this setup and the previous one:

    // Before - DTACK# still asserted during the second cycle
    BCYCLE#: ‾‾‾\______/‾‾\______/‾‾‾‾‾‾‾
    DTACK#:  ‾‾‾‾‾‾‾‾\______/‾‾\______/‾‾

    // After - DTACK# set back to high with BCYCLE#
    BCYCLE#: ‾‾‾\______/‾‾\______/‾‾‾‾‾‾‾
    DTACK#:  XXXX‾‾\___/‾‾‾‾‾\___/‾‾‾‾‾‾‾

-

Talking 'bout my DTACK Generation

07/04/2017 at 12:48

So the 68k CPUs use an asynchronous bus setup: when the CPU asks something else (e.g. RAM) for some data, rather than just assuming the data will be there in a given amount of time, it sits and waits until it's told the data is ready and present on the bus. This signal is called DTACK (Data Transfer ACKnowledge), and you pull it LOW to tell the CPU it's good to go.

For the initial freerunning stage (the part I logged before getting sidetracked by EEPROM programming) this can just be grounded, and if you have fast memory, it could just stay that way. For now I'm implementing a pretty dumb scheme that's in no way optimal. Rather than considering all of the CPU signals that can indicate an address is valid and ready, I'm just taking the easiest option.

The 68k has two control lines called the Upper Data Strobe (UDS) and Lower Data Strobe (LDS), and if either of these is asserted LOW then it's attempting to perform a read/write. Right now I'm taking those two lines, running them through a 7408 AND gate, and pumping the result through another SN74HC595N shift register (the same type I used in the EEPROM programmer) to delay the LOW pulse before passing it back to the CPU on the DTACK pin. Dumb, not optimal, but working. That'll do for now: I know from programming that premature optimisation will bite you in the ass eleven times out of ten, and so far my adventures into hardware have been fraught with more than a few dumb mistakes, so consider this an attempt to mitigate my own stupidity.
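Why an AND gate when the goal is "either strobe"? Because both inputs are active-low, ANDing them gives a low output whenever at least one of them is low. A quick truth table in Python shows it; the function and argument names are just labels for this sketch.

```python
from itertools import product

def combined_strobe_n(upper_n, lower_n):
    """AND of two active-low strobes: the result is low if either input is low."""
    return upper_n & lower_n

# Walk all four input combinations (0 = asserted/low, 1 = negated/high).
table = [(u, l, combined_strobe_n(u, l)) for u, l in product((0, 1), repeat=2)]
for upper_n, lower_n, out_n in table:
    print(upper_n, lower_n, "->", out_n)
```

Only the row with both strobes high gives a high output, so the combined signal is low for the whole of any cycle in which the CPU is using either half of the data bus.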

-

Diversion Diverted.

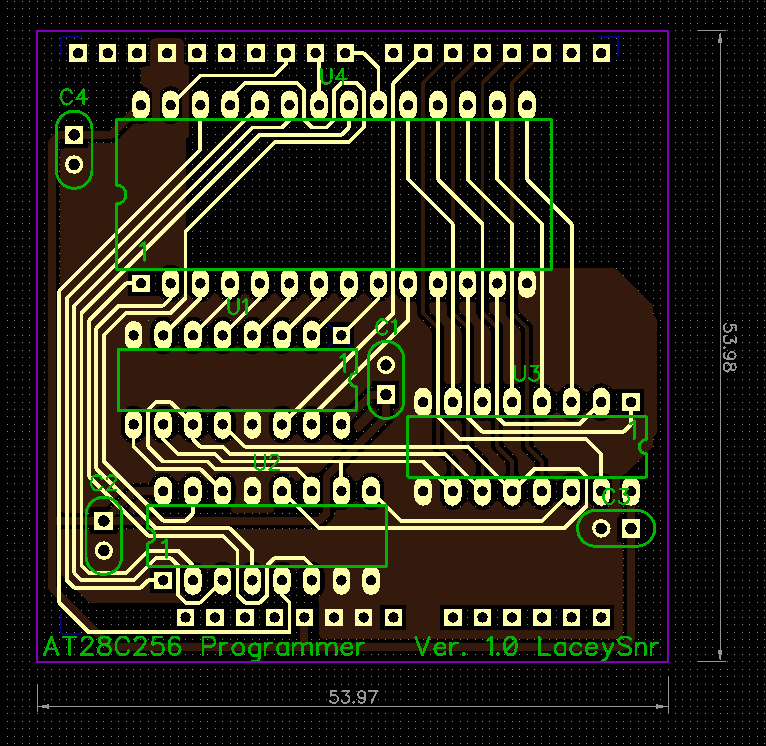

06/26/2017 at 12:22

So the EEPROM rabbit hole was a little deeper than I thought, but it was massively valuable in terms of learning for me. Having gotten the EEPROM programmer working on the Arduino, I decided it'd be good to make it more permanent, which meant making a PCB.

Design

Before this I'd never even designed a PCB, so the first steps were finding out about tools to do both a schematic and then a layout. Having played around with all of the big names out there (Eagle and co.) I realised that almost none of these tools have a particularly intuitive UI. The one I found most friendly for all aspects of the design was DipTrace, and so that was the one I ended up using. Features I particularly liked were the speed at which you could sort out bus lines in the schematic, the net labelling, and the easy schematic->PCB process. Also, for a newbie it made easy work of pattern editing and the like, which I hadn't thought I'd need but came in handy for the ZIF socket.

Overall creating the schematic wasn't too hard, though I did miss a few of the control lines at first, making my first two iterations of the PCB routing useless. Having put those in I went through a few more revisions and although the result isn't the best (it'd look prettier with a full fill each side methinks), it does work.

Manufacture and Assembly

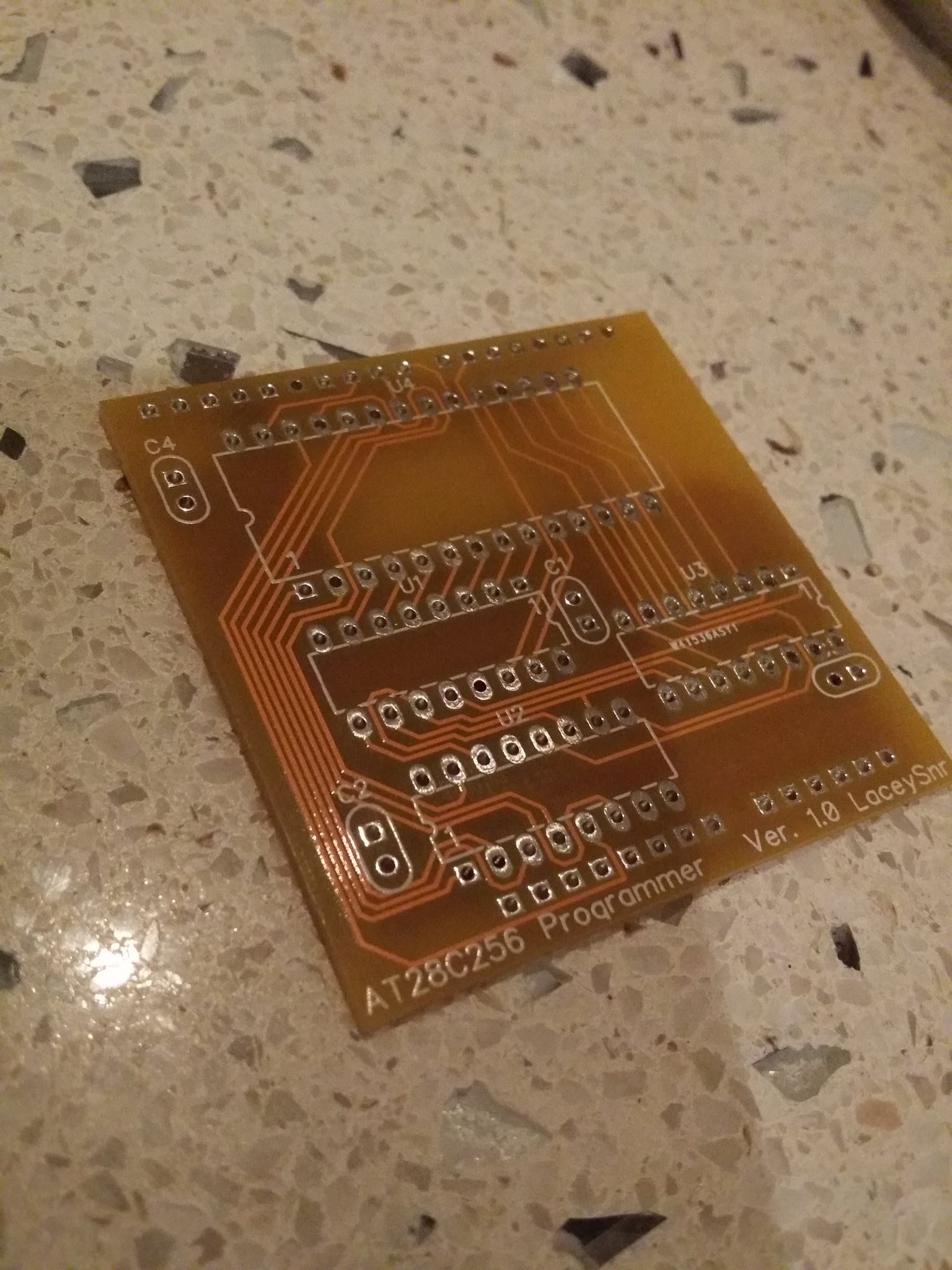

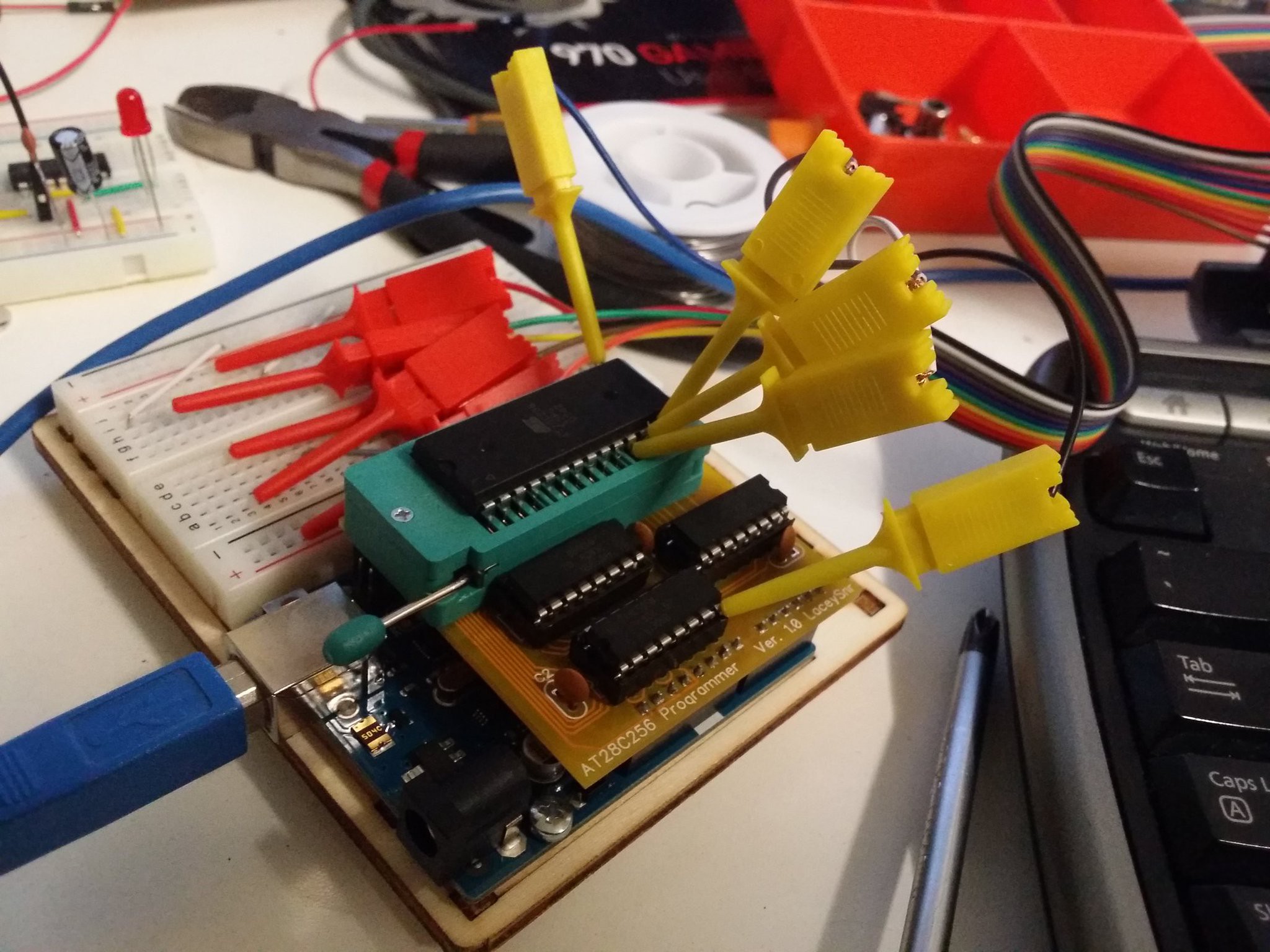

I had the boards made by PCBway and was super happy with them: great price, great speed, and there was some live chat help on hand for a newbie like myself. Receiving the boards was pretty exciting for me as a lifelong software person, though I wasn't convinced they were going to work because in my eagerness I'd completely neglected to check the final routing against my breadboard prototype.

I soldered some sockets onto the board, as well as the pin headers and a few bypass caps, and all in all I'm pretty happy with the result. Not the best soldering I've ever done, but it's the first time I've done a board like this and it was pretty fiddly in places to say the least!

One thing that might be obvious from the above pictures is that I completely underestimated the size of the ZIF socket. I'd not bothered finding a pattern for the silk screen (which would definitely have helped) and instead just printed out the PCB design and eye-balled the width. First off this meant that the socket for U1 is literally against the ZIF socket; it didn't quite stop it going in but it was close, and I thought I'd have to solder the shift register direct to the board. The other thing you may have realised by now is that I didn't consider the length of the ZIF socket: I knew it was only a little shorter than the board, but stuffed up in that there's no room to mount the bypass capacitor for it. I didn't fret over this since the original breadboard version only used two caps (at a far greater distance) and worked fine.

Testing

My first test was a little underwhelming in that attaching my logic probe to the data pins of the EEPROM and trying to read it didn't return the data I was expecting. I immediately assumed the worst and figured I'd made a mess of the schematic, but as I poked around some more I saw that some signals were definitely there, and so started going through things one by one. It didn't take long before I remembered that in doing the PCB routing I'd changed the Arduino pin assignments to make the routing easier (it's just a simple software change after all) and I'd not bothered to update the code at the time. Modifying the code to reflect the new layout took a few minutes and then it worked perfectly, making me a happy bunny indeed.

Files to Come

I doubt anybody is interested, but if they are I'll post the design files up soon along with the Arduino code. The latter needs some work to really be usable, but it gets the job done. It'll never be able to fill the EEPROM completely, as it depends on the ROM image being stored on the Arduino's own controller, which has the same total capacity but with some reserved for the code itself. Still, close enough ;)

-

A Diversion: EEPROM Programming

05/21/2017 at 04:43

Having got the CPU freerunning, I was starting to look at what I needed for DTACK etc. and figured that I should really switch to a 5V CPLD, so I ordered one, and have been waiting for several weeks now for a breadboard compatible socket to arrive... ho hum.

In the meantime I decided to look into what I needed in terms of ROM, and ordered a couple of AT28C256 chips from AliExpress, along with a cheapo programmer. Unfortunately when those arrived I discovered I'd been neglectful and the two weren't compatible! I started to look around for a programmer but they're not cheap, and I quickly came across information from Quinn Dunki and others regarding building their own EEPROM programmers. I had an Arduino Uno lying around so I got stuck in. It didn't take long to get my head around using shift registers, but no matter what I tried I couldn't read anything but 0 back from the EEPROM. A couple of weeks later I finally posted on the electronics StackExchange and someone suggested that my data lines were still being driven by a shift register when I was trying to read. I double checked the wiring and sure enough, I'd accidentally grounded the OE (output enable) pin on the data shift register instead of one of the address ones. As soon as that was resolved, reading was no problem at all.

A schematic will be forthcoming, and I'm toying with getting this made into an Arduino shield to make things easier down the line. Eventually I think I'll steal Quinn's ideas wholesale and stick a microcontroller into the computer so that EEPROM programming can be performed on board.

-

In The Words of Tom Petty, I'm Free Runnin

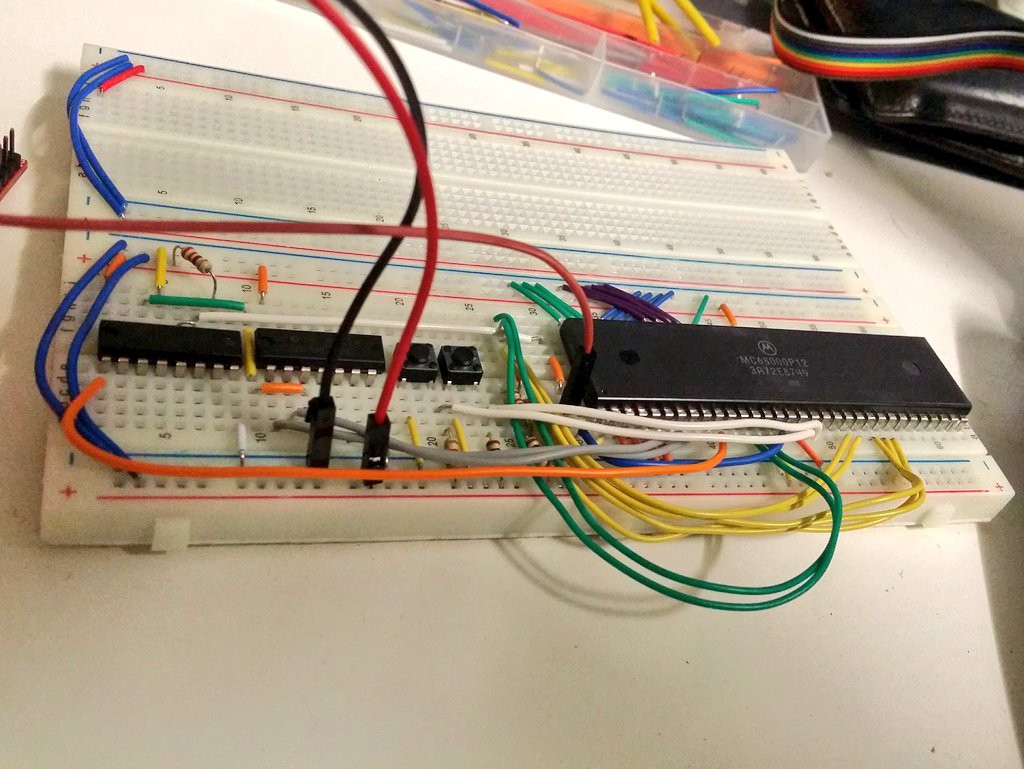

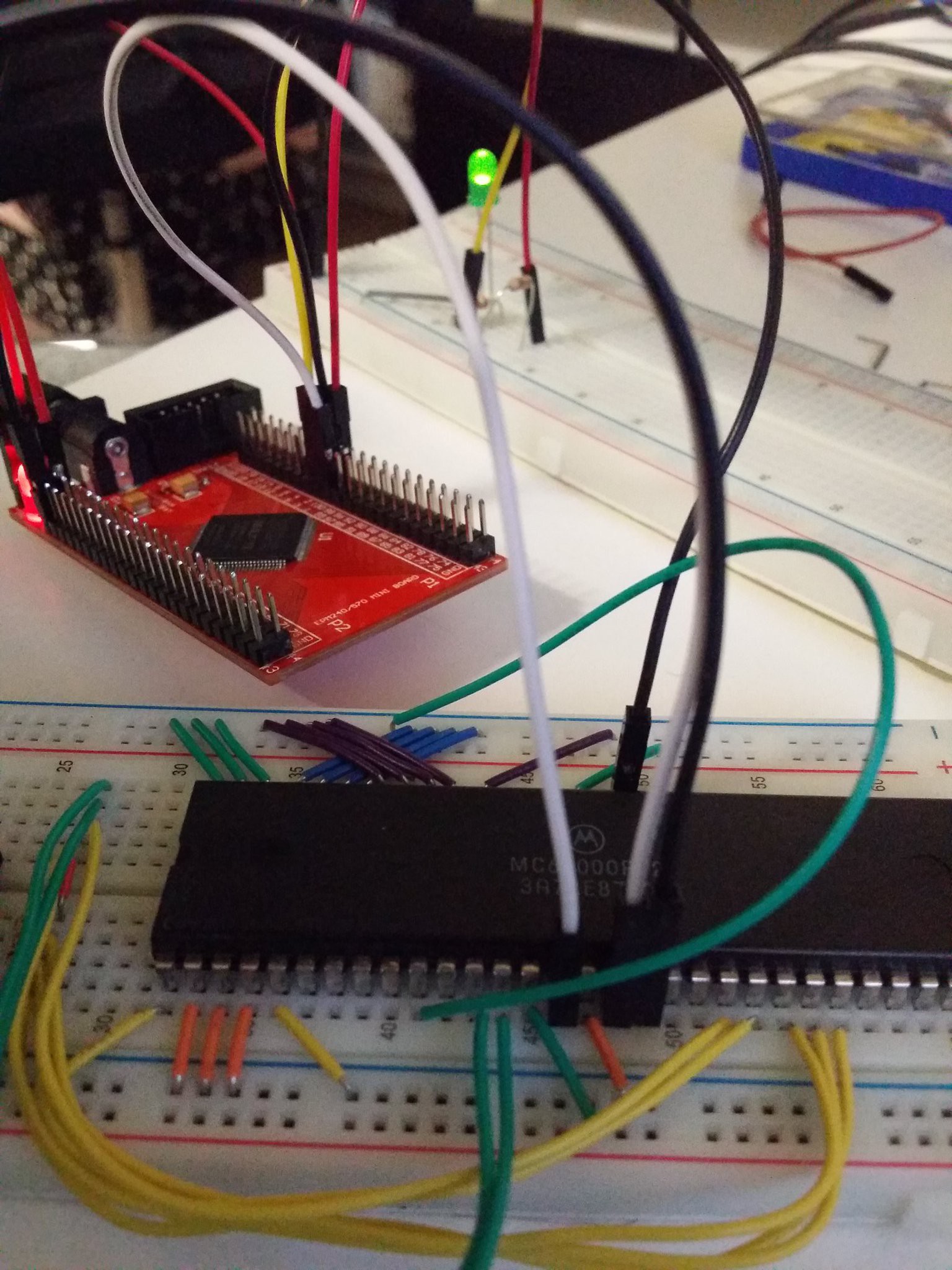

04/16/2017 at 13:12

Well it was close enough, and I don't like that song, but I do like the fact that this old chip is free running on the breadboard now.

What's free running? Well it's basically hooking up the bare minimum to make it work: power lines, inputs all zeroed out (high in some cases), a basic reset circuit and a clock. The key is that the data lines are all grounded, so every word the processor fetches reads as zero, which it executes as the instruction "ORI.B #0,D0": OR the immediate value zero with register D0. The processor runs this, then fetches the next instruction, which is still the same, and so on, cycling through the address space forever. If you hook LEDs to some of the higher address lines you'll see them flash at slower and slower intervals the more significant the address bit they represent.
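How slow is "slower and slower"? A rough estimate can be worked out in Python. The assumptions here are mine rather than measurements from the board: every bus cycle is taken to be a 4-clock word read with no wait states (so the address advances 2 bytes every 4 clocks), and the 8 MHz clock is only a placeholder.

```python
# Rough blink-rate estimate for an LED on address line A<n> while free running.
# Assumptions (not from the log): each bus cycle is a 4-clock, zero-wait-state
# word read, and the 8 MHz clock below is a placeholder frequency.
CLOCKS_PER_BUS_CYCLE = 4
BYTES_PER_BUS_CYCLE = 2

def address_line_hz(n, clock_hz):
    """Frequency of the square wave seen on address line A<n>."""
    bytes_per_second = clock_hz * BYTES_PER_BUS_CYCLE / CLOCKS_PER_BUS_CYCLE
    bytes_per_period = 2 ** (n + 1)  # A<n> is low for 2**n bytes, then high for 2**n
    return bytes_per_second / bytes_per_period

for n in (16, 20, 23):
    print(f"A{n}: {address_line_hz(n, 8_000_000):.3f} Hz")
```

Each step up the address bus halves the rate, so under these assumptions only the top few lines blink slowly enough to follow by eye.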

I'm going to be using an Altera MaxII CPLD to implement some of the glue logic (though this may change due to it using 3.3V logic), and right now it's the oscillator in there that's driving the clock line of the CPU. I'm not sure how long I'll be able to use this CPLD for: it's not rated to drive 5V logic, but given this CPU isn't CMOS it sounds like it might be doable.

The electrical specs for the MC68000P12 CPU say the minimum high level for input is 2V, and its minimum high level for output is 2.5V, so the ranges do overlap with the MaxII, which accepts 1.7-4V as a high input and has an output high of at least the IO supply voltage (which should be 3.3V on this dev board). I'm not an expert, and people may read this and pull their hair out, but as long as things aren't on fire and function correctly then I'll keep on going.
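The overlap argument boils down to two subtractions, sketched here in Python. The voltages are simply the figures quoted above, not values re-checked against the datasheets, and the constant and function names are made up for this example.

```python
# Logic-high sanity check using only the figures quoted in this log (volts).
CPU_VIH_MIN = 2.0    # MC68000: minimum input voltage read as high
CPU_VOH_MIN = 2.5    # MC68000: minimum output-high voltage
CPLD_VIH_MIN = 1.7   # MaxII: minimum input voltage read as high
CPLD_VOH = 3.3       # MaxII: output high, taken as the IO supply voltage

def high_margin(driver_voh, receiver_vih_min):
    """Noise margin for a logic high; a negative value means the levels don't meet."""
    return driver_voh - receiver_vih_min

cpld_to_cpu = high_margin(CPLD_VOH, CPU_VIH_MIN)
cpu_to_cpld = high_margin(CPU_VOH_MIN, CPLD_VIH_MIN)
print(f"CPLD -> CPU margin: {cpld_to_cpu:.1f} V")
print(f"CPU -> CPLD margin: {cpu_to_cpld:.1f} V")
```

Both margins come out positive, which is all the paragraph above claims. The sketch says nothing about whether the CPU's outputs stay under the 4V ceiling on the MaxII inputs; that would need checking on the real hardware.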

-

And we're off.... I think.

04/06/2017 at 07:28

Creating a log just to chart my progress (or lack thereof).

I've got a copy of 68000 Microcomputer Systems: Designing and Troubleshooting and have read through the early parts a few times, as well as reading up on what others have done before. My interest in the 68k is due to it being used in some of my favourite machines, namely the Sega Megadrive/Genesis and the Atari ST.

Over the last couple of years I finally made the leap from higher level languages down to assembly, writing a Columns clone for the Atari Falcon in 68k assembly, and I've always wanted to make an operating system, so I figured why not build a computer to write one for?

My free time is limited, my hardware foo is lacking, but I'm chipping away. First stage: get this sucker free running!

Matt Lacey
