-

Some lossy video compression

10/22/2018 at 16:27 • 0 commentsJust found on had.com:

LordNothing says:

October 22, 2018 at 8:51 am

block formats are pretty easy to do but i dont think there is any support for that in the display hardware. like being able to use dxt1 at four bits per pixel, a 4x improvement over raw 16 bit color data. for every 4×4 block of cells, select the lightest and darkest 2 pixels in the block, sticking those into a color table, generate 2 interpolated pixels. those four colors become a color table and the pixels are stored as 2-bit indices. decompression is much faster as it just needs to do the interpolation step and from that it can assign color values to the pixels on the screen so the demands on the display controller would be minimal. it just has to exist first.

So given the min and max values of pixels in a square, some relatively decent compression is possible, which directly benefits from the σα code. The system is a bit more complex because there are 2 layers of compression, with very different characteristics, but the compression ratio will be better in some pathological cases such as noise or other very fine details. -

To reverse or to not reverse

05/30/2017 at 04:21 • 0 comments20170530:

The reversing of the encoded number comes from the following assumptions:

- Colors are often "saturated", often very close to zero or close to max, few "pastels" between 25% and 75%

- This is reinforced when a block is adjusted for minimum and maximum values : at least one sample will be 0 and another will be max (which ?)

- The "very high and very low" scenario also happens with relative/delta/differential coding where hopefully there are mostly low-amplitude values, and the positive values wrap around to very high values. Low amplitudes with negative values will use fewer bits

However,

- The reversal at the middle is totally arbitrary. Nobody can be sure that these heuristics will happen "most of the time".

- This reversal could be under-efficient with different kinds of data sets.

- The amplitude reversal requires a subtraction in the critical datapath, which slows things down (a bit).

The "safe answer" is to say : measure and compare various scenarii. But there is a catch: with no reversal, there is still something to compute, in this case a-S.

Considering that S=a+b we get a-S=a-a-b=-b

Oh wait, we have a negative number now ?

If there is no reversal, there still is some computation ( -x = ~x +1 and incrementation is faster than full addition but not by significantly much)

-

Extension to unequal boundaries

05/27/2017 at 04:14 • 0 commentsThe algorithm presented on the project description is, in fact, a special case where both addends have the same range.

In the colorspace decomposition algorithm however, there are 3 addends, with the same boundaries but the intermediary sum Cgb has twice the range of the R component. Combining R and Cgb creates a new set of problems...

First : one addend's boundary is larger than the other. The algorithm requires a clear ordering to work, so we must swap the operands in a preliminary step, if the order is not respected.

0 ≤ a ≤ m, 0 ≤ b ≤ n, 1) initial setup: S = a + b, l = m + n 2) operand swap: if (m < n) then swap (a, b), swap (m, n) fi

(edit 20170607: removed o and p because they were useless)

Now that it's out of our way, we can focus on what to do with these data and what happens to them.Let's focus on the data table of the Project Details Page.

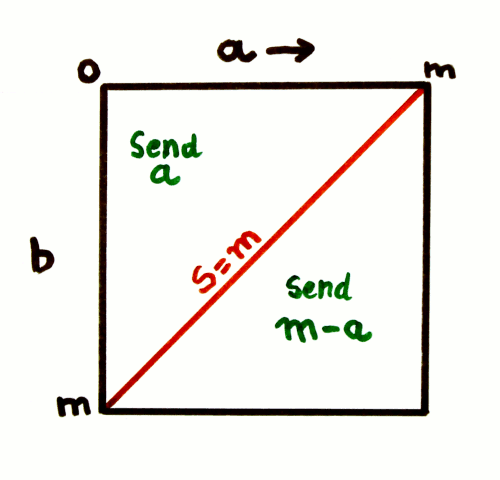

What this amount to, is: a square of side n, cut in half at the diagonal (that corresponds to S==n). One half of the square has a normal numbering of the columns, the other half has a reverse numbering.

![]()

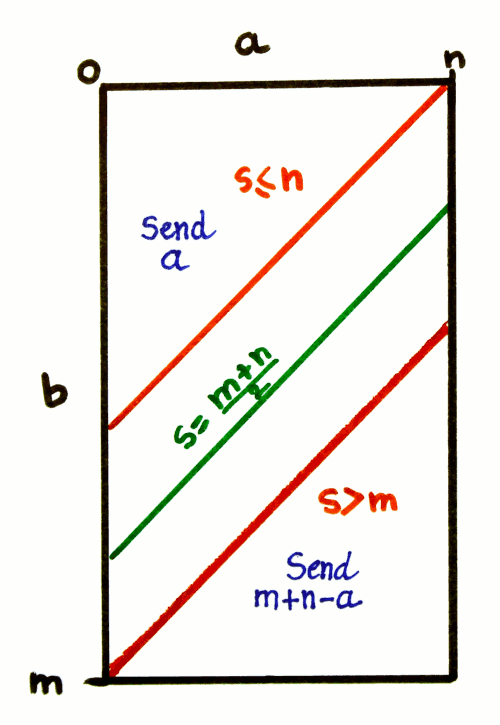

What happens when m > n ?

In a geometric sense, this amounts to cutting the square at the S=m diagonal and moving the lower/reversed part away from the higher part, creating a new zone between them.

![]()

The diagonal of the square is the place where two things happen:

- the operand is reversed: a becomes m-a

- the range of a is also reversed

However when the limits are different, these two things do not happen at the same place anymore.

- The reversal of a must occur at the middle of the rectangle, so there are as many direct codes as there are reversed codes. This does not occur at n or m, but in the middle:

if ( S > l/2) then a = l/2 - a; fi

- The range decreases at S>m, increases at S≤n, but between these bounds, it does not change: there is a saturation region.

if (S < n) then range = S (increase) else if (S > m) then range = l - S (decrease) else range = n (saturate) fi fi

These tests can be run in parallel, but also in series, because the conditions are co-dependent: n < l/2 < m. The code segments can be merged:

3) Reversal: if (S ≤ l/2) then range = S else range = l - S a = l/2 - a fi 4) saturation if (range > n) range = n fiOf course, the decoding is symmetrical, it reuses the same formulas to compute the requested range and adjust a.

Conclusion

This general algorithm works for m=n, and the special case presented in the main page is just an optimisation and simplification. The special case is faster and more compact but the general case is more powerful and potentially more efficient.

In the context of 3R, when m != n, the trivial approach for encoding one of the data is to send the one with the smallest range. The above algorithm goes further:

- it reverses the encoded number, which makes it easier eventual further entropy coding steps

- it reduces the range of the encoded number, when it is known to not exceed an implicit limit.

Taken together, these elements significantly increase the packing density with no pathological case or increase of the original data (to be demonstrated). These improvements would be impossible without the knowledge of the respective limits of the operands.

Yann Guidon / YGDES

Yann Guidon / YGDES