Interacting with the touchscreen while driving is far more distracting than just glancing at the phone to check a message. The driver has to focus on a small section of the screen, then check that their hand is in the right location before pressing down. When typing out a text message, this sequence is repeated many times. Sending even a short text message can take the driver's focus off the road for many seconds at a time. If the driver could interact with their phone without using the touchscreen, it would at least lessen the distraction and could end up saving lives.

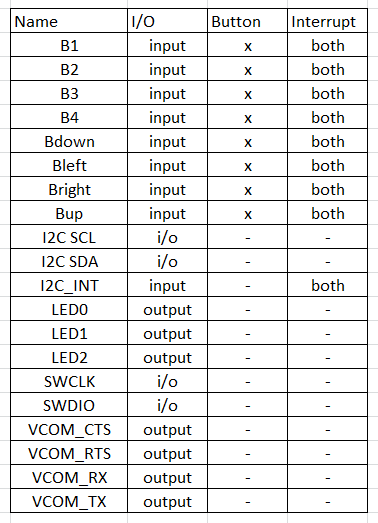

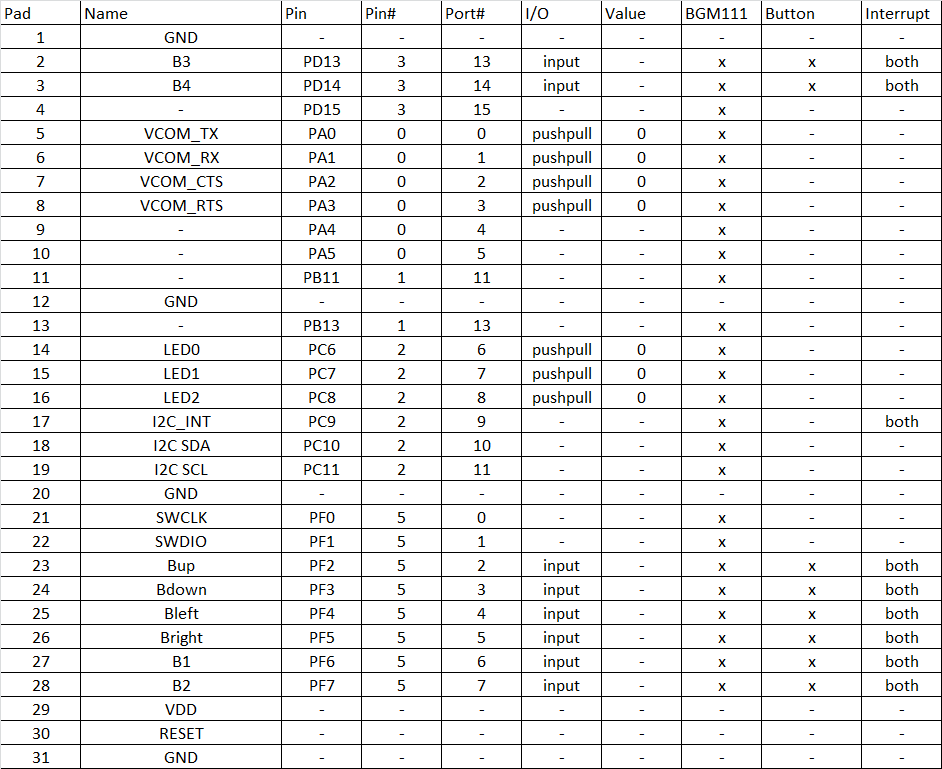

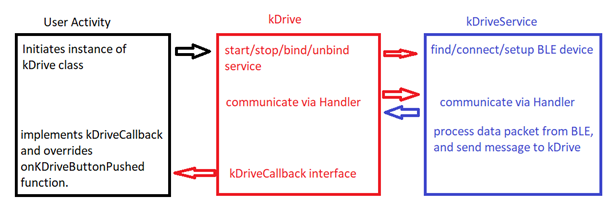

This product is a small keyboard-like device that can be strapped to a steering wheel or mounted somewhere else in the car. It allows the driver to use tactile feedback to interact with their phone without looking at the screen. The device has several buttons that can be distinguished by feel and that allow the driver to navigate through menus, activate voice control, and perform pre-programmed actions. Android and Apple devices already support using Bluetooth keyboards to navigate and to provide input actions such as launching apps and filling out text forms. The device will also work with a companion application that lessens the distraction for common tasks such as texting, initiating phone calls, entering navigation destinations, and selecting music.
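As a rough illustration of the Bluetooth keyboard idea, the sketch below maps a few tactile buttons to standard HID keyboard reports, the 8-byte format a keyboard sends to its host. The key codes are the standard USB HID keyboard usage IDs, but the button names, the `BUTTON_MAP` layout, and the `key_report` helper are assumptions made for this example, not the device's actual firmware. How a phone reacts to each key also depends on the operating system and the app in focus.

```python
# Standard USB HID usage IDs from the Keyboard/Keypad usage page (0x07).
KEY_ENTER = 0x28
KEY_ESCAPE = 0x29
KEY_TAB = 0x2B
KEY_RIGHT = 0x4F
KEY_LEFT = 0x50
KEY_DOWN = 0x51
KEY_UP = 0x52

# Modifier bit for Left Shift in the first byte of the report.
MOD_LEFT_SHIFT = 0x02

# Hypothetical button layout: button name -> (modifier bits, key code).
# The names and the choice of keys are assumptions for illustration only.
BUTTON_MAP = {
    "next": (0x00, KEY_TAB),
    "previous": (MOD_LEFT_SHIFT, KEY_TAB),
    "select": (0x00, KEY_ENTER),
    "back": (0x00, KEY_ESCAPE),
    "up": (0x00, KEY_UP),
    "down": (0x00, KEY_DOWN),
    "left": (0x00, KEY_LEFT),
    "right": (0x00, KEY_RIGHT),
}

# An all-zero report tells the host that every key has been released.
RELEASE_REPORT = bytes(8)


def key_report(button: str) -> bytes:
    """Build the 8-byte boot keyboard report for one button press.

    Layout: [modifiers, reserved, key1, key2, key3, key4, key5, key6].
    Only the first key slot is used because one button is pressed at a time.
    """
    modifiers, keycode = BUTTON_MAP[button]
    return bytes([modifiers, 0x00, keycode, 0, 0, 0, 0, 0])
```

A press would be sent as `key_report("select")` followed by `RELEASE_REPORT`, so the host sees a key-down and then a key-up.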

It's unfortunate that we can't stop people from interacting with their phones while driving. It is becoming more and more common as we become more reliant on our phones. Many people who drive for ride-sharing services even rely on that interaction to pick up a fare. The goal of this product is to reduce the distraction that is caused by interacting with cell phones while driving. A few seconds of distraction is all it takes to cause an accident or to miss a potential danger. If we can keep the driver's focus on the road, it could save thousands of lives every year.

Kyle Thomas

Daniel Grießhaber

Supplyframe DesignLab

Laura
