With a CNC, the main goal is to move a platform to a location. This location can be specified in different coordinate systems. For now I'm limiting the aim to a 2D plane. Most hobby CNCs use the two drive axes as the basis of the CNC's coordinate system. From a user's perspective, we want to reproduce what we see on screen in the physical world. On screen the basis vectors are orthogonal, so we expect the CNC's basis to be orthogonal as well. This usually means careful construction and calibration to ensure the two drive axes are orthogonal. On screen the units are also constant, forming a uniform grid, so the CNC also has to take uniform 'steps', which is usually achieved with stepper motors.

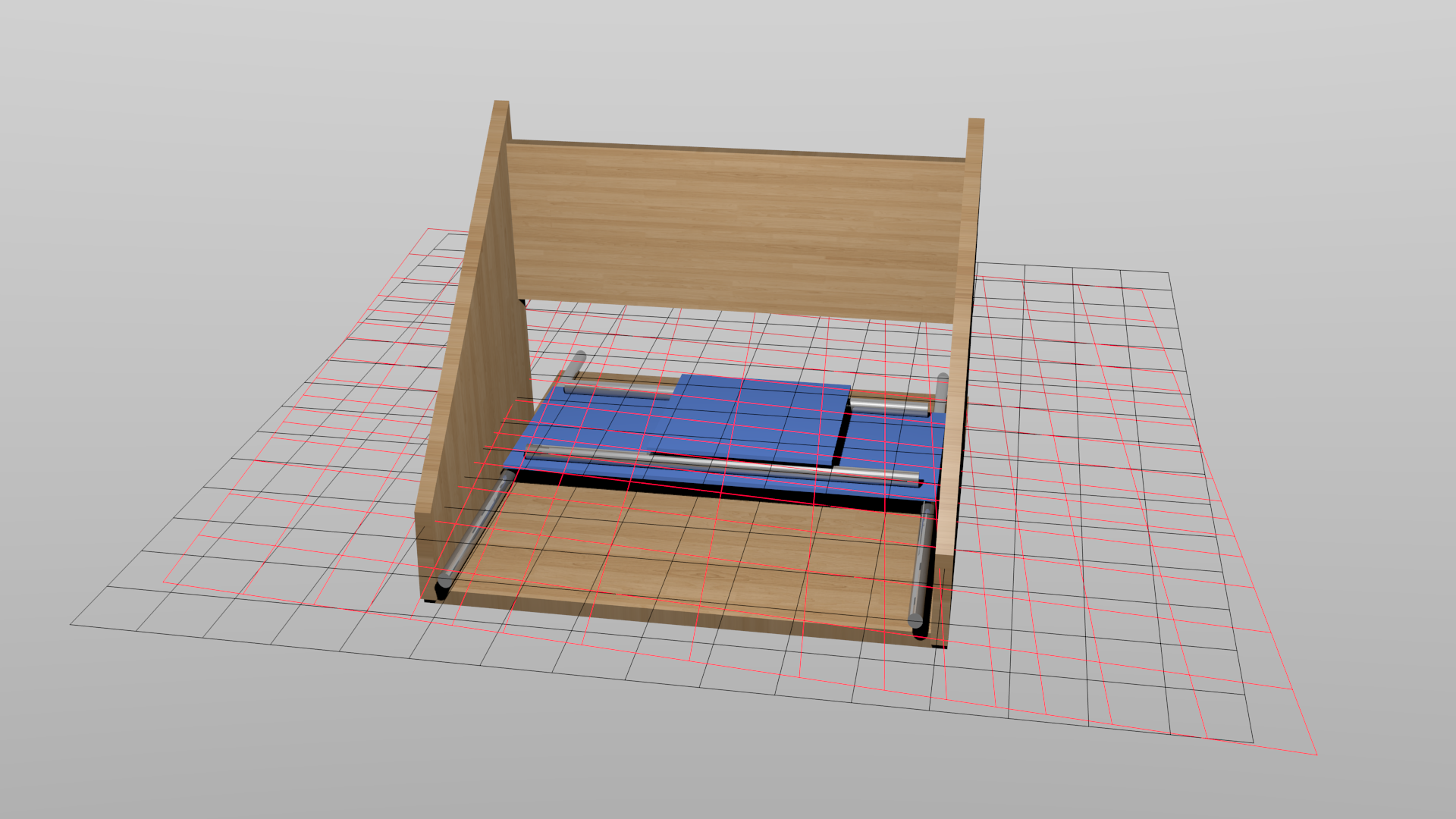

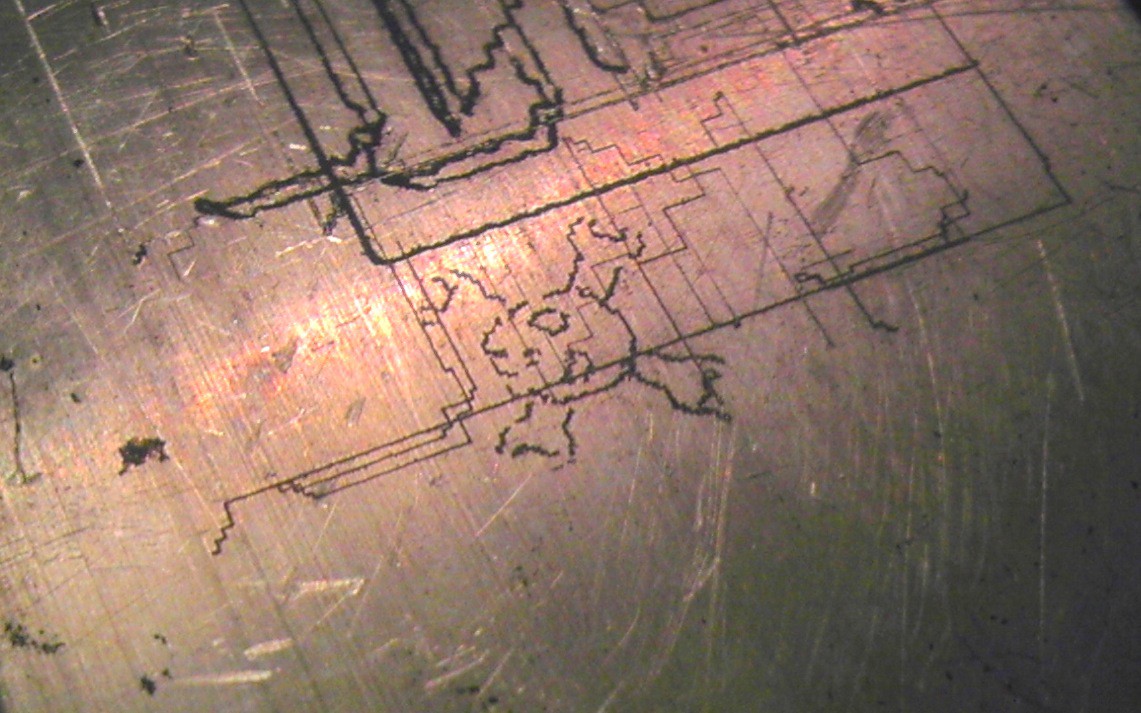

With limited resources I was unable to construct a CNC that meets these requirements. Below is a visualization of the desired grid (black) and the grid from the uncalibrated hardware (red):

It might be slightly exaggerated, but it makes the point. If the CNC were used as is to plot an item without calibration, the output would be badly distorted.

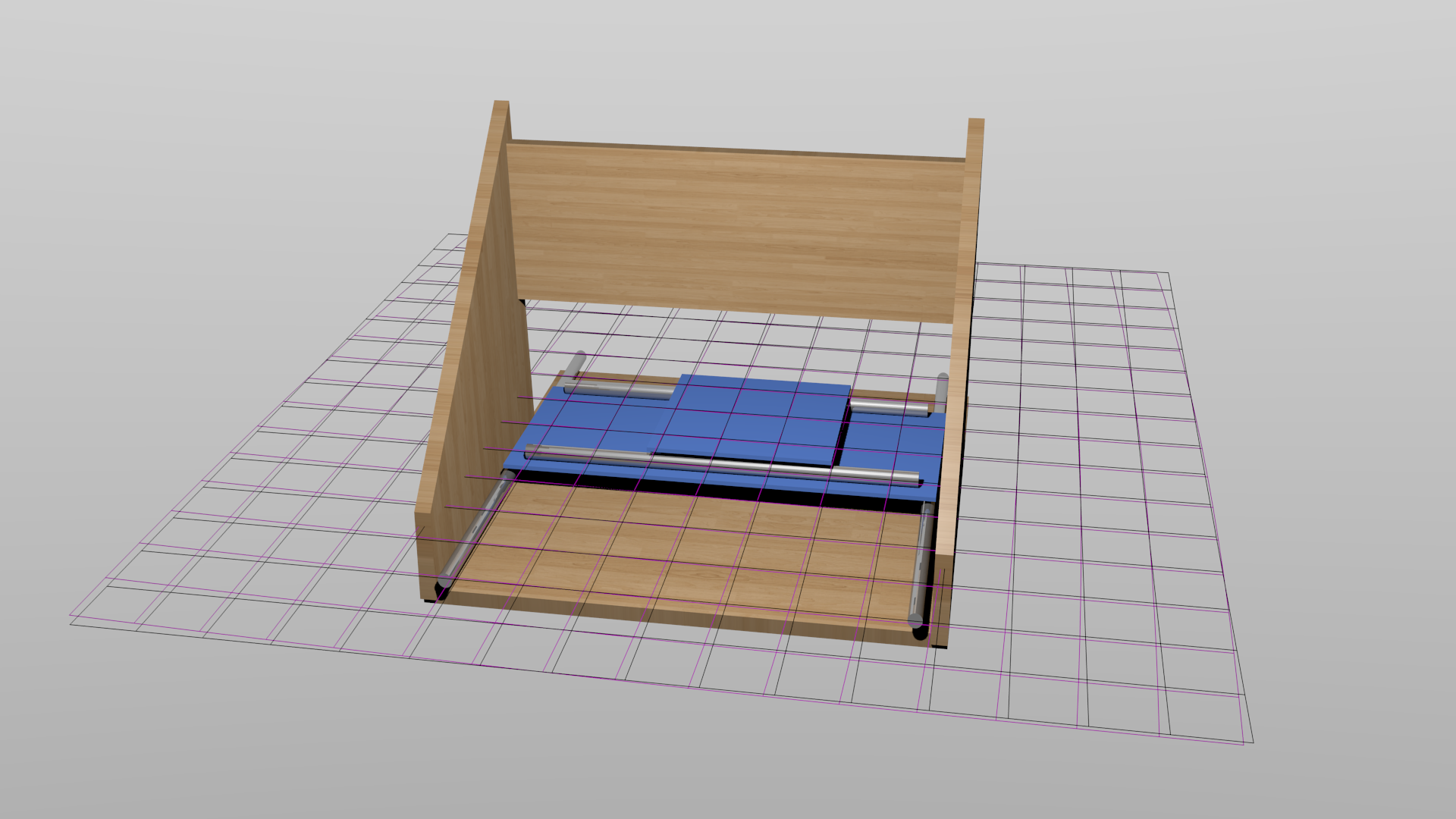

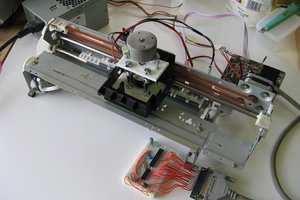
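To put rough numbers on that distortion, here is a minimal sketch of how a skewed, unevenly scaled pair of drive axes maps commanded steps to a real position. The skew angle, the step sizes and the function names are all made up for illustration; they are not measurements from my hardware.

```python
import math

# Hypothetical hardware errors, chosen only to illustrate the effect.
SKEW_DEG = 5.0   # how far axis B leans away from a true 90 degrees
STEP_A = 1.0     # distance moved per step on axis A
STEP_B = 0.9     # distance moved per step on axis B (not equal to A)


def machine_to_world(steps_a, steps_b):
    """Map step counts on the two drive axes to the real (x, y) position.

    Axis A is taken to lie along x. Axis B is tilted SKEW_DEG away
    from the ideal y direction, so it leaks a little into x.
    """
    skew = math.radians(SKEW_DEG)
    x = steps_a * STEP_A + steps_b * STEP_B * math.sin(skew)
    y = steps_b * STEP_B * math.cos(skew)
    return x, y


def world_to_machine(x, y):
    """Inverse mapping: the steps needed to reach a real (x, y) position."""
    skew = math.radians(SKEW_DEG)
    steps_b = y / (STEP_B * math.cos(skew))
    steps_a = (x - steps_b * STEP_B * math.sin(skew)) / STEP_A
    return steps_a, steps_b


if __name__ == "__main__":
    # Asking for the corner of a 100 x 100 square lands somewhere else.
    print(machine_to_world(100, 100))
```

If the skew and step sizes were known exactly, `world_to_machine` would be enough to correct the plot. In practice they are not known and may not even be constant across the bed, which is why measuring the position directly is attractive.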

To solve this I added a feedback element, which allows me to use the basis of the feedback device instead of the basis of the CNC. The feedback element I chose was the humble webcam: an iSight stripped out of a long-expired iMac. My experience using these cameras with OpenCV tells me that their spatial accuracy is impeccable. There is very little distortion, and even if there were, OpenCV has a plethora of information on removing it. So the projected grid of the camera becomes the reference for my CNC. A visualization with the ideal (black) and camera (purple) grids:

The two grids are close enough for now, so let's start implementing this in software. The aim is to move the platform to a location observed by the webcam. All this might be a bit confusing right now, but I'm sure a bit of code and a video will clear it all up.
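As a rough illustration of the idea (a simulation, not the project's actual code), the loop below keeps looking at where the platform is, compares that with the target, and nudges the motors until the two agree. `SkewedMachine`, `observe`, `move_to` and every number here are hypothetical; on real hardware `observe` would be replaced by locating the platform in the webcam frame.

```python
import math


class SkewedMachine:
    """Simulated plotter with skewed, unevenly scaled axes (made-up values)."""

    def __init__(self, skew_deg=8.0, step_a=1.0, step_b=0.85):
        self.skew = math.radians(skew_deg)
        self.step_a = step_a
        self.step_b = step_b
        self.x = 0.0
        self.y = 0.0

    def move(self, da, db):
        """Drive axis A by da steps and axis B by db steps."""
        self.x += da * self.step_a + db * self.step_b * math.sin(self.skew)
        self.y += db * self.step_b * math.cos(self.skew)

    def observe(self):
        """Stand-in for the webcam: report the platform position in camera units."""
        return self.x, self.y


def move_to(machine, target, gain=0.7, tol=0.05, max_iter=100):
    """Step toward target using only observed positions.

    The controller naively assumes the axes are orthogonal and uniform.
    Each pass corrects part of the remaining error, so the mistakes in
    that assumption shrink away instead of accumulating.
    Returns the number of corrective moves that were needed.
    """
    for i in range(max_iter):
        px, py = machine.observe()
        ex, ey = target[0] - px, target[1] - py
        if math.hypot(ex, ey) < tol:
            return i
        machine.move(gain * ex, gain * ey)
    raise RuntimeError("did not reach the target within max_iter moves")


if __name__ == "__main__":
    cnc = SkewedMachine()
    moves = move_to(cnc, (100.0, 50.0))
    print(moves, cnc.observe())
```

The point of the design is that the controller never needs to know the skew or the step sizes. As long as each move goes roughly in the right direction, the camera's grid is the one that defines where the platform ends up.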