Presentation: here

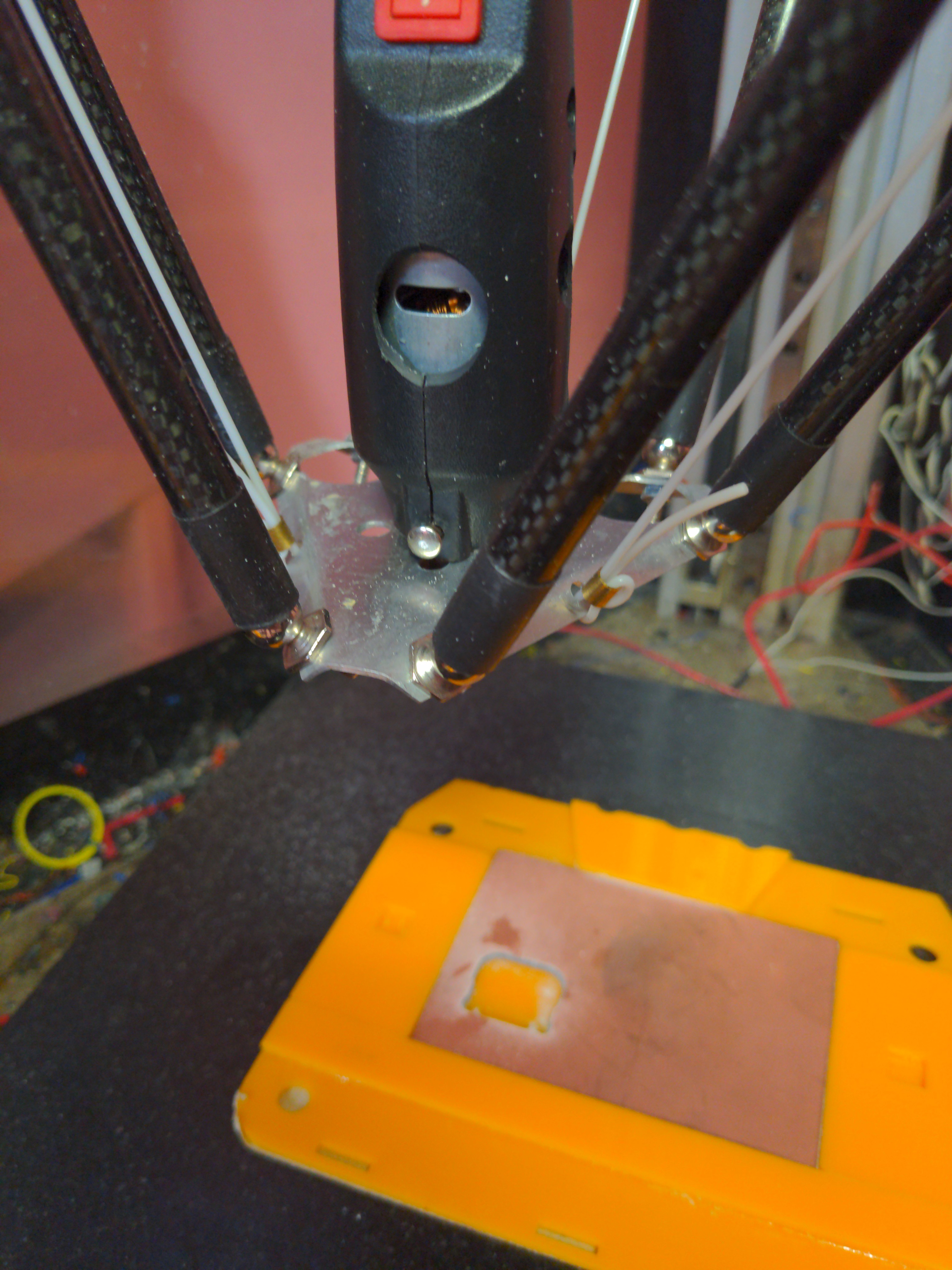

Hardware is done, at least until testing reveals issues with it.

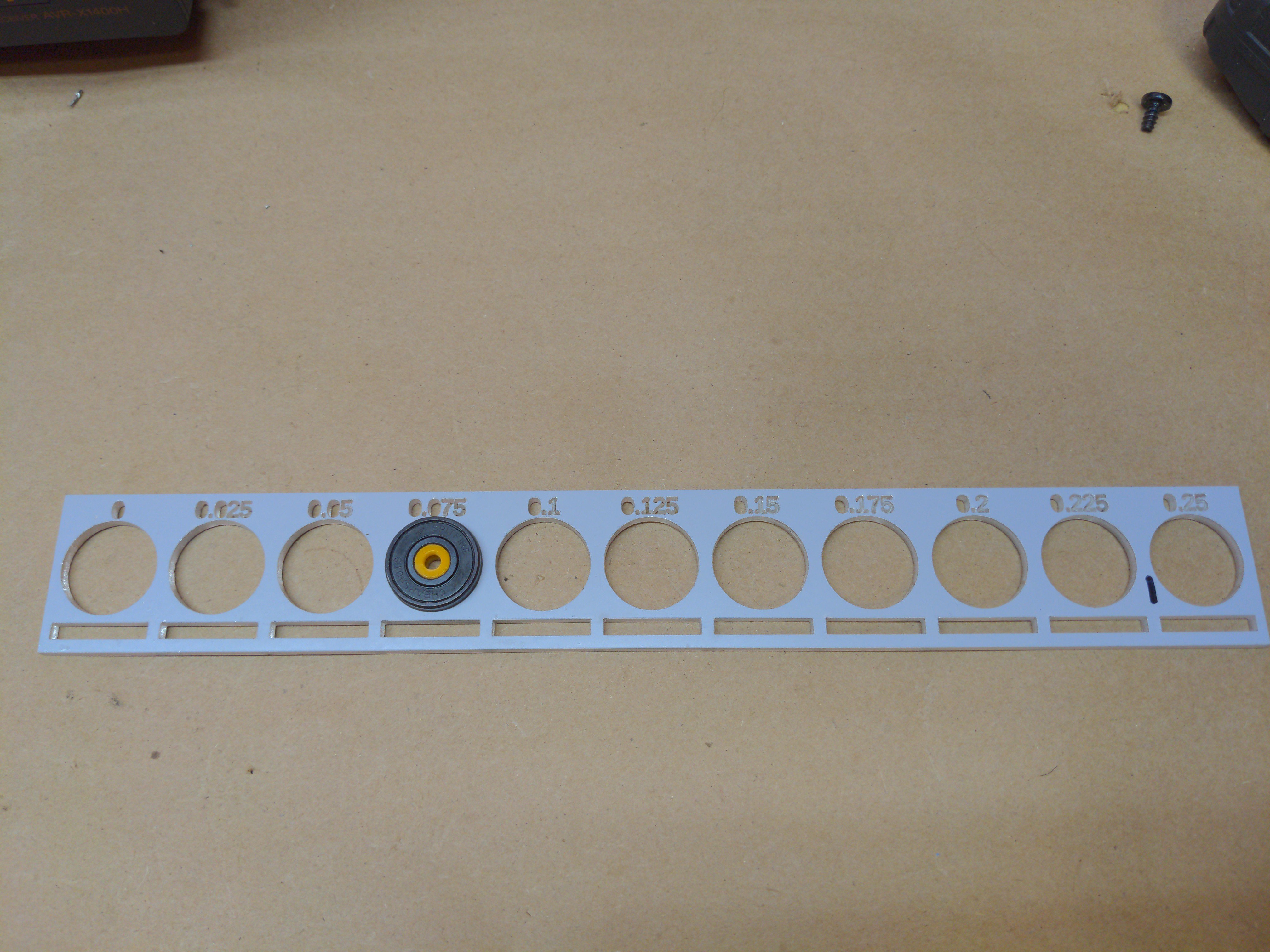

Source and rendered files are up on GitHub. Laser-cut files are here. 3D-printable files are here.

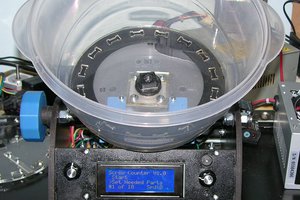

So far you can drive this with a BLDC gear motor, a small worm-drive gear motor, or a NEMA 23 stepper.

The torque requirement is 15 to 30 kg·cm and the speed requirement is 22 to 30 rpm.

We need to deliver a faster inhale stroke than previously specified, so the torque requirement is now 30 kg·cm and the speed requirement is 48 rpm. This may put it beyond what our (cheap) NEMA 23 can do, and probably rules out my first pick for the BLDC motor as well. The previous inhale time was 1.5 s, and I picked the parts based on 1 s. The actual time from the UK document is now 0.3 s. Ooof. Everything gets harder.
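To see what the new numbers mean for motor sizing, here is a rough sketch of the shaft power P = τ·ω at the old and new operating points. It assumes the torque figures are kilogram-force centimetres (kg·cm) at the output shaft, and it ignores gearbox efficiency and peak-versus-continuous ratings, so the motor's electrical input will need to be noticeably higher than these values.

```python
import math

G = 9.80665  # standard gravity, m/s^2 (1 kgf = 9.80665 N)


def kgcm_to_nm(torque_kgcm: float) -> float:
    """Convert torque in kilogram-force centimetres (kg·cm) to newton-metres."""
    return torque_kgcm * G / 100.0


def rpm_to_rad_s(rpm: float) -> float:
    """Convert shaft speed in revolutions per minute to radians per second."""
    return rpm * 2.0 * math.pi / 60.0


def shaft_power_w(torque_kgcm: float, rpm: float) -> float:
    """Mechanical output power P = torque * angular speed, in watts."""
    return kgcm_to_nm(torque_kgcm) * rpm_to_rad_s(rpm)


if __name__ == "__main__":
    old = shaft_power_w(30, 30)  # top of the original spec: 30 kg·cm at 30 rpm
    new = shaft_power_w(30, 48)  # revised spec: 30 kg·cm at 48 rpm
    print(f"original spec: {old:.1f} W, revised spec: {new:.1f} W")
```

This prints roughly 9.2 W for the original spec and 14.8 W for the revised one. At constant torque, power scales linearly with speed, so going from 30 to 48 rpm asks 1.6 times as much of the motor.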

Electronics and sensing are designed but not yet complete; some parts are still missing.

Software is in progress. With the sensor stack in place, it will be capable of assist ventilation or static-rate ventilation.
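The difference between the two modes comes down to what starts the next inhale stroke. Below is a minimal sketch, assuming pressure triggering: in static-rate mode only a timer starts a breath, while in assist mode a drop in airway pressure below baseline (the patient trying to inhale) can also start one, with the timer as a backup rate. All names and default values here (`TriggerSettings`, `should_start_breath`, 15 breaths/min, a 2 cmH2O trigger drop) are hypothetical placeholders, not the project's firmware or clinical settings.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    STATIC_RATE = "static_rate"  # fixed breaths per minute, ignores patient effort
    ASSIST = "assist"            # patient effort can trigger a breath early


@dataclass
class TriggerSettings:
    # Hypothetical placeholder values for illustration only.
    breaths_per_minute: float = 15.0
    trigger_drop_cmh2o: float = 2.0  # drop below baseline treated as patient effort


def should_start_breath(mode: Mode, settings: TriggerSettings,
                        seconds_since_last_breath: float,
                        airway_pressure_cmh2o: float,
                        baseline_pressure_cmh2o: float) -> bool:
    """Decide whether to start the next inhale stroke."""
    period_s = 60.0 / settings.breaths_per_minute
    timed_out = seconds_since_last_breath >= period_s
    if mode is Mode.STATIC_RATE:
        return timed_out
    # Assist: inspiratory effort pulls airway pressure below the baseline.
    effort = (baseline_pressure_cmh2o - airway_pressure_cmh2o) >= settings.trigger_drop_cmh2o
    return effort or timed_out
```

A real controller would also need a lockout window after each breath so that pressure swings during exhalation are not mistaken for patient effort.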

Pressure and volume delivered will be directly adjustable. Maximum pressure will be configurable, and should probably also be backed up by a physical pop-off valve. We already have a reliable 3D-printed valve developed for us.
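One way the configurable maximum pressure could sit in software is as a ceiling that both clamps the operator's set point and is checked against every sensor reading. This is a sketch under assumptions: the `PressureLimits` class, its method names, and the 40 cmH2O and 20 cmH2O defaults are hypothetical illustrations, not values from the project or a clinical recommendation.

```python
from dataclasses import dataclass


@dataclass
class PressureLimits:
    # Hypothetical names and defaults for illustration only.
    max_pressure_cmh2o: float = 40.0     # configurable software ceiling
    target_pressure_cmh2o: float = 20.0  # operator-adjustable set point

    def set_target(self, requested: float) -> float:
        """Accept a new target, clamped to the range [0, max_pressure_cmh2o]."""
        self.target_pressure_cmh2o = max(0.0, min(requested, self.max_pressure_cmh2o))
        return self.target_pressure_cmh2o

    def over_limit(self, measured_cmh2o: float) -> bool:
        """True if the measured airway pressure has reached the software ceiling."""
        return measured_cmh2o >= self.max_pressure_cmh2o
```

In the control loop, an `over_limit` result would end the inhale stroke early. The pop-off valve stays valuable because it works even if the sensor or the software fails.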

Daren Schwenke

Douglas Miller

Les Hall

Mika Heikkinen

Jeroen Delcour

This is a fantastically worthwhile project, kudos for the design. Just a comment on the OpenSCAD script: bearings are almost always specified by their diameters (OD, ID) and width rather than by radii. It is easy to search eBay and engineering suppliers for ball races using the diameters.