I would be lying if I said that I've always wanted an Apple I; the first Apple product I ever lusted after was the 1990 Mac IIfx or (more realistically) the Macintosh LC.

The Apple I is of historic interest, though, as the product that helped launch one of the most influential companies in the world, with an impact on the economy, culture, industrial design and much more. As such, I feel that I should have some practical experience with it.

A real one is out of the question; if I had the (probably) $2M required to buy one, I'd rather buy a fancy car. There are replicas and emulators out there. Some replicas are built using the exact same, now obsolete, parts as the original. Other replicas use microcontrollers to emulate the video interface, something I feel is like putting Tesla alloys on an original '65 Mustang. An emulator will not provide the "feel" that hardware does.

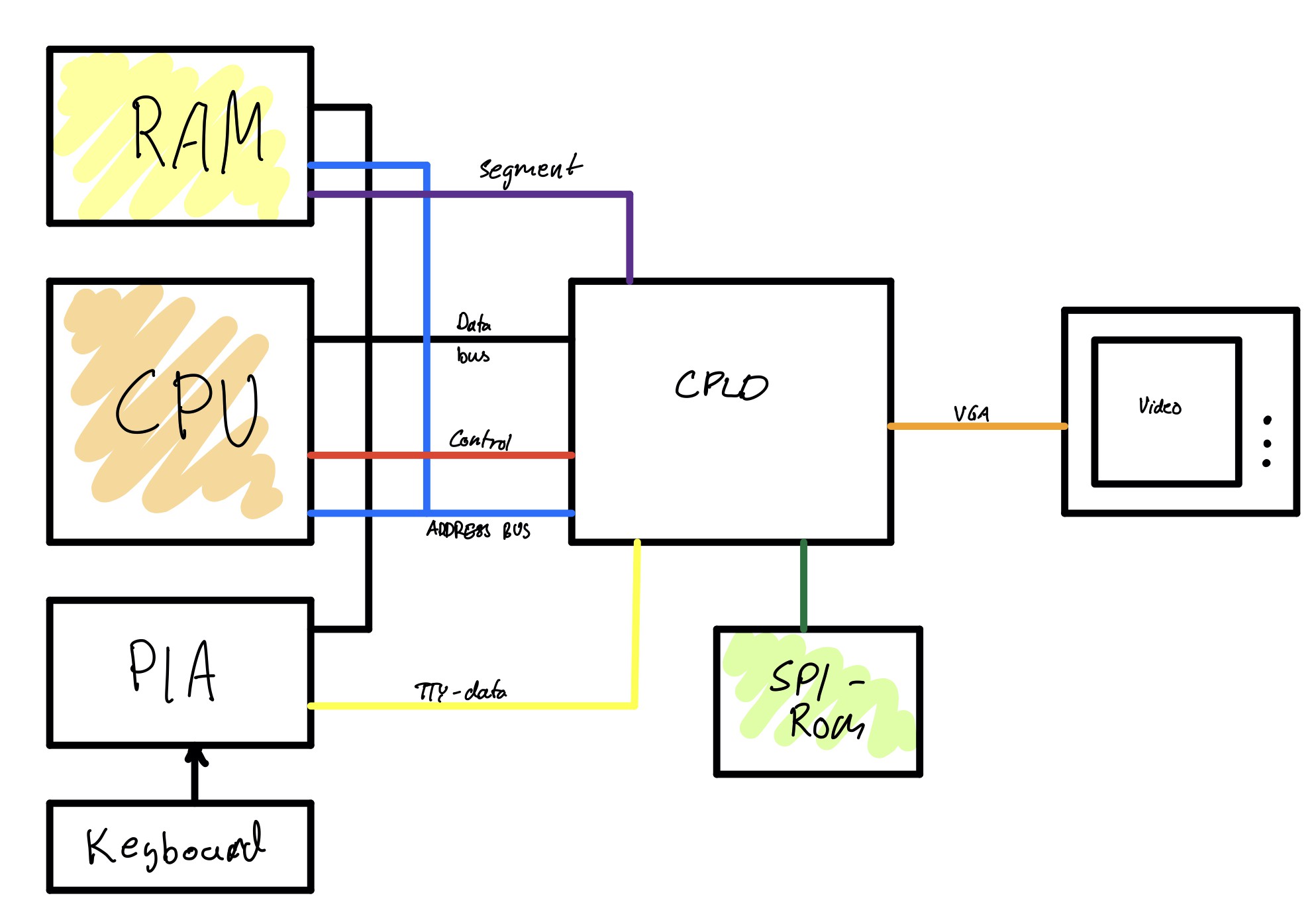

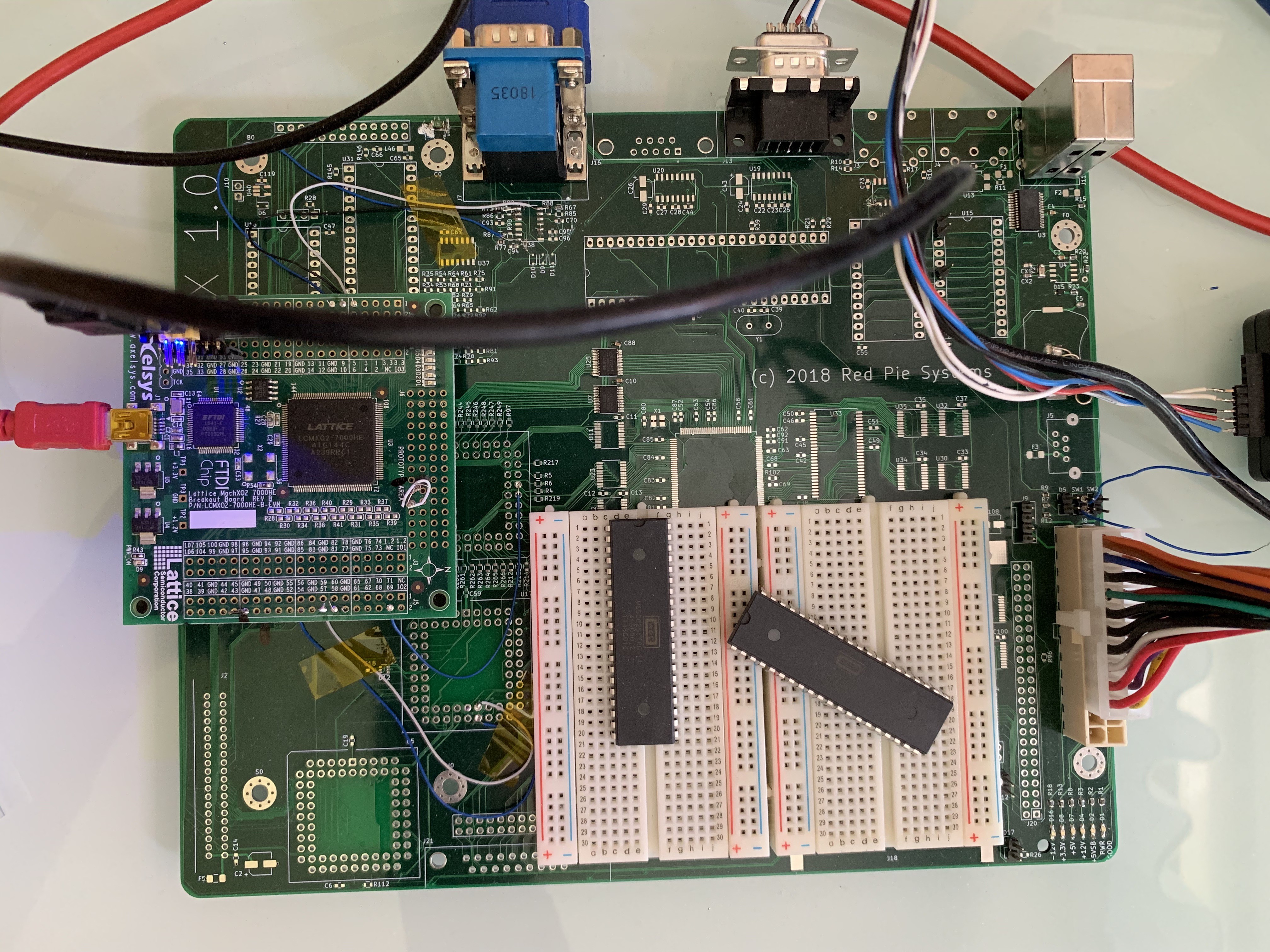

I really don't want to hunt down some unobtainium parts and TTL-chips with a 1976 date code, or implement Woz-magic in a microcontroller, so I guess I must implement my own Apple I clone. :)

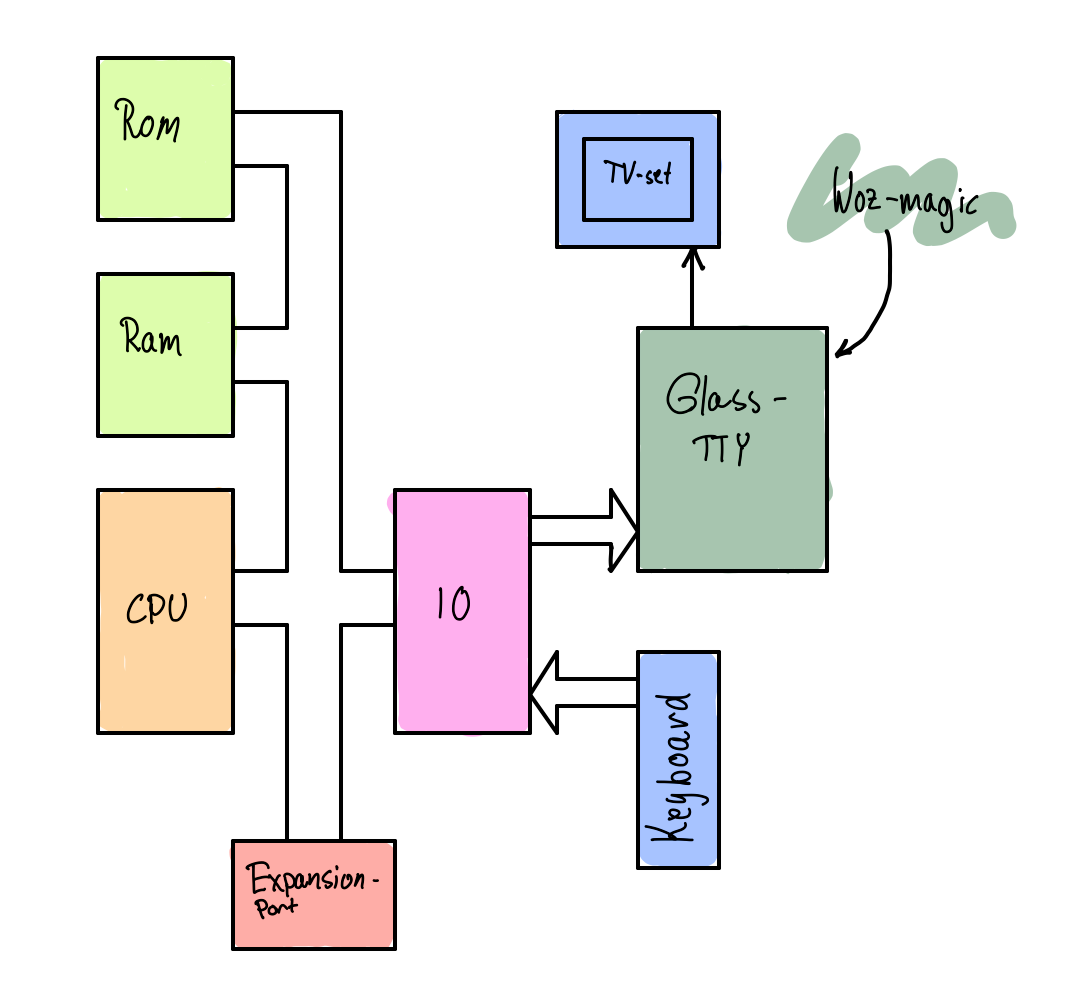

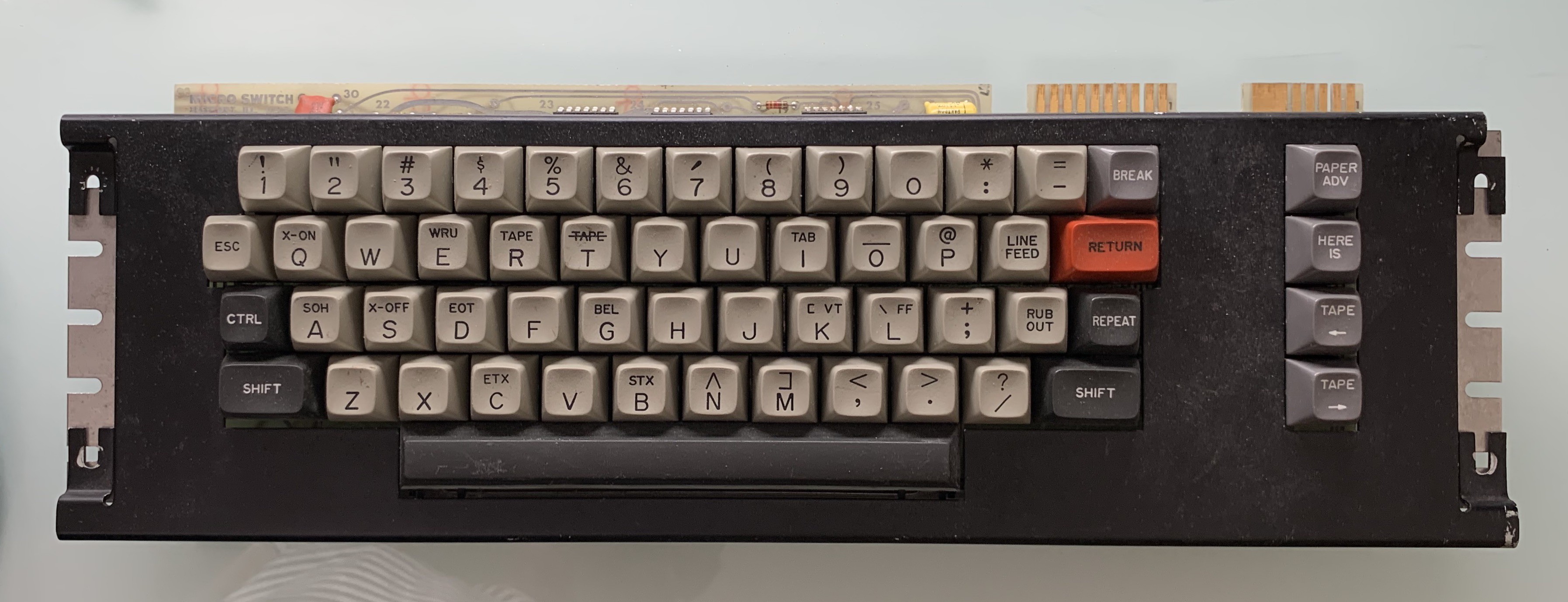

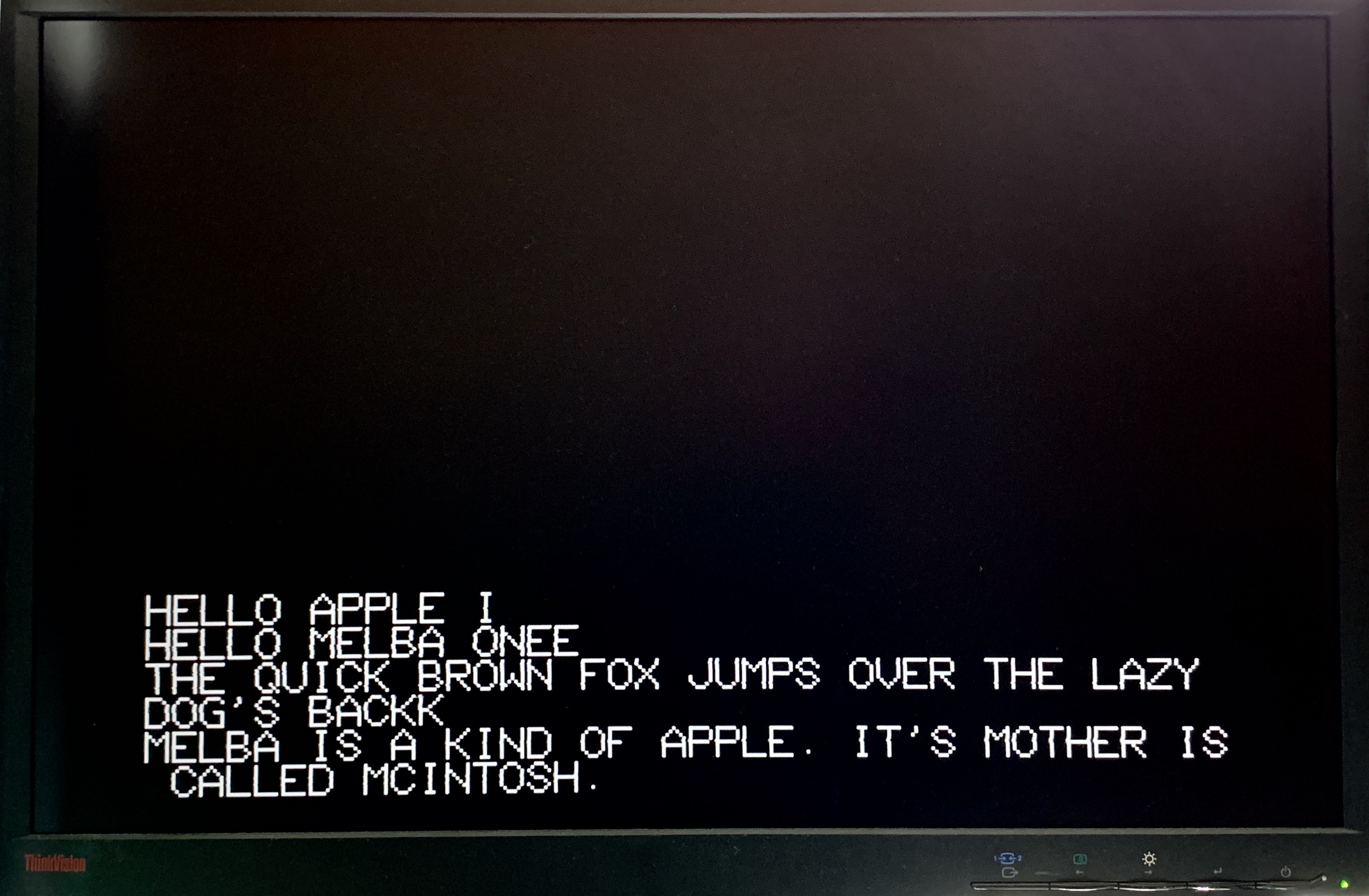

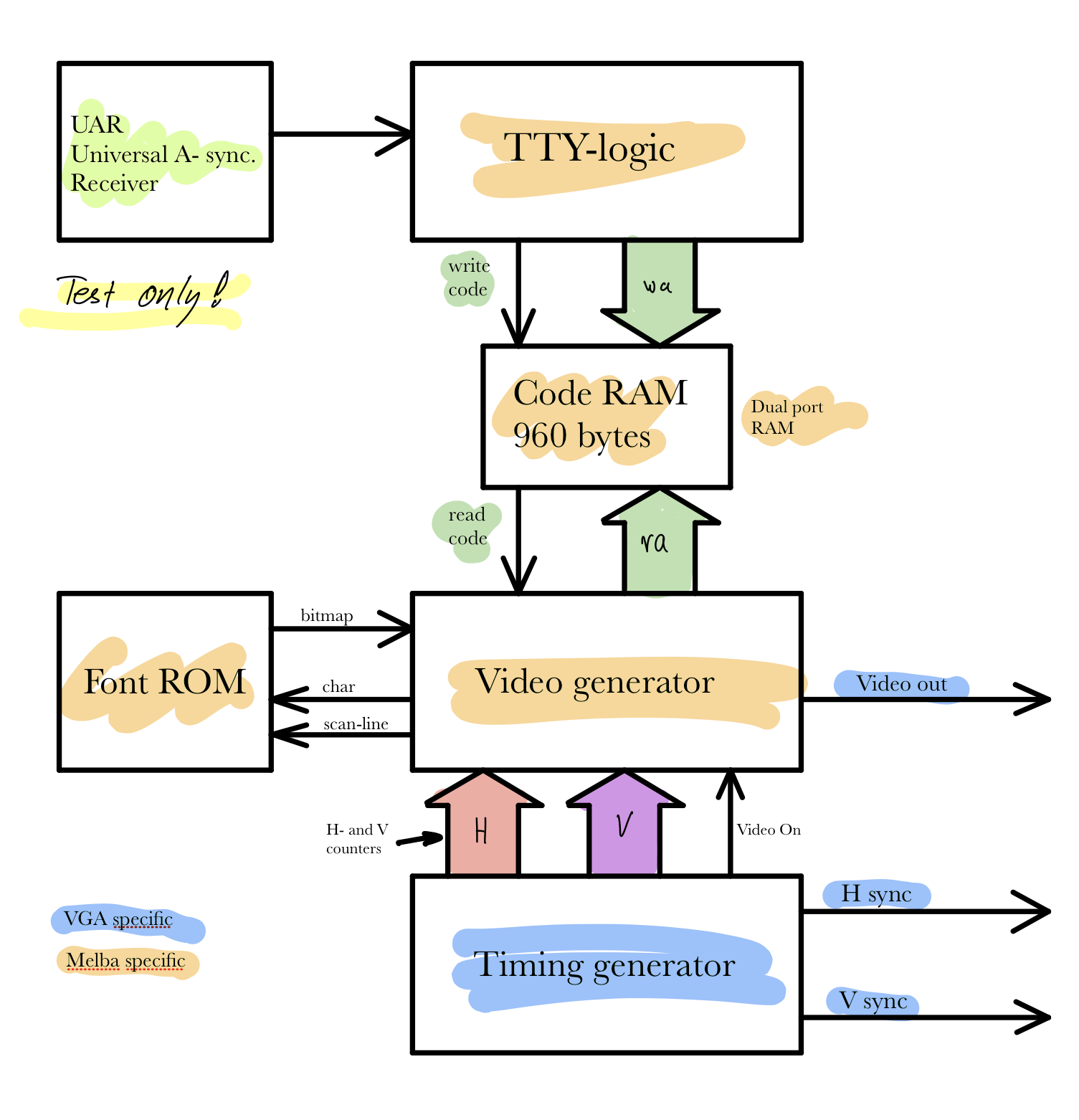

The Apple I is a very basic computer (I was going to write rudimentary computer, but where's the pun in that?). It has only the bare minimum needed to be a computer: CPU, memory and some IO. The IO is connected to what makes the Apple I an Apple I: the glass TTY.

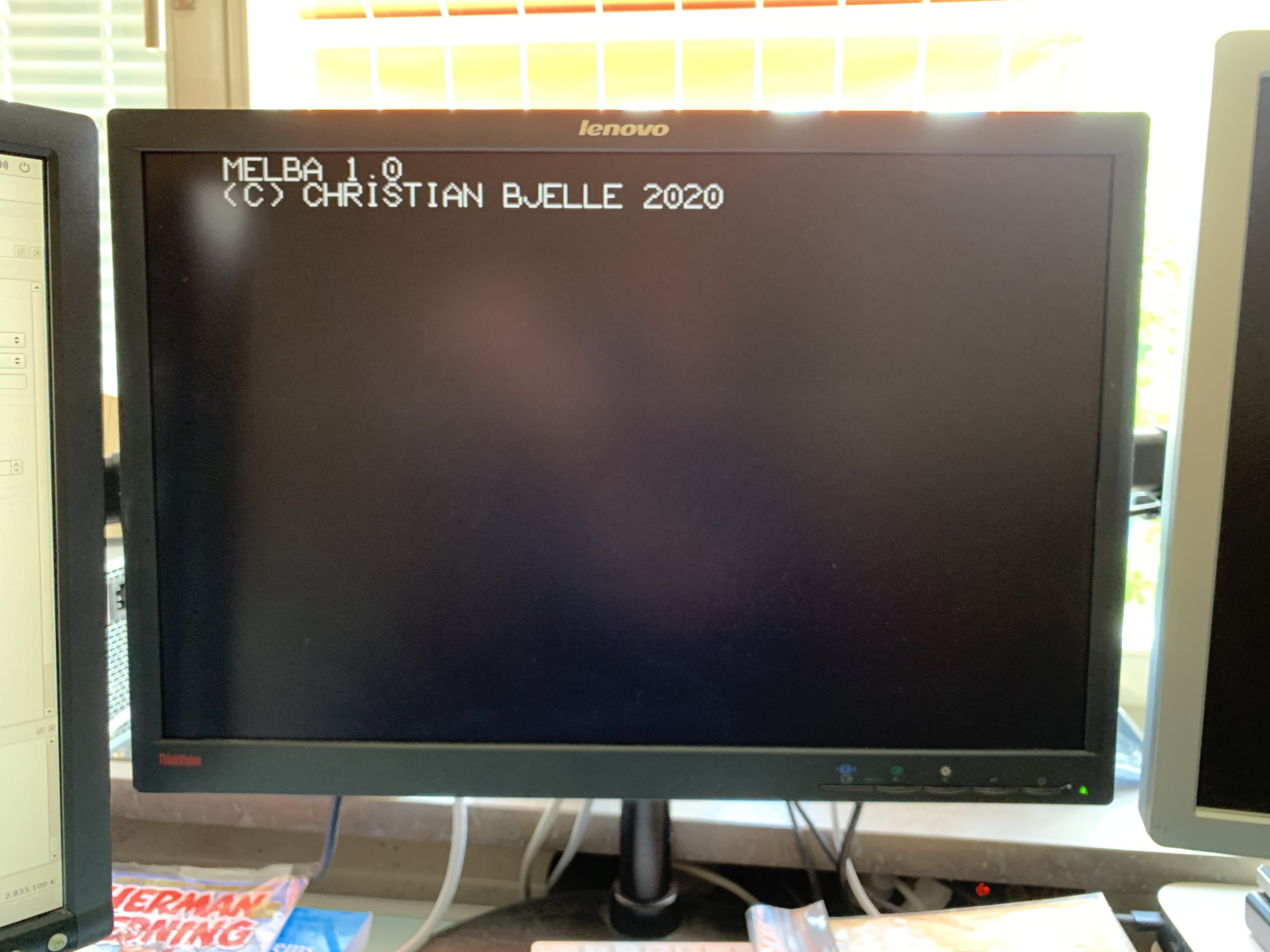

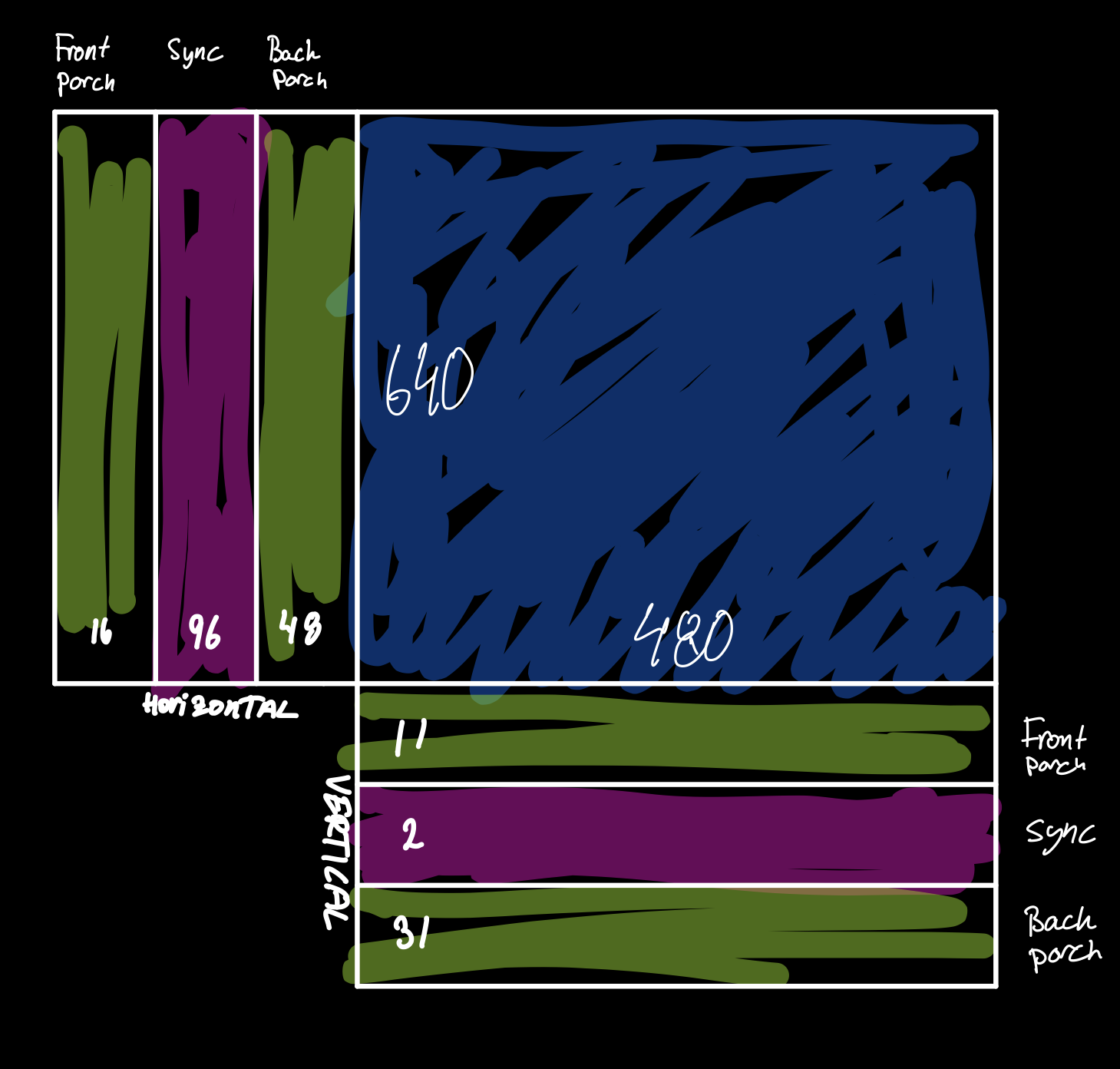

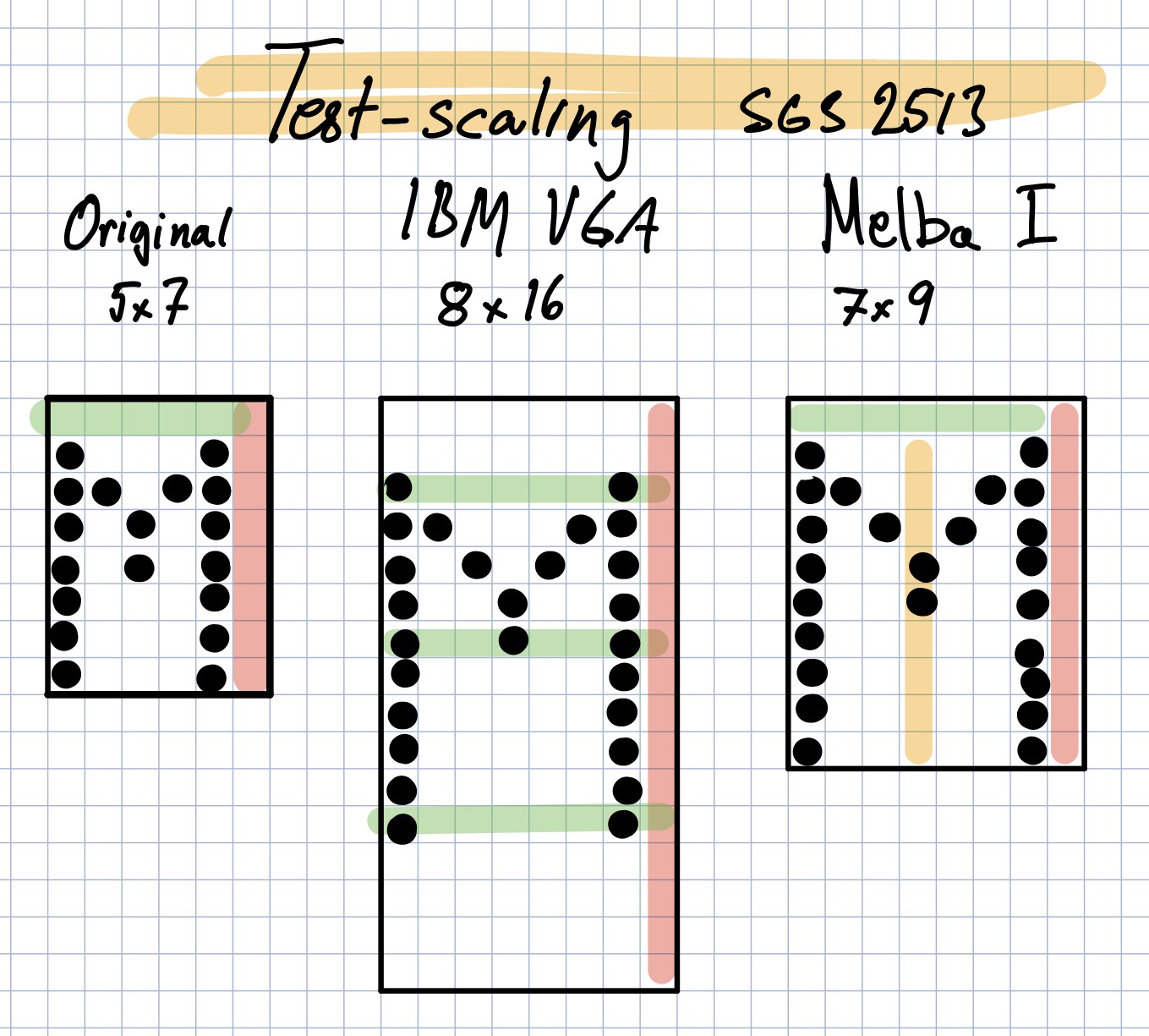

This piece of Woz-magic replicates the behaviour of a teletype (a keyboard and a printer that communicate with a computer), but on a television set. It shares some of the limitations of a real TTY as well: you cannot delete a character once it has been written to the "paper", and the cursor cannot be freely positioned and does not blink.
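To make the teletype behaviour concrete, here is a minimal sketch of an append-only screen model. It is not how the original hardware works, only an illustration of the constraints described above. The class name `GlassTTY` and its methods are hypothetical, the 40x24 character grid is an assumption taken from the commonly documented Apple I display geometry rather than from this text, and the line-wrap handling is simplified.

```python
class GlassTTY:
    """Append-only text screen that behaves like paper in a teletype.

    Hypothetical model for illustration: there is no cursor addressing
    and no way to erase a character, only "print" and "advance paper".
    """

    def __init__(self, cols=40, rows=24):
        # 40x24 is an assumed default, not a value stated in the text.
        self.cols = cols
        self.rows = rows
        self.lines = [""]  # the last entry is the line being printed

    def _new_line(self):
        # Start a fresh line; the oldest line scrolls off the top.
        self.lines.append("")
        if len(self.lines) > self.rows:
            self.lines.pop(0)

    def put(self, ch):
        # A carriage return (or newline) advances to the next line.
        if ch in ("\r", "\n"):
            self._new_line()
            return
        # Simplified wrap: a full line forces a new one before printing.
        if len(self.lines[-1]) == self.cols:
            self._new_line()
        self.lines[-1] += ch

    def write(self, text):
        for ch in text:
            self.put(ch)

    def screen(self):
        # Text currently visible, top line first.
        return "\n".join(self.lines)


if __name__ == "__main__":
    tty = GlassTTY()
    tty.write("HELLO\rWORLD")
    print(tty.screen())
```

Note that the model deliberately has no backspace or "move cursor" method: anything resembling editing has to be done by software printing more characters, just as on paper.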

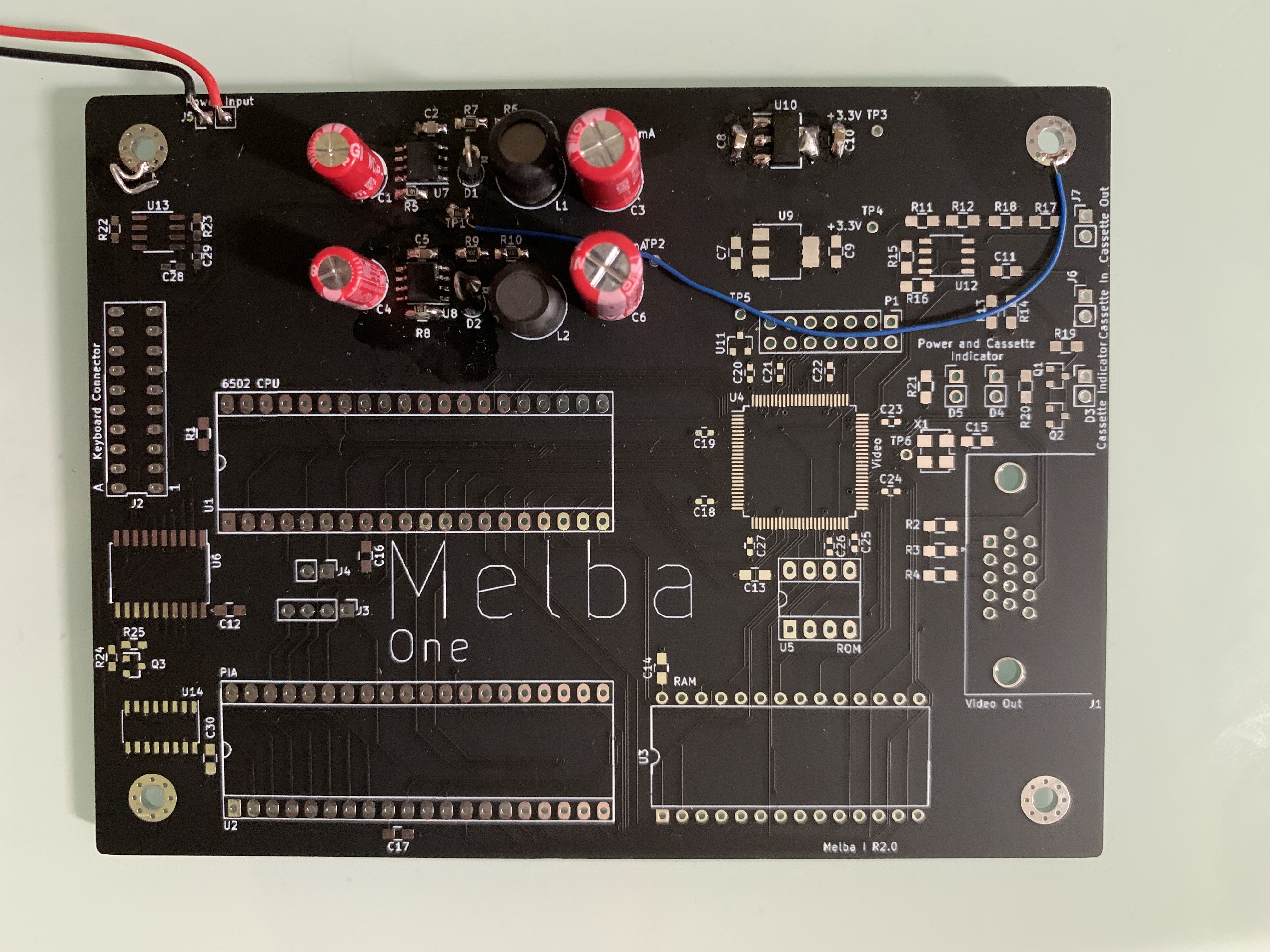

The computer came as a pre-assembled PCB, requiring only a case, a keyboard and a power supply. This must have appealed mainly to people who wanted to work on software, as expandability was very limited: the only expansion slot was usually taken by the Apple Cassette Interface.

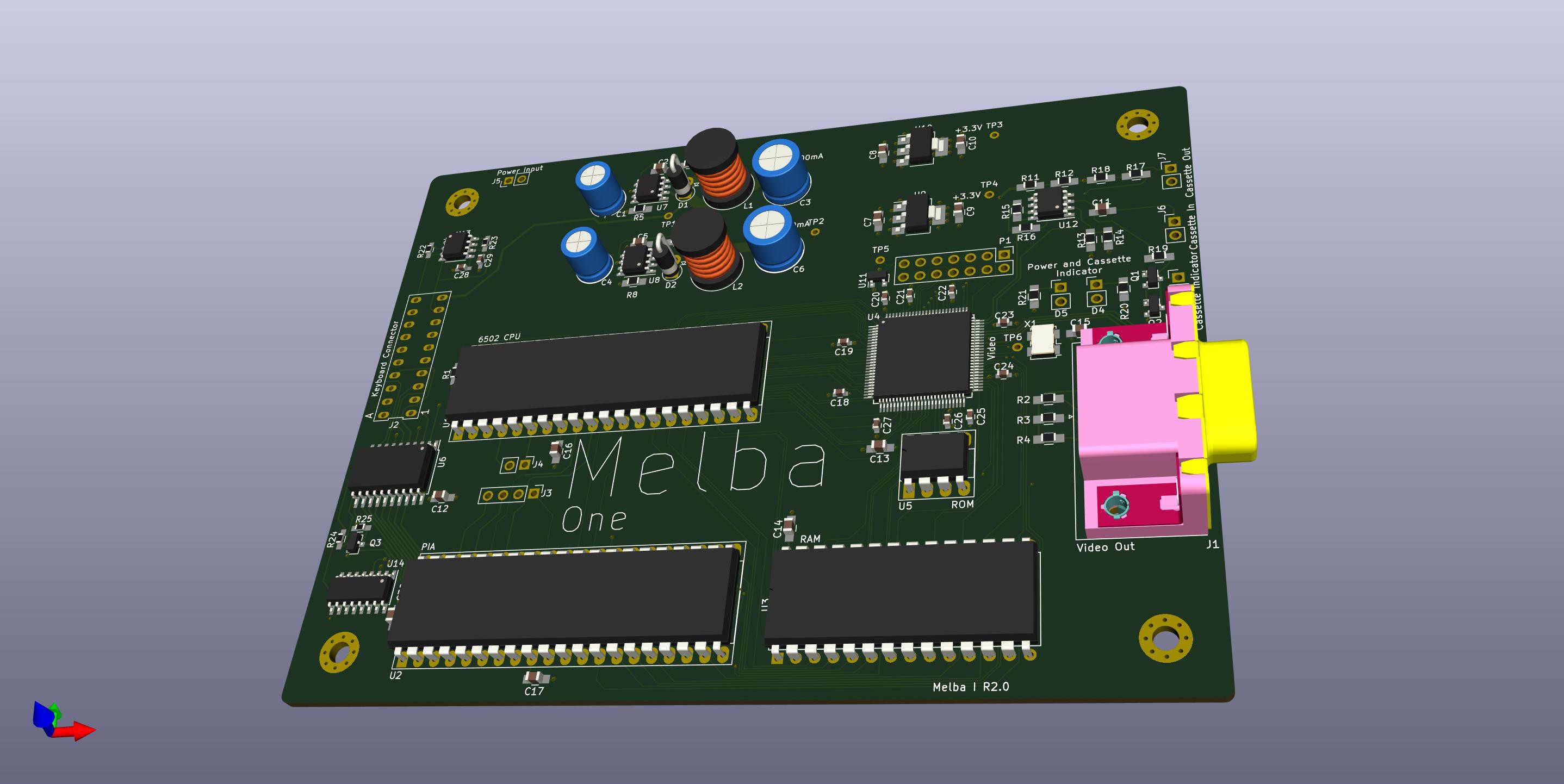

The project will be split into three phases:

- Requirements and research (done, mostly this text)

- PoCs

  - TTY

  - Complete system

  - Cassette Interface

- Final design with case and keyboard

Christian Bjelle

Benchoff

Maarten Janssen
