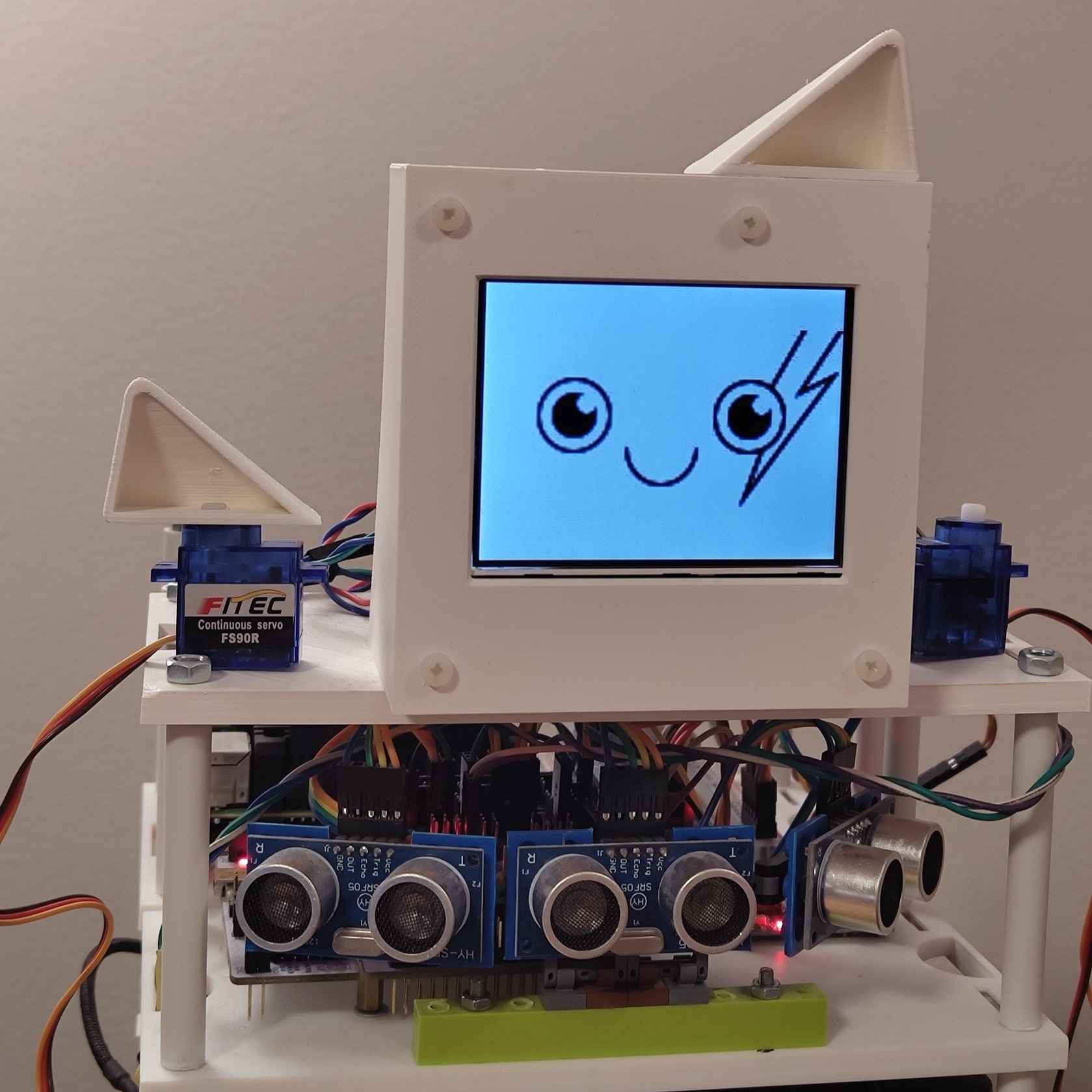

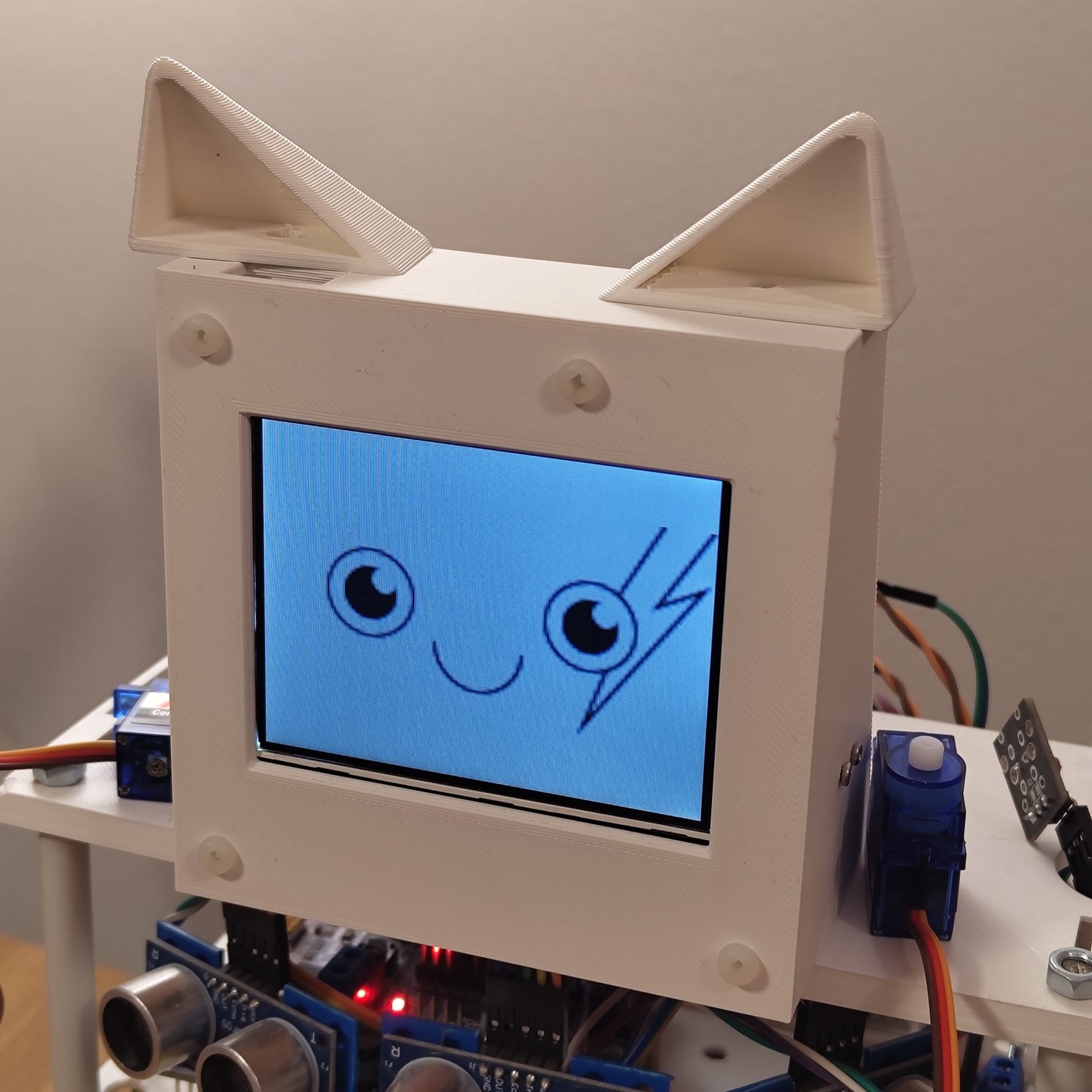

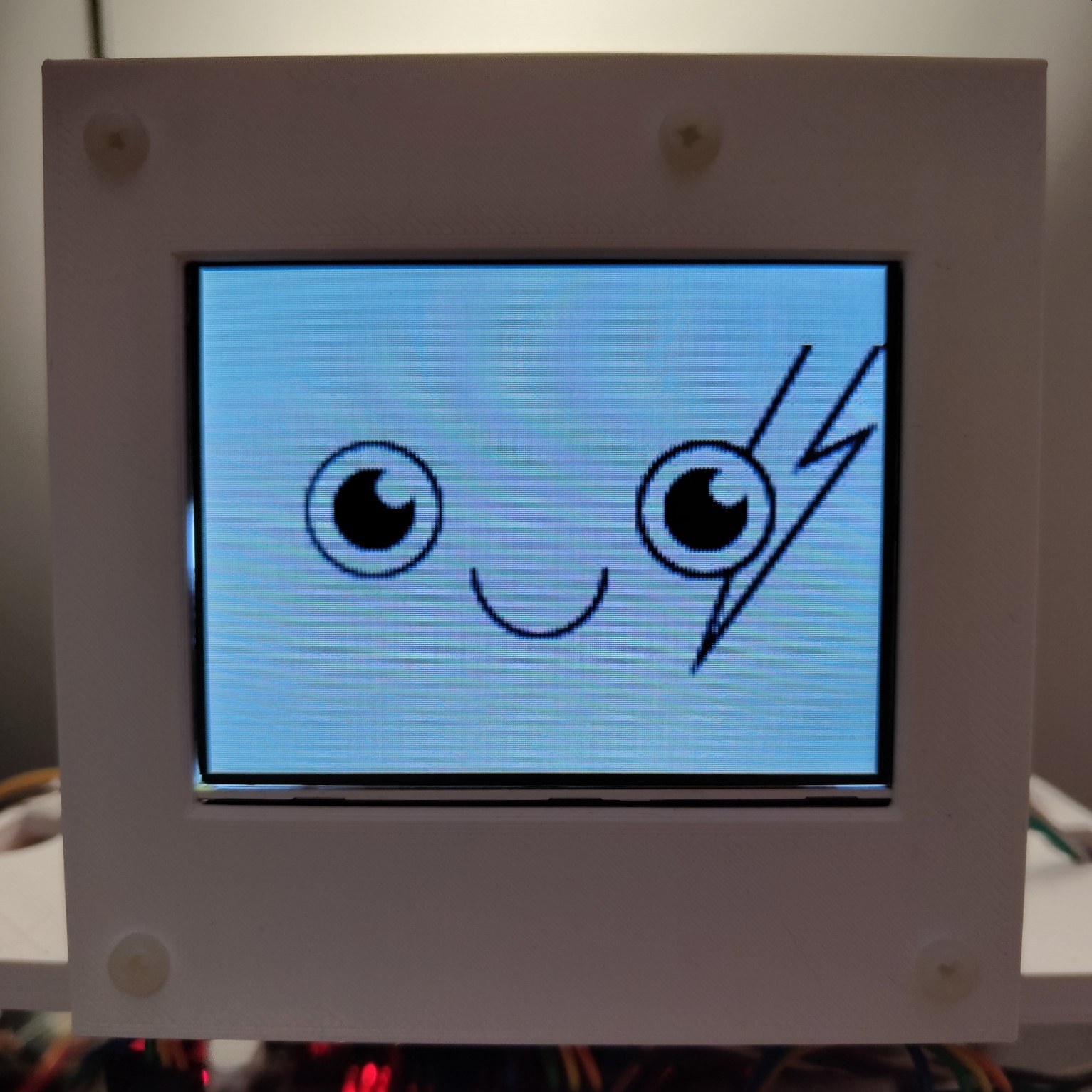

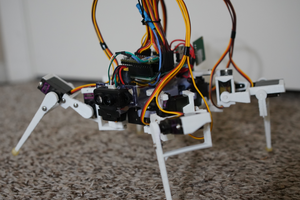

The Zakhar project is an approach to robotics and human-robot interaction that uses our (or just my 😅) understanding of animal behavior and of the human and animal brain to create a robot companion for dogs and cats. The ultimate goal is a working product that can eventually serve as the basis for a human companion.

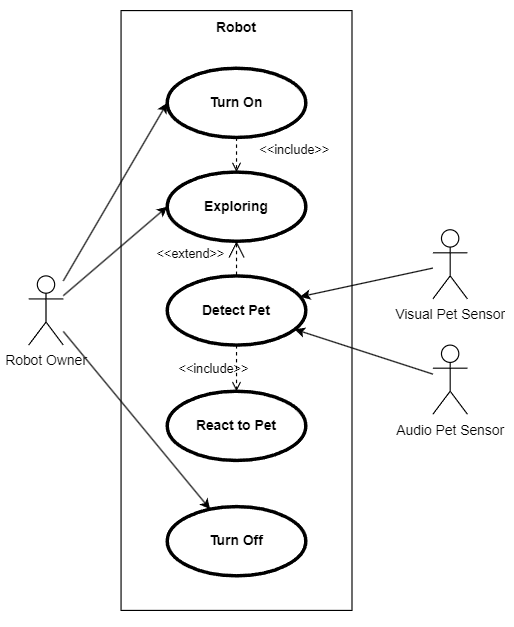

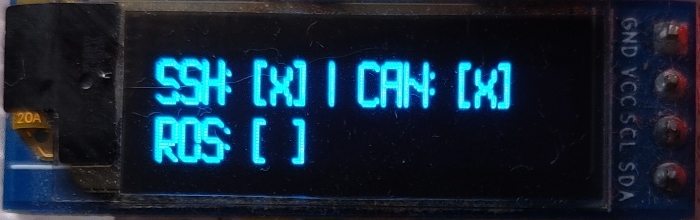

The robot's ROS-based operating system is designed to mimic the way human and animal brains function, with three main programs:

- consciousness

- instincts

- reflexes

The consciousness program is responsible for higher cognitive functions such as decision-making and problem-solving and is similar to the prefrontal cortex in the human brain.

The instincts program consists of sophisticated behavior patterns that can suppress the consciousness and take control of the robot's body in certain situations, much like the amygdala in the human brain, which is responsible for emotional responses and the "fight or flight" instinct.

The reflexes program consists of simple body responses to external stimuli, similar to the spinal cord in the human body, which handles reflexive responses.
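The layering described above can be pictured as a simple priority arbiter. The following is only a minimal sketch, not the project's actual code: the sensor keys (`bumper`, `loud_noise`), thresholds, and command strings are hypothetical, and giving reflexes priority over instincts is an assumption (the text only states that instincts can suppress the consciousness).

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple

Sensors = Dict[str, float]


@dataclass
class Layer:
    name: str
    priority: int  # higher value wins (assumed ordering, see lead-in)
    propose: Callable[[Sensors], Optional[str]]


def reflexes(sensors: Sensors) -> Optional[str]:
    # Simple body response to a stimulus (hypothetical bumper sensor).
    return "stop" if sensors.get("bumper", 0.0) > 0.5 else None


def instincts(sensors: Sensors) -> Optional[str]:
    # Behavior pattern that takes over in certain situations (hypothetical trigger).
    return "retreat" if sensors.get("loud_noise", 0.0) > 0.8 else None


def consciousness(sensors: Sensors) -> Optional[str]:
    # Default high-level behavior when nothing more urgent is active.
    return "explore"


LAYERS = [
    Layer("reflexes", 3, reflexes),
    Layer("instincts", 2, instincts),
    Layer("consciousness", 1, consciousness),
]


def arbitrate(sensors: Sensors) -> Tuple[str, str]:
    """Return (layer name, command) from the highest-priority layer that responds."""
    for layer in sorted(LAYERS, key=lambda l: l.priority, reverse=True):
        command = layer.propose(sensors)
        if command is not None:
            return layer.name, command
    return "none", "idle"
```

With no stimuli, `arbitrate({})` falls through to the consciousness layer; a loud noise lets the instincts layer override it.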

The instincts program interacts with the consciousness program through the Emotion Core, a piece of software that simulates the endocrine system and represents a table of hormones that can be affected by sensor data and by the consciousness and instincts programs. The endocrine system in the human and animal body is responsible for producing and secreting hormones that regulate various bodily functions and can affect behavior and emotion. By simulating this system, the robot is able to experience a range of “emotions” and behaviors that are familiar to its human and animal users.
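As a rough illustration of the "table of hormones" idea, here is a minimal sketch. It is not the real Emotion Core: the hormone names, the decay factor, the thresholds, and the `mood` labels are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class EmotionCore:
    # Hormone levels are kept in [0, 1]; the names are hypothetical.
    hormones: Dict[str, float] = field(
        default_factory=lambda: {"adrenaline": 0.0, "dopamine": 0.0}
    )
    decay: float = 0.5  # assumed per-tick decay factor

    def apply(self, deltas: Dict[str, float]) -> None:
        """Shift hormone levels; callers may be sensors, instincts, or consciousness."""
        for name, delta in deltas.items():
            level = self.hormones.get(name, 0.0) + delta
            self.hormones[name] = min(1.0, max(0.0, level))

    def tick(self) -> None:
        """Let every hormone level decay toward zero over time."""
        for name in self.hormones:
            self.hormones[name] *= self.decay

    def mood(self) -> str:
        """Map the hormone table to a coarse 'emotion' label (assumed thresholds)."""
        if self.hormones["adrenaline"] > 0.6:
            return "scared"
        if self.hormones["dopamine"] > 0.6:
            return "happy"
        return "calm"
```

In this sketch a sensor event might call `core.apply({"adrenaline": 0.8})`, after which `core.mood()` reports `"scared"` until enough `tick()` calls let the level decay.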

In theory, this familiarity with emotion-controlled behavior should provide a simple and understandable user experience for all types of animal users (pets and human beings). The project aims to use this animal-like user experience to create a final product: a robot companion for cats and dogs, with two sets of instincts and main programs, each tailored to one species. The physical shape of the product is still under consideration and will be developed in later stages.

The project is open-source, well-documented, and published under the GPLv3 license.

Andrei Gramakov

VPugliese323

Redtree Robotics

Bruno Laurencich

Jacob David C Cunningham
