Author's Note: This writeup pertains to Pathfinder's "Milestone 1," which is my first really usable prototype that somewhat resembles a consumer device. I'm currently working on Milestone 2, which adds smarter haptic feedback and moves to a fully custom, integrated design. That's what future project logs will be documenting.

Step 1: Getting Distance Data

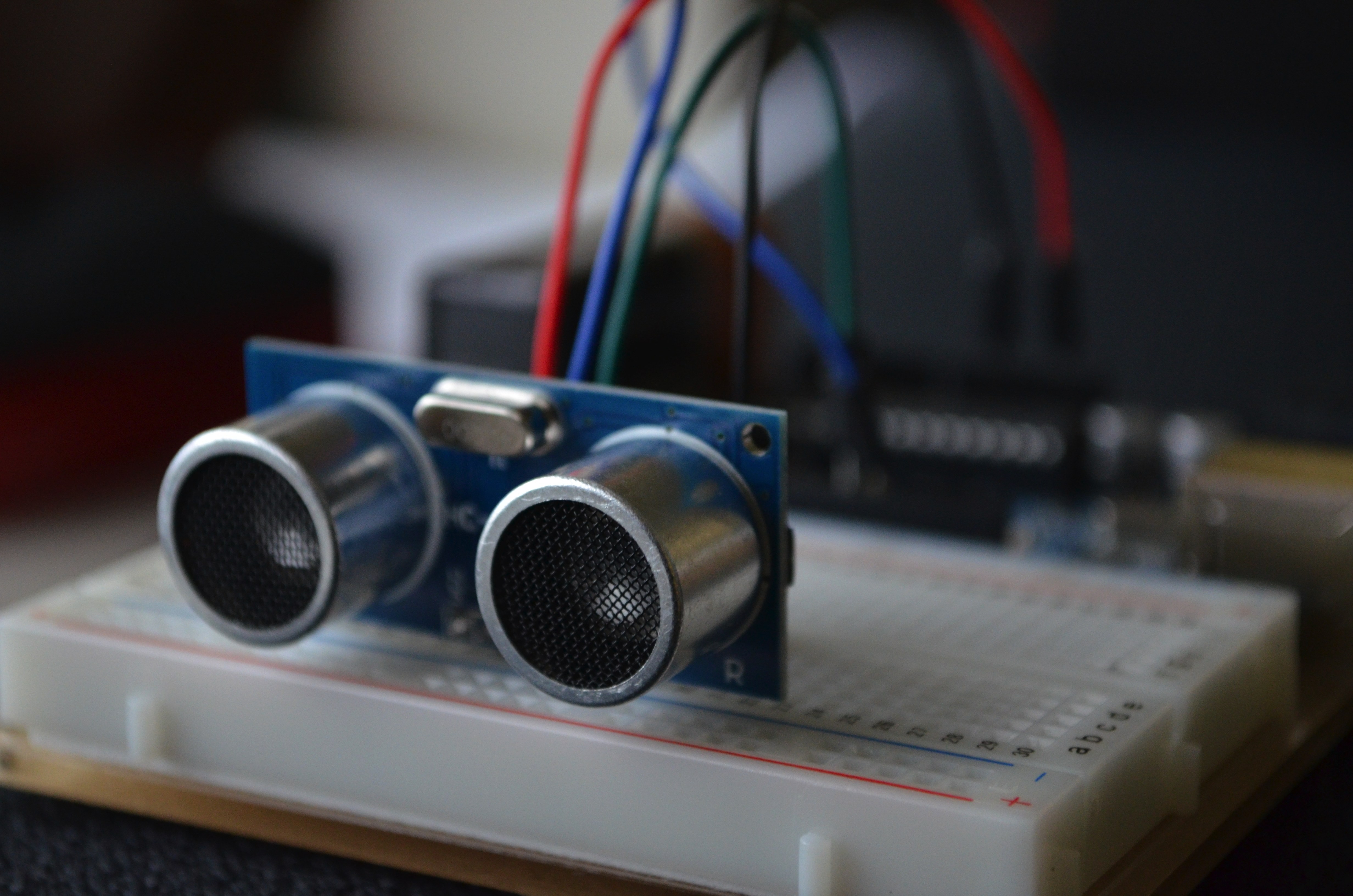

I began by sourcing the cheapest ultrasonic sensor I could find: the $2 HC-SR04. It combines a transmitter and a receiver, operating on 40 kHz sound waves, with the appropriate drive and timing circuitry in a single module. I then interfaced this sensor with an ATmega328P microcontroller, at first through the Arduino platform to make development as simple as possible. In testing, I measured consistent 0.5cm precision out to a maximum range of 500cm, with a 30º cone of detection. However, readings remained off by around 5%. This was due to the effect of ambient temperature on the speed of sound, so I integrated data from a TMP36 analog temperature sensor to bring accuracy to within 1% of actual values.
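As a rough illustration of the temperature correction described above, here is a minimal Python sketch of the arithmetic (not the actual firmware). It assumes the common linear approximation for the speed of sound in air, a 10-bit ADC with a 5 V reference as on a stock ATmega328P board, and the TMP36's 500 mV offset with 10 mV/°C slope. The function names are hypothetical.

```python
def tmp36_celsius(adc_counts, vref=5.0, adc_steps=1024):
    """Convert a raw ADC reading of the TMP36 output to degrees Celsius.

    Assumes a 10-bit ADC and a 5 V reference (both assumptions).
    The TMP36 outputs 0.5 V at 0 C and rises by 10 mV per degree.
    """
    volts = adc_counts * vref / adc_steps
    return (volts - 0.5) * 100.0


def speed_of_sound_m_s(temp_c):
    """Linear approximation of the speed of sound in dry air."""
    return 331.3 + 0.606 * temp_c


def distance_cm(echo_us, temp_c):
    """Distance to the obstacle from the round-trip echo time.

    echo_us is the width of the HC-SR04 echo pulse in microseconds.
    The sound travels out and back, hence the division by two.
    """
    speed_cm_per_us = speed_of_sound_m_s(temp_c) * 100.0 / 1_000_000.0
    return speed_cm_per_us * echo_us / 2.0


if __name__ == "__main__":
    temp = tmp36_celsius(154)          # example raw reading
    print(round(temp, 1), "C")
    print(round(distance_cm(5831, temp), 1), "cm")
```

Under this approximation, the same echo time read at 0 °C and at 30 °C gives distances that differ by roughly 5%, which is in line with the error seen before compensation.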

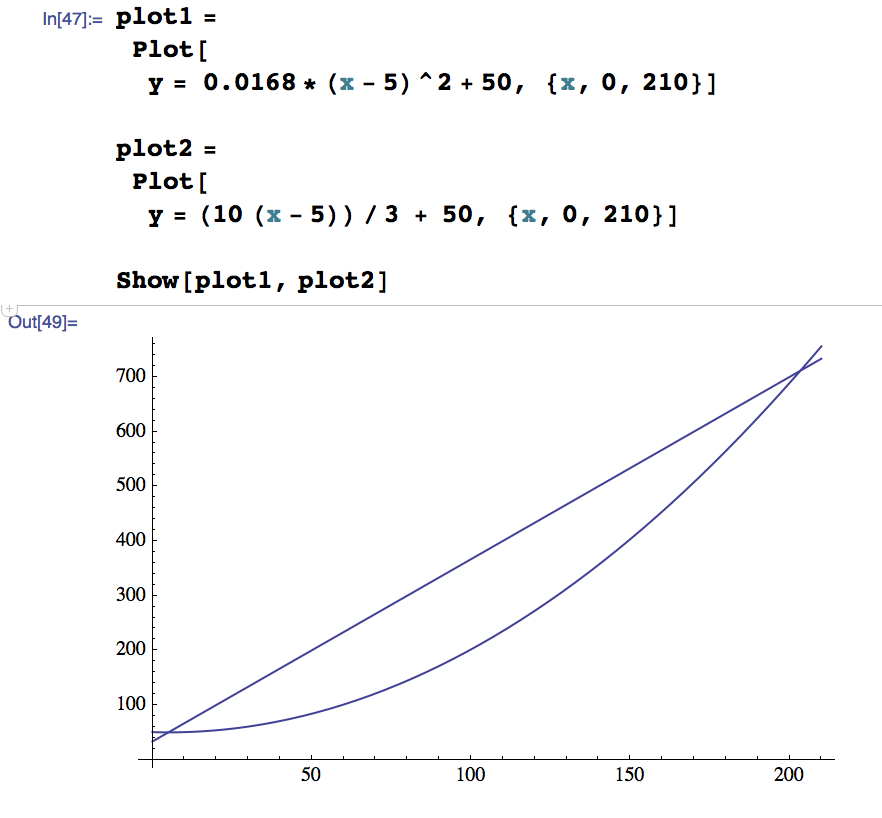

Haptic Feedback

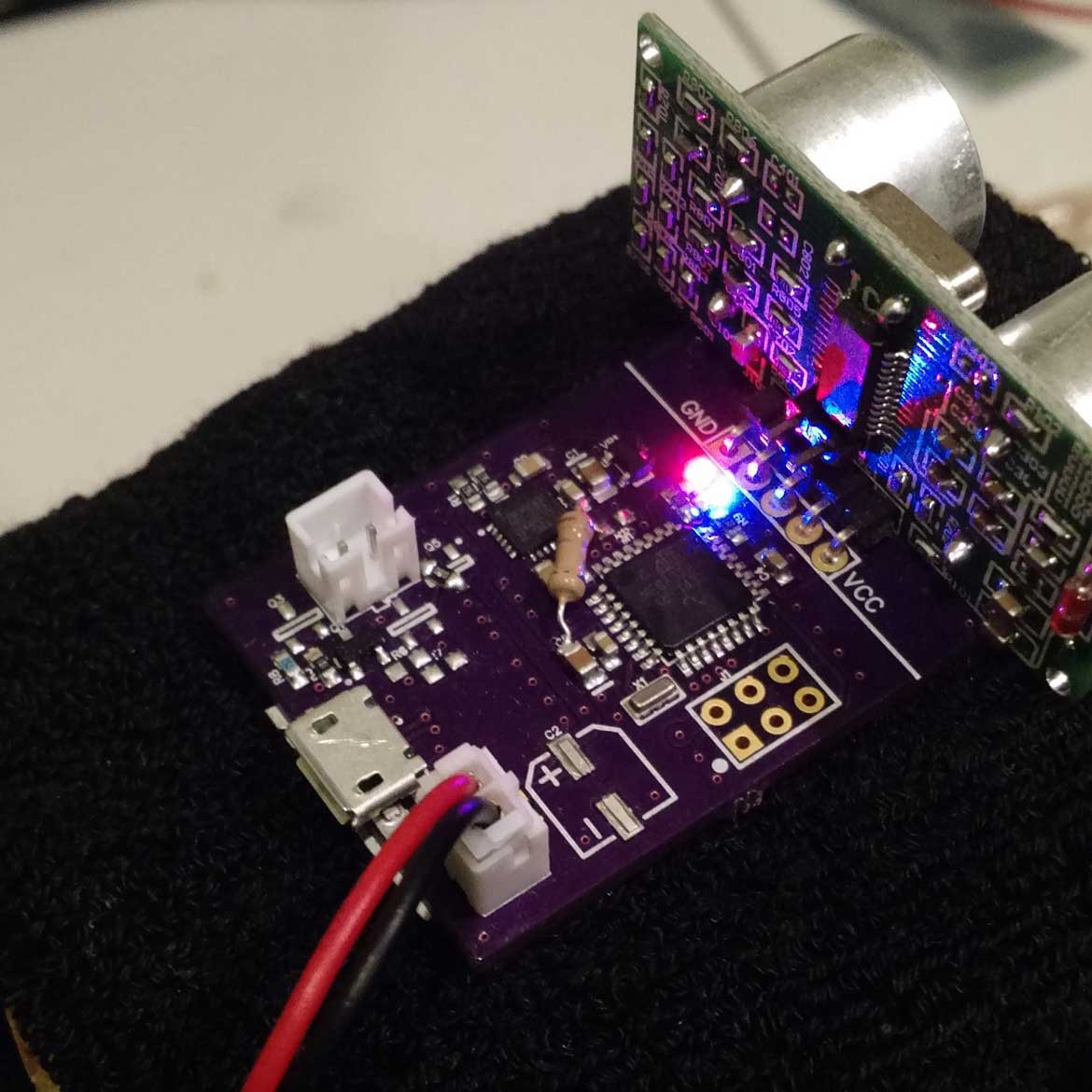

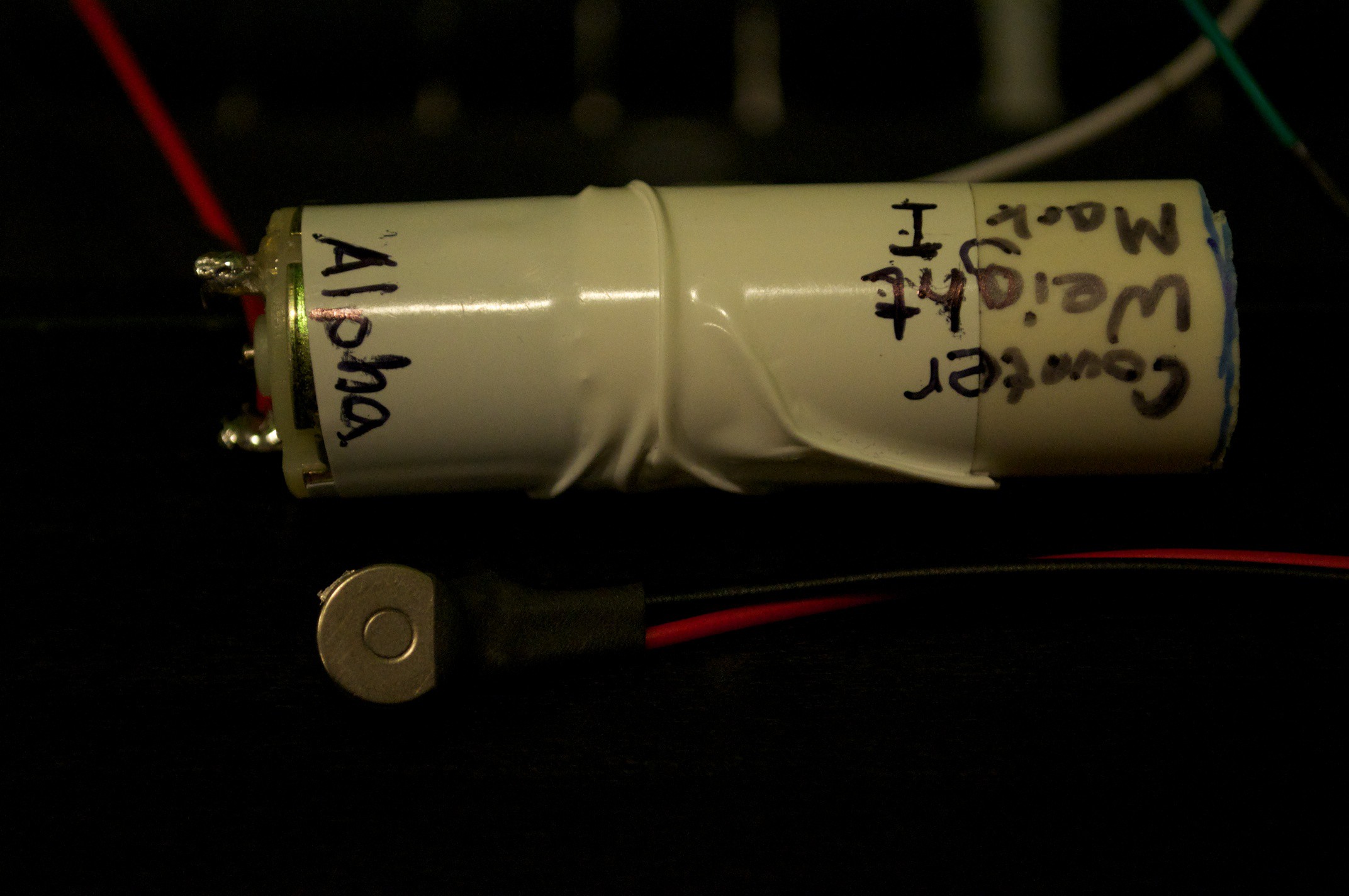

I used small, circular motors built for haptic feedback in mobile devices. A motor is placed on the pinky fingertip of the user's non-dominant hand, allowing for good tactile sensitivity while remaining minimally intrusive. To convey intensity, I vary the frequency of gentle pulses, whereas most traditional systems vary vibration strength. This is a really delicate process, as having the best sensors and data in the world won't help unless I can actually convey that information to the user. My main challenge so far has been resolution: it's fairly easy to tell apart, say, the different quartiles of the system's range, but I need to do better.

To start, I asked a focus group of future beta testers to identify the ideal range for an assistive navigational device. Most agreed that 250cm, or just over 8 feet, would be a useful extension over their existing options and that they would see diminishing returns in utility after that point. For my first prototypes, I just used a simple linear scale that changed the delay between haptic pulses. What is a haptic pulse, you ask? Even simpler: a microcontroller pin goes HIGH for roughly 20 milliseconds, driving the gate of an N-channel MOSFET to switch a simple ERM motor. I have big plans for this cobbled-together assembly, however, and I'm now looking at LRA motors + specialized haptic driver ICs.
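The linear scale can be sketched as follows. This is an illustration in Python rather than the firmware itself. The 250cm range and the 20 ms pulse width come from the text above. The shortest and longest gaps between pulses, the choice that closer obstacles produce faster pulses, and the function name are all assumptions made for the example.

```python
MAX_RANGE_CM = 250.0   # useful range reported by the focus group
PULSE_MS = 20          # approximate HIGH time of one haptic pulse
MIN_GAP_MS = 50.0      # assumed gap between pulses at zero distance
MAX_GAP_MS = 1000.0    # assumed gap between pulses at the edge of range


def pulse_gap_ms(distance_cm):
    """Map a distance to the delay between haptic pulses.

    Returns None when the obstacle is at or beyond the useful range,
    meaning the motor stays off. Otherwise the gap grows linearly
    with distance, so nearer obstacles pulse faster (an assumption).
    """
    if distance_cm >= MAX_RANGE_CM:
        return None
    clamped = max(distance_cm, 0.0)
    fraction = clamped / MAX_RANGE_CM
    return MIN_GAP_MS + (MAX_GAP_MS - MIN_GAP_MS) * fraction


if __name__ == "__main__":
    for d in (0, 62.5, 125, 187.5, 300):
        print(d, "cm ->", pulse_gap_ms(d))
```

A linear map like this is simple to tune, but it spreads resolution evenly across the range, which may be one reason finer distinctions than quartiles are hard to feel.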

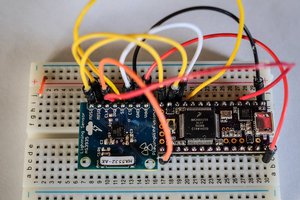

Bringing It Together

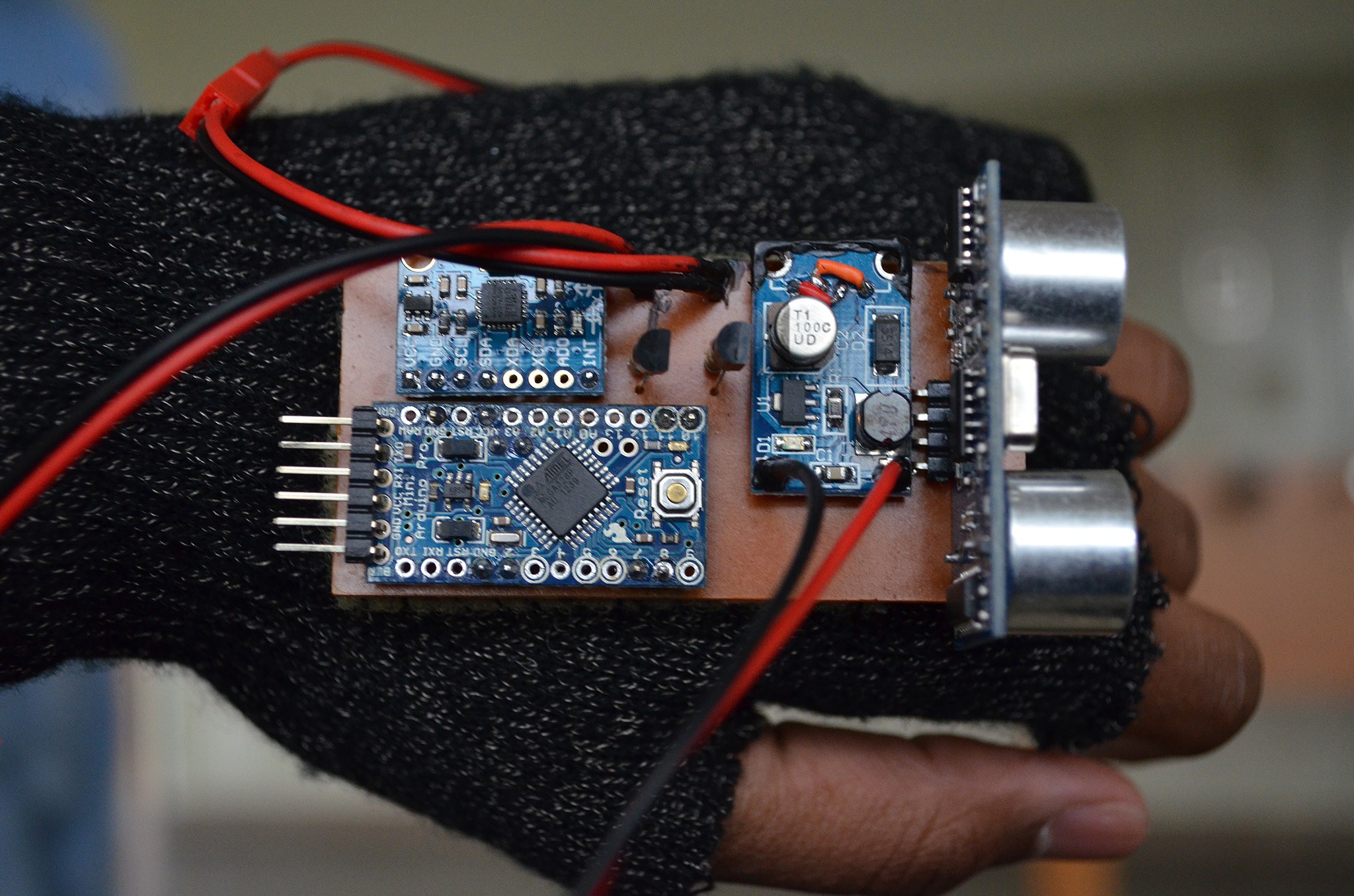

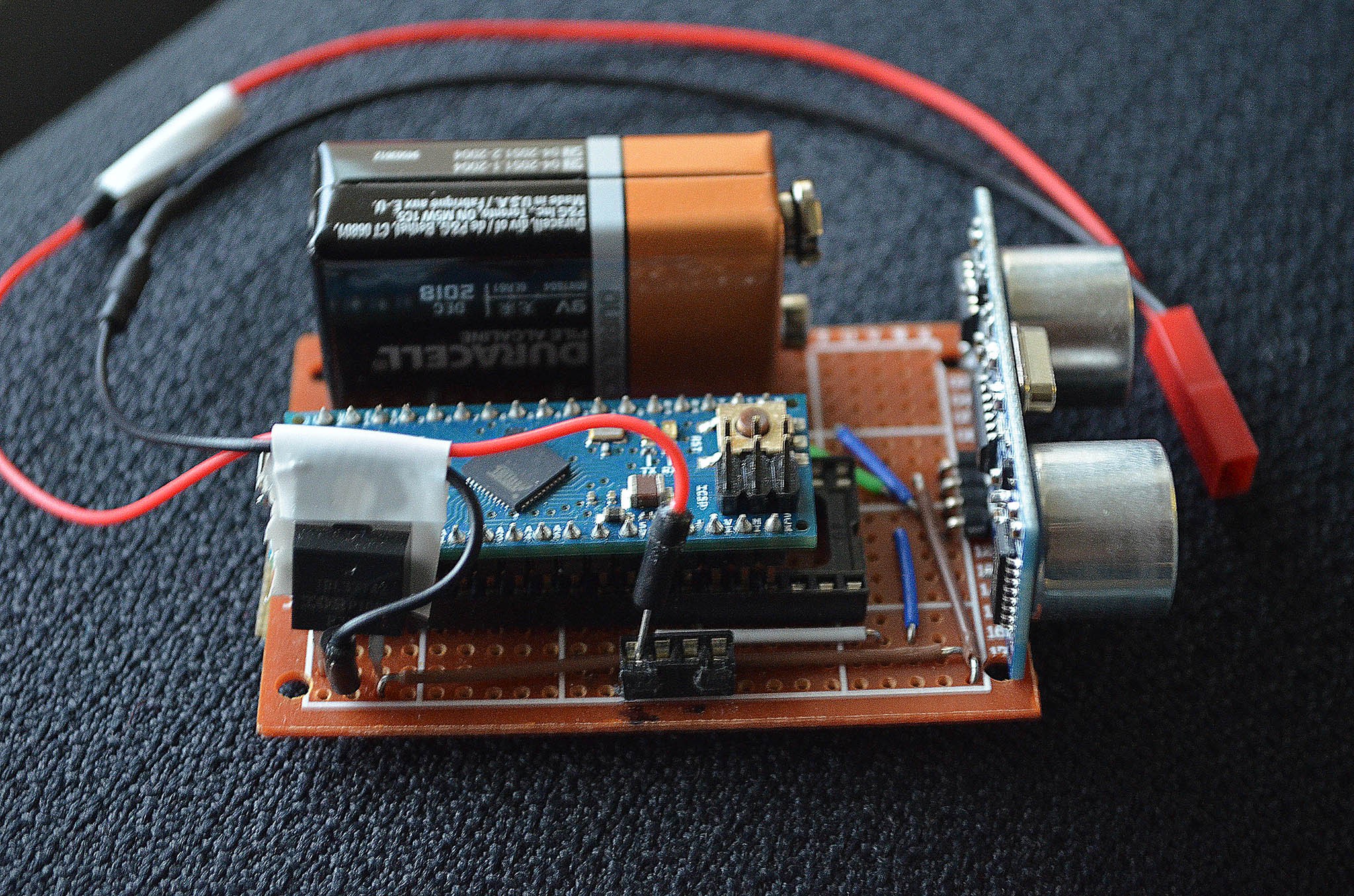

I now had to integrate the system into a single device. I switched to a much smaller controller board and arranged the parts on a prototyping grid, hand-soldering the connections with jumper wires. A 9V battery provided a primitive yet portable power source. This early prototype measured 50x70mm and weighed 115 grams. The assembly was attached to a utility glove with Velcro and tested. Users reported the obvious: the device was bulky, had poor weight balance, and the loose glove made for poor tactile feedback.

From Prototype to Product

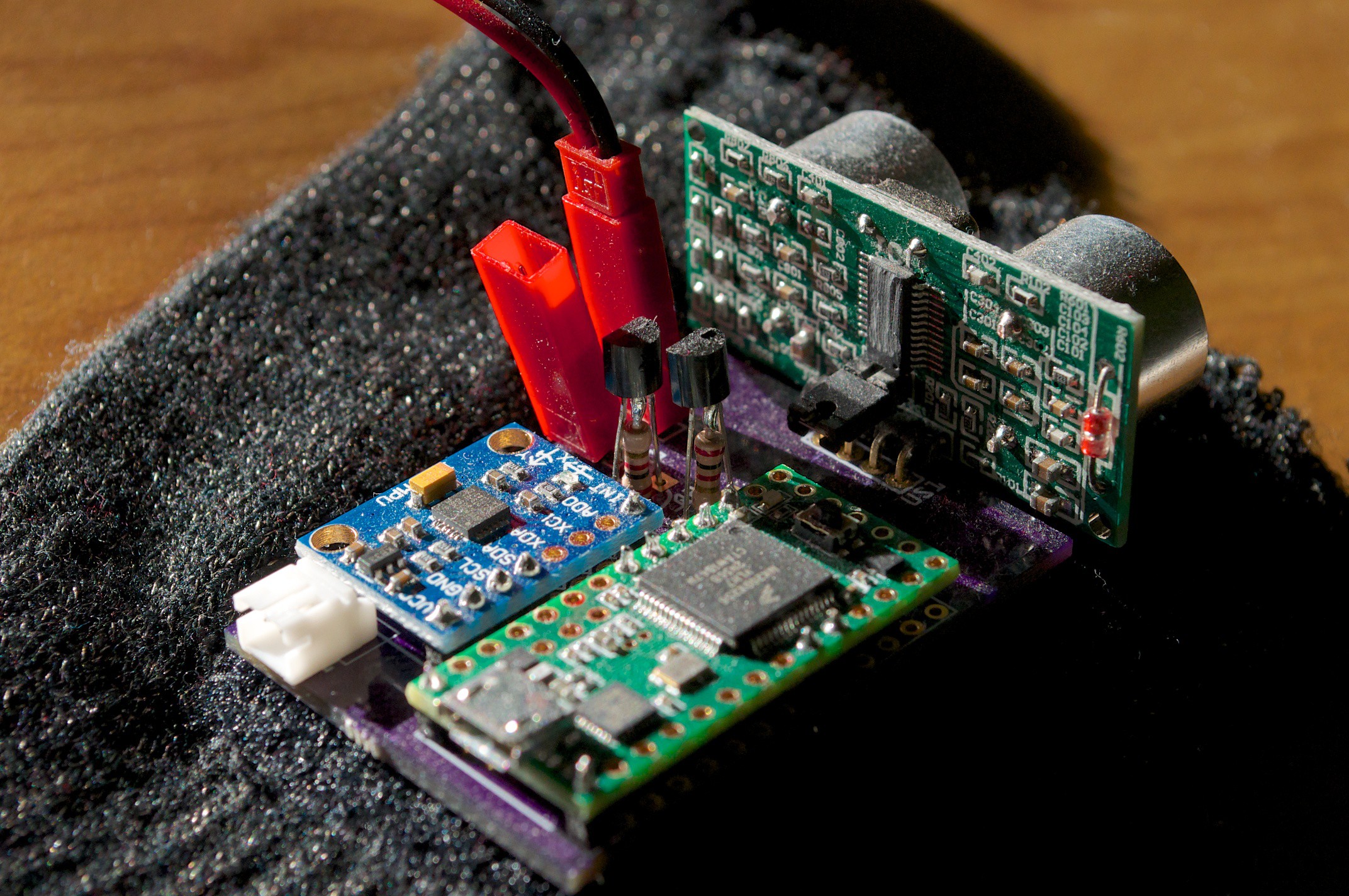

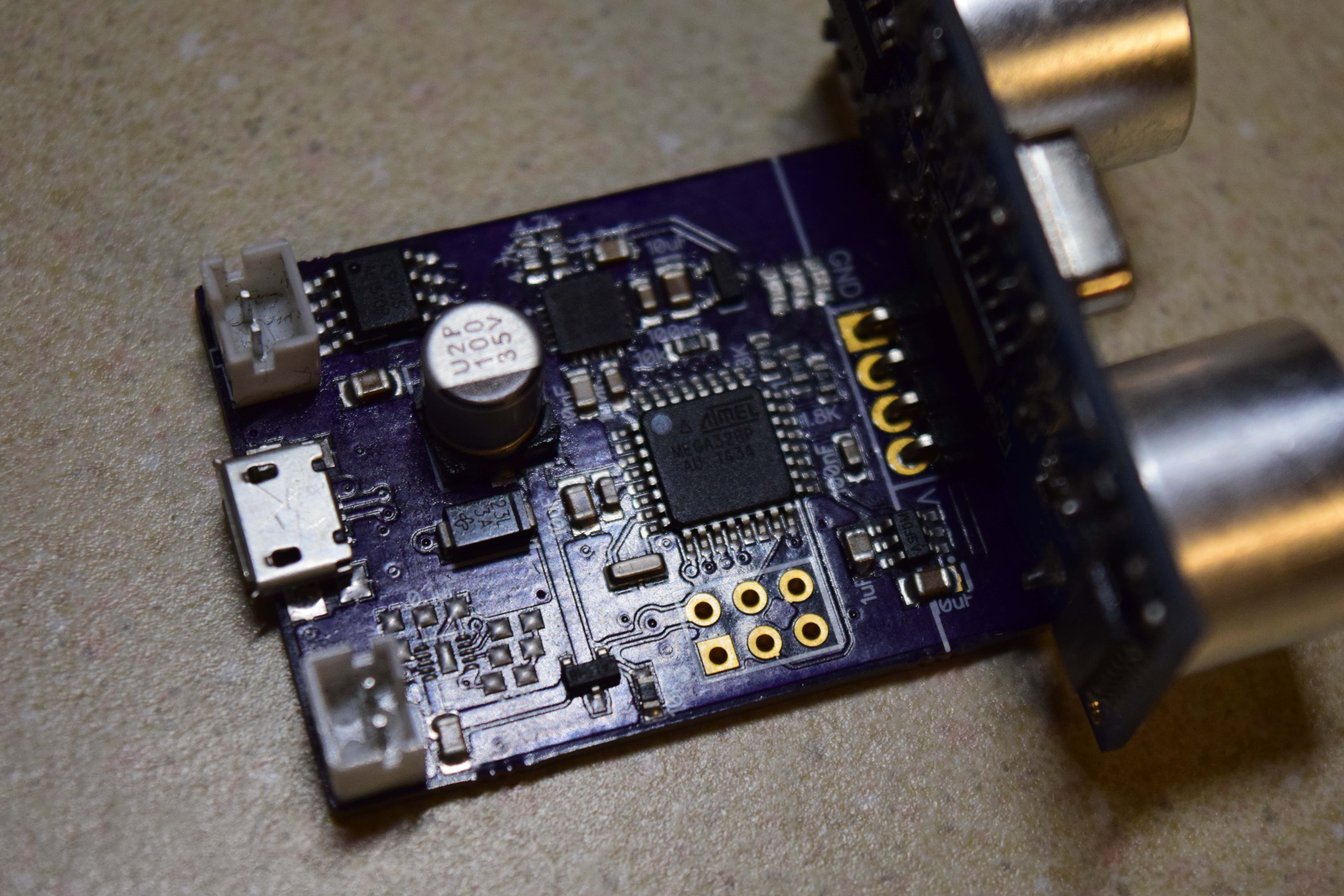

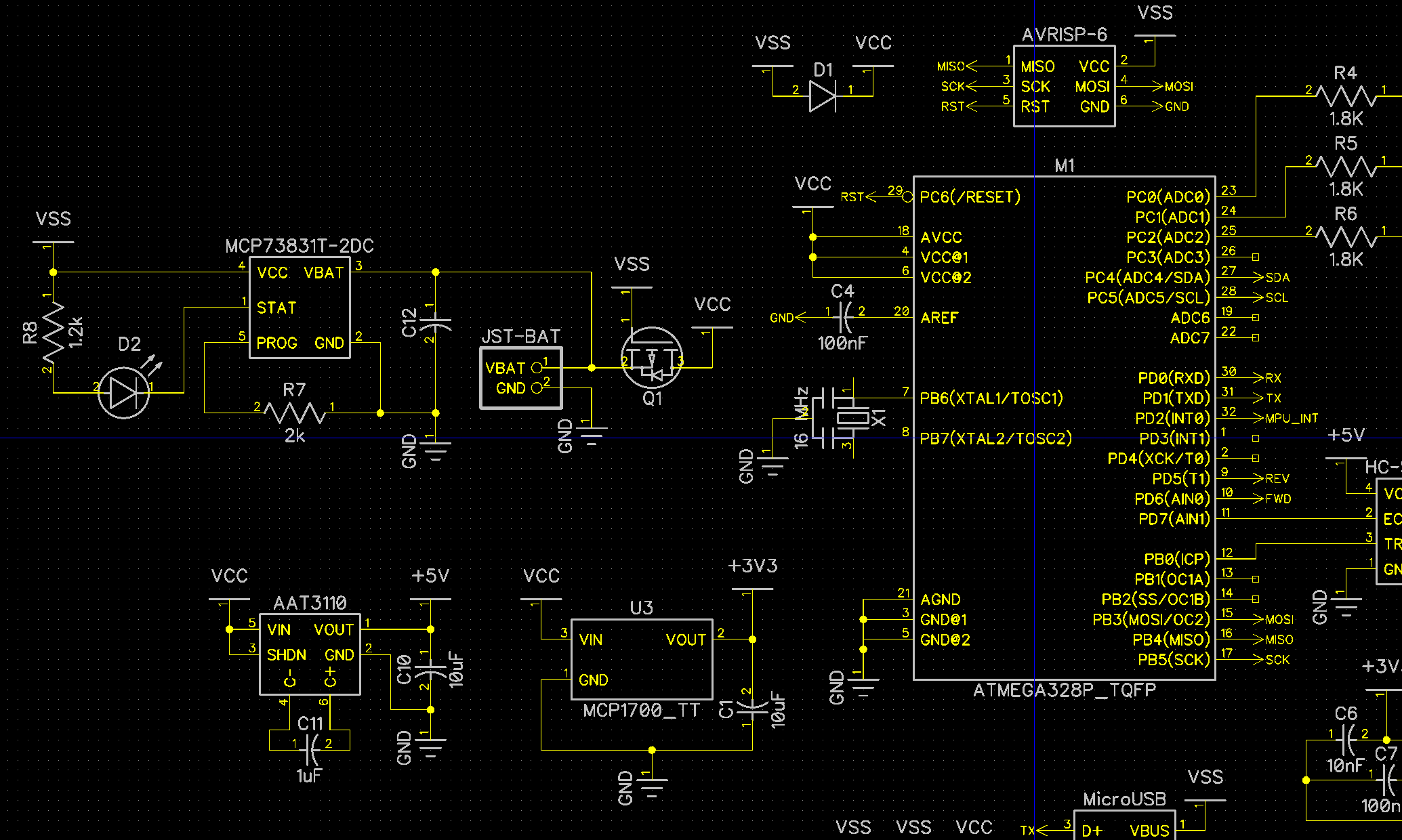

With my basic design vision physically realized, I became significantly more ambitious. Over a two-week development sprint, I learned and employed EAGLE CAD to create PCB layouts for my prototype, which enabled me to add features such as an accelerometer/gyroscope, support for lithium-polymer battery packs, and an additional wrist motor for complementary feedback patterns, all while significantly reducing the footprint. I manufactured the 35x55mm board with resources from my school's chemistry lab (cupric chloride etchant, as well as the essential fume hood + personal protection equipment!). The glove, too, was replaced with a more elastic variant, and the motor was sewn into the fingertip to ensure tactile sensitivity. Overall, the board was 45% smaller and weighed 60% less (45g), while improving functionality.

Iterative Improvement

Mark III has a slight board shrink (30x45mm, 40g) and systems upgrade. The 8-bit 16MHz AVR processor was swapped for a 32-bit 96MHz...


George Albercook

brian bloom

Awesome project! Any updates for 2016?