For this project, we weighed a number of technical and design considerations:

1. Form Factor

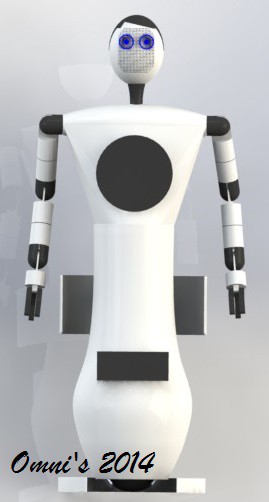

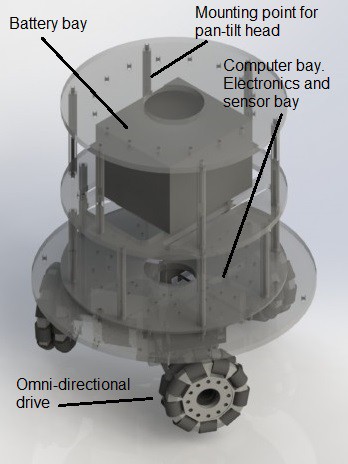

In a service-oriented industry such as the restaurant industry, a close and personal touch is essential. It makes diners feel welcome and at ease, which makes for a pleasant dining experience. Many form factors for a robotic waiter could carry out the day-to-day operations of a restaurant equally well. However, in keeping with that point, our team thinks that a humanoid robotic waiter, although harder to implement, will best approximate the interpersonal interaction between a human waiter and a diner. We also note that current technology cannot produce a facsimile of a real person with complete fidelity in outward appearance and movement, so attempting one would introduce a 'creepy' factor into the robot. We have therefore gone only part of the way and come up with the humanoid design for OSCAR shown in the figure on the right. With this design, we think customers will experience almost the same level of interaction as with a human waiter, without constantly having to come to terms with the robot's human-like characteristics, because its outward appearance clearly signals that it is not a human.

2. Locomotion, Navigation, and Collision Avoidance

When we think of waiters, we picture people in uniform gracefully negotiating the narrow spaces between tables while skillfully balancing several items in their hands as they serve diners. Traditional statically stable robots (which remain stable without any active feedback) require large bases, and therefore a large footprint, to stay upright. For this reason, they are poorly suited to the narrow walkways between tables or within the kitchen, and their movements are sometimes slow and less fluid than human locomotion. Dynamically stable robots (which require an active feedback mechanism to remain stable) are better suited to this purpose: they have a small base, and therefore a small footprint, and their dynamic stability makes them highly maneuverable.

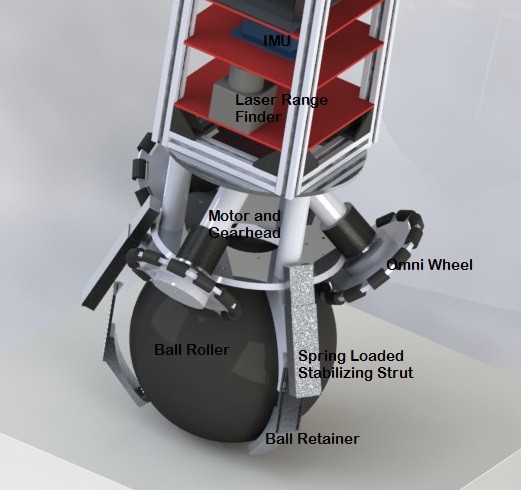

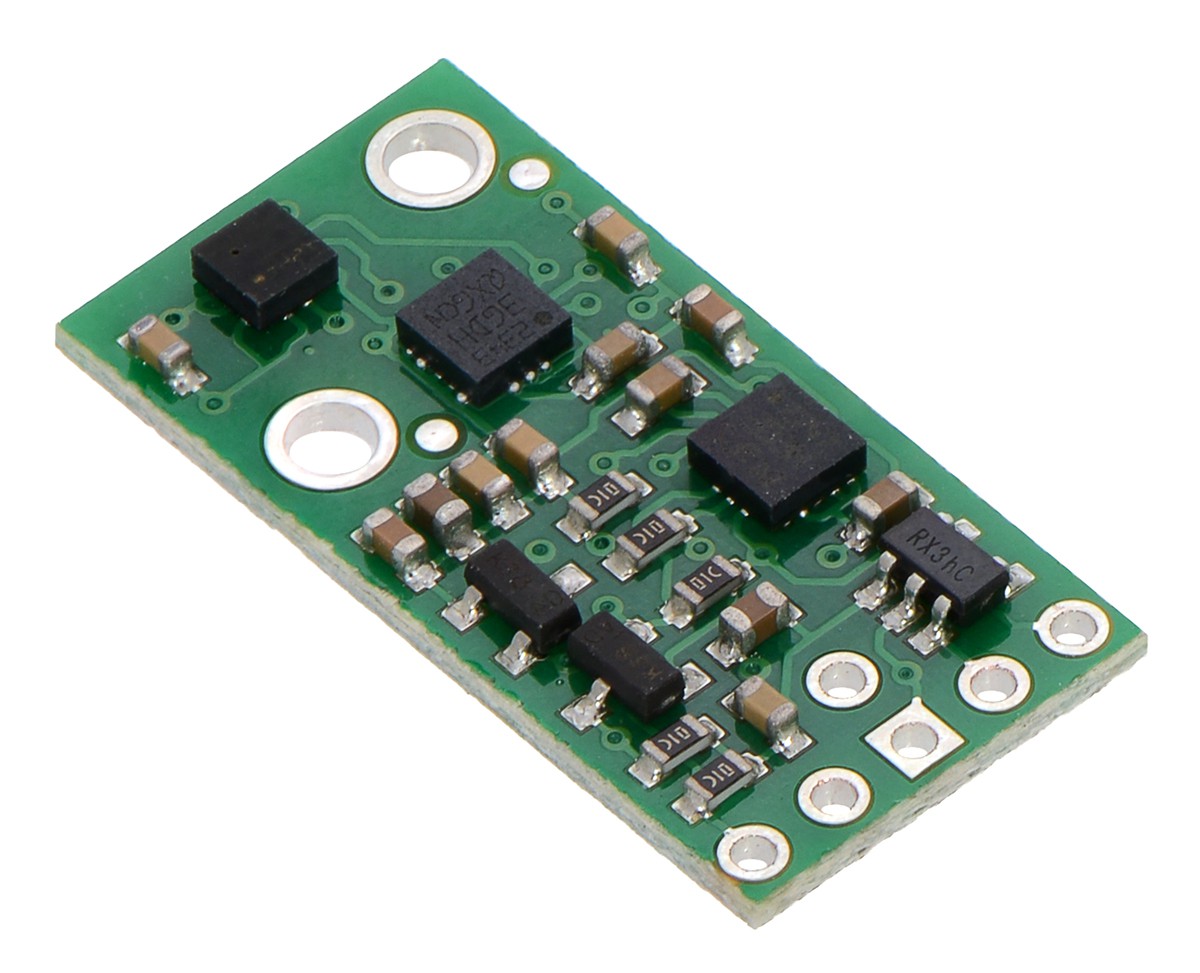

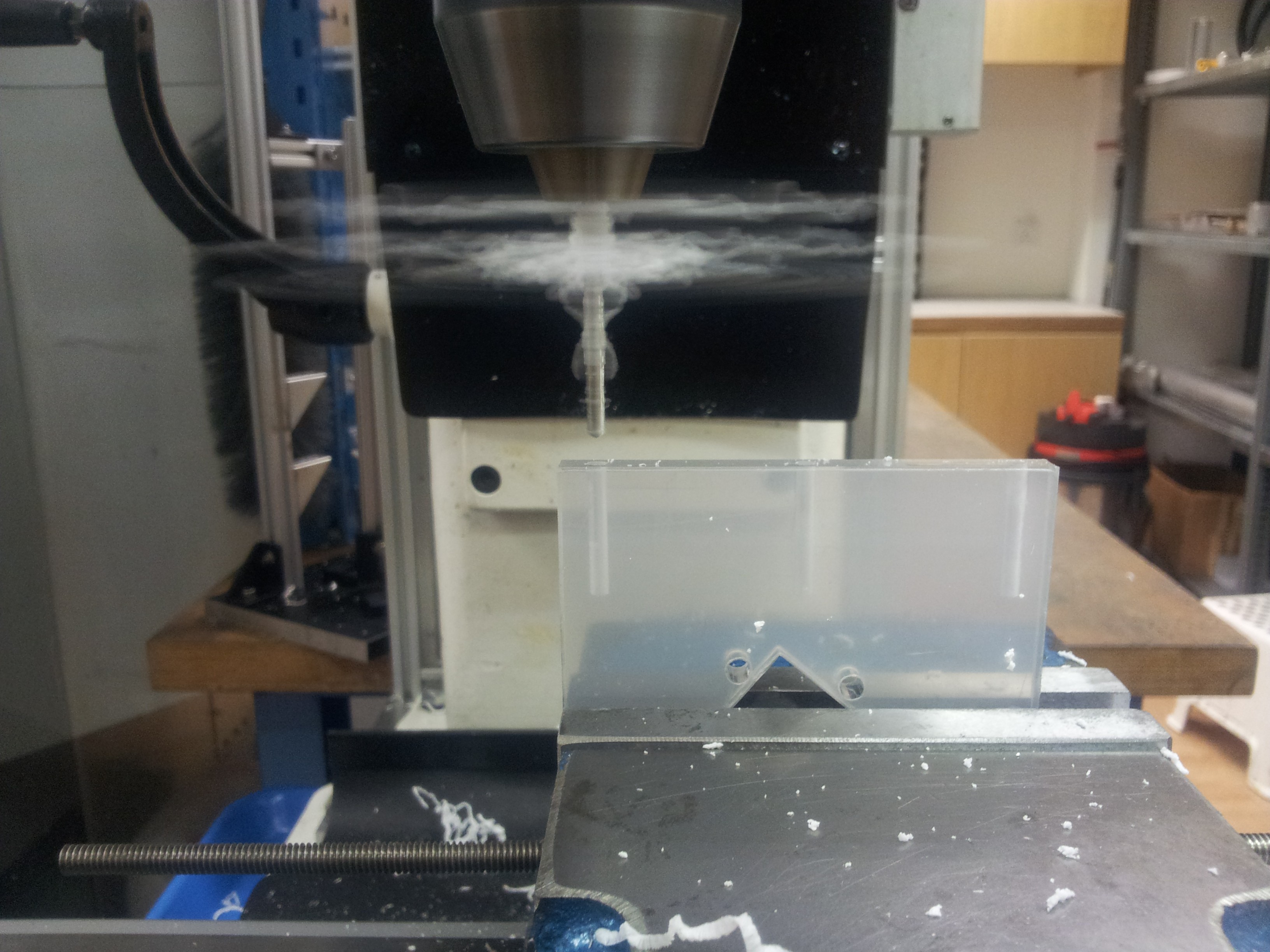

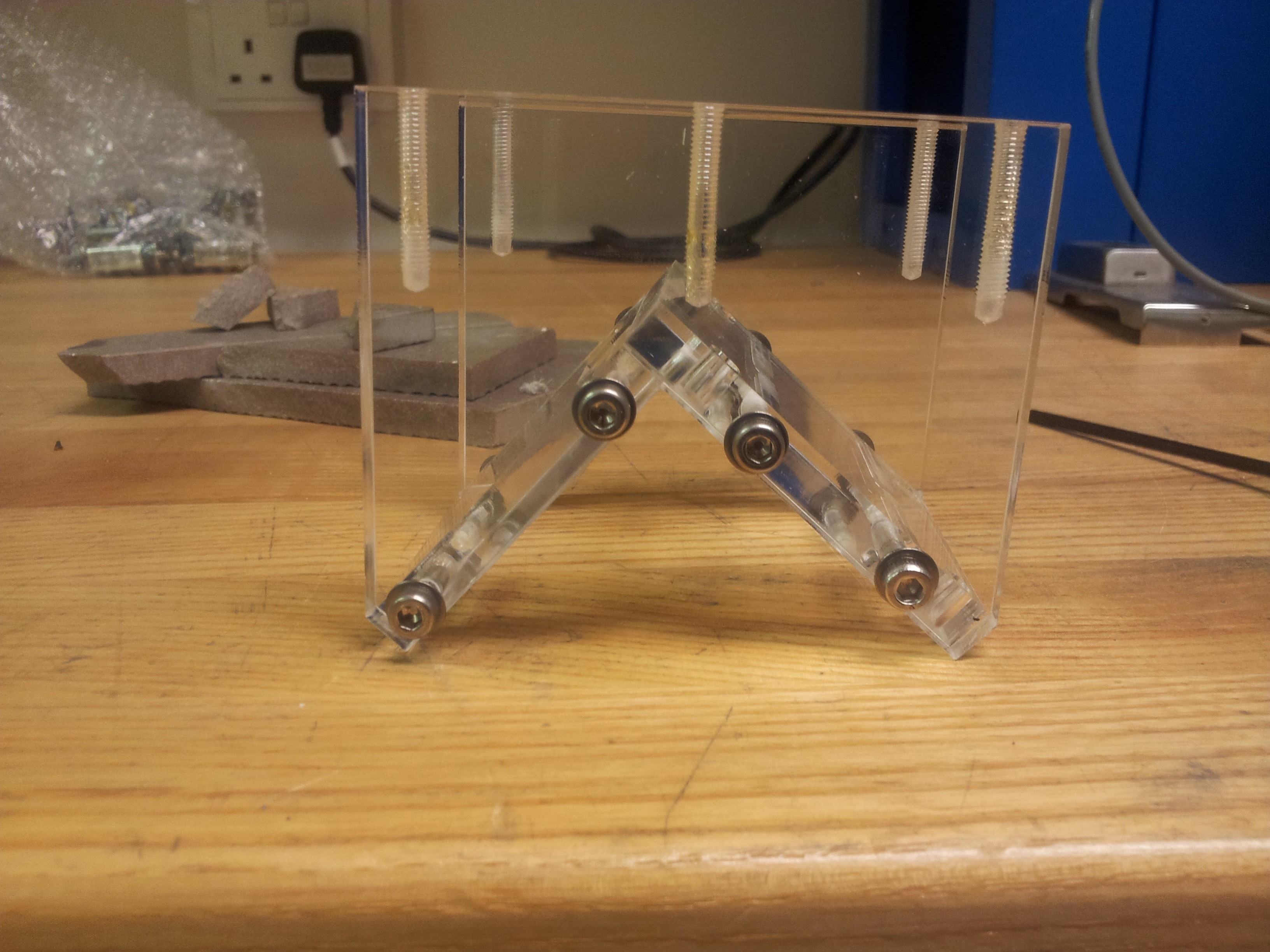

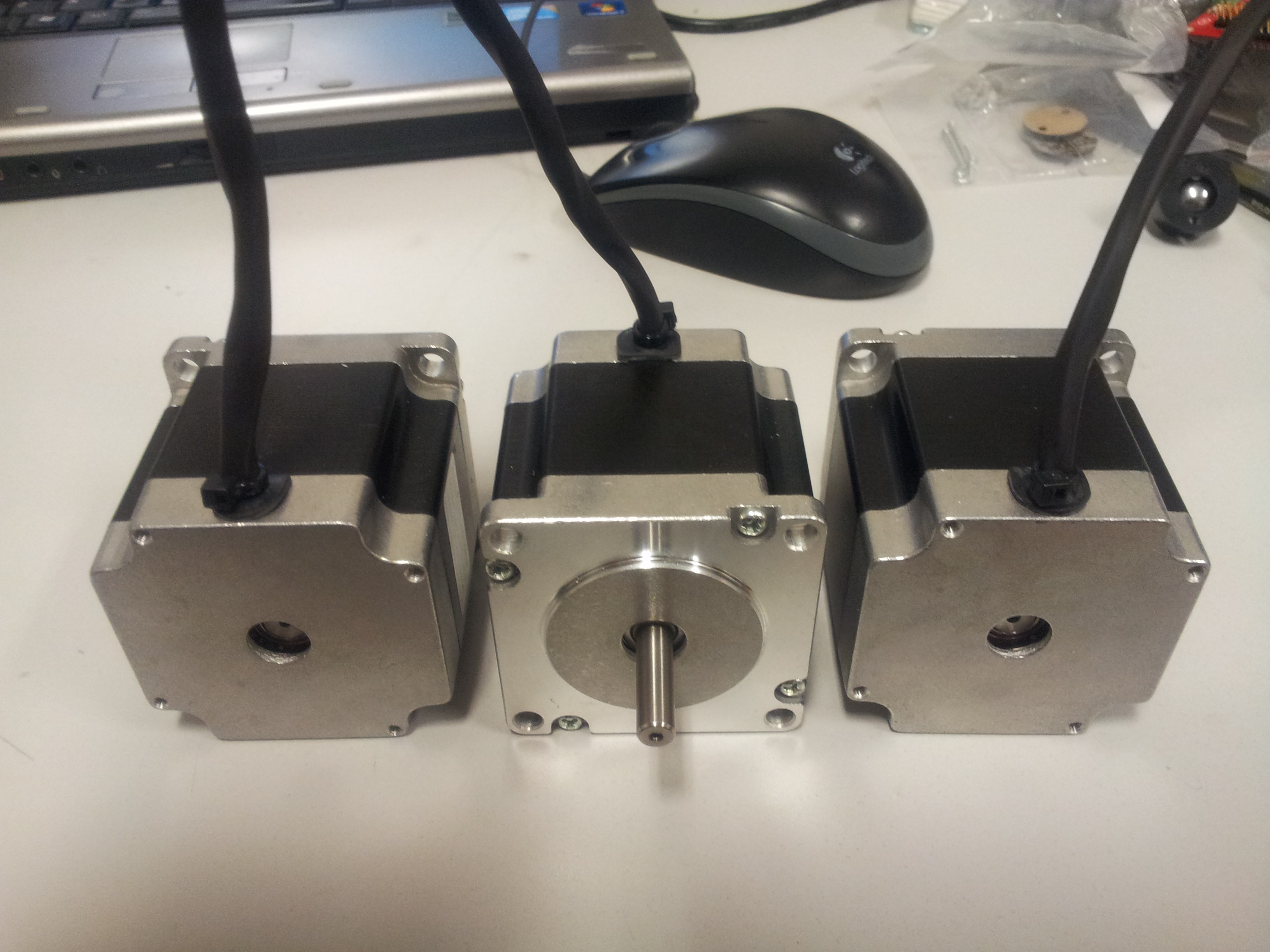

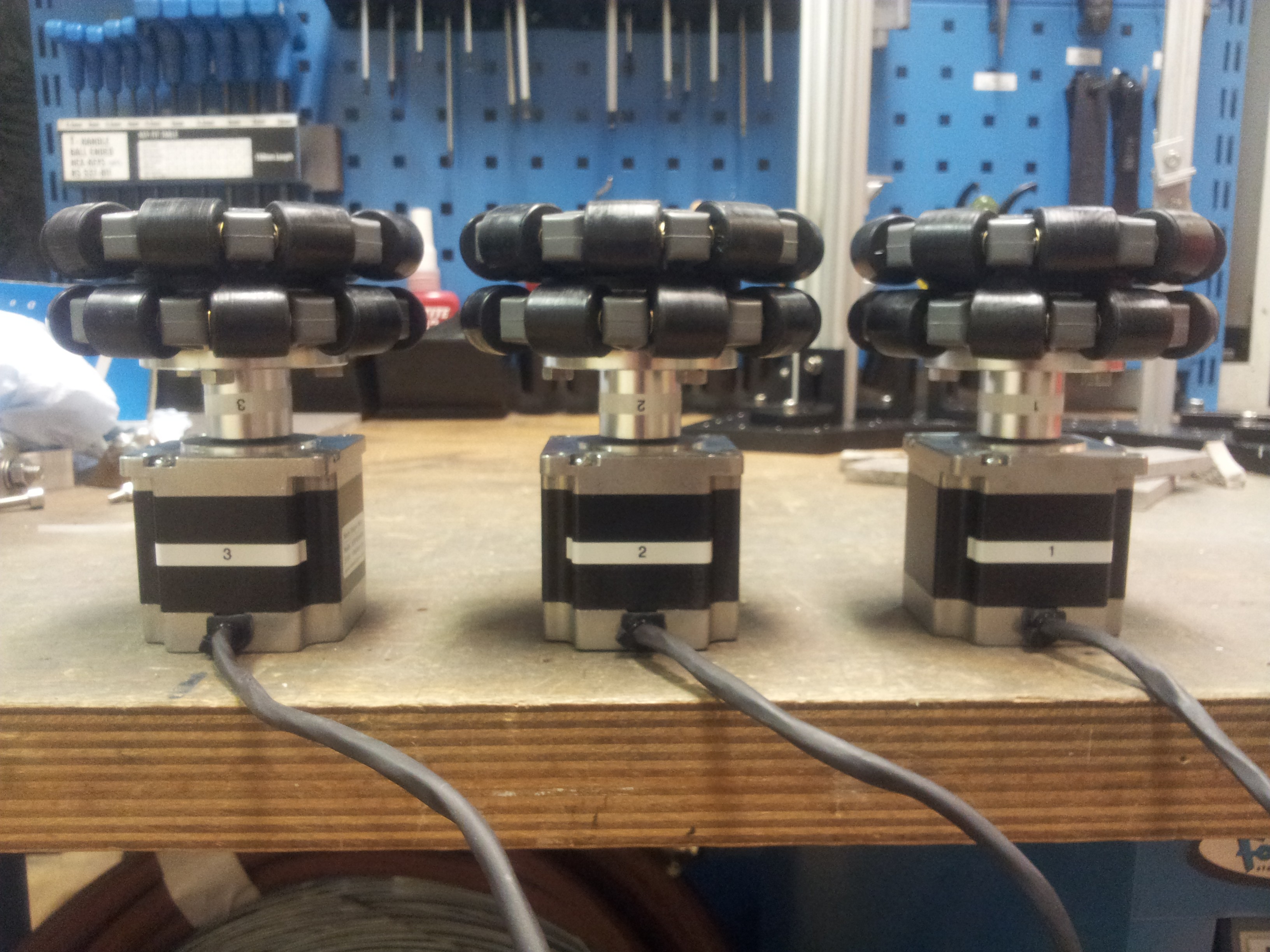

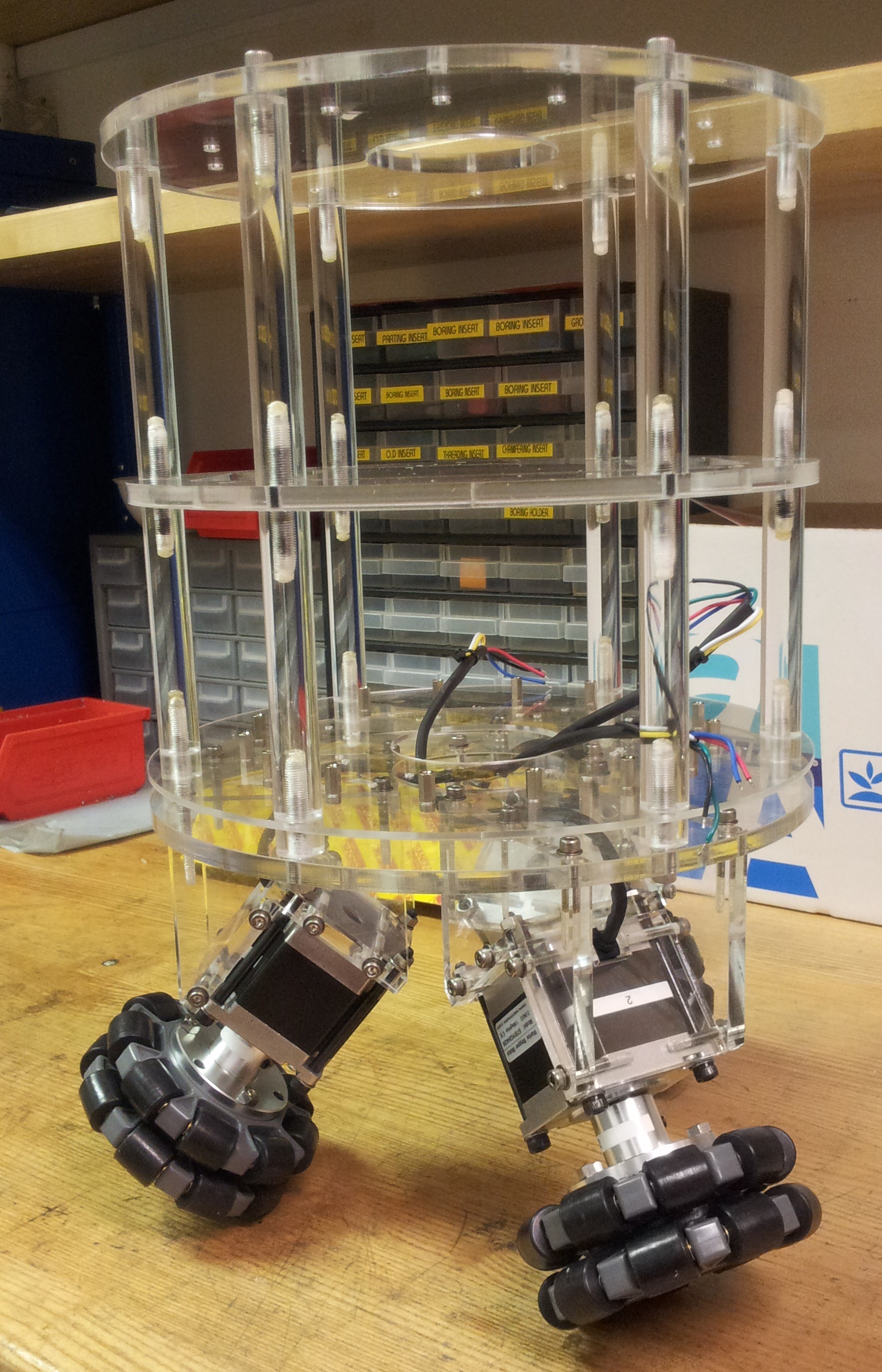

Our team has opted for the less common ball-drive configuration, shown on the right, for OSCAR. The concept of the ball drive is deceptively simple. It consists of three omni wheels (wheels with rollers around their circumference that allow sideways motion), driven by motors mounted at an angle to each other. Synchronized movement of the three wheels drives the ball, allowing motion in any direction (hence the "omni" in OSCAR) and even turning within its own footprint. OSCAR's attitude (its tilt) will be measured by the IMU (inertial measurement unit). Using this information together with feedback from the rotary encoders, the computer can make the adjustments needed to keep OSCAR balanced, whether stationary or moving.
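How three angled omni wheels combine to drive the ball can be sketched as a small kinematics function. This is a minimal sketch under stated assumptions, not OSCAR's firmware: the ball radius, wheel radius, 45° contact (zenith) angle and 120° wheel spacing are hypothetical values, and the model assumes rolling without slip both on the floor and at each wheel contact. Each wheel's rim speed is taken as the component of the ball's surface velocity at its contact point along that wheel's rolling direction.

```python
import math

# Hypothetical geometry for illustration only; OSCAR's real dimensions are not given.
BALL_RADIUS = 0.10                   # m, assumed
WHEEL_RADIUS = 0.03                  # m, assumed
ZENITH_ANGLE = math.radians(45.0)    # angle from vertical to each wheel contact point, assumed
WHEEL_AZIMUTHS = [math.radians(a) for a in (0.0, 120.0, 240.0)]  # wheels 120 deg apart


def wheel_speeds(vx: float, vy: float, spin_z: float = 0.0) -> list[float]:
    """Return omni-wheel angular speeds (rad/s) for a desired ball-centre velocity.

    vx, vy: desired ball-centre velocity in the body frame (m/s), body assumed upright.
    spin_z: ball angular velocity about the vertical axis relative to the body (rad/s).
    Assumes rolling without slip on the floor and at every wheel/ball contact.
    """
    # Rolling without slip on the floor gives v_centre = omega x (0, 0, R).
    wx = -vy / BALL_RADIUS
    wy = vx / BALL_RADIUS
    wz = spin_z
    sa, ca = math.sin(ZENITH_ANGLE), math.cos(ZENITH_ANGLE)
    speeds = []
    for theta in WHEEL_AZIMUTHS:
        # Contact point p = R(sa*cos t, sa*sin t, ca); rolling direction d = (-sin t, cos t, 0).
        # Rim speed v = d . (omega x p) = omega . (p x d) = R(-ca*cos t*wx - ca*sin t*wy + sa*wz)
        rim = BALL_RADIUS * (-ca * math.cos(theta) * wx
                             - ca * math.sin(theta) * wy
                             + sa * wz)
        speeds.append(rim / WHEEL_RADIUS)
    return speeds


if __name__ == "__main__":
    print(wheel_speeds(0.5, 0.0))       # translate forward at 0.5 m/s
    print(wheel_speeds(0.0, 0.0, 1.0))  # spin the ball about the vertical axis
```

For a pure translation the three wheel speeds sum to zero, while a pure spin about the vertical axis drives all three wheels equally. In a balancing loop, the controller would compute the desired ball motion from the IMU tilt and encoder feedback and pass it through a mapping like this one.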

We have designed a set of stabilizing struts to keep OSCAR from toppling over when dormant. As a safety measure, these struts are spring-loaded and can be deployed instantly if a fault condition causes OSCAR to lose its balance.
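The decision to release the struts can be sketched as a simple fault check run on every control cycle. The original does not specify which faults trigger deployment, so the conditions below (excessive tilt, stale IMU data, a motor driver fault), the thresholds and all names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical limits for illustration; OSCAR's real thresholds are not specified.
MAX_TILT_DEG = 15.0    # beyond this the balance controller is assumed unable to recover
MAX_IMU_AGE_S = 0.05   # an IMU sample older than this is treated as a sensor fault


@dataclass
class BalanceStatus:
    tilt_x_deg: float   # pitch reported by the IMU
    tilt_y_deg: float   # roll reported by the IMU
    imu_age_s: float    # time since the last valid IMU sample
    motor_fault: bool   # True if any motor driver reports a fault


def should_deploy_struts(status: BalanceStatus) -> bool:
    """Return True if the spring-loaded struts should be released."""
    # Combine pitch and roll into one tilt magnitude (small-angle approximation).
    tilt = (status.tilt_x_deg ** 2 + status.tilt_y_deg ** 2) ** 0.5
    if tilt > MAX_TILT_DEG:
        return True
    if status.imu_age_s > MAX_IMU_AGE_S:
        return True
    return status.motor_fault
```

One natural fail-safe choice, again an assumption rather than the stated design, is to hold the struts retracted with a powered latch so that a loss of power also releases them.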

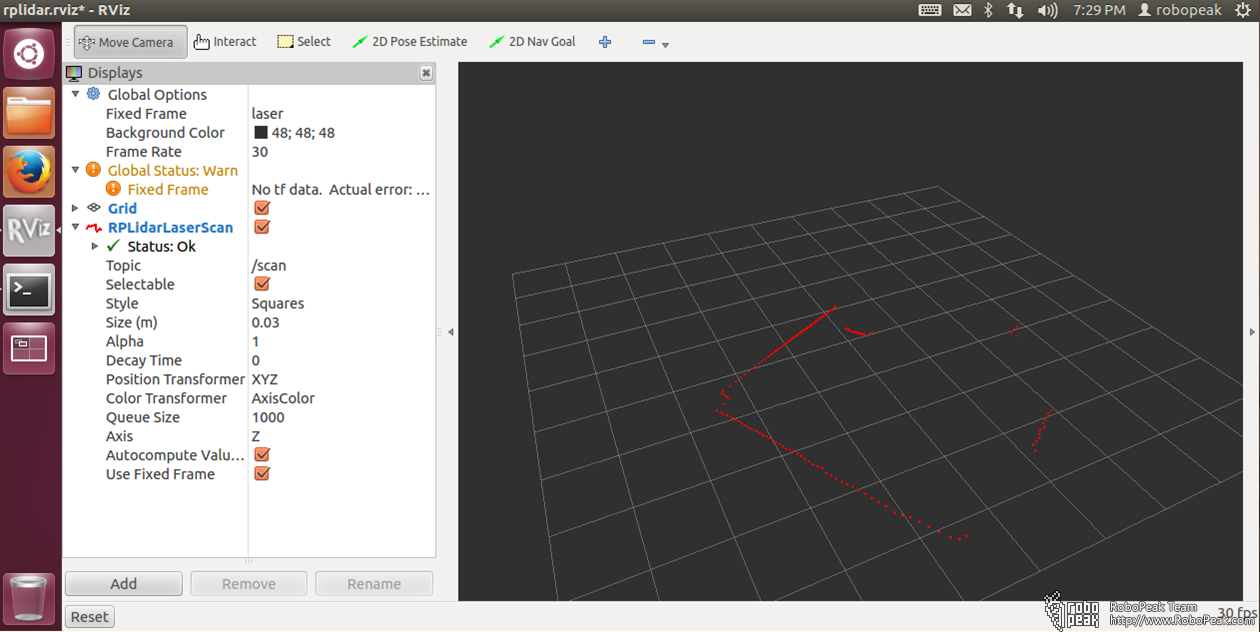

As the interior of a restaurant is relatively fixed and well defined, a map of it can be created and stored in OSCAR. Using this map together with the laser range finder (its black window is visible at the lower part of the torso) and the head-mounted stereoscopic cameras (behind the LED face display), OSCAR can navigate autonomously and avoid collisions without the need for any markers.
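The original does not name a path-planning algorithm. As one common option, the stored map can be rasterized into an occupancy grid (tables and walls marked occupied) and a global route found with A* search; the grid representation, 4-connected moves and function name below are assumptions. Avoiding people and other moving obstacles with the range finder and cameras would sit on top of this and is not shown.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = table or wall).

    start and goal are (row, col) tuples; returns a list of cells or None.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]   # entries are (f = g + h, g, cell)
    came_from = {start: None}
    g_cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk back through came_from to rebuild the route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None  # goal unreachable


# A tiny hypothetical floor plan: row 1 is a line of tables with one gap.
floor = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
print(astar(floor, (0, 0), (2, 0)))
```

Because the grid is fixed between layout changes, such routes could even be precomputed between frequently used points such as the kitchen pass and each table.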

3. Power Source

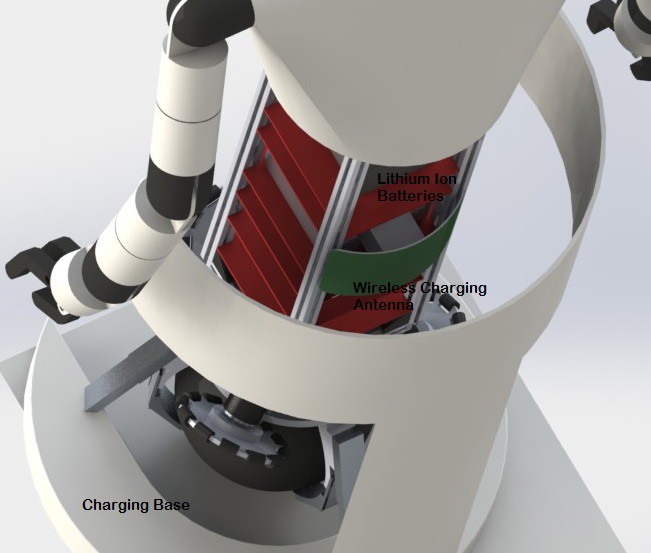

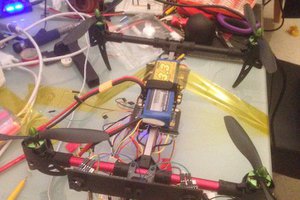

OSCAR is powered by several high-capacity lithium-ion batteries mounted at the top of the electronics package (see figure below) to give a high center of gravity. Charging will be done wirelessly through a charging base, eliminating the need for any external contacts. OSCAR will be able to navigate autonomously to the base and dock itself for charging when its batteries are running low.
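The "dock when the batteries are running low" behavior can be sketched as a two-state mode switch with hysteresis, so that OSCAR does not bounce between serving and charging when the charge hovers near a single threshold. The 20 % and 90 % state-of-charge thresholds and the mode names are hypothetical, and how the state of charge is estimated is not specified in the original.

```python
# Hypothetical thresholds for illustration; real values depend on the battery pack.
DOCK_BELOW = 0.20     # head for the charging base below 20 % state of charge
RESUME_ABOVE = 0.90   # return to service once charged above 90 %


def next_mode(mode: str, state_of_charge: float) -> str:
    """Switch between 'serving' and 'charging' using two separate thresholds."""
    if mode == "serving" and state_of_charge < DOCK_BELOW:
        return "charging"   # navigate to the wireless charging base and dock
    if mode == "charging" and state_of_charge > RESUME_ABOVE:
        return "serving"    # undock and resume waiting tables
    return mode             # between the thresholds, keep the current mode
```

The gap between the two thresholds is the design choice that matters: with a single threshold, the small voltage rebound after the load drops could send OSCAR straight back out.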

4. Interactivity

Taking orders and answering diners' requests require a...


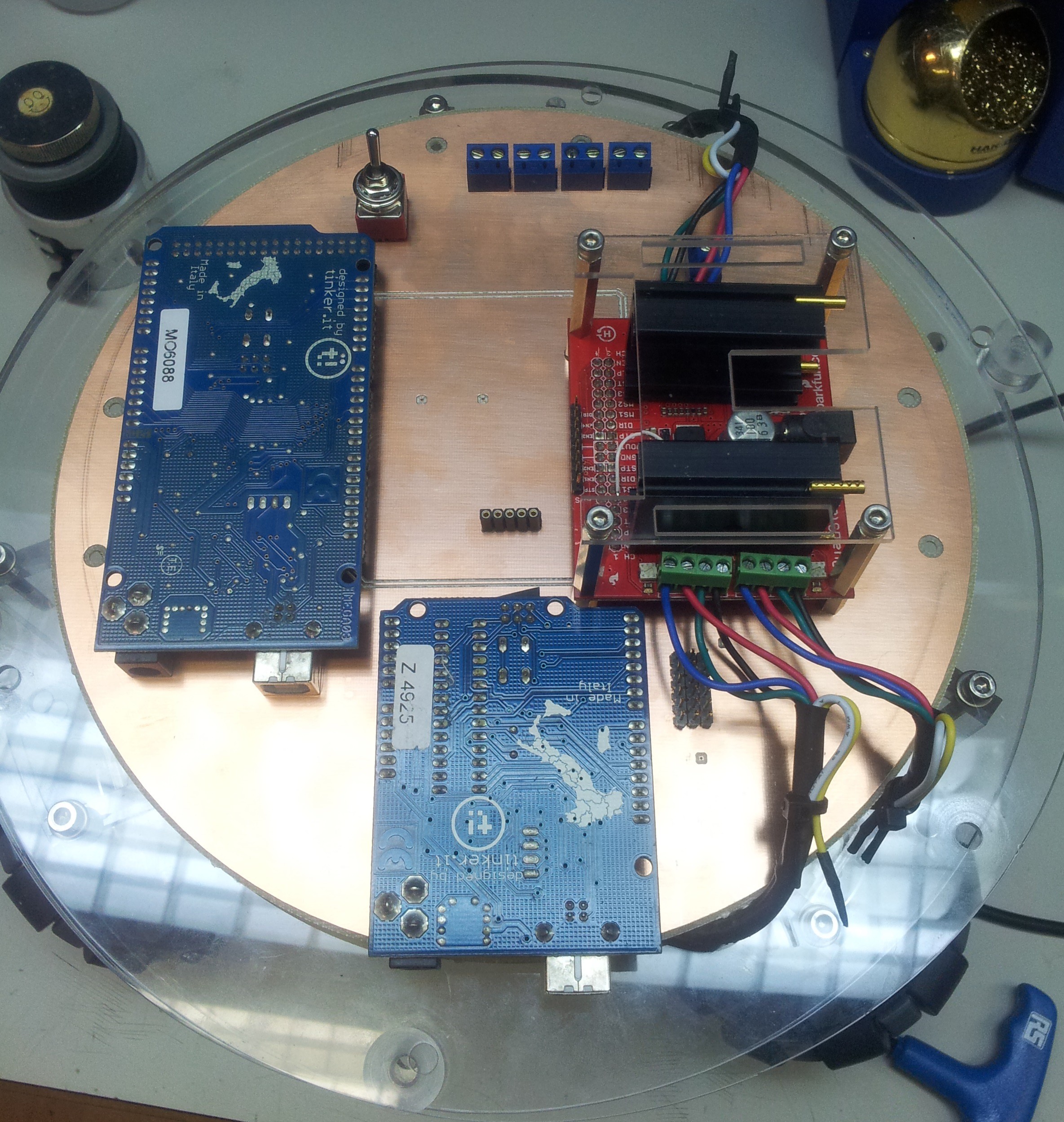

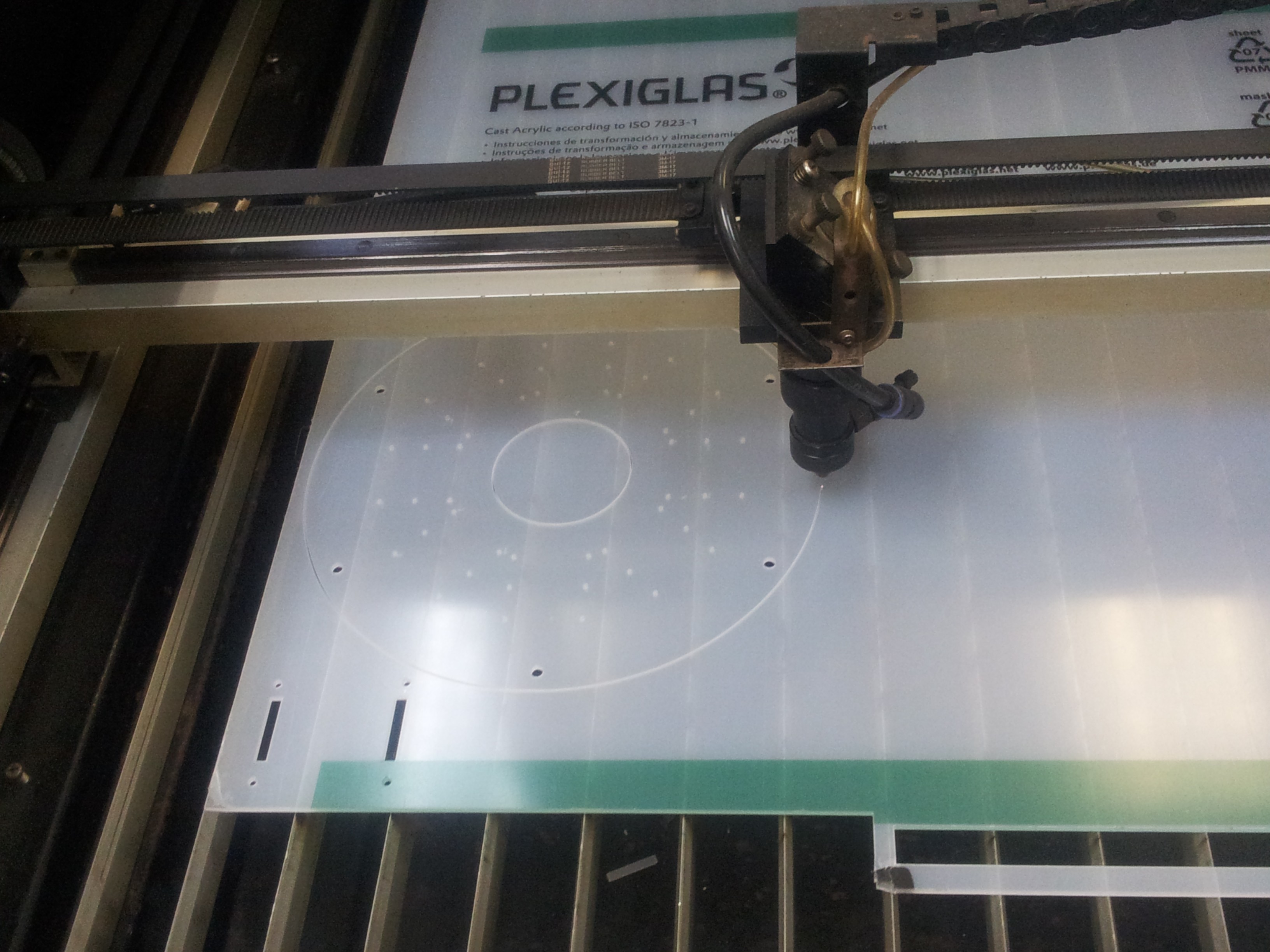

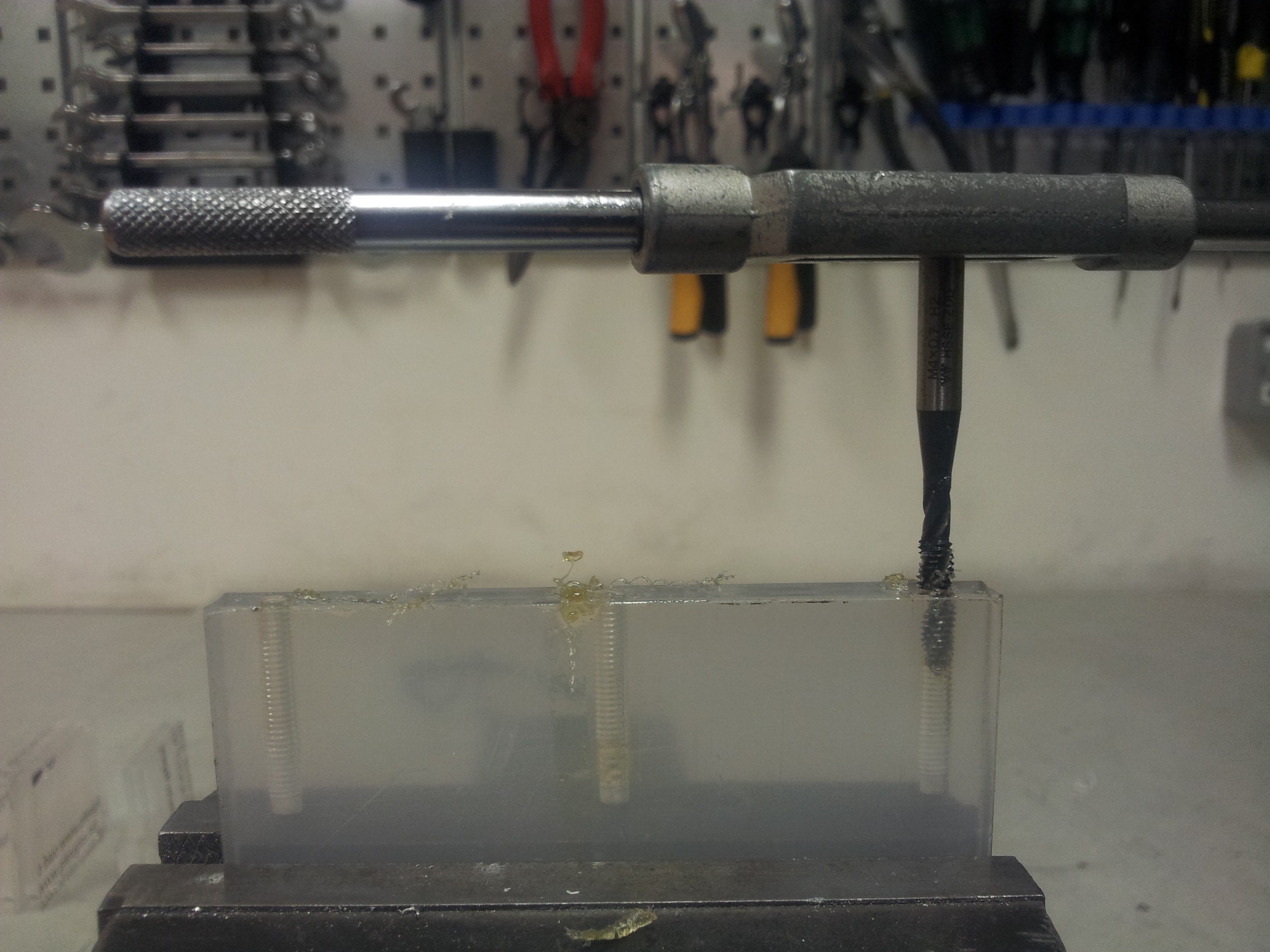

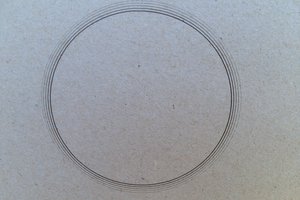

The plates, about 260 mm in diameter, are held together by 10 mm diameter spacers made of acrylic rod. The driver and balancing electronics will be mounted on the plate just above the stepper motors to minimize cable length. The battery, which accounts for the bulk of the weight, will be mounted high up for stability (akin to the configuration of an inverted pendulum). We will use an inexpensive lead-acid battery at first, which will probably be replaced with a LiPo pack in the future.
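Why mounting the heaviest component high helps can be made concrete with the textbook linearized inverted pendulum. This is a simplified model (a point mass at height l on a massless rod pivoting about a fixed point, released from rest at a small tilt), not a model of OSCAR's actual ball-and-body dynamics, and the two heights below are hypothetical.

```latex
\ddot{\theta} = \frac{g}{l}\,\theta
\quad\Rightarrow\quad
\theta(t) = \theta_0 \cosh\!\left(\frac{t}{\tau}\right),
\qquad
\tau = \sqrt{\frac{l}{g}}

l = 0.3\ \text{m}: \quad \tau = \sqrt{0.3/9.81} \approx 0.175\ \text{s}
\qquad
l = 0.6\ \text{m}: \quad \tau = \sqrt{0.6/9.81} \approx 0.247\ \text{s}
```

Doubling the height of the mass lengthens the time constant by a factor of $\sqrt{2}$, so a small tilt grows more slowly and the balancing controller has more time to correct it. This is the same reason a broom is easier to balance on a fingertip than a pencil.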
