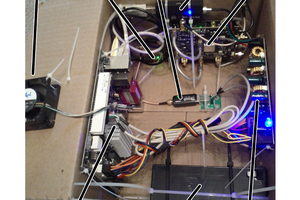

After spending quite a long time learning the details of things like PCIe lanes, I eventually managed to specify the parts for this machine:

- GPU: Quad Nvidia GTX 1080 (not yet purchased, using a GTX 970 for now)

- Motherboard: Gigabyte LGA2011-3 Intel X99 SLI ATX Motherboard GA-X99P-SLI

- PSU: EVGA SuperNOVA 1600 G2 80+ GOLD, 1600W

- RAM: Patriot VIPER 4 Series 2800MHz (PC4 22400) 32GB Quad Channel DDR4 Kit - PV432G280C6QK

- CPU: Intel Core i7-5930K Haswell-E 6-Core 3.5GHz LGA 2011-v3 140W Desktop Processor BX80648I75930K

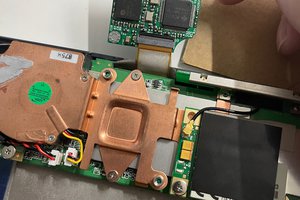

- Cooling: Corsair Hydro Series High Performance Liquid CPU Cooler H60

- Case: Corsair Carbide Series Air 540 High Airflow ATX Cube Case - Black

- OS drive: Samsung 950 PRO Series - 512GB PCIe NVMe - M.2 Internal SSD (MZ-V5P512BW)

- Data drive: Samsung 850 EVO 1 TB 2.5-Inch SATA III Internal SSD (MZ-75E1T0B/AM)

Since the GTX 1080 has only just come out, I decided not to buy any until the price drops a bit and third-party vendors start selling them. I read that it's not that smart to buy the "Founders Edition" because its cooling solution is not that good and there is an early-adopter price premium. For the moment I am using a single GTX 970 to get everything set up and working, and I plan to replace it eventually.

The CPU has 40 PCIe 3.0 lanes. All the motherboards seem to share the limitation that you can't really tell them how many lanes to assign to each slot. I would rather it used x8, x8, x8, x8 for the four GPUs, leaving 8 lanes for the M.2 drive and other devices, but in practice it tries to give the graphics cards x16 and then has to run the M.2 at PCIe 2.0. This is a known limitation.
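The lane arithmetic above is easy to check with a few lines of Python. This is a minimal sketch that only uses the 40-lane figure from the post; the `remaining_lanes` helper is hypothetical, and the x16-first layout is an illustrative assumption, not the board's documented slot wiring.

```python
# Lane budget for the CPU's 40 PCIe 3.0 lanes (figure from the post).
CPU_LANES = 40


def remaining_lanes(gpu_widths, total=CPU_LANES):
    """Return how many CPU lanes are left after giving each GPU slot its width."""
    used = sum(gpu_widths)
    if used > total:
        raise ValueError(f"layout needs {used} lanes but only {total} are available")
    return total - used


# The layout I would prefer: four GPUs at x8 each.
preferred = [8, 8, 8, 8]
print(remaining_lanes(preferred))  # 8 lanes left for the M.2 drive and other devices

# Hypothetical x16-first layout, for illustration only (not the board's actual wiring).
x16_first = [16, 8, 8, 8]
print(remaining_lanes(x16_first))  # 0 lanes left
```

Four cards at x16 would need 64 lanes, so any layout that favors x16 slots has to squeeze something else, which is where the M.2 drive loses out.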

I think that for these compute-intensive tasks the bandwidth between the CPU and the GPUs is not too important, because most of the time is spent computing on the GPU.
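A rough way to sanity-check that intuition is to compare theoretical link bandwidths. This sketch uses the standard signaling rates (PCIe 2.0: 5 GT/s per lane with 8b/10b encoding; PCIe 3.0: 8 GT/s per lane with 128b/130b encoding) and ignores protocol overhead, so real throughput will be lower. The 256 MB batch size and the x4 width assumed for the M.2 link are hypothetical values chosen only for illustration.

```python
# Theoretical one-direction PCIe bandwidth per link, from signaling rate and line encoding.
GEN_SPECS = {
    2: (5.0e9, 8 / 10),      # PCIe 2.0: 5 GT/s per lane, 8b/10b encoding
    3: (8.0e9, 128 / 130),   # PCIe 3.0: 8 GT/s per lane, 128b/130b encoding
}


def link_bandwidth_gb_s(gen, lanes):
    """Theoretical payload bandwidth in GB/s (1 GB = 1e9 bytes), ignoring protocol overhead."""
    transfers_per_s, encoding_efficiency = GEN_SPECS[gen]
    # Each transfer carries one bit per lane; divide by 8 to get bytes.
    return transfers_per_s * encoding_efficiency * lanes / 8 / 1e9


def transfer_ms(num_bytes, gen, lanes):
    """Milliseconds to move num_bytes over the link at the theoretical rate."""
    return num_bytes / (link_bandwidth_gb_s(gen, lanes) * 1e9) * 1e3


# GPU at x16 or x8 on PCIe 3.0, and an (assumed) x4 M.2 link dropped to PCIe 2.0.
for gen, lanes in [(3, 16), (3, 8), (2, 4)]:
    print(f"PCIe {gen}.0 x{lanes}: {link_bandwidth_gb_s(gen, lanes):.2f} GB/s")

# Hypothetical 256 MB batch of training data copied to one GPU.
batch_bytes = 256 * 10**6
print(f"x16: {transfer_ms(batch_bytes, 3, 16):.1f} ms, x8: {transfer_ms(batch_bytes, 3, 8):.1f} ms")
```

Dropping a GPU from x16 to x8 halves the copy bandwidth, but whether that matters depends on how long the GPU spends computing on each batch; if the compute time is much longer than the copy time, the link width barely shows up in total training time.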

I will be installing Ubuntu 14.04, CUDA Toolkit 7.5, cuDNN v4, and TensorFlow on Python 3.

john

Crazy how those parts are no longer worth half as much as they used to be.