NOTE: I know this can be done easily in other ways, and in fact the project that brought this up has a Raspberry Pi 3 on board, which has more than enough power to do audio recognition on its own. This is more of a "can I do it this way?" project :)

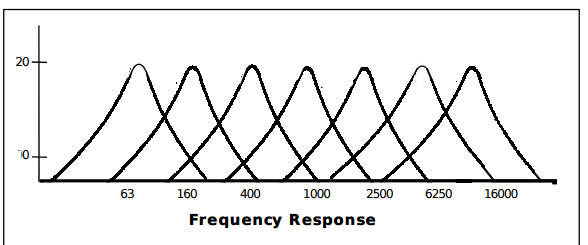

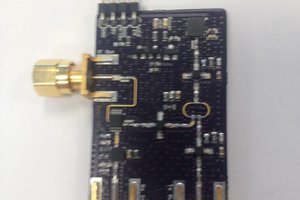

The novelty of this approach is the use of a spectrum analyzer IC of the kind commonly found in the graphic equalizer circuits of home stereos. The chip I will be using for initial testing of the concept is the MSGEQ7. This IC detects the peak level in each of 7 frequency bands, then outputs those levels as an analog voltage, stepping sequentially through the bands, one band per "clock tick". That analog voltage is then sampled by the microcontroller and decoded. By using this chip, the micro does not need to do the heavy lifting of performing an FFT. It simply needs to compare the values to a lookup table stored inside the controller. To account for volume differences, the peaks will be reduced to their lowest ratios. These ratios are then compared to the table entries, allowing for a margin of error.
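The table comparison described above could look something like the following. This is only a minimal sketch: the `signature_t` layout, the `match_signature` name, and the idea of one shared margin for all bands are assumptions made for illustration, not the project's actual firmware.

```c
#include <stddef.h>
#include <stdint.h>

#define NUM_BANDS 7

/* One lookup-table entry: an ID plus the expected ratio for each band.
   This layout is an assumption made for the sketch. */
typedef struct {
    uint8_t  id;
    uint16_t ratio[NUM_BANDS];
} signature_t;

/* Returns the id of the first table entry whose every band lies within
   +/- margin of the measured ratio, or -1 if no entry matches. */
int match_signature(const uint16_t measured[NUM_BANDS],
                    const signature_t *table, size_t entries,
                    uint16_t margin)
{
    for (size_t i = 0; i < entries; i++) {
        int ok = 1;
        for (int b = 0; b < NUM_BANDS; b++) {
            uint16_t m = measured[b];
            uint16_t e = table[i].ratio[b];
            /* Absolute difference without going negative. */
            uint16_t diff = (m > e) ? (uint16_t)(m - e) : (uint16_t)(e - m);
            if (diff > margin) {
                ok = 0;
                break;
            }
        }
        if (ok) {
            return table[i].id;
        }
    }
    return -1;
}
```

A per-band margin, or a "closest entry wins" rule instead of "first entry within the margin", would be just as reasonable; the sketch picks the simplest option that fits in a small micro.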

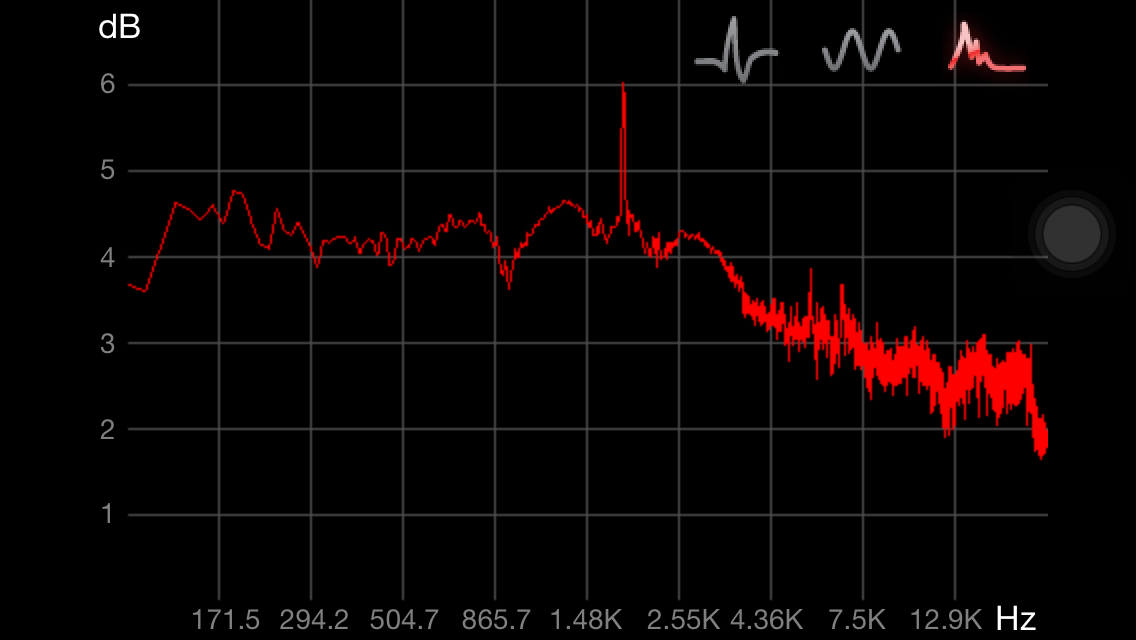

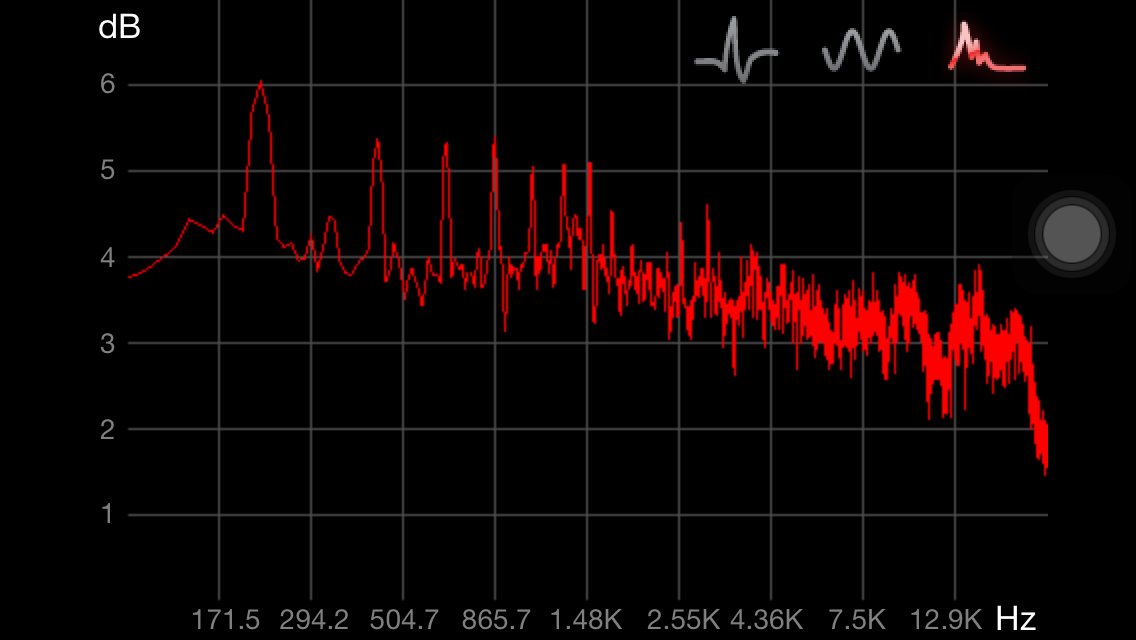

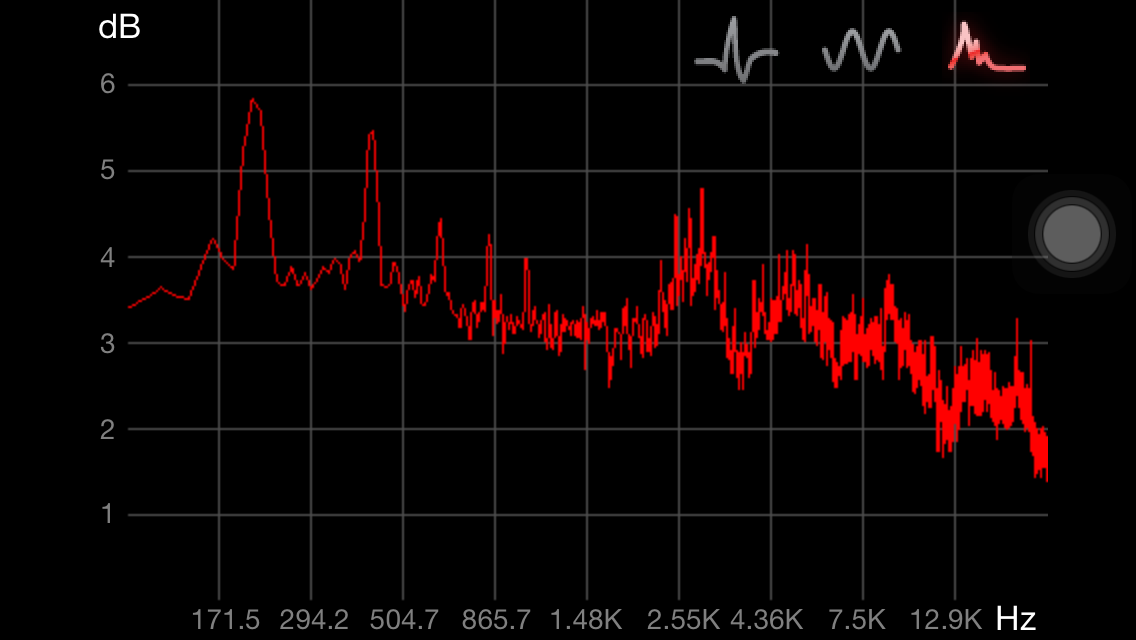

A few examples of audio spectra for different sounds.

The first is me whistling.

The next two are snapshots of me saying different words.

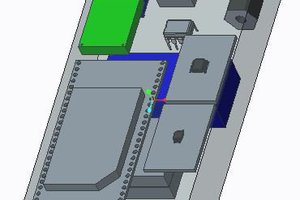

The Microchip PIC16F1459 that I am using includes a 10-bit analog-to-digital converter. By reading each of the 7 analog signals in turn, we can think of the whole thing as a two-dimensional array of seven bands, each containing a voltage value.

Frequency Response of the MSGEQ7:

signal[band number][10-bit voltage value].

The resulting numbers are a simplified visual of the full spectrum, such as those seen above.
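Reading the seven bands into an array might be sketched as below. The `msgeq7_hal_t` hooks and `msgeq7_read` are hypothetical names, not part of any Microchip library, and the reset/strobe sequence and delay values are assumptions that should be checked against the MSGEQ7 datasheet. The `sim_*` functions are a PC-side stand-in for the chip so the sketch can be run without hardware; they do not model the real part's timing.

```c
#include <stdint.h>

#define MSGEQ7_BANDS 7

/* Hypothetical hardware hooks. On a real PIC these would wrap the port
   latches, a delay routine and the ADC. */
typedef struct {
    void     (*set_reset)(int level);
    void     (*set_strobe)(int level);
    void     (*delay_us)(unsigned us);
    uint16_t (*read_adc)(void);          /* expected to return 0..1023 */
} msgeq7_hal_t;

/* Fills signal[0..6] with one 10-bit reading per band, lowest band first. */
void msgeq7_read(const msgeq7_hal_t *hal, uint16_t signal[MSGEQ7_BANDS])
{
    /* Pulse RESET with STROBE high to restart the band multiplexer. */
    hal->set_strobe(1);
    hal->set_reset(1);
    hal->delay_us(1);
    hal->set_reset(0);
    hal->delay_us(72);                   /* assumed spacing before first strobe */

    for (int band = 0; band < MSGEQ7_BANDS; band++) {
        hal->set_strobe(0);              /* step to the next band */
        hal->delay_us(36);               /* assumed output settling time */
        signal[band] = hal->read_adc() & 0x03FF;   /* keep 10 bits */
        hal->set_strobe(1);
        hal->delay_us(36);
    }
}

/* --- Simulated hardware so the sketch can run on a PC --- */
static const uint16_t sim_levels[MSGEQ7_BANDS] = {12, 40, 310, 900, 220, 35, 8};
static int sim_band = -1;                /* -1 means "just reset" */
static int sim_strobe = 1;

static void sim_set_reset(int level)
{
    if (level) {
        sim_band = -1;
    }
}

static void sim_set_strobe(int level)
{
    if (sim_strobe && !level) {          /* falling edge steps the stand-in */
        sim_band = (sim_band + 1) % MSGEQ7_BANDS;
    }
    sim_strobe = level;
}

static void sim_delay_us(unsigned us) { (void)us; }

static uint16_t sim_read_adc(void)
{
    return (sim_band < 0) ? 0 : sim_levels[sim_band];
}

static const msgeq7_hal_t sim_hal = {
    sim_set_reset, sim_set_strobe, sim_delay_us, sim_read_adc
};
```

Calling `msgeq7_read(&sim_hal, signal)` fills the array with the simulated levels. On the real board the same function would be handed a struct of PIC-specific hooks instead.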

We then convert these 7 values to their lowest common ratio before using the lookup table.
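One way to read "lowest common ratio" is to divide all seven values by their greatest common divisor, so that 50:100:150 and 200:400:600 both become 1:2:3. The sketch below does that. The rounding to a coarse `step` beforehand is an added assumption, included because raw ADC readings are noisy and would otherwise rarely share a divisor. `reduce_to_ratio` and `gcd_u16` are illustrative names.

```c
#include <stdint.h>

#define NUM_BANDS 7

/* Greatest common divisor (Euclid). gcd_u16(0, x) returns x. */
static uint16_t gcd_u16(uint16_t a, uint16_t b)
{
    while (b != 0) {
        uint16_t t = (uint16_t)(a % b);
        a = b;
        b = t;
    }
    return a;
}

/* Rounds each band to the nearest multiple of `step` (to absorb noise),
   then divides all bands by their greatest common divisor.
   `step` must be at least 1; a step of 1 leaves the readings unrounded. */
void reduce_to_ratio(const uint16_t in[NUM_BANDS], uint16_t step,
                     uint16_t out[NUM_BANDS])
{
    uint16_t g = 0;

    for (int b = 0; b < NUM_BANDS; b++) {
        uint32_t rounded = ((uint32_t)in[b] + step / 2u) / step;
        out[b] = (uint16_t)rounded;
        g = gcd_u16(g, out[b]);
    }

    if (g > 1) {                         /* g == 0 means every band was zero */
        for (int b = 0; b < NUM_BANDS; b++) {
            out[b] = (uint16_t)(out[b] / g);
        }
    }
}
```

With a step of 1, the readings {50, 100, 150, 200, 100, 50, 0} and the louder {200, 400, 600, 800, 400, 200, 0} both reduce to {1, 2, 3, 4, 2, 1, 0}. A fixed step is a crude noise filter; scaling every band relative to the loudest one would be another option.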

This gives you a huge variety of signatures from which to choose. In the initial design, the goal is to recognize common words and output the phonemes via USB to the host system.

This project came about as a component in P.A.L (https://hackaday.io/project/12383-pal-self-programming-ai-robot). From comments and questions, I have noticed that people have a hard time separating the various parts of the project. So much goes into designing and building an autonomous robot from scratch that I feel the best solution will be to break out each of the major systems into smaller project chunks for easier digestion.

This work is licensed under a Creative Commons Attribution-NonCommercial 3.0 United States License.

More to come! :)

ThunderSqueak

Sam Pullman

The Big One

Sensory and then a few other companies had VR (voice recognition) running on DSPs and 16-bit MCUs back around 2000. The drawback was that these systems were pretty sensitive to noise and didn't have a wide range of matching on a trained word. The best responses came when the known word list was short, around 5-10 words, and each word had some unique features that made it different enough from the others on the list.