I just wanted to know when to leave my house to catch the subway every morning. Transit data feeds are pretty heavy to pull down and parse on a microcontroller, but a full Raspberry Pi seemed like overkill. Instead, I wrote a small server that fetched what I needed and sent PNGs to the ESP32.

How things get rendered

Nowadays we send frames in a WebP container, so animation is fully supported. We also have a rendering library that lets you write simple Python to render things frame by frame. For example, here's a simple "clock" app:

    def main(config):
        # figure out the current time
        tz = config.get("tz", DEFAULT_TIMEZONE)
        now = time.now().in_location(tz)

        # build two frames so we have a blinking separator
        # between the hour and the minute
        formats = [
            "3:04 PM",
            "3 04 PM",
        ]

        return [
            build_frame(now.format(f))
            for f in formats
        ]

    def build_frame(text):
        # draw a simple frame with the given text
        return r.Frame(
            root = r.Box(
                width = r.width(),
                height = r.height(),
                child = r.Text(
                    color = "#fff",
                    font = r.fonts["6x13"],
                    content = text,
                    height = 10,
                ),
            ),
        )
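
To make the blinking trick concrete, here is roughly the same idea in plain Python rather than with the rendering library. It only produces the text of each frame, not pixels. The helper names `clock_text` and `clock_frames` are made up for this sketch, and the hour is formatted by hand to mimic the `"3:04 PM"` layout used in the app.

```python
from datetime import datetime


def clock_text(now: datetime, separator: str) -> str:
    """Format `now` like the "3:04 PM" layout, with a configurable separator."""
    hour = now.hour % 12 or 12  # 12-hour clock, no leading zero
    suffix = "AM" if now.hour < 12 else "PM"
    return f"{hour}{separator}{now.minute:02d} {suffix}"


def clock_frames(now: datetime) -> list[str]:
    """Return the text for two frames: separator shown, then separator hidden."""
    return [clock_text(now, sep) for sep in (":", " ")]


frames = clock_frames(datetime(2021, 1, 1, 15, 4))
print(frames)  # ['3:04 PM', '3 04 PM']
```

Playing those two frames in a loop is what makes the colon appear to blink.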

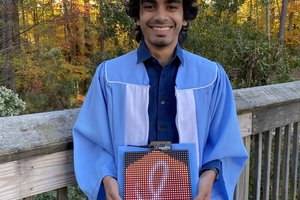

Actually to be entirely accurate, this looks like Python but is actually Starlark, which is a subset of Python. It's very fast to interpret, so we are able to embed it in a Go server. That way, each app can be rendered pretty quickly. This is what the basic clock app above looks like when it's rendered:

Your browser needs to support WebP to be able to see the image above.
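
Since frames travel in a WebP container, it can be handy to check whether a given file is a still or an animation. The sketch below reads the RIFF header and the animation bit of the `VP8X` chunk, as laid out in the WebP container format. It is plain Python for illustration, not code from our server. `is_animated_webp` and `fake_vp8x_header` are hypothetical helpers, and the 64x32 canvas in the fake header is an arbitrary value.

```python
import struct

ANIMATION_FLAG = 0x02  # animation bit in the VP8X flags byte


def is_animated_webp(data: bytes) -> bool:
    """Return True if the WebP header declares an animation."""
    if len(data) < 16 or data[0:4] != b"RIFF" or data[8:12] != b"WEBP":
        raise ValueError("not a WebP file")
    # Only the extended format (first chunk "VP8X") can carry animation.
    if data[12:16] != b"VP8X" or len(data) < 21:
        return False
    flags = data[20]  # first payload byte after the 8-byte chunk header
    return bool(flags & ANIMATION_FLAG)


def fake_vp8x_header(animated: bool) -> bytes:
    """Build a header-only WebP stub for testing (not a decodable image)."""
    flags = ANIMATION_FLAG if animated else 0x00
    # flags, 3 reserved bytes, then canvas width-1 and height-1 (24 bits each)
    payload = (
        bytes([flags, 0, 0, 0])
        + (63).to_bytes(3, "little")
        + (31).to_bytes(3, "little")
    )
    chunk = b"VP8X" + struct.pack("<I", len(payload)) + payload
    return b"RIFF" + struct.pack("<I", 4 + len(chunk)) + b"WEBP" + chunk


print(is_animated_webp(fake_vp8x_header(True)))   # True
print(is_animated_webp(fake_vp8x_header(False)))  # False
```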

Each ESP32 pings our backend server a few times a minute to tell us that it's online and report what it's currently displaying. If there are any new frames for it to render, they get pushed over.
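
The check-in loop could be handled on the server side roughly like this. It is a sketch in plain Python, not our actual backend. The `DeviceRegistry` class, the SHA-256 digest used to identify what a device is displaying, the 60-second online window, and handing new frames back as the check-in result are all assumptions made for illustration.

```python
import hashlib
import time
from dataclasses import dataclass, field
from typing import Optional


def frame_digest(webp_bytes: bytes) -> str:
    """Identify a rendered animation by the hash of its bytes (an assumption)."""
    return hashlib.sha256(webp_bytes).hexdigest()


@dataclass
class DeviceRegistry:
    online_window_s: float = 60.0  # hypothetical: offline after 60s of silence
    latest: dict = field(default_factory=dict)     # device_id -> newest WebP bytes
    last_seen: dict = field(default_factory=dict)  # device_id -> last check-in time

    def publish(self, device_id: str, webp_bytes: bytes) -> None:
        """Record freshly rendered frames for a device."""
        self.latest[device_id] = webp_bytes

    def check_in(self, device_id: str, displaying_digest: str,
                 now: Optional[float] = None) -> Optional[bytes]:
        """Mark the device as seen; return new frames only if it is out of date."""
        self.last_seen[device_id] = time.time() if now is None else now
        frames = self.latest.get(device_id)
        if frames is None or frame_digest(frames) == displaying_digest:
            return None  # nothing rendered yet, or the device is up to date
        return frames

    def is_online(self, device_id: str, now: Optional[float] = None) -> bool:
        """A device counts as online if it checked in recently enough."""
        now = time.time() if now is None else now
        seen = self.last_seen.get(device_id)
        return seen is not None and now - seen <= self.online_window_s


registry = DeviceRegistry()
registry.publish("kitchen", b"frame-v1")
print(registry.check_in("kitchen", displaying_digest=""))  # b'frame-v1'
```

Comparing a digest instead of the frames themselves keeps each check-in small, which matters when the client is a microcontroller pinging a few times a minute.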

Rohan Singh

Idrees Hassan

Fabian

Tim Wilkinson

Can you point me to the hardware list? I can find the matrix screen and the ESP32, but what is the board the ESP32 is attached to?