Yet another homebrew computer based on 65C02 CPU


Today I want to start with the latest update - and for a change, this will be a very optimistic one. After I shared my last entries on Reddit, I got a suggestion to try out a different PLD chip variant for address decoding, wait state generation and nRD/nWR stretching. Sure, I had used PLDs in the past for my breadboard build, but they never worked very well, causing all sorts of random bus issues. I put them in the components box and never looked back.

As it turns out, it pays off to revisit old findings when circumstances change.

I replaced the ATF22V10C-15 with an ATF22V10C-10 and strange things started happening. For one, I could finally remove the external circuitry for nRD/nWR stretching and use the PLD for this again. Second, it turned out that the PCB build is much more stable, so I started testing various oscillators, and this is where the amazing screenshot came from:

Yes, you are reading this correctly: DB6502 with OS/1 runs perfectly stable at 16MHz on the latest PCB. You can load programs, they all run just fine. MS BASIC works:

It runs MicroChess too, and for the first time it's pretty snappy:

So yeah, that is a small step for computing, but one giant leap for DB6502. After months of struggle I finally reached one of my goals (system clock running at 14MHz), and even went a few more steps further. Feels great, man!

Key takeaway: revisit old assumptions when circumstances change, share to get feedback and use the feedback to improve.

Setup details

So, how does it work? As I said, all that was required was to replace the 15ns PLD with a faster, 10ns one. Suddenly all the timing violations disappeared and I could remove the external circuitry for nRD/nWR stretching. Thanks to that, the PCB is running standalone again.

As for the wait state generator, I'm using no wait states for RAM access, one wait state for I/O operations and two wait states for ROM access (using the 150ns EEPROM variant). It's pretty neat setup, and it pushes all the components to their limits. The only thing that could work faster is the VIA chip - it's capable of running at full 14MHz speed, but since it's part of I/O range, it gets one wait state automatically.
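To put rough numbers on that wait state setup, here is a minimal sketch. It assumes that each wait state stretches the access by exactly one full clock cycle, and it ignores address setup, decoding delay and data setup time, so the results are an upper bound on what the chip really gets. The names `access_window_ns` and `WAIT_STATES` are made up for this example.

```python
# Wait states per region, as described in the text above.
WAIT_STATES = {"RAM": 0, "I/O": 1, "ROM": 2}


def access_window_ns(clock_mhz: float, wait_states: int) -> float:
    """Length of one bus access: the base cycle plus one cycle per wait state.

    Simplification: real timing budgets are shorter, because address setup,
    decoding and data setup times eat into this window.
    """
    cycle_ns = 1000.0 / clock_mhz
    return cycle_ns * (1 + wait_states)


def budget(clock_mhz: float) -> dict:
    """Access window per region in nanoseconds, rounded to 0.1ns."""
    return {
        region: round(access_window_ns(clock_mhz, ws), 1)
        for region, ws in WAIT_STATES.items()
    }


if __name__ == "__main__":
    for mhz in (14.0, 16.0):
        print(f"{mhz} MHz: {budget(mhz)}")
```

Under these assumptions two wait states at 16MHz give the ROM a window of three cycles, which is longer than the 150ns access time of the EEPROM, while RAM has to respond within a single cycle.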

There are also other things on the board I haven't tested fully yet, and the biggest one is memory banking. I have tested all the involved components, but to test drive it properly (and according to original design) I need to change the OS/1 code, memory mapping and other things, so it will not happen overnight. Still, based on what I have seen so far it should be working well.

Should be working well. Famous last words.

Is it all good then?

Well, obviously not. There were some issues with bus ownership handling by the supervisor chip, but this was simple software bug. I had some issues with using supervisor as a clock source, but again - this was resolved by software change.

While fixing those I have discovered something disturbing, and further investigation resulted in another interesting find.

The epic struggle for onboard AVR ISP port

As you probably don't remember, in my first DB6502 v2 prototype board I have messed up AVR ISP port layout. It was actually pretty silly mistake, but easy to make, so you can read about it here. It was fixed pretty easily by adding special adapter wires reversing port polarity, and it worked. Or, rather, I think it did. Thing is I haven't used it a lot, once you upload supervisor firmware (and it doesn't change frequently), you don't really need the port for anything else most of the time.

This time I made sure the port is oriented correctly and it was fine. When I discovered the bug mentioned in the previous section I simply uploaded new version of supervisor code and started using it, just as expected. Then I noticed another small bug, fixed it and tried uploading.

Nope.

Nope.

Nope.

All of a sudden the AVR ISP port stopped working. I unplugged power, waited a few seconds, powered it all up again and...

Last time I wrote about a simple software bug that caused a very scary-looking issue in my serial communication implementation. Before that I also covered the problem with the 15ns PLD and nRD/nWR stretching. There was another one I haven't even mentioned so far, but it scared the hell out of me: when I started up my latest DB6502 prototype board, the CPU just wouldn't work. Like at all. It was powered and all, but it was not doing anything. Pretty soon I realised that it was the RDY line that was permanently held down, and then I understood - the latest revision of the board doesn't use the open collector variant of the RDY circuit, but the parallel RC network for faster operation. All it took was a simple update of the PLD code from this:

RDY.OE = !RDY;

to this:

RDY.OE = 'b'1;

And now, instead of open collector, I had nice "drive always" RDY output from PLD. After fixing all the simulation errors I got it to work nicely.

Still, it's pretty frightening when you spend months on designing the board, pay for fabrication, spend couple of hours soldering and it just doesn't start at all.

Anyway, what I'm trying to say is that apparently my project is getting to the level of complexity where I have to be very, very careful with each step, because the number of moving parts is growing and making sense of it all can be difficult.

ROM flashing issue

Back to where I left off last time - I fixed simple OS/1 code issue and it should have worked. It didn't, because when I tried flashing ROM via the onboard AVR programmer it would just fail silently. Even worse - it failed, but claimed to have succeeded.

Now, I've been meaning to write about it for some time now, but haven't gotten around to it yet. See, there are two ways to write to the ROM memory: you can write a byte (or a page of 64 consecutive bytes at a time) and wait for 10ms, or you can perform the write and just wait for the chip to finish. These chips have this nice feature where after performing a write operation you can read any address and there will be two bits that you can use to determine if the previous write is complete. It makes the whole process much faster, because in most (if not all) cases you don't have to wait the full 10ms.

Thing is - it has actually already caused one issue in the past, so I wasn't surprised to see it happening again.

This is how the process starts. In this particular scenario, I'm running simple "check Software Data Protection status" code - it reads the first byte in ROM (0xA2) and writes back its bitwise complement (0x5D, that is 0xA2 XOR 0xFF). It will read the same address after the write operation is completed - and if it still holds the old value, it means that SDP is enabled, obviously. If it changes (so the SDP is disabled), it will overwrite it again with the initial value to preserve the original state.
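The check described above, together with the "wait until bit 6 stops toggling" polling, can be sketched roughly like this. It is not the actual AVR firmware: `MockEeprom` is a toy stand-in invented for the example (it is not a model of any specific chip), and all function names are hypothetical.

```python
class MockEeprom:
    """Toy stand-in for the ROM chip, just enough to exercise the polling."""

    def __init__(self, data, sdp_enabled, busy_reads=5):
        self.data = bytearray(data)
        self.sdp_enabled = sdp_enabled
        self.busy_reads = busy_reads  # how many reads the fake write cycle lasts
        self._busy = 0
        self._toggle = 0

    def write(self, addr, value):
        if self.sdp_enabled:
            return  # protected: the write is silently ignored
        self.data[addr] = value
        self._busy = self.busy_reads

    def read(self, addr):
        if self._busy > 0:
            # Write in progress: bit 6 flips on every consecutive read.
            self._busy -= 1
            self._toggle ^= 0x40
            return 0xBF | self._toggle
        return self.data[addr]


def wait_for_write(rom, addr, max_reads=1000):
    """Read until two consecutive reads agree on bit 6, return the last read."""
    previous = rom.read(addr)
    for _ in range(max_reads):
        current = rom.read(addr)
        if (previous ^ current) & 0x40 == 0:
            return current
        previous = current
    raise TimeoutError("write did not complete")


def sdp_is_enabled(rom, addr=0x0000):
    """Try to flip the byte at addr; if it does not change, SDP is on."""
    original = rom.read(addr)
    rom.write(addr, original ^ 0xFF)
    if wait_for_write(rom, addr) == original:
        return True
    # SDP is off: restore the original value to preserve the ROM contents.
    rom.write(addr, original)
    wait_for_write(rom, addr)
    return False
```

Note that the polling only works if every read seen by the chip is one the programmer intended, which is exactly the assumption that broke on the real board.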

All the r/W, CLK, BE signals are coming from AVR, the ROM_CS (at the bottom) is calculated by PLD as usual. Actually, RDY is also calculated by PLD and if you look closely, you will notice another problem, but I will deal with this one later.

Anyway, you can see the sequence working as expected: read 0xA2, write 0x5D, keep reading the same address until the two consecutive reads yield the same value on bit 6 - this indicates that the write operation is completed. Unfortunately, this is not what happened:

As you can see, there is something odd about the last read operation: the clock signal is clean, but there is something off about the nRD. It looks like the write operation is completed (two reads of 0xFF), but the next read also results in the same value (0xFF), where we expected either 0xA2 or 0x5D. You have probably guessed by now what happened, so let's confirm with a close-up:

Yep, during single clock cycle there were two read operations performed. Strangely enough, even though these pulses were very short (low pulse measured 25ns, high one 10ns), it was sufficient for the ROM chip to respond as if they were valid. Remember...

Last time I wrote about a strange problem caused by a bad solder joint. I fixed it, the signal got considerably better, but was it the end of all problems? Sure it wasn't, and the funny part is that I had to review some of the things I wrote last time, referring to them as "simple", but I'm getting ahead of myself.

The fun never stops

It's always one thing leading to another. Another simple question, to which there is never simple answer. After fixing the blinking LED issue I moved on to the next thing - strange copy-paste distortion. This was really tricky one, I knew about it for some time now, but I wasn't feeling up to the challenge until recently.

First: it only happened at higher frequencies. Running at 1-2MHz was bug-free. At 4MHz it was happening occasionally. At 8MHz - almost every time. It also required the SC26C92 and serial communication at 115200 baud minimum.

Second: it required very complex software setup. On one hand it was a good sign, suggesting software bug. On the other, it just made troubleshooting all that more difficult - testing produced plenty of bus data I had to analyse.

Third: it looked like very nasty hardware bug. Something like crosstalk between TX/RX channels or something even worse. Bottomless pit of despair or something similar.

It's no wonder I was intimidated by it. Alas, it was really fascinating!

Problem statement

Yeah, I know I haven't described the problem just yet. This one is too good to spoil the fun with premature... description. So get this: you have to boot OS/1, load Microsoft BASIC, go to this page to copy sample BASIC code I use to test the stability of the build. The program I'm using is very useful - while small, it is pretty complex as far as 6502 BASIC goes (using trigonometry functions and floating point numbers), and due to addressing scheme of MS BASIC it uses stack and distant RAM pages heavily switching between them - I used it to detect nRD/nWR timing violation. So the program is:

10 FOR A=0 TO 6.2 STEP 0.2

20 PRINT TAB(40+SIN(A)*20);"*"

30 NEXT A

It's important: you must not type it in, but copy from the page and paste into the serial terminal.

What you get in the terminal is this:

B( FOR A=0 TO 6.2 STEP 0.2

20 PRINT TAB(40+SIN(A)*20);"*"

30 NEXT A

Crap, so the data got distorted during copy/paste, right? Well, this is where it gets interesting:

OK

B( FOR A=0 TO 6.2 STEP 0.2

20 PRINT TAB(40+SIN(A)*20);"*"

30 NEXT A

LIST

10 FOR A=0 TO 6.2 STEP 0.2

20 PRINT TAB(40+SIN(A)*20);"*"

30 NEXT A

OK

Do I have your attention now?

As you can see - the code got distorted (and sometimes it was much, much worse than that), but when you LIST it, everything is fine. Oh, and in case you are wondering:

OK

RUN

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

*

OK

It runs well too.

OS/1 asynchronous I/O

This is where I should probably explain a bit more about the serial I/O implementation. It uses a fully asynchronous, interrupt-driven, buffered mechanism for sending and receiving data. This makes troubleshooting all the more difficult, because the data is moved around the system in a pretty non-deterministic way, but the general flow of a single character is the following:

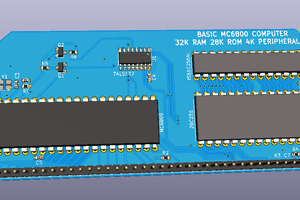
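For readers who haven't seen interrupt-driven buffered I/O before, the usual building block is a ring buffer shared between the interrupt handler and the main code. The sketch below is a generic illustration in Python, not the OS/1 implementation (which runs on the 6502); the class and function names are hypothetical.

```python
class RingBuffer:
    """Fixed-size FIFO; one slot is kept free to tell "full" from "empty"."""

    def __init__(self, size=256):
        self.buf = bytearray(size)
        self.head = 0  # next slot to write (moved by the producer)
        self.tail = 0  # next slot to read (moved by the consumer)

    def put(self, byte):
        """Store one byte; returns False when the buffer is full."""
        nxt = (self.head + 1) % len(self.buf)
        if nxt == self.tail:
            return False
        self.buf[self.head] = byte
        self.head = nxt
        return True

    def get(self):
        """Fetch the oldest byte, or None when the buffer is empty."""
        if self.head == self.tail:
            return None
        byte = self.buf[self.tail]
        self.tail = (self.tail + 1) % len(self.buf)
        return byte


rx_buffer = RingBuffer()


def on_rx_interrupt(received_byte):
    """Would be called from the UART interrupt: just queue the byte."""
    return rx_buffer.put(received_byte)


def read_char():
    """Would be called by the application: take the next queued byte, if any."""
    return rx_buffer.get()
```

The point is that the producer and the consumer run at unrelated moments, so the order in which things show up on the bus tells you very little about the order in which the application sees them.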

Recently I wrote about making PCB prototyping cheaper. Today I wanted to write about things I have learned from latest PCB prototype of DB6502 build. Let me start with the update first.

DB6502 PCB prototype update

The good news is that I haven't made any major mistakes in my latest design. Unlike the previous prototype, this one didn't require any extra wires nor cutting existing traces. AVRISP port is also oriented properly this time!

Does that mean everything is fine and dandy? Sadly, no. The latest prototype does serve its purpose nicely - I can proceed with testing some of my ideas and verify some assumptions. I can learn and improve, but the board, unfortunately, doesn't always work "standalone", without extra chips added to the breadboard next to it. The main achievement here is that I planned for such "failure" and made the board configurable - thanks to this flexibility I was able to spot and fix the problems that were blocking the development of the previous revision.

Let's talk about the problems and lessons learned then.

Problem 1: nRD/nWR signal stretching

In one of the previous entries I wrote about impact of introduction of wait states on 6502->8080 bus mapping. Basically, one of the issues with 6502 CPU is that it uses different encoding of read/write signals than the 8080 and derivatives. It might seem irrelevant for 6502 based build, but unfortunately, both your memory chips (RAM and ROM) and the SC26C92 UART interface are designed for 8080 bus. Instead of single r/W signal from 6502, you have nRD/nWR inputs on 8080-compatible devices.

Ben Eater uses simplest possible trick - ensures that nWR can be low only during high CPU cycle. While that works fine at slow speeds (and is generally accepted solution for most homebrew 6502 builds), it can cause issues when introducing wait states, because it causes write operations to happen twice (or more, depending on number of wait states).
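To see why this breaks with wait states, here is a small sketch of the "nWR may be low only while the clock is high" rule. It is a simplified model: it assumes the clock keeps toggling during wait states while r/W stays low for the whole stretched access, and the function names are made up for the example.

```python
def nrd(clk, rw):
    """Active-low read strobe: low only while clock is high and r/W is high."""
    return 0 if (clk == 1 and rw == 1) else 1


def nwr(clk, rw):
    """Active-low write strobe: low only while clock is high and r/W is low."""
    return 0 if (clk == 1 and rw == 0) else 1


def count_write_pulses(wait_states):
    """Count nWR low pulses during one write access stretched by wait states."""
    # Each cycle is a low clock phase followed by a high clock phase.
    clk_samples = [0, 1] * (1 + wait_states)
    pulses = 0
    previous = 1  # strobe idles high
    for clk in clk_samples:
        level = nwr(clk, 0)  # r/W held low: this is a write
        if previous == 1 and level == 0:
            pulses += 1
        previous = level
    return pulses


if __name__ == "__main__":
    for ws in range(3):
        print(ws, "wait states ->", count_write_pulses(ws), "write pulse(s)")
```

With no wait states there is exactly one write pulse; every added wait state adds one more, which is the repeated write described above.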

In my previous PCB revision I have already implemented Ben's algorithm in my PLD: nRD and nWR were calculated there, based on r/W signal and clock input. It was very elegant solution and it worked beautifully due to simplicity of the calculation. Unfortunately, when I added wait states to the equation things got messy, and calculation got just a little bit longer.

What you have to take into account is that your nWR/nRD signals must rise no more than 10ns after falling edge of your clock. According to 6502 datasheet this is how long the data/address lines are stable. If you wait longer, your address/data lines might start changing, resulting in bus corruption.

See tDHW above, and this is the actual figure:

The problem with nRD/nWR stretching is that the logic behind it is pretty complex in my case - five signals are included in computation: r/W, CLK, RDY, WS_DEBUG and WS_DISABLE. In general, the logic for the computation is the following (in order of priority):

Rules 3 and 4 can be illustrated using the following diagram:

There is a problem, however - we still have two more signals to go (WS_DEBUG and WS_DISABLE) to include in the computation, and remember, we need the result to be calculated in less than 10ns relative to falling clock edge. Even if we use AC gates, propagation delay is going to be too long. Inverter will take up to 7ns:

Then there is the AND gate (74AC08) with 7.5ns propagation delay:

Last but not least - OR gate (74AC32) with 7.5ns propagation delay:

It all sums up to 22ns pessimistically, and two...
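The arithmetic for that gate chain, as a quick sketch. The delays are the worst-case figures quoted above and the 10ns budget is the hold window mentioned earlier; the variable names are only for illustration.

```python
# Worst-case propagation delays quoted in the text, in nanoseconds.
GATE_DELAYS_NS = {
    "inverter": 7.0,
    "AND (74AC08)": 7.5,
    "OR (74AC32)": 7.5,
}

# Time after the falling clock edge in which nRD/nWR must rise.
BUDGET_NS = 10.0


def chain_delay_ns(delays):
    """Worst-case delay of gates connected in series is the sum of the parts."""
    return sum(delays.values())


total_ns = chain_delay_ns(GATE_DELAYS_NS)
slack_ns = BUDGET_NS - total_ns  # negative slack means the budget is exceeded

if __name__ == "__main__":
    print(f"total {total_ns}ns, slack {slack_ns}ns")
```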

Experimenting with digital electronics can be a lot of fun, but like any other hobby, it can cost you a small fortune. Especially at the beginning you will make mistakes and buy useless gear, but as time goes by you will learn to choose wisely. What does it mean? A different thing for each one of us, as we work in different environments and are in different financial and social situations. My work is mostly limited by space (no dedicated area to work, sharing a workbench between different hobbies and projects) and time (being the parent of a four year old, I can't really plan ahead a lot and have to be able to start/stop work on short notice). My solution to these limitations: using multiple PCB revisions instead of breadboards. Sure, it takes time to design them, but all you need is a laptop and you can work on the design anywhere around the house. A completed project is very durable; all you need to do is grab it from the drawer, plug it in and resume hacking - no need to spend a couple of hours looking for loose wires.

But then there is the cost factor: making PCB is not cheap, especially when you order one project at a time - shipping cost from China can be prohibitive. Another thing is at the beginning you tend to make your boards larger than they need to be, just because of lack of experience.

Don't worry, you will get better at it, and while you do, I want to share some tips/hints I have learned recently.

Tip 1: verify your assumptions!

I wouldn't surprise you if I said that using smaller, SMD packages can help you save some money - after all, the cost of PCB nowadays is mostly defined by the size of the board and most of the space is taken up by your ICs. That being said, there are other factors I was surprised to learn about.

Let's start with simple comparison. Below you will find two revisions of the same Z80 development board - first one (on the right) was made with thru-hole components only, the second uses surface mount to some extent (only the simplest packages - SOIC and 1206, and not for all parts). What is also important, the second board is significantly improved - it has added I/O decoder and all the SC26C92 GPIO input pins use pull-down resistors now for stability. Still, the second revision is considerably smaller:

For quite a while I would stick to DIP packages for a very simple reason: chips are expensive, and you have to recycle them. I would design my boards with tooled DIP sockets in mind, and only after several iterations I realized how wrong my assumptions were. Let's look at the costs, shall we?

Variant 1: DIP package

First, you need the chip for about 3,01 PLN, but also a socket, which costs 1,79 PLN. That's not all, you also have to consider the real estate that the package uses.

Variant 2: SOIC package

Second option is to go with the SOIC package - at 2,20 PLN it's considerably cheaper than the DIP counterpart. Actually, it costs only slightly more than the DIP socket itself. When you consider the extra saving from smaller PCB size required, it might get even cheaper.

Variant 3: TSSOP package

This one is even cheaper - only 2,04 PLN, and it uses very little space on the PCB, but TSSOP can be too hard for other people to solder by hand. I will elaborate below why it matters for the cost of your own fabrication.

One could argue that you can get cheaper socket, and it's true. There are non-tooled sockets for 0,50 PLN, but the point is still valid: sometimes using 0,50 USD socket to be able to recycle 0,60 USD chip is not that smart, and SOIC package makes for great introduction to SMD soldering.

Sure, there are other cases - EEPROM, SRAM or CPU cost significantly more than the socket they use, and in these cases savings on PCB real estate do not justify the expense unless you plan to desolder the chips afterwards.

Bottom line: before you start planning the layout of your board, shop for parts a while - it might turn out that small changes...

Last time I wrote about my experiments with common operational amplifier, but obviously, there was certain context to that, and I found the topic worthy of another post. Again, the inspiration came from this amazing video by George Foot, and this time I would like to tell you a story of building simple adjustable active load circuit, and how it allowed me to break Ohm's Law. Twice!

Let's start at the beginning: adjustable active load is a circuit/device that allows you to simulate certain (and configurable) load on your system. It is critical for testing any kind of power circuits, but the greatest value of it is in the learning opportunity. Also, please note: if you are to test any kind of commercial product design, you should probably buy professional grade device for several hundred dollars.

However, should you decide to build your own, you get to use different components and while troubleshooting any issues you come across, you get to understand them much better. It was fun, it was full of surprises and discoveries. Strongly recommended!

On basic level such a load circuit will consume certain amount of current and convert it into energy, probably heat. If the idea were to use constant, defined voltage and constant, defined current, you could just use power resistor - and that would be it. Want 500mA at 5V? Using Ohm's law you calculate the resistance as 10 Ohm. Power dissipation will be 2,5W so make sure your resistor can handle that.
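The fixed-resistor case above is just Ohm's law and the power formula; a minimal sketch (the function name is made up for the example):

```python
def load_resistor(voltage_v, current_a):
    """Return (resistance in ohms, dissipated power in watts) for a fixed load."""
    resistance_ohm = voltage_v / current_a  # R = V / I
    power_w = voltage_v * current_a         # P = V * I
    return resistance_ohm, power_w


if __name__ == "__main__":
    r, p = load_resistor(5.0, 0.5)
    print(f"{r} Ohm, {p} W")
```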

Things get more complicated when you want your load adjustable (in terms of current passing through) and working with different voltages. This is where a single resistor will not suffice. I used this blog entry as inspiration, and the schematic was as follows:

Let's explain how this circuit is supposed to work:

Now, what happens if the non-inverting input is higher than the 150mV measured on OpAmp inverting input? It will increase output voltage delivered to Q1 MOSFET gate and as a result, the MOSFET will pass more current through. This will keep happening until voltage drop on R4 is equal to the non-inverting input of OpAmp. Beautiful usage of the feedback loop!

What is also great - none of the input parameters to OpAmp depends on the input voltage. R4 voltage drop is calculated against ground, and RV1 output is always measured against the 2,5V reference voltage provided by U1 chip. Lovely, isn't it?

Theory and practice - in practice

The beautiful simplicity of this circuit could be matched only by its utter and complete failure to work. I built it on breadboard, provided 5,35V power from standard 2A charger and started testing. Yeah, it would work pretty well almost halfway through, but at around 600mA it wouldn't get higher anymore. I replaced the MOSFET, tried different variants of R2 biasing resistor. 660mA was the limit and that was that. Sure, I could live with the 600mA limitation, but I wanted to understand where it came from, especially that on paper it looked as if it should be able to pull full...

I believe I have said it before, but here goes again: one of the things I don't like about all these EE tutorials out there is that most of them are written by people who are actually pretty experienced. They don't remember what was hard to grasp at the beginning and keep using terms that are not that clear for people like myself that are new to the field. The same goes for most books, every single datasheet (for a good reason, really), and majority of videos.

Then there is this split between analog and digital electronics. I've been working way more with the latter, and it all seemed so easy. Sure, there were terms like "input capacitance" or "output impedance" that didn't mean anything to me, but hey, as long as you connect these chips like LEGO pieces, it doesn't seem to matter.

Time went by, and this ignorance was like an itch - something you can forget if you try hard, or have too much of a good time, but it comes back whenever things get rough. As it turns out, there are other people out there having the same problem (pretty decent understanding of digital, but much less of analog electronics), and sometimes they provide excellent inspiration. This great video by George Foot reminded me how badly I need to work on my understanding of the simplest circuits. If you haven't seen it yet, please do, it is really amazing: simple, clear explanation of complex concepts, made by someone who still remembers the difficult beginnings.

I decided to build the circuit myself, trying to understand each part of it the best I can. Since the OpAmp is a critical part of the circuit, I started there and it has been an amazing journey so far. So, although it's not related to my DB6502 project, I decided to write about it, because it's definitely something interesting to share.

Fun with OpAmps

OpAmps are virtually everywhere. People that know diode polarity without checking the datasheet probably know everything about them and think that simple two rules explain it all. For everyone.

I tried watching several videos and reading multiple articles, but they all seemed rather convoluted. One way or another, I decided to give it a try and build some basic circuits myself. I will document all the mistakes I made here, because I want to illustrate the learning process and show how an exercise as seemingly insignificant as this one can help you build a very strong understanding of, and intuition about, the basic rules of electric circuits. Let's get making then!

Simple comparator circuit

To follow along with my exercises, you will need the following:

It all starts simple - two voltage dividers, one of them being adjustable with the potentiometer:

With 5,35V power supply I'm using V2 is around 2,65V and V1 can alternate between 2,40V and 2,92V. I chose the values of the R1, R3 and RV1 resistors to make sure that V1 range is pretty small, around 500mV. After all, we are going to amplify that signal, right?
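If you want to play with the numbers, the adjustable divider can be sketched as below. Both the topology (a fixed resistor on top, the potentiometer in the middle with its wiper as the output, a fixed resistor at the bottom) and the resistor values in the usage line (47k, 10k, 47k) are assumptions chosen only because they land close to the range quoted above; they are not taken from the schematic.

```python
def divider_range(v_supply, r_top, r_pot, r_bottom):
    """Output range of a divider with a potentiometer between two resistors.

    Assumed topology: supply -> r_top -> r_pot -> r_bottom -> ground,
    with the output taken from the potentiometer wiper.
    """
    total = r_top + r_pot + r_bottom
    v_min = v_supply * r_bottom / total            # wiper at the bottom end
    v_max = v_supply * (r_bottom + r_pot) / total  # wiper at the top end
    return v_min, v_max


if __name__ == "__main__":
    # Hypothetical values, not the ones from the original circuit.
    v_min, v_max = divider_range(5.35, 47e3, 10e3, 47e3)
    print(f"V1 from {v_min:.2f}V to {v_max:.2f}V")
```

The useful takeaway is that the span depends only on the ratio of the potentiometer to the total resistance, so a small pot between two large resistors gives a narrow range around the midpoint.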

So, let's go ahead and test the output when turning the potentiometer. I use scope in slow "roll" mode to ensure that slow changes introduced that way are clearly visible on screen. Channel one (yellow) is connected to TP1 above and channel two (pink) to TP2.

As you can see, channel 1 oscillates just a little bit below and above the channel 2 - just as I wanted it to.

To use OpAmp as comparator we need to create something called open loop. In general, the way to use OpAmp is to feed back some of the output signal back into one of its inputs; this is called closed loop configuration, and it allows us to control the gain, or signal amplification factor. Sounds complicated? It did to me, so let's start without feedback loop, with something much simpler.

In the open loop configuration gain is virtually infinite, causing...

Unfortunately, recently I haven't been able to work on my project as much as I would like to, and the progress is much slower than I was used to. That being said, taking some time off can give you new perspective and lets you reconsider your assumptions, goals and plans. So, not all is lost...

At the moment I decided it's time for another PCB exercise - struggling with 14MHz experiments I kept asking myself whether the problems might be caused by poor connections on breadboard. I know, it seems far fetched and probably is not true, but still - the PCB version I'm using right now was supposed to be temporary and replaced down the line by next iteration while I sort out some of the design questions. I have, actually, so I should probably stick to the original plan.

Sure, making PCBs is not cheap and there is certain delay between order being placed and the board arriving, but given how slow my progress has been recently this is something I can live with. On the upside, I want to use this opportunity to test some new ideas, including some fixes to original design. Stay tuned, I should write about it soon.

For now - there was one issue I didn't want to keep open, and since I was about to make a PCB I needed to decide how to solve it. The issue was nothing new, it's something I mentioned previously: RDY pin on WDC65C02 is a bidirectional pin, so it requires careful handling to avoid damage to CPU.

Problem statement

As I wrote in the "Wait states explained" blog entry, the main issue with RDY pin on 65C02 is that it can work in both input and output modes. Most of the time you will be using only the input mode, supplying information to CPU about wait cycles (if that's not clear, please read the previous entry on the subject), and it's tempting to connect your wait states computation logic circuit output directly to the RDY pin. There is serious risk associated with this approach - if, for one reason or another, CPU executes WAI instruction, RDY pin will change mode to output and line will be pulled low (shorted to GND). At the same time your wait state circuit might be outputting high signal on the same line (shorting to VCC) and you will cause short between VCC and GND, resulting in high current being passed via the CPU. If you're lucky it will cause only high energy consumption, but if not, you might burn your CPU.

Sure, there are some standard approaches to the problem, and I will investigate them below. The thing is that the above section is not all. You also need to remember another thing: if you intend to use wait cycles it probably means you are planning to make your CPU run at higher frequency, giving you less time to spare for any of the solutions to work.

This is why I wanted to compare each of the approaches and discuss pros and cons of each. I hope it will help you choose the approach that is suitable to your build.

Experiment description

So, based on the problem statement above, the question I want to answer is: how do these approaches perform in a real scenario, given the following constraints:

Now, the most proper way to do it would be to test it against the actual 65C02 CPU, and I might actually do it in future, but at the moment I needed much simpler setup. I just wanted to test what is the fastest, energy efficient way of delivering RDY signal to receiver and compare some of the ideas I saw on 6502.org forums.

Test setup

As described in the paragraph above, this is what I needed: oscillating high/low CMOS signal exiting output of one gate being fed into input of another gate. This would resemble closely target situation where the producer of the signal is your wait state circuitry and the consumer...

This is the final part of the 14MHz series, but I'm sure it's not last entry about it. Sorry if it had been a bit stretched, and maybe too beginner-friendly, but I guess for all the experts out there it's all common knowledge. It's the beginners like myself that struggle with these things, so I'd rather write a bit more and make it more useful.

As I wrote in my first post on the subject, all the other issues are secondary, but the timing is the key in running 65C02 at full advertised speed. Bus translation is not very difficult, and documentation quality can be worked around with enough research (remember what that word meant before Google?), but both of these challenges are all the harder with tight timing of 14MHz.

Before we get to the point where I can talk about specifics, I would like to cover one more thing on the subject: what is the timing violation, and how can that affect your build. Again, sorry for going into such basic details, but it might not be obvious for everyone; it certainly wasn't obvious for me.

What happens if you violate chip timing?

We have all done that at some point, and what we know for sure is that it didn't cause the universe to implode. That's already good news, but in fact: where do all these timing restrictions come from and why? Well, our digital logic integrated circuits are not as digital as we would like them to be, nor are they logical. That part I'm sure of - integration and circuitry are still up for debate :)

What happens in a chip like a simple NAND gate is that whenever voltages change on input pins (which, by the way, is also not that very instant!), there is very long and complicated process where different components of the circuit start responding to changing input, and they all do it in very analogue and illogical way. Usually the dance of currents and voltages takes from several to several dozens of nanoseconds. Anything that happens in between is pretty much random, and as with anything random, you can never assume that your result is the proper, final one. It might, just as well, be just random value that resembles the final value closely enough.

What's even worse, this dance is not deterministic. It's not like the access will always take the same amount of time, because both internal and external conditions might change the duration of the process. This is why in datasheets you have pessimistic values for each operation, and while these are not very important at slow CPU speeds, the faster you go, the more it matters. Let's look at the NAND gate used in Ben's project:

Now, it's tempting to assume that the worst case possible scenario at room temperature should be around 15-18ns (taken from rows 4 and 7), but this assumption is valid only if you can guarantee that your operating voltage will not drop below 4.5V. Can you? Sure, we have decoupling caps for that purpose exactly, but still, keep that in mind, it might matter! If the voltage drops below 4.5V threshold, propagation delay will be longer and valid response will appear on output later. Will you notice? Not necessarily. You might be lucky to get response faster thanks to the random operation in the IC.

Still, these are pretty simple cases. When you consider more complex chips, it gets even worse. More moving parts means much more unexpected behaviour. This is especially interesting when reading from ROM, which will usually be the slowest part of your build (unless you connect an LCD directly to the bus, that is). Let's consider a simple example (assuming ROM starts at 0x8000):

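    LDA $2000       ; load a value from RAM
    CMP $9000       ; compare it against a value stored in ROM
    BNE not_equal   ; branch when the two values differ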

As you can see, I'm reading RAM at address 0x2000 and comparing it against the ROM value at 0x9000, jumping to the not_equal label when the values differ. How much can you violate the ROM timing before this code stops working? In other words, how far can you push that read beyond the ROM's limits before it fails?
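Before answering that, it helps to know how large the violation is in the first place. The sketch below estimates the margin of a ROM read for the setup described earlier (150ns EEPROM, 10ns PLD for address decoding). It is a simplified model: it assumes every wait state adds one full clock period, and the 30ns address setup and 10ns data setup figures are placeholder assumptions that should be replaced with values from your CPU datasheet.

```python
def read_window_ns(freq_mhz, wait_states, addr_setup_ns=30.0, data_setup_ns=10.0):
    """Time between a stable address and the moment the CPU latches data.

    Simplified model: every wait state adds one full clock period.
    addr_setup_ns and data_setup_ns are assumed CPU figures, not measured ones.
    """
    period = 1000.0 / freq_mhz
    return (1 + wait_states) * period - addr_setup_ns - data_setup_ns


def rom_margin_ns(freq_mhz, wait_states, rom_access_ns=150.0, decode_ns=10.0):
    """Positive: ROM data arrives in time. Negative: the read violates timing."""
    return read_window_ns(freq_mhz, wait_states) - decode_ns - rom_access_ns


for ws in (0, 1, 2):
    print(f"14 MHz, {ws} wait state(s): margin {rom_margin_ns(14, ws):+.1f} ns")
```

A negative margin does not mean the comparison fails every time. The rated access time is a pessimistic value, so the ROM may well respond sooner, which is exactly what makes such violations hard to spot.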

There are two things to consider here:

One thing that Ben doesn't seem to elaborate on in his videos is the interesting issue of CPU families and the resulting chip (in)compatibility. I came across this issue when I started using the SC26C92 Dual UART chip, but only much later, when I tried pushing the 6502 to the 14MHz limit, did I notice some of the resulting problems.

Let's start from the beginning, though. If you followed Ben's project closely, you might have noticed an important difference between IC interfaces. If not, you will notice it shortly...

Conveniently, it's very easy to hook up the 6522 chip to the 6502 CPU bus. No wonder - they belong to the same "family" of CPU and peripherals, and they use the following signals to synchronise operation:

If you check the ACIA chip (6551), you will notice it has the same set of control signals (with fewer registers, but the idea is the same):

Now, if you look at the ROM/RAM chips, these are a bit different:

As you can see, some details are similar (like the low active Chip Select signal), but part of the interface is different. Instead of a single Read/Write signal, there are two separate lines: low active Output Enable and low active Write Enable. There is no PHI2 signal, and as a result, to prevent accidental writes, Ben's video about RAM timing shows that it is necessary to ensure that a write operation is performed only during the high clock phase.

If you haven't played with any other CPU of the era (I hadn't at the time), you might accept the solution and move on without thinking too much about it. This is exactly what I did, and only after playing with higher frequencies (and, specifically, wait states) did I have to revisit my understanding of the subject. But I'm getting ahead of myself...

Side note: all the issues I ran into when trying to connect to this chip are the reason I started this blog in the first place - I wanted this documented somewhere. I will probably need to write more about the initialisation and similar details. Some day, I guess...

When you read the documentation for this specific chip, you will find that it uses an interface similar to the one used by ROM/RAM:

As you can see, there are the standard A0..A3 register select lines, the D0..D7 data bus and a low active IRQ output line. The first important difference is the RESET signal, which is high active, but this translation is easy - a single inverter or NAND gate will do. Chip Enable (another name for Chip Select) is predictably low active, and there are two signals to control read/write operation: low active RD (identical to low active OE) and low active WR.

Now, it might seem that connecting to this chip is pretty simple, and that you should do it in much the same way Ben connected the RAM:

This way we ensure that the RESET signal is RESB inverted and that RDN is low only when R/W is high (indicating a read operation), while WRN is low only when the clock is high.
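The wiring described above can be written down as a tiny truth-table model. This is only a sketch: the function name is made up for illustration, and the exact gating of WRN (low when the clock is high and R/W is low) is my assumption about the circuit, since the text only states that WRN is low only while the clock is high.

```python
def uart_control_signals(resb: int, rw: int, phi2: int) -> dict:
    """Model of the naive glue logic between the 6502 bus and the UART.

    Inputs are 0/1 logic levels: RESB (low active reset), R/W, PHI2 (clock).
    """
    reset = 1 - resb                               # RESET is RESB inverted
    rdn = 1 - rw                                   # RDN low whenever R/W is high
    wrn = 0 if (phi2 == 1 and rw == 0) else 1      # assumed: write gated by clock
    return {"RESET": reset, "RDN": rdn, "WRN": wrn}


# Print all combinations with the CPU out of reset (RESB high).
for rw in (0, 1):
    for phi2 in (0, 1):
        signals = uart_control_signals(resb=1, rw=rw, phi2=phi2)
        print(f"R/W={rw} PHI2={phi2} -> {signals}")
```

Note that in this model RDN does not depend on the clock at all: it is low during both clock phases of every read cycle.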

Unfortunately, there is an issue here: early in the clock cycle, while the address lines are still stabilising, you might get a random access to the UART chip (your address decoder might react to the unstable address and accidentally pull the UART CEN line low for just a couple of nanoseconds). At the same time RDN might be low, resulting in a read operation being executed.

Sure, the operation would not be valid - it would be at most 10ns long, which is way below the minimum pulse length, but this is actually not a good thing. It might cause issues with chip operation stability or worse.

How can anything be worse than the chip instability? Actually, as I have learned, certain operations can be executed,...


Hey, thanks for the information about the MCP2221A - I wasn't aware such a chip exists! I will get myself a few to play around with and see if they could be used in my design. As for the SMD components on my board - they are optional. If you wish to skip them, you can; all you have to do is use an external UART->USB connector.

As for the PCB, I'm not planning to sell them, they are all open hardware. You can order version 1 directly here:

https://www.pcbway.com/project/shareproject/65C02_Hobby_Computer.html

Or, if you have your own preferred manufacturer, here are the instructions for ordering it:

https://github.com/dbuchwald/6502/blob/master/Schematics/README.md#ordering-pcb

All the future revisions will also be open sourced, I promise :)

For some reason I can't reply to your latest post. If you do get one and run into any issues whatsoever, make sure to let me know, I will be happy to help!


This is a very nice project. I have a deep love for the 6502 CPU because it was what I started with, many years ago.

My only suggestion is to replace the FT230X with the MCP2221A and the micro USB connector with a mini USB one. That will rid you of the SMT parts altogether and will make the board much more consistent.

But nevertheless, great board! Are you planning to release PCBs for sale?