Check the logs for more details!

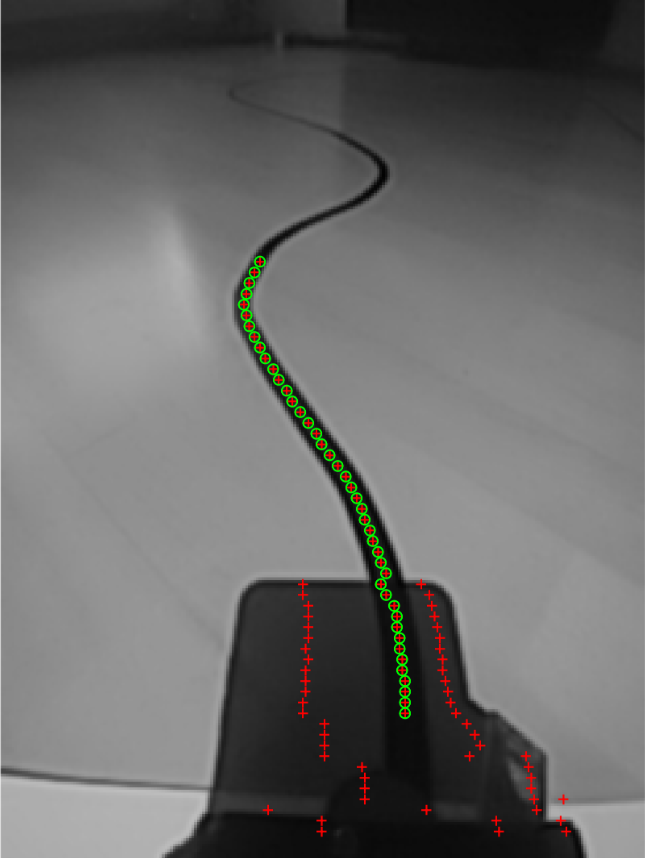

Camera-based sensors are currently in beta. The line sensor already works robustly across different surfaces and lighting conditions. For the position sensor (including velocity and heading), I'll show what you can currently expect.

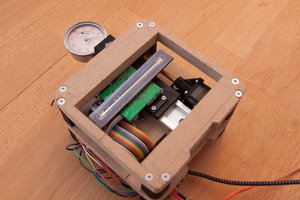

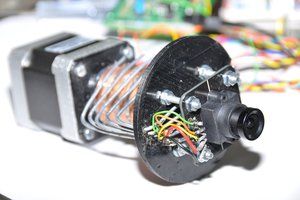

SerialSensor

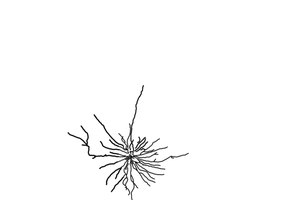

AIRPOCKET
