Why build my own?

Sure, there are dozens of existing robots. However, when I started choosing a robot for my summer camp, none of them seemed to be quite what I wanted. I wanted something:

- compact (and not too tall)

- programmable in Python, preferably with an existing library providing high-level functions such as "turn 90 degrees"

- with a decent collection of sensors: a reflectance array with at least 5 sensors (for line following), a distance sensor, and encoders on the motors. An IMU with sensor fusion to determine absolute orientation was highly desirable, as was an OLED or TFT display for messages

- neat looking, no spaghetti of loose wires

- extendable: it should be possible to attach additional electronics and mechanical attachments. In particular, I wanted to use the Huskylens camera by DFRobot, and forklift or grabber attachments

I looked at Pololu's Zumo and 3pi+, DFRobot's Maqueen Plus, and Pimoroni's Trilobot. None of them satisfied all my requirements, so I built my own. It took a while (designing the boards, writing the library, and more), but in the end it was worth it.

Key features

- Dimensions: Length: 12.4 cm; width: 13 cm; height: 4.8 cm

- Power: one or two 18650 Li-Ion batteries

- Wheels and motors: silicone tracks and 6V HP micro metal gearmotors with a 75:1 gear ratio, both by Pololu.

- Main controller: ESP32-S3 Feather board by Adafruit, which serves as the robot's brain. It is programmed by the user in CircuitPython, using a provided CircuitPython library. This library provides high-level commands such as "move forward by 30 cm".

- Electronics: a custom electronics board containing a secondary MCU (SAMD21) preprogrammed with firmware, which takes care of all low-level operations such as counting encoder pulses, controlling the motors with a closed-loop PID algorithm to maintain constant speed, and more.

- Included sensors and other electronics:

  - 240×135 color TFT display and 3 buttons for user interaction

  - Bottom-facing reflectance array with 7 sensors, for line following and similar tasks

  - Two front-facing distance sensors (VL53L0X laser time-of-flight sensors), for obstacle avoidance

  - A 6 DOF Inertial Measurement Unit (IMU), which can be used to determine the robot's orientation in space for precise navigation (the included firmware takes care of sensor fusion)

  - Two RGB LEDs for light indication and a buzzer for sound signals

  - Four RGBW LEDs used as headlights

- Expansion ports and connections:

  - Two ports for connecting servos

  - Two I2C ports, using Qwiic/Stemma QT connectors

  - Several available pin headers for connecting other electronics

- Yozh is compatible with mechanical attachments (grabber, forklift,…) by DFRobot.
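
To give a feel for what "closed-loop PID to maintain constant speed" means in the feature list above, here is a minimal sketch in plain Python. It is not the Yozh firmware (which runs on the SAMD21 and is not shown here); the class name `SpeedPID`, the gains, and the toy motor model in `simulate` are all made up for illustration.

```python
class SpeedPID:
    """Textbook PID controller for wheel speed (illustrative, not the Yozh firmware)."""

    def __init__(self, kp, ki, kd, out_limit=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_limit = out_limit      # motor power is clamped to [-limit, +limit]
        self.integral = 0.0
        self.prev_error = 0.0

    def update(self, target, measured, dt):
        """Return motor power given target and measured speed (encoder ticks/s)."""
        error = target - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(-self.out_limit, min(self.out_limit, out))


def simulate(target=300.0, steps=400, dt=0.01):
    """Run the controller against a toy motor model and return the final speed.

    Assumed model: speed relaxes toward power * max_speed with time constant tau.
    The numbers are invented, not measured on a real motor.
    """
    pid = SpeedPID(kp=0.004, ki=0.04, kd=0.0)
    max_speed = 600.0   # ticks/s at full power (assumption)
    tau = 0.1           # motor time constant in seconds (assumption)
    speed = 0.0
    for _ in range(steps):
        power = pid.update(target, speed, dt)
        speed += (power * max_speed - speed) * dt / tau
    return speed


if __name__ == "__main__":
    print("final speed:", simulate())
```

On the real robot, the measured speed would come from the encoder counts and the loop would run at a fixed rate in firmware; the user-facing library only needs to send the target speed.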

Full documentation is available at https://yozh.readthedocs.io/
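
As an illustration of how a 7-sensor reflectance array is typically used for line following, here is a short sketch that estimates where the line is under the robot and turns that into left/right motor powers. The function names, the reading convention (higher value = darker, ordered left to right), and the gains are assumptions made for this example; see the documentation linked above for the actual library calls.

```python
def line_position(readings):
    """Estimate the line offset from sensor readings.

    readings: sequence of non-negative values, left to right,
              higher = darker (assumed convention).
    Returns a value in [-1, 1] (negative = line is to the left),
    or None if no sensor sees the line.
    """
    total = sum(readings)
    if total == 0:
        return None
    center = (len(readings) - 1) / 2
    # Weighted average of sensor indices, shifted and scaled to [-1, 1]
    weighted = sum(i * r for i, r in enumerate(readings))
    return (weighted / total - center) / center


def steering(readings, base_power=0.4, gain=0.3):
    """Proportional steering: return (left_power, right_power)."""
    pos = line_position(readings)
    if pos is None:
        return 0.0, 0.0          # line lost: stop
    # Line to the right (pos > 0): speed up the left motor to turn right
    return base_power + gain * pos, base_power - gain * pos


if __name__ == "__main__":
    print(steering([0, 0, 0, 0, 1, 1, 0]))
```

A proportional controller like this is the simplest option; adding a derivative term usually reduces wobble at higher speeds.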

Applications

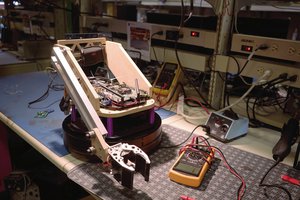

I used a previous version of Yozh for a robotics class at SigmaCamp in 2021-2023. Among the projects we did were a maze runner (find your way out of a maze) and a warehouse robot (pick up a container and deliver it to one of several locations depending on the tag on the container; we used the Huskylens for reading AprilTags). See this video:

What does Yozh mean?

Yozh (Еж) is Russian for hedgehog. I always liked them.

Are you planning to sell this?

I have thought about it, but they are not cheap: the sale price would be around $150. I am not sure how much demand there is for this, and I am not eager to run a crowdfunding campaign.

If you would like one, drop me a private message.

Alexander Kirillov

Spencer

Tom Quartararo

Jack Qiao