It is easy to build a very basic robot that runs the open source Robot Operating System (ROS): just put a motor controller on a Raspberry Pi and connect it to motors that turn wheels. However, such a basic robot has no sensors and is unaware of its surroundings. The power of the ROS ecosystem is in its vast library of software for intelligent robot behavior, and to tap into that power, a robot needs to provide the sensory input that software requires.

As the entry-level ROS platform, a TurtleBot offers two streams of sensor data:

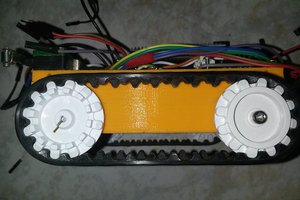

- Encoders that precisely count wheel rotation. We can estimate a robot's position if we know how far each wheel has traveled. This information is commonly presented under the name "/odom" (short for odometry).

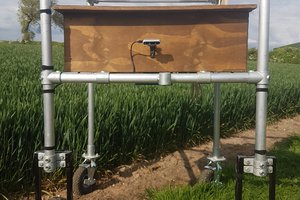

- A laser distance scanner (LDS) that continuously spins a LIDAR module to measure distance to obstacles all around the robot. This information is commonly presented under the name "/scan".
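To illustrate how encoder counts become a position estimate, here is a minimal dead-reckoning sketch for a two-wheel differential-drive robot. It is not Phoebe's actual code: the function name `update_pose` and the parameters `ticks_per_rev`, `wheel_radius`, and `wheel_separation` are hypothetical, and the numbers in the usage note are made up for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    x: float = 0.0      # meters
    y: float = 0.0      # meters
    theta: float = 0.0  # heading in radians, counterclockwise positive


def update_pose(pose, left_ticks, right_ticks,
                ticks_per_rev, wheel_radius, wheel_separation):
    """Return a new Pose after the wheels advanced by the given tick counts.

    All parameter names are illustrative assumptions, not taken from Phoebe.
    """
    # Convert encoder ticks to distance traveled by each wheel.
    meters_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = left_ticks * meters_per_tick
    d_right = right_ticks * meters_per_tick

    # Distance traveled by the robot center and change in heading.
    d_center = (d_left + d_right) / 2.0
    d_theta = (d_right - d_left) / wheel_separation

    # Move along the heading at the midpoint of the turn, a common
    # approximation when updates are small and frequent.
    mid_heading = pose.theta + d_theta / 2.0
    return Pose(
        x=pose.x + d_center * math.cos(mid_heading),
        y=pose.y + d_center * math.sin(mid_heading),
        theta=pose.theta + d_theta,
    )
```

Calling `update_pose(Pose(), 1000, 1000, ticks_per_rev=1000, wheel_radius=0.05, wheel_separation=0.3)` moves the estimate straight ahead by one wheel circumference. Small errors in each update accumulate over time, which is one reason odometry is usually combined with another sensor such as the laser scanner.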

Providing such sensor data in addition to motor control is the minimum requirement to access a range of publicly available algorithms, such as those that allow a robot to gain awareness of its surroundings. The task of mapping the robot's surroundings while locating the robot within that map is called simultaneous localization and mapping, or SLAM.
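As a rough illustration of why mapping needs both data streams, the sketch below projects one laser scan into world coordinates using a pose estimate. It is a simplified building block, not a SLAM algorithm. The names `ranges`, `angle_min`, `angle_increment`, `range_min`, and `range_max` mirror fields of the ROS `sensor_msgs/LaserScan` message; the function `scan_to_points` is hypothetical, and it assumes the scanner sits at the robot's center facing forward.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, pose,
                   range_min=0.0, range_max=float("inf")):
    """Convert scan ranges to (x, y) points in the world frame.

    pose is an (x, y, theta) tuple, for example from odometry.
    Assumes the scanner is mounted at the robot center with no offset.
    """
    x, y, theta = pose
    points = []
    for i, r in enumerate(ranges):
        # Skip readings that are invalid or outside the sensor's limits.
        if not math.isfinite(r) or r < range_min or r > range_max:
            continue
        # Beam angle in the world frame: robot heading plus beam angle.
        angle = theta + angle_min + i * angle_increment
        points.append((x + r * math.cos(angle), y + r * math.sin(angle)))
    return points
```

If the pose is wrong, every projected point lands in the wrong place, so a SLAM algorithm has to correct the pose and build the map at the same time.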

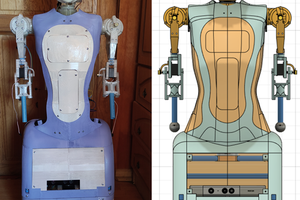

This project, Phoebe, is a DIY TurtleBot variant that provides odometry and laser scanning data similar enough to the official TurtleBot's that Phoebe can run all the same ROS autonomy modules. If all the parts had to be purchased, they would add up to around $200-$250. However, I chose these components because they were already on hand in my parts bin. For a more detailed breakdown of component costs, see the build log entry "Cost to Build Phoebe From Scratch".

This "Parts Bin TurtleBot", abbreviated "PB TB", is called "Phoebe", following the tradition set by R2-D2 ("Artoo").

Roger

Maximiliano Rojas

Audrey Robinel

Junglist

Joshua Elsdon

A very nice beginner project for ROS. You ended your project log a little over three years ago. Do you plan to update it anytime in the future?

Here is a bigger version of Phoebe: https://github.com/chrisl8/ArloBot which is based on the discontinued Parallax Arlo robot chassis.

I am presently thinking about building an "off the shelf" version of Arlo using a 14 in diameter chassis, a Rpi 4B/4GB, 2X7 RoboClaw, Pololu D37 motors with encoders, Slamtec A1M8 RPLidar, and Li-Ion batteries.

Regards,

TCIII