Problem

We are destroying our natural spaces with pollution. Our shorelines are littered with debris that washes ashore from the oceans.

When the 2011 earthquake and tsunami struck Japan, the resulting wreckage was washed into the ocean. Eight tonnes of tsunami debris washed onto the beaches of Vancouver Island in a single year. Source: Vancouver Aquarium

Plastics break down into ever smaller fragments and pose a threat to the local environment. Effects include:

• Birds ingesting plastics after mistaking them for food, leading to starvation

• Debris impeding animals from forming their habitats along the shoreline

• Toxins leaching into the sand and eventually into waterways

This pertains to GOAL 15: Life on Land of the UN Sustainable Development Goals

Solution

Our solution is a 3D-printed robot platform for debris retrieval, exploration, and mobile sensor monitoring.

It's more than just the robot. Two different groups of people, makers and environmentalists, work together and apply their skills to the problem. Volunteers help test the robot during Field Tests. Engaging with the public during these tests is valuable for hearing their perspective on how robots can help improve the environment. Read more about this in our project log about a Field Test with the Great Canadian Shoreline Cleanup.

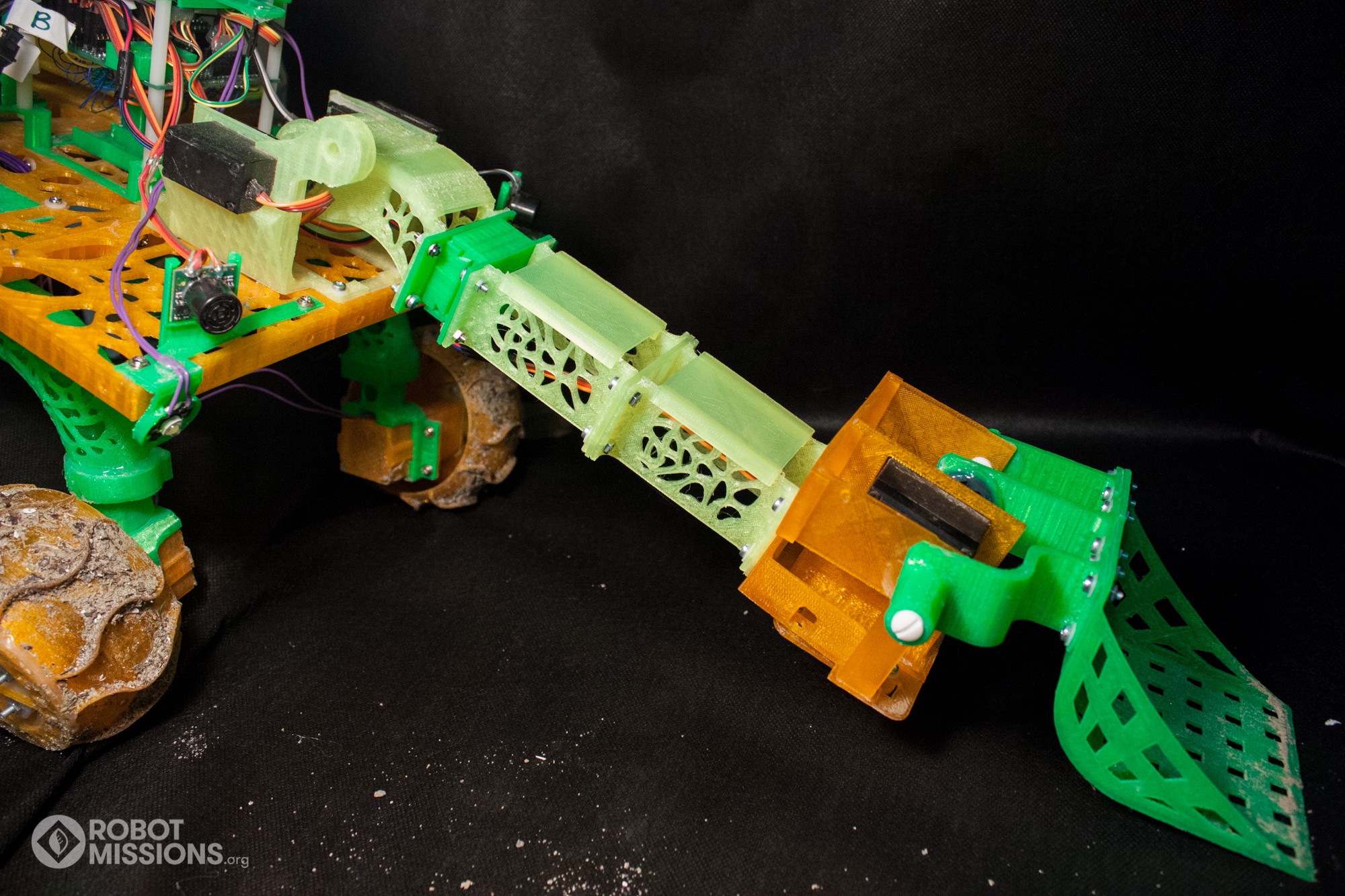

Debris retrieval focuses on tiny trash, 10-50 mm in size, such as a plastic bottle cap. The end-effector scoop on the robot has a filter that lets sand fall through while the debris remains. Using semi-autonomous behaviours, we expect the robot to be more efficient at collecting this size of debris than collecting by hand. Read more about our work on autonomous routines here, and on magnetometer navigation.

Deploying this as a tool for Citizen Science (see log here) enables us to navigate to less accessible areas. Thus far we have tested at distances up to 150 m. We have also used the GPS data from the robot to gain valuable insights about the location of debris. Read more about it here.

During our baseline testing, the manually controlled robot was able to collect and return 1 piece of debris in less than 90 seconds within an area of 2.2 square meters.

For a 100 square meter portion of Cherry Beach that we frequented for Field Tests, we estimate that 1-2 hours of robot operation would collect 75% of the debris in that area. Our goal is to achieve this through semi-autonomous operation, rather than manual control.
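As a rough sanity check on these figures, the arithmetic can be sketched in a few lines of Python. This is only an illustration: it assumes the manually controlled baseline pace of one piece per 90 seconds holds for the whole session, which the field data does not confirm, and the function names are hypothetical.

```python
def pieces_collected(duration_s: float, seconds_per_piece: float = 90.0) -> int:
    """Whole pieces collected in duration_s at a fixed pace.

    90 s per piece is the upper bound from the baseline test, so the
    result is a conservative (lower-bound) count.
    """
    return int(duration_s // seconds_per_piece)


def implied_total_pieces(collected: int, fraction_collected: float = 0.75) -> float:
    """Total pieces in the area if `collected` is `fraction_collected` of them."""
    return collected / fraction_collected


for hours in (1, 2):
    n = pieces_collected(hours * 3600)
    total = implied_total_pieces(n)
    print(f"{hours} h: {n} pieces collected, about {total:.0f} pieces in the area")
```

Under that assumption, 1-2 hours corresponds to 40-80 pieces, so a 75% collection rate would hold for an area containing roughly 53-107 pieces of debris.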

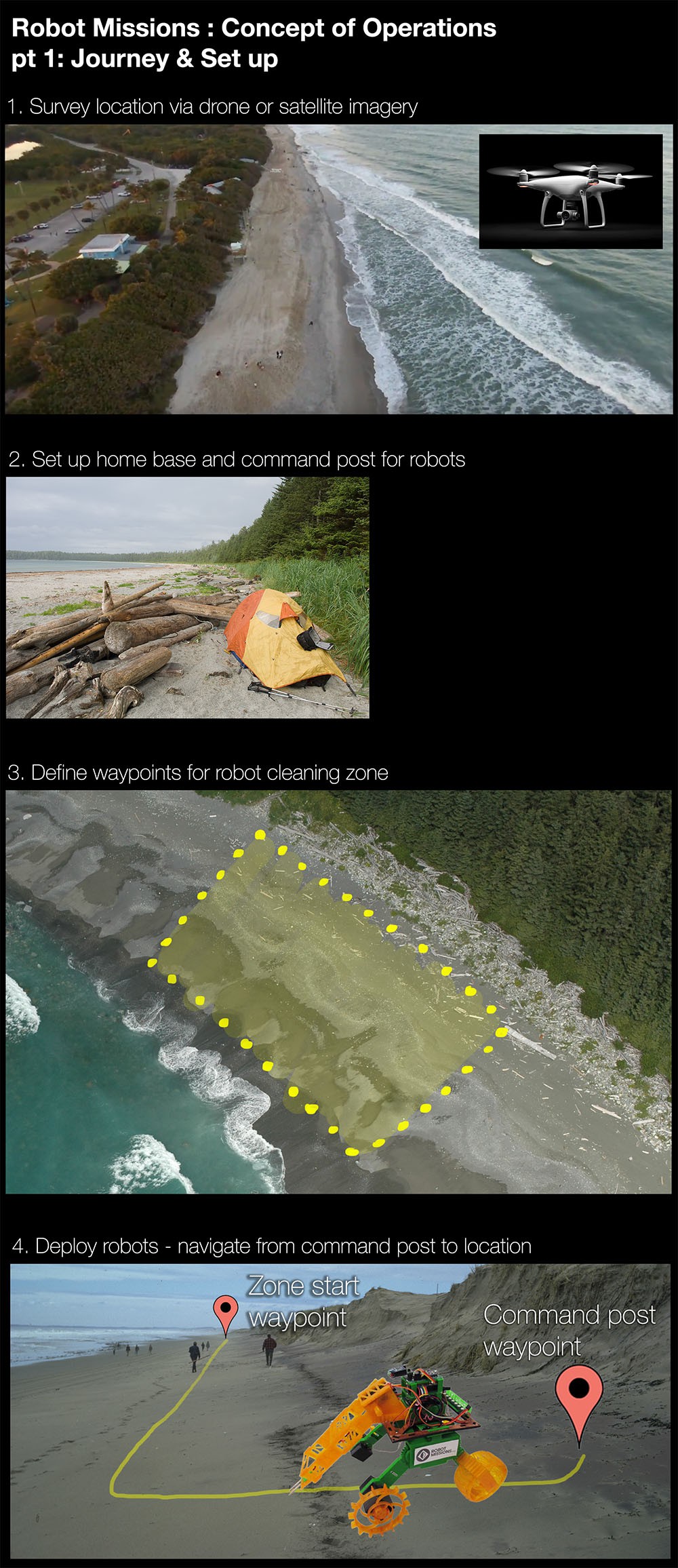

Concept of Operations

Note: The command post relays control commands, received remotely over the internet, to the robot locally. The home base tent is there in case a robot operator needs to be on site to monitor the initial deployment and perform research experiments.

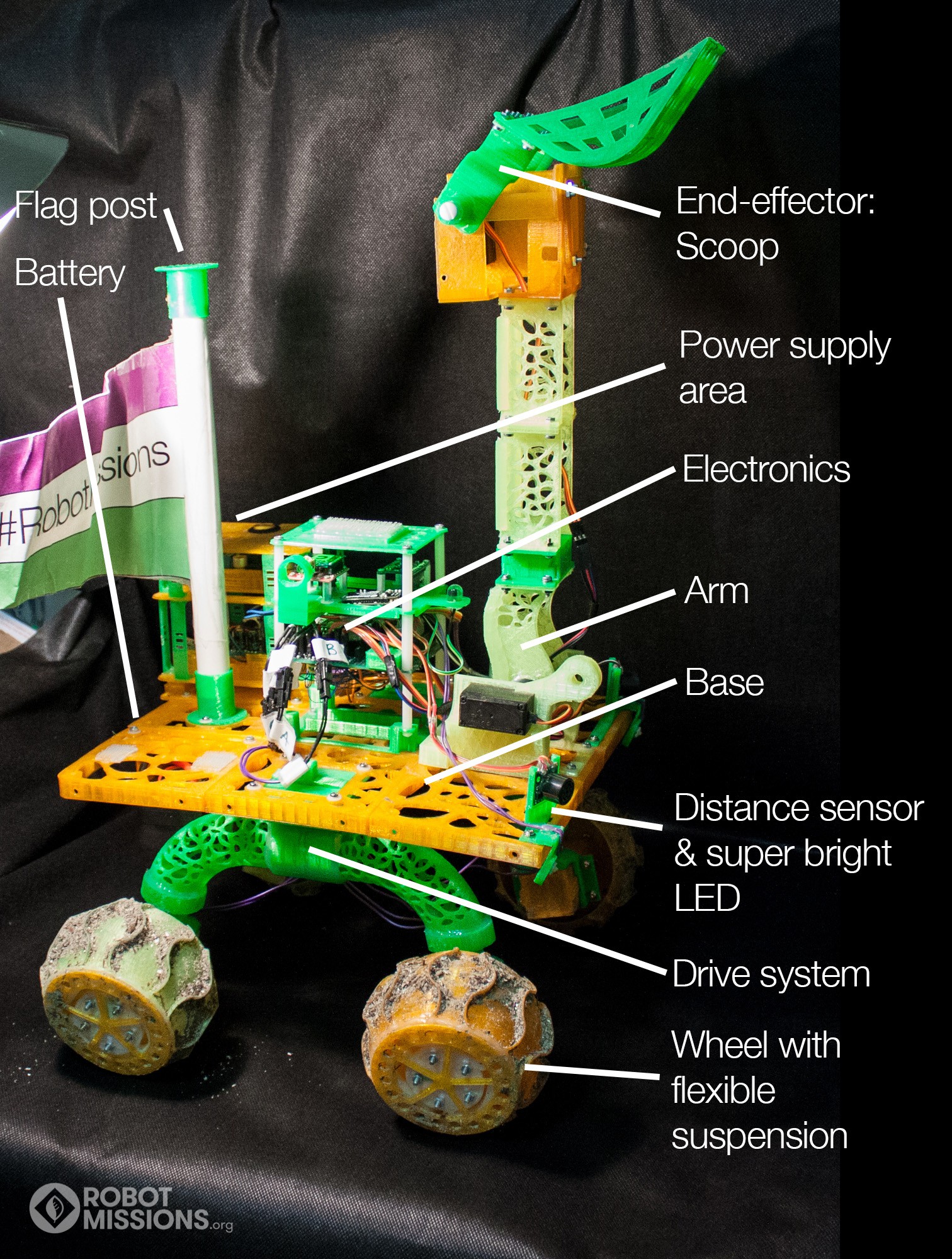

Robot Platform

The arm and drive system are detachable using sliding dovetails, which lock in place with a printed key piece.

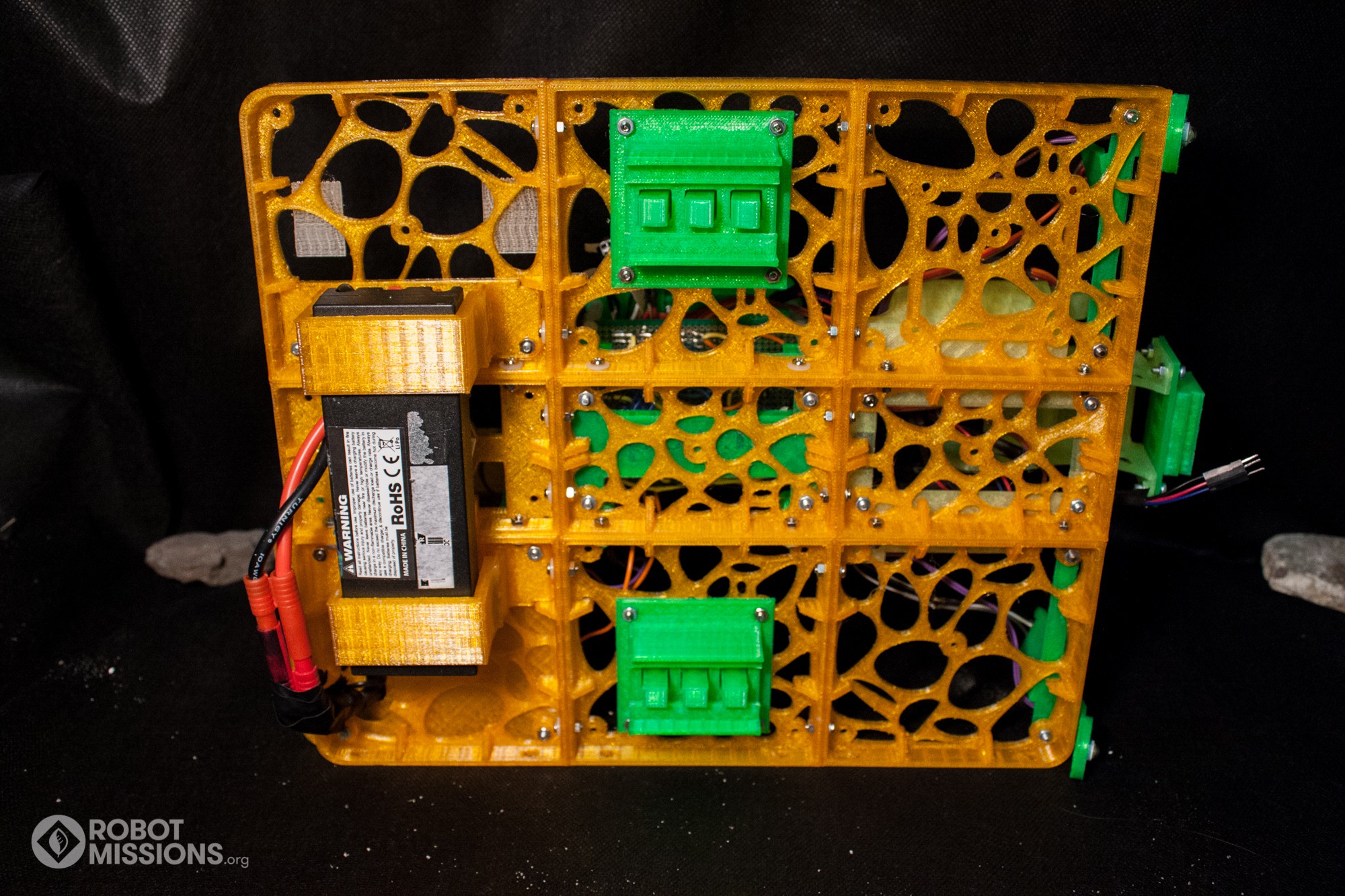

Base of the robot from underneath, without the arm and drive system connected. You can see the storage area for the battery on the left.

One of the challenges we encountered was reducing the amount of force applied to the motor, particularly when traversing rocky shorelines. See this project log for our force simulation. We designed a multi-material 3D-printed wheel assembly with a flexible piece for suspension. This means we can keep using the existing motors rather than buying more expensive ones, and the assembly can be replicated without shipping springs or other parts. Read more in this project log.

Code: Our code for the robot can be found in this repository. Specifically, here is the code for the Main Board, GPS Unit, and Controller.

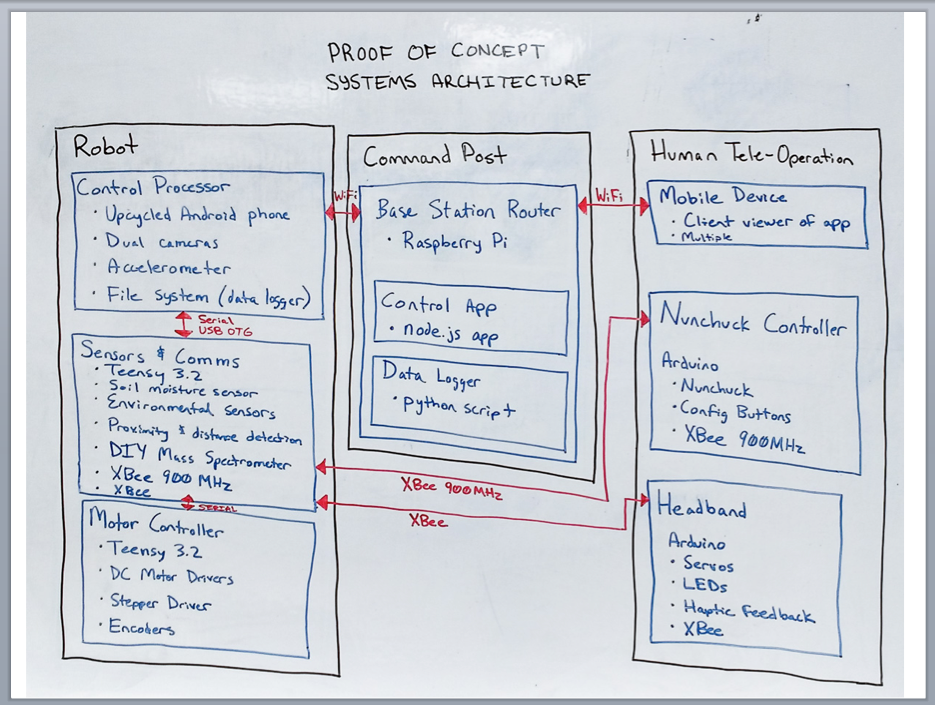

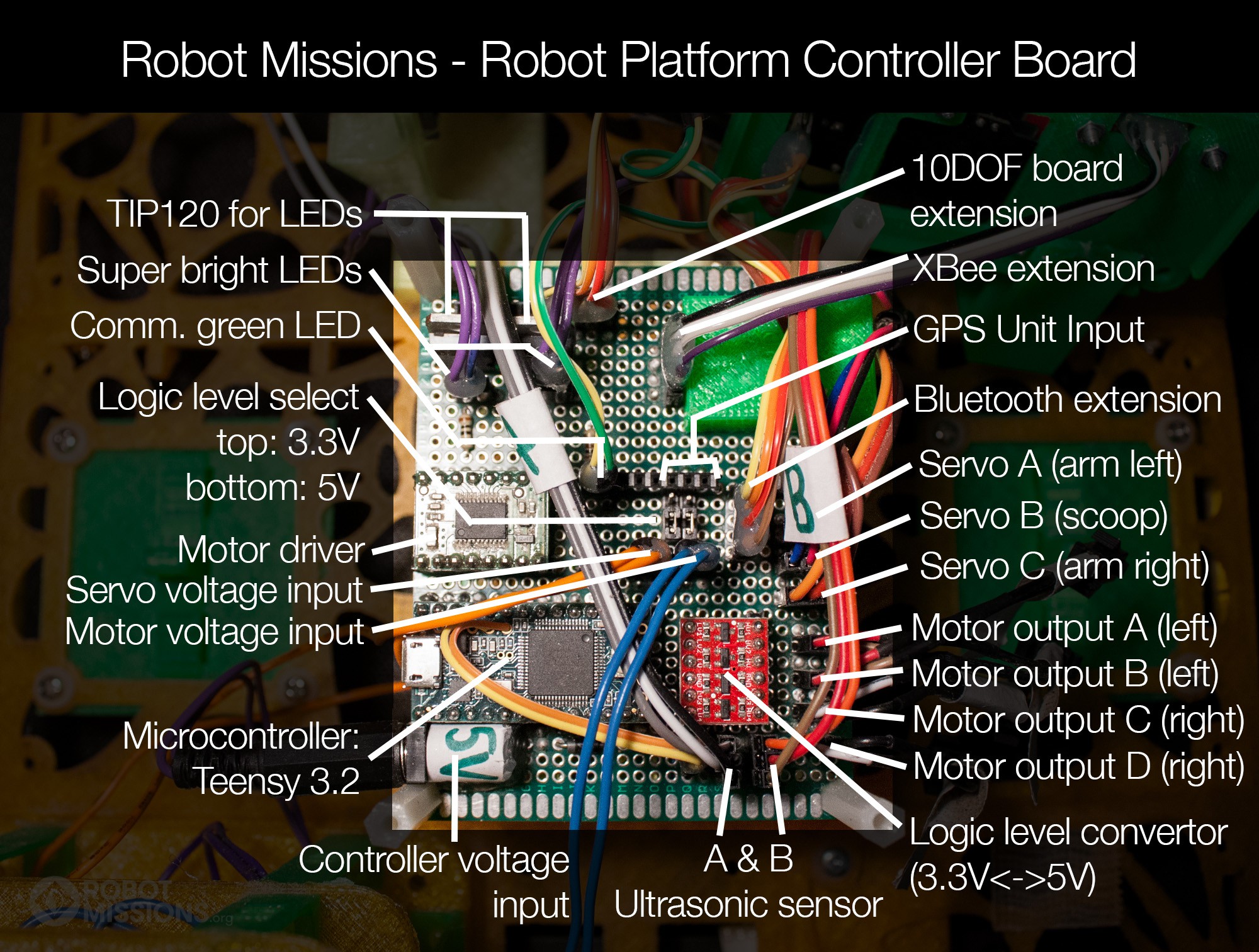

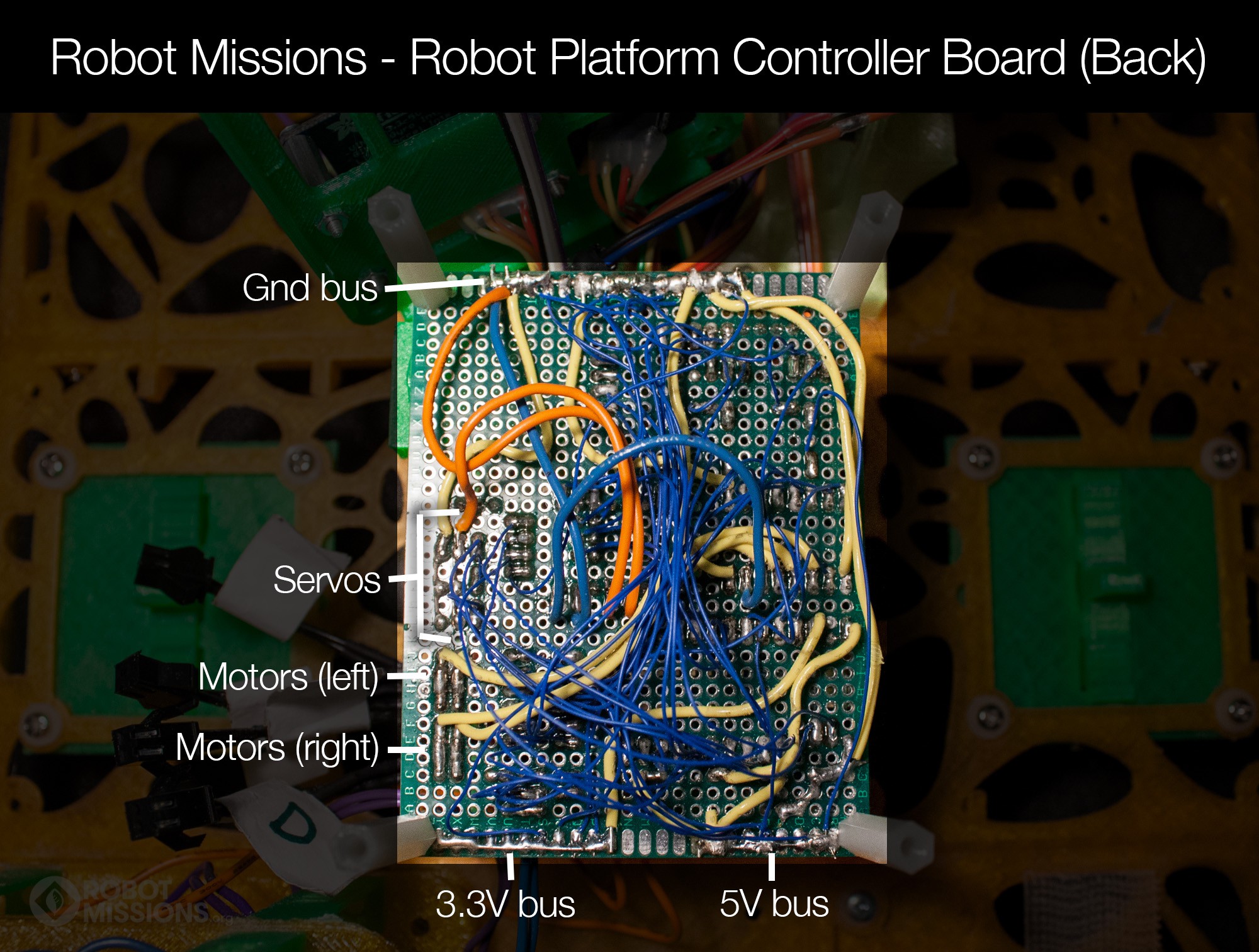

System

So far in the systems architecture, the Nunchuck Controller controls the Motor Controller onboard the robot, also known as the Main Board. To reduce complexity at this stage, the Main Board carries some of the sensors from the envisioned Sensors & Comms board. The GPS Unit communicates with the Main Board over serial to provide the robot's current latitude and longitude.

We are excited for the continued development of the system - in particular the command post, which will enhance the way we control the robot, make observations, and collect data.

Physics

To create better simulations of the robot driving on loose sand, wet sand, packed sand, and other terrain, we created two calculators for the mechanics of the robot.

1. Wheel forces and mass distribution

With this calculator we can estimate how much of the robot's mass rests on the front and back wheels, depending on where the center of mass is. For example, if the robot is lifting a heavy payload, this will change the force on the front wheels. View the calculations here and the spreadsheet here.
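The idea behind this kind of calculation can be sketched as a static moment balance about the rear axle. This is a minimal illustration, not the project's spreadsheet: it assumes level ground and a two-axle layout, and every mass and dimension in the example is a hypothetical placeholder.

```python
G = 9.81  # gravitational acceleration, m/s^2


def axle_loads(items, wheelbase_m):
    """Return (front_load_N, rear_load_N) for a robot at rest on level ground.

    items: list of (mass_kg, x_m) pairs, where x_m is the distance of that
           mass forward of the rear axle (may exceed the wheelbase for a
           payload held out in front of the robot).
    """
    total_weight = sum(m * G for m, _ in items)
    moment_about_rear = sum(m * G * x for m, x in items)
    front = moment_about_rear / wheelbase_m  # moment balance about rear axle
    rear = total_weight - front              # vertical force balance
    return front, rear


# Hypothetical numbers: a 3 kg robot with its center of mass mid-wheelbase,
# then the same robot holding a 0.5 kg payload 0.1 m ahead of the front axle.
WHEELBASE = 0.20
empty = axle_loads([(3.0, 0.10)], WHEELBASE)
loaded = axle_loads([(3.0, 0.10), (0.5, 0.30)], WHEELBASE)
print("empty  (front, rear) N:", empty)
print("loaded (front, rear) N:", loaded)
```

In this sketch a payload held ahead of the front axle raises the front load by more than its own weight and unloads the rear wheels, which is the effect described above.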

2. Motor force

With this calculator we can estimate the resultant force from the motor and whether the wheel will start to slip. The model places the wheel on an incline (a sand dune in real life) with some friction on the surface, and drives the wheel at the rated motor torque, which can be adjusted. View the calculations here and the spreadsheet here.
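A simplified version of this check can be written with a Coulomb friction model. The sketch below is an assumption-laden illustration rather than the project's calculator: it treats the surface as rigid with a single friction coefficient (real sand also deforms and sinks), and the function name, parameters, and example values are all hypothetical.

```python
import math
from typing import NamedTuple

G = 9.81  # gravitational acceleration, m/s^2


class WheelResult(NamedTuple):
    drive_force_n: float  # force the motor could apply at the contact patch
    max_traction_n: float  # friction limit, mu * normal force
    slips: bool            # True if the drive force exceeds the friction limit
    net_force_n: float     # usable force minus the downslope weight component


def wheel_on_incline(torque_nm, wheel_radius_m, load_kg, incline_deg, mu):
    """Estimate wheel slip and net upslope force for one driven wheel."""
    theta = math.radians(incline_deg)
    drive_force = torque_nm / wheel_radius_m
    normal_force = load_kg * G * math.cos(theta)
    max_traction = mu * normal_force
    slips = drive_force > max_traction
    usable_force = min(drive_force, max_traction)
    net_force = usable_force - load_kg * G * math.sin(theta)
    return WheelResult(drive_force, max_traction, slips, net_force)


# Hypothetical values: 0.5 N*m at the wheel, 50 mm wheel radius, 1 kg carried
# by this wheel, a 15 degree slope, and a guessed friction coefficient of 0.6.
print(wheel_on_incline(0.5, 0.05, 1.0, 15.0, 0.6))
```

Adjusting `torque_nm` mirrors adjusting the rated motor torque in the description above; a negative `net_force_n` means the wheel cannot climb the slope under these assumptions.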

Electronics

In lieu of schematics at this stage of the prototype, we have documented the pinouts for the boards. Please see Robot Platform Pin Assignments v1.0.xlsx in the Files section.

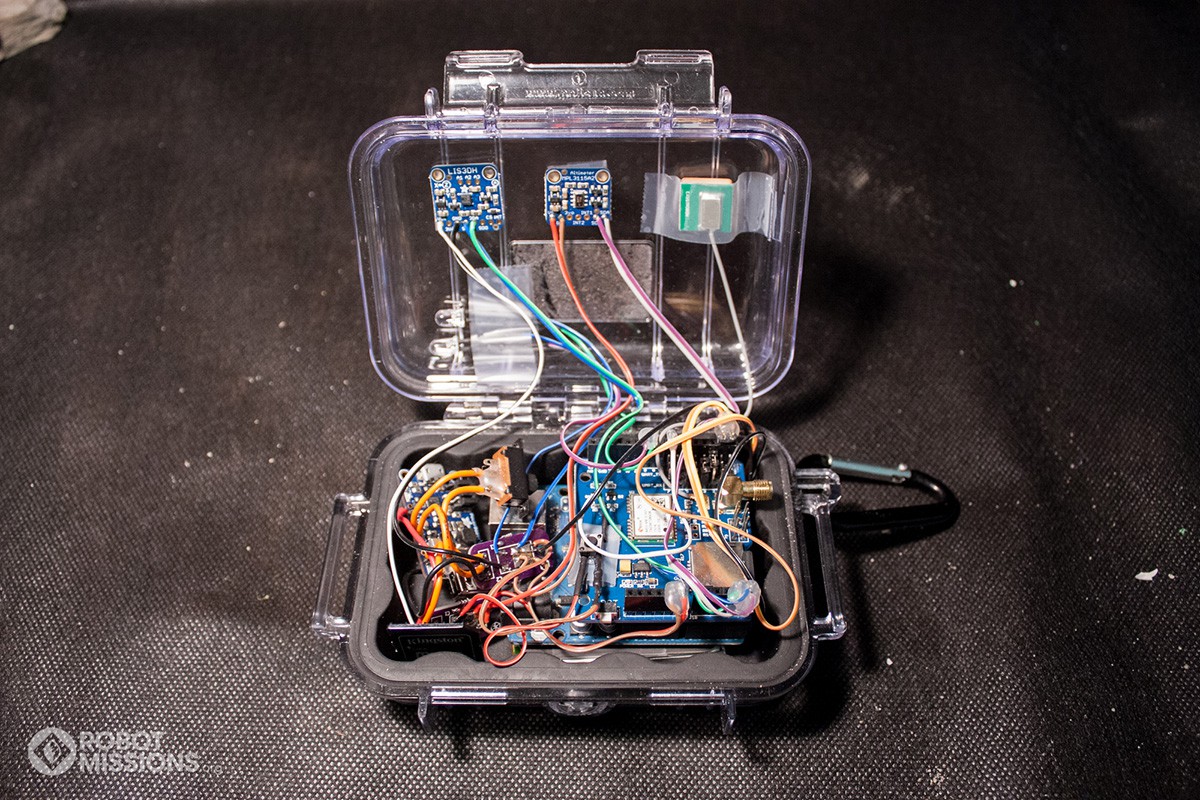

GPS Unit

The GPS Unit is a standalone module housed in a hard, water-resistant case, and attaches to the base of the robot using dual lock. This modularity allows the GPS Unit to be taken on other adventures as well, such as on a stand-up paddleboard. A connector from the unit to the robot feeds it the current latitude and longitude needed for navigating to a waypoint. The coordinates are logged every 2-5 seconds to a file on the microSD card.
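To show how a latitude and longitude pair can be turned into navigation inputs, here is a minimal sketch that computes the distance and initial compass bearing from the robot's position to a waypoint using the haversine formula. It is written in Python for readability and is not the code running on the robot; the function name and the spherical-Earth radius are assumptions made for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, spherical approximation


def distance_and_bearing(lat1, lon1, lat2, lon2):
    """Return (distance_m, bearing_deg) from point 1 to point 2.

    Inputs are decimal degrees. The bearing is the initial heading,
    measured clockwise from true north in the range [0, 360).
    """
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)

    # Haversine great-circle distance
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    distance = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

    # Initial bearing toward the waypoint
    y = math.sin(dlmb) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    bearing = (math.degrees(math.atan2(y, x)) + 360.0) % 360.0
    return distance, bearing


# One degree of longitude along the equator, heading due east.
print(distance_and_bearing(0.0, 0.0, 0.0, 1.0))
```

A robot could compare this bearing against a magnetometer heading to decide which way to turn, keeping in mind that a compass reads magnetic north rather than true north.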

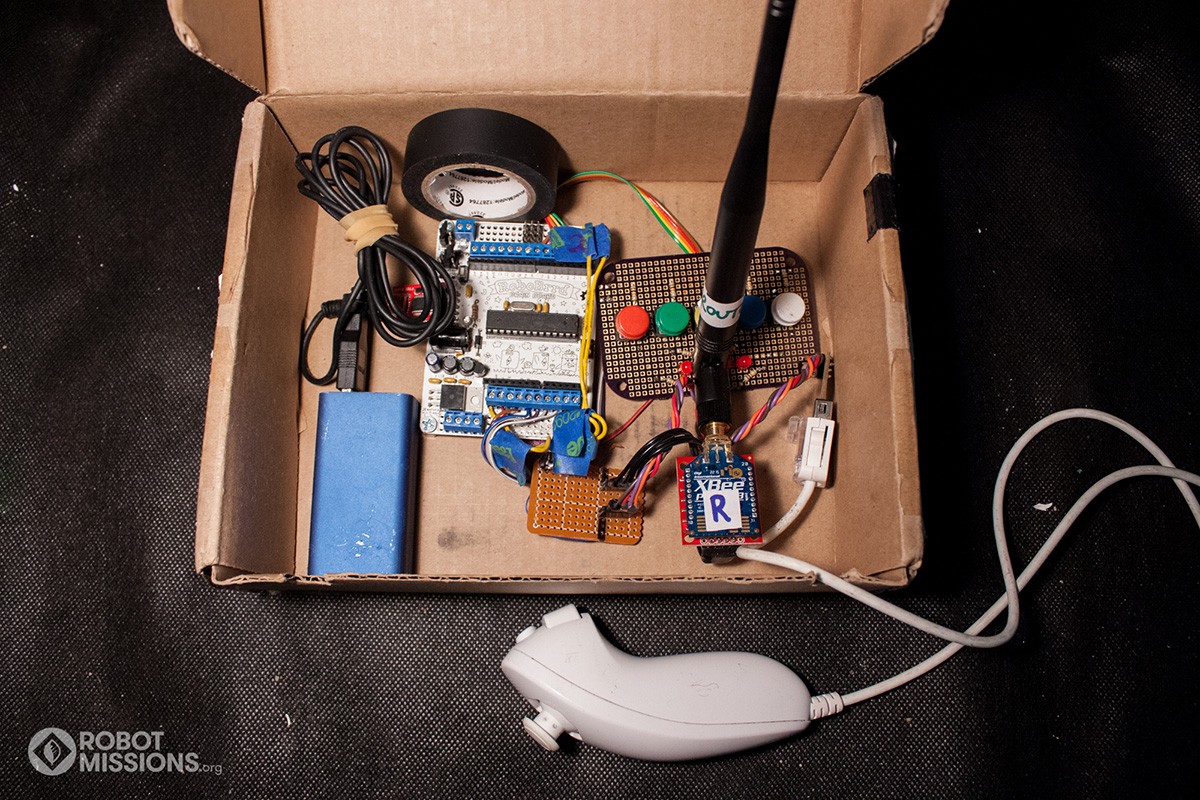

Control Box

The control box is used to operate the robot remotely. The XBee radio gives us long range: it is rated to 1 km, and we have tested it at 150 m. See here for the project log. There are 5 buttons for advanced functions. The control box uses an off-the-shelf "Wii Nunchuck" (video game controller) that can be used one-handed and has two buttons, which control the arm and scoop pitch. These are housed in a cardboard box, where the extra space comes in quite handy in the field for storing debris or the phones used for remote camera viewing. Our current setup uses a derivative of the "RoboBrrd Brain Board", an ATmega328-based Arduino.

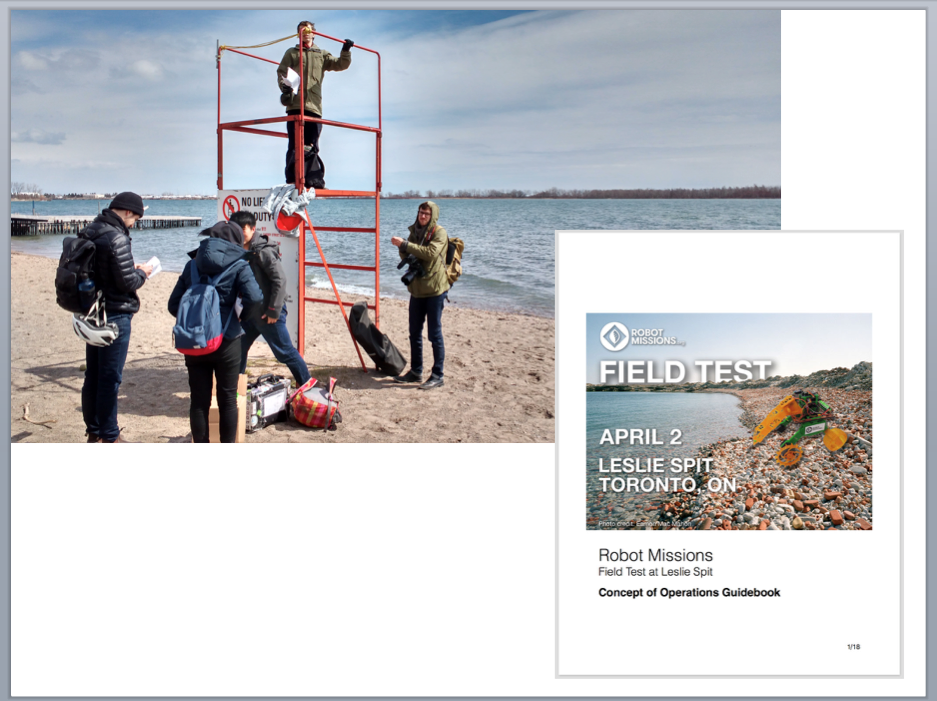

Field Tests

We embark on Field Tests to test the robot in the environment. What works in theory might not work in reality! We continuously iterate based on our observations.

The crew members get a guidebook at the beginning of the Field Test that details the subteam roles, tasks, and observation prompts.

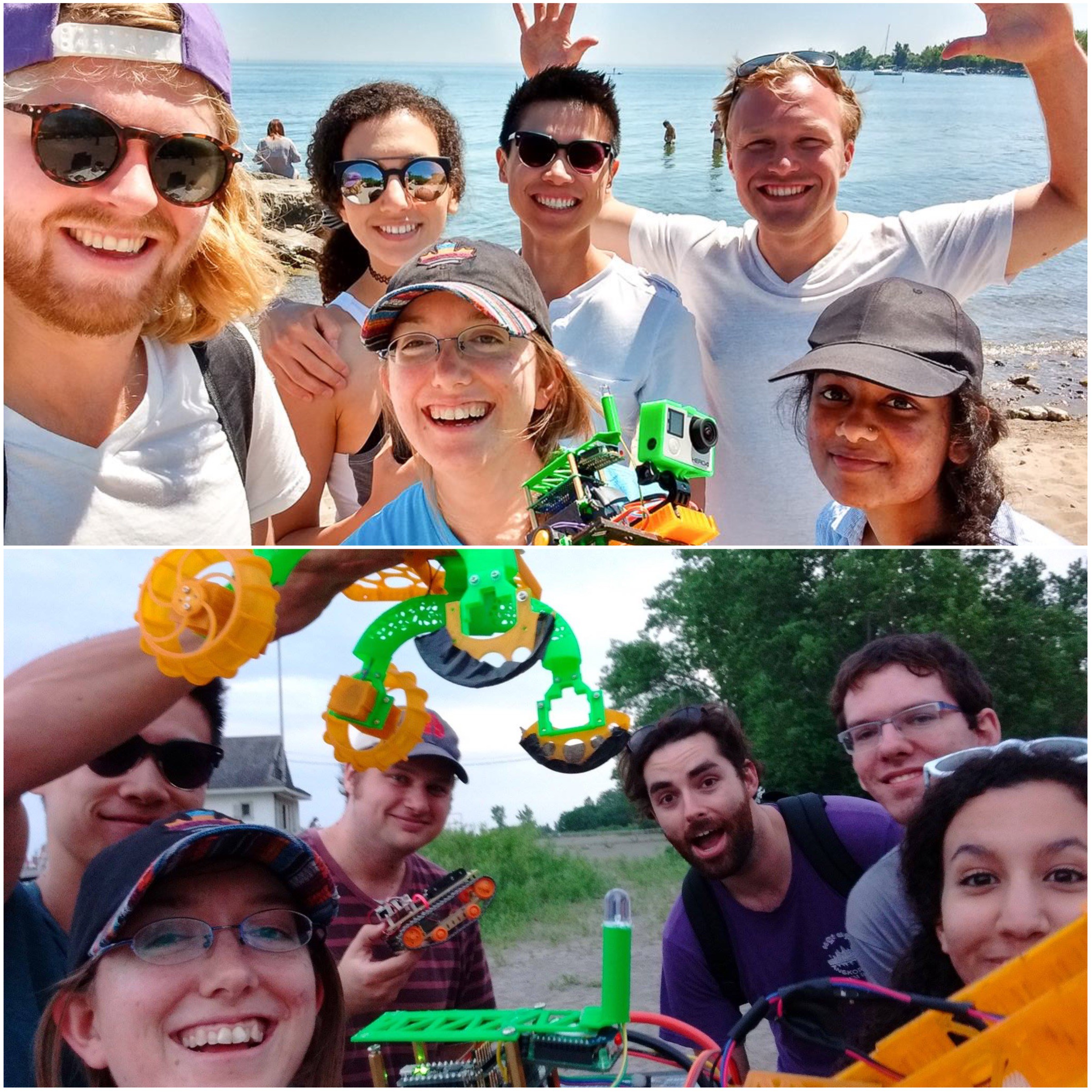

We celebrate with the Field Test crew group photo, including the robot!

Workshops

We run workshops to start people thinking about robotics in a different way:

• Who / what the robot is helping

• Outcome of the robot task

• How the robot does it

Scaling the Impact

Our direction is to create new chapters of Robot Missions in other communities. They will be able to apply the robot to a challenge they find locally, and develop new modules that can be shared globally online.

An ideal starting line for replication is through the Fab Lab network. The initial 5 Fab Lab candidate locations are:

Protecting Nature

Debris collected during Field Tests. Read this project log about the nurdles we found, and about finding a fish with the robot.

We have the responsibility to protect our environment, and we must convert this into action. Together, by developing new tools such as robots, we can reverse our damage and enable nature to thrive. We work towards this with Robot Missions, at the intersection of technology and nature.

Robot protecting a small ladybug

EK
