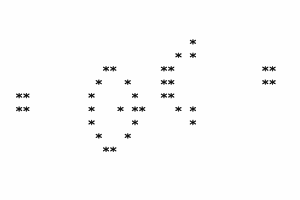

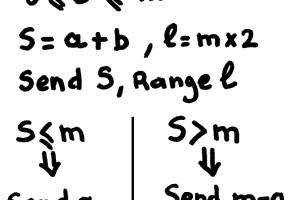

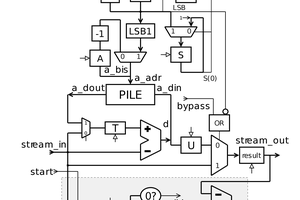

A µδ CODEC adds only one XOR gate to the critical datapath of a circuit that computes the simultaneous sum and difference of two integer numbers. The transform is perfectly bit-reversible and the reverse transform is almost identical to the classic version.

The two results (µ and δ) are each as large as the original numbers, whereas a classical sum and difference expands the data by 2 bits (one extra bit per result), even though the results can only occupy 1/4 of that enlarged coding space.
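The 1/4 figure can be checked by brute force. The sketch below is only an illustration, and the function name `classic_sum_diff_usage` is hypothetical: it enumerates every pair of n-bit unsigned integers, collects the distinct (sum, difference) pairs, and compares that count with the number of codes available when each result is stored on n+1 bits.

```python
def classic_sum_diff_usage(n=4):
    """Count the distinct (sum, difference) pairs of two n-bit unsigned
    integers and the size of the space needed to store them classically."""
    values = range(1 << n)
    # Each (a, b) yields a unique (a + b, a - b), so this set has 2**(2n) items.
    pairs = {(a + b, a - b) for a in values for b in values}
    # A classical circuit stores both results on n+1 bits: 2n+2 bits in total.
    coding_space = 1 << (2 * (n + 1))
    return len(pairs), coding_space


used, available = classic_sum_diff_usage(4)
print(used, available, used / available)
```

For n = 4 this gives 256 used codes out of 1024, which is the 1/4 ratio mentioned above. The waste comes from the sum and the difference always sharing the same parity, and from the range of one result shrinking as the magnitude of the other grows.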

The results are distorted: the MSB of µ gets disturbed by the sign of δ, which is simply truncated. The bet is that this distortion is not critical for certain types of lossless compression CODECs, while reducing the size and power consumption of hardware circuits.
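The description above can be turned into a small software model. This is a minimal sketch reconstructed from the text, not the author's reference implementation: it assumes unsigned n-bit inputs, that µ is the sum with its LSB dropped (the LSB is redundant because it equals the LSB of δ), and that the decoder detects a flipped MSB with a range test. The names `mu_delta_encode` and `mu_delta_decode` are hypothetical.

```python
def mu_delta_encode(a, b, n=8):
    """Encode two n-bit unsigned integers into (mu, delta), n bits each."""
    mask = (1 << n) - 1
    s = a + b              # classic sum, n+1 bits
    d = a - b              # classic difference, signed
    delta = d & mask       # the sign bit of the difference is truncated
    mu = s >> 1            # drop the LSB: it always equals the LSB of delta
    if d < 0:
        mu ^= 1 << (n - 1)  # the single XOR: the sign of d flips the MSB of mu
    return mu, delta


def mu_delta_decode(mu, delta, n=8):
    """Recover (a, b) from (mu, delta). Assumption: a range test finds the sign."""
    half_lo = delta >> 1           # floor(delta / 2)
    half_hi = delta - half_lo      # ceil(delta / 2)
    if half_lo <= mu <= (1 << n) - 1 - half_hi:
        d = delta                  # mu is a plausible average: d was >= 0
    else:
        d = delta - (1 << n)       # otherwise d was negative,
        mu ^= 1 << (n - 1)         # so undo the MSB flip
    s = 2 * mu + (delta & 1)       # restore the dropped LSB of the sum
    return (s + d) // 2, (s - d) // 2   # classic reverse transform


print(mu_delta_encode(164, 36))    # (100, 128)
print(mu_delta_encode(36, 164))    # (228, 128)
print(mu_delta_decode(228, 128))   # (36, 164)
```

The two example pairs share the same truncated δ (128) and the same plain average (100); only the flipped MSB of µ tells them apart. In this model the range test works because, for a given δ, the values of µ reachable with a non-negative difference and the flipped values produced by a negative difference never overlap, so every (µ, δ) pair maps back to exactly one (a, b).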

Logs:

1. First publication

2. X+X = 2X

3. Another addition-based transform

Yann Guidon / YGDES

The founding article will be published on French newsstands in a few days :-) I can't wait!