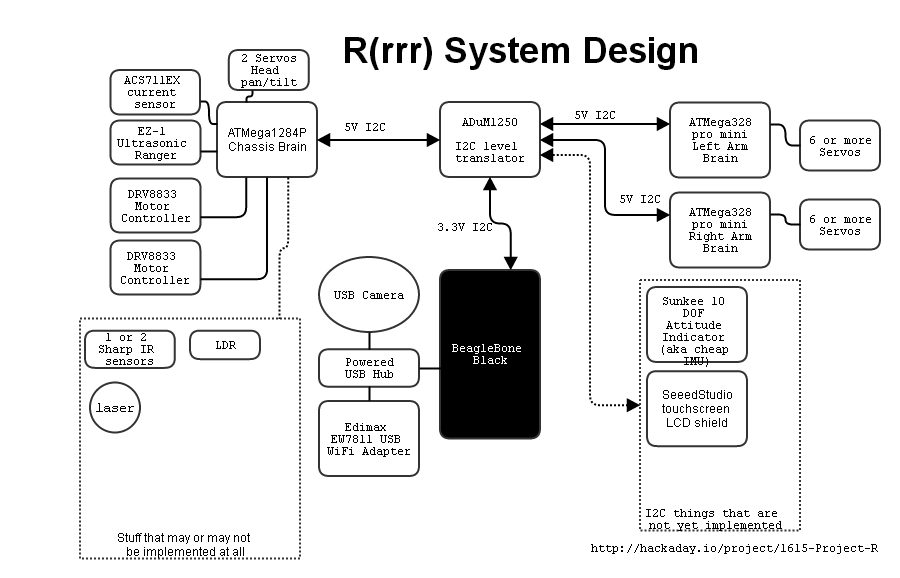

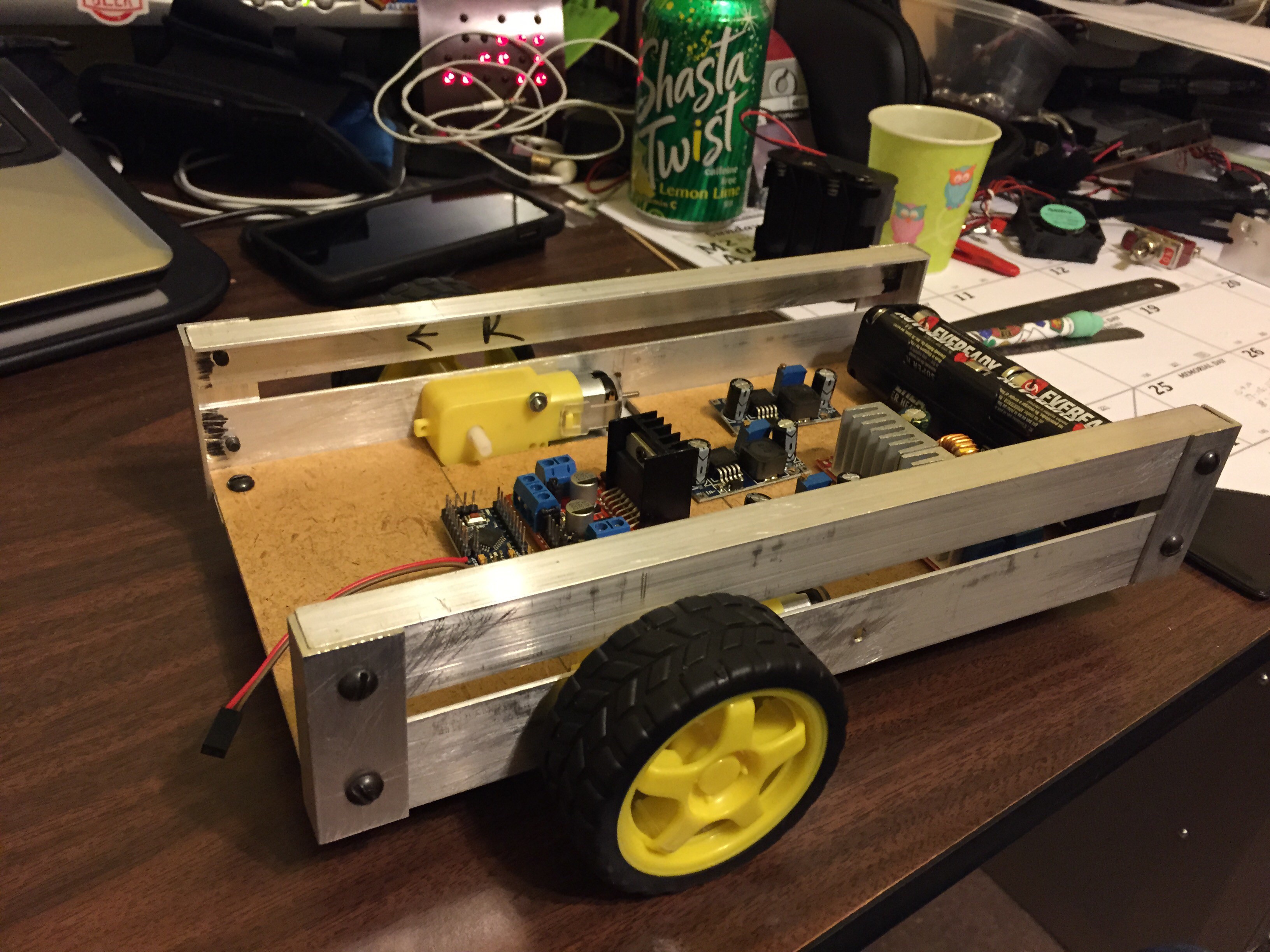

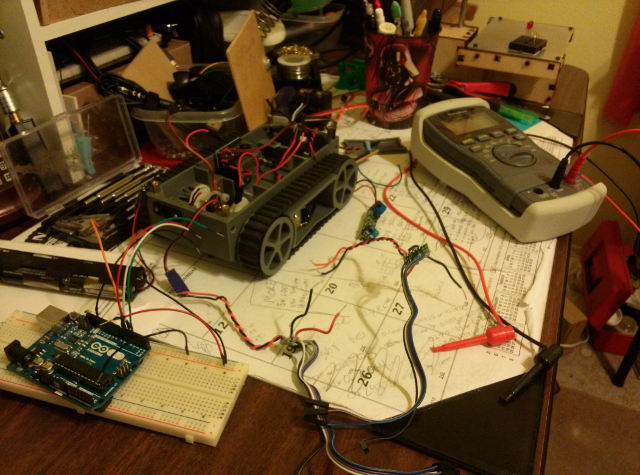

Project R is semi-modular in that the main components (e.g. chassis and arms) each have their own mini brain, an ATmega. A main brain then commands those parts over I2C. Thus the main brain can be swapped out for any suitable controller that can speak I2C, like the BeagleBone Black, Raspberry Pi, pcDuino, or even a Propeller or Arduino if one doesn't need USB. With a sufficiently powerful main brain, R(rrr) may even be able to run ROS so one could do research like the big guys (no promises though).
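To make the main-brain-over-I2C idea a bit more concrete, here is a rough Python sketch of how a main brain might send commands to the chassis and arm modules. The addresses, command codes, and payload layout are all made up for illustration and are not Project R's actual protocol. `FakeBus` just records writes so the sketch runs without hardware; on a real board, a bus object with a matching `write_i2c_block_data(addr, register, data)` method (such as `smbus2.SMBus`) could presumably be dropped in instead.

```python
from dataclasses import dataclass, field

# Hypothetical 7-bit I2C addresses for the sub-controllers (not from the project).
CHASSIS_ADDR = 0x10
LEFT_ARM_ADDR = 0x11

# Hypothetical command codes the ATmega firmware would need to understand.
CMD_DRIVE = 0x01
CMD_SET_JOINT = 0x02


@dataclass
class FakeBus:
    """Stand-in for an I2C bus: records writes instead of touching hardware."""
    log: list = field(default_factory=list)

    def write_i2c_block_data(self, addr, register, data):
        self.log.append((addr, register, list(data)))


def drive(bus, left, right):
    """Send signed wheel speeds (-127..127) to the chassis module."""
    for speed in (left, right):
        if not -127 <= speed <= 127:
            raise ValueError("speed out of range")
    # Encode each signed speed as a two's-complement byte.
    bus.write_i2c_block_data(CHASSIS_ADDR, CMD_DRIVE, [left & 0xFF, right & 0xFF])


def set_joint(bus, arm_addr, joint, angle_deg):
    """Ask one arm module to move a joint (0..4) to an angle in degrees (0..180)."""
    if not 0 <= joint < 5:
        raise ValueError("joint index out of range")
    if not 0 <= angle_deg <= 180:
        raise ValueError("angle out of range")
    bus.write_i2c_block_data(arm_addr, CMD_SET_JOINT, [joint, angle_deg])


bus = FakeBus()
drive(bus, 100, -100)                  # spin in place
set_joint(bus, LEFT_ARM_ADDR, 2, 90)   # centre the third joint of the left arm
```

Since the functions only depend on that one bus method, the same command code could run unchanged whichever controller ends up being the main brain, which is the point of the modular design.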

Using a BeagleBone Black for a main brain, Ruprecht has a wifi dongle and a webcam on its USB. The wifi could allow for on-robot software development as well as having a control interface accessible via LAN. Eventually it could let Project R harness the power of the cloud in its activities. (I'm truly sorry, but the "needs more buzzwords" part of my brain is screaming to be included.) The webcam is intended for using OpenCV or the like to work with object recognition. It could also, obviously, be used for telepresence operations like checking on your house or observing pets while you're out.

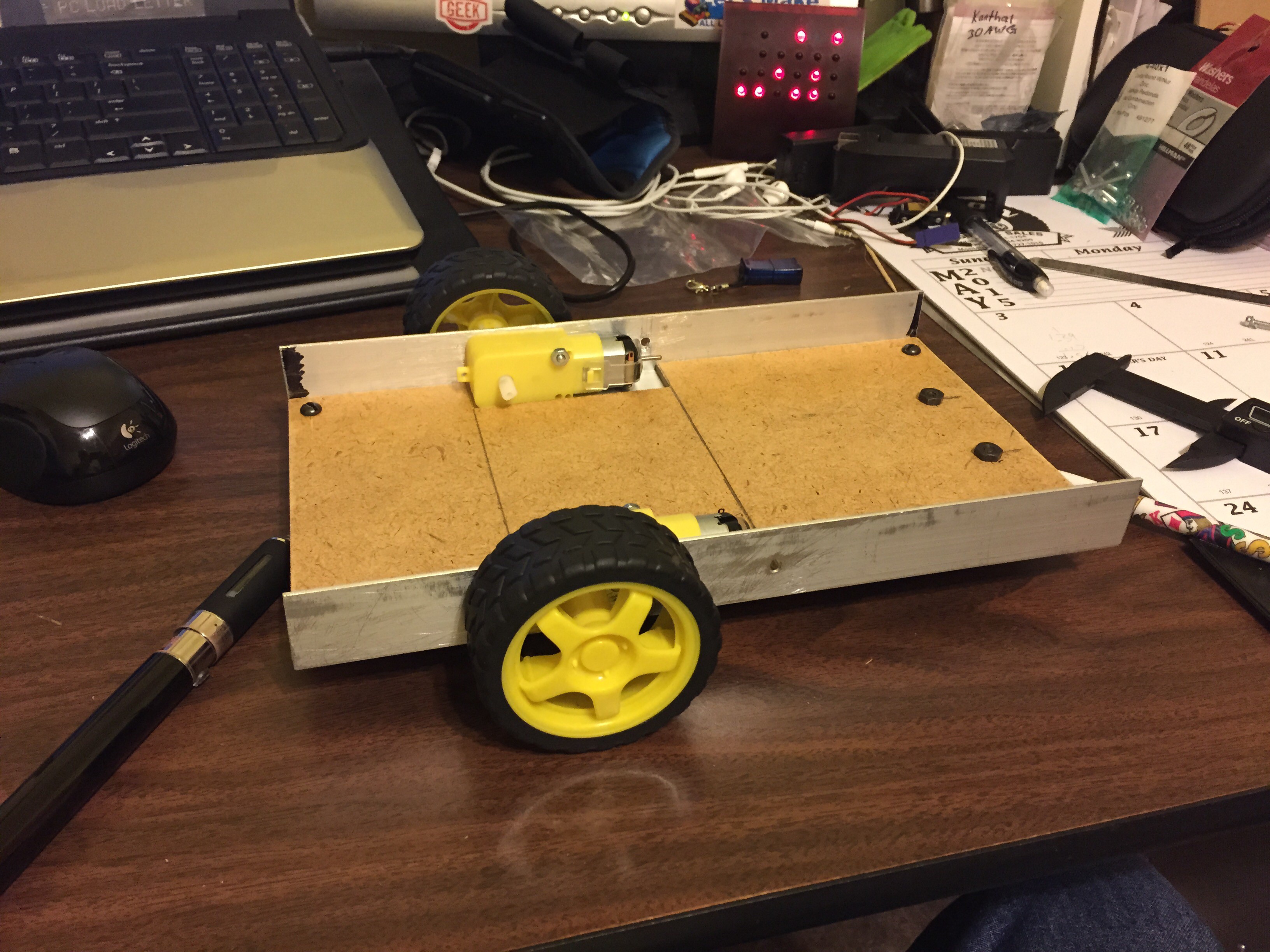

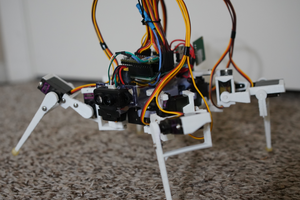

What good is a research robot if it can't grab things, right? Ruprecht will begin with a pair of simple 5 DOF arms made of hobby micro servos. Hopefully some more powerful arms are in his future.

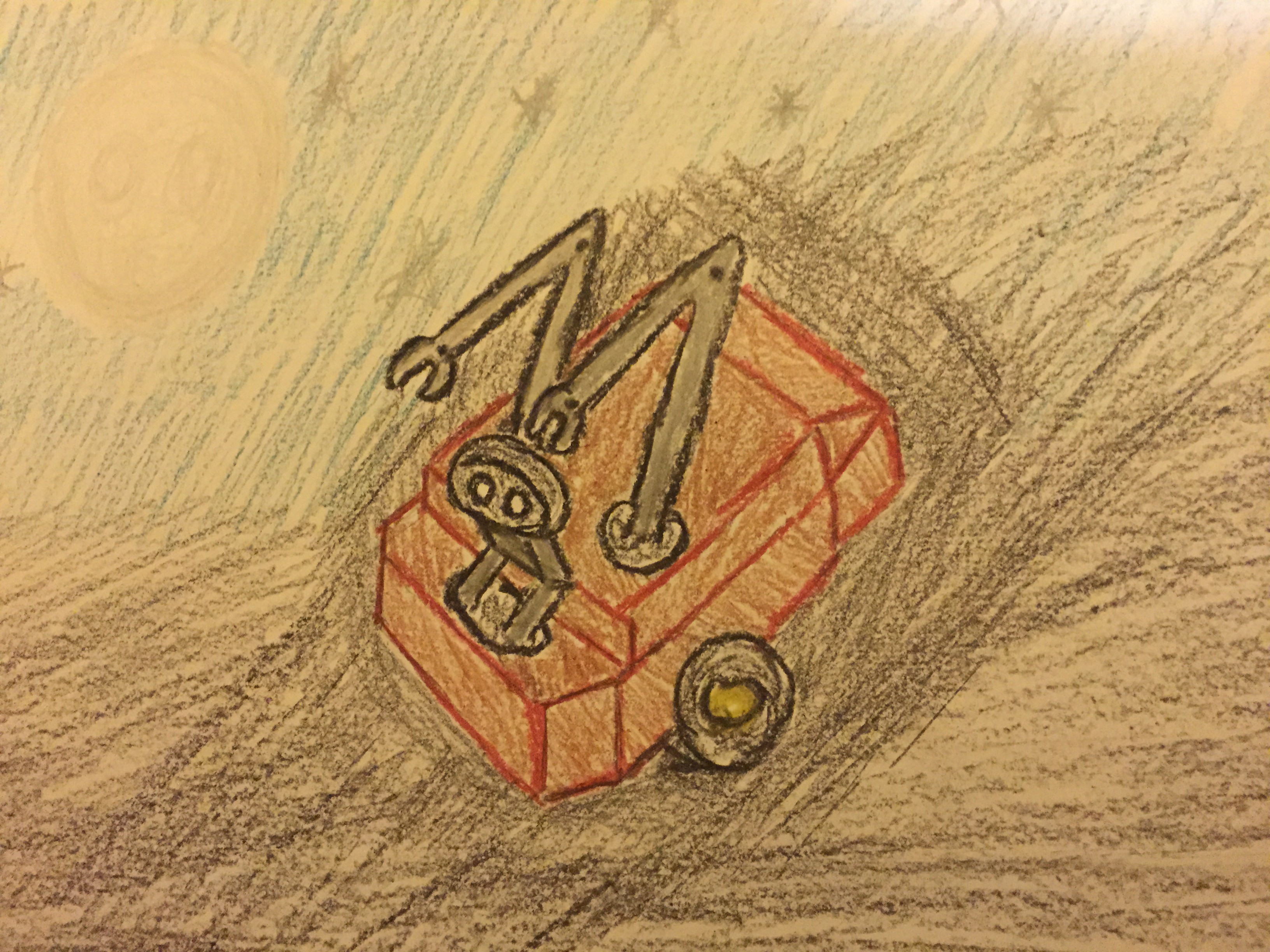

Here's a rough diagram showing some of what's already there, and what I intend to put in at some point.

Ooh, I made a video!

Rudolph

Mister Malware

Jacob David C Cunningham

Dan Royer

Steve

I love this little guy!